☁️ AWS Machine Learning Blog

Fine-tune Nova models on Bedrock

💡 Master fine-tuning Nova on Bedrock for domain tasks with a hands-on guide

⚡ 30-Second TL;DR

What Changed

Prepare high-quality training data for domain-specific improvements

Why It Matters

Enables developers to customize powerful Nova models for specific tasks, improving performance over base models and reducing inference costs in production.

What To Do Next

Prepare your dataset and invoke Bedrock's fine-tuning API for Amazon Nova models.
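
As a rough illustration of that step, the sketch below assembles a request for the boto3 `create_model_customization_job` call on the `bedrock` client. The job name, role ARN, S3 URIs, and base model identifier are placeholders, and the hyperparameter names (`epochCount`, `learningRate`) are assumptions that should be checked against the Bedrock documentation for the chosen Nova model.

```python
from typing import Optional


def build_fine_tuning_request(
    job_name: str,
    custom_model_name: str,
    role_arn: str,
    base_model_id: str,
    training_s3_uri: str,
    output_s3_uri: str,
    hyperparameters: Optional[dict] = None,
) -> dict:
    """Assemble keyword arguments for bedrock.create_model_customization_job."""
    request = {
        "jobName": job_name,
        "customModelName": custom_model_name,
        "roleArn": role_arn,
        "baseModelIdentifier": base_model_id,
        "customizationType": "FINE_TUNING",
        "trainingDataConfig": {"s3Uri": training_s3_uri},
        "outputDataConfig": {"s3Uri": output_s3_uri},
    }
    if hyperparameters:
        # The API takes hyperparameter values as strings.
        request["hyperParameters"] = {k: str(v) for k, v in hyperparameters.items()}
    return request


def submit_fine_tuning_job(request: dict, region: str = "us-east-1") -> str:
    """Submit the job and return its ARN (requires boto3 and AWS credentials)."""
    import boto3  # imported lazily so the builder above runs without the SDK

    bedrock = boto3.client("bedrock", region_name=region)
    response = bedrock.create_model_customization_job(**request)
    return response["jobArn"]


# Placeholder values only: none of these resources come from the article.
request = build_fine_tuning_request(
    job_name="nova-domain-ft-001",
    custom_model_name="nova-domain-custom",
    role_arn="arn:aws:iam::123456789012:role/BedrockFineTuneRole",
    base_model_id="amazon.nova-lite-v1:0:300k",  # assumed identifier
    training_s3_uri="s3://example-bucket/train/train.jsonl",
    output_s3_uri="s3://example-bucket/output/",
    hyperparameters={"epochCount": "2", "learningRate": "0.00001"},  # assumed names
)
```

Keeping the request builder separate from the submit call means the payload can be inspected or unit-tested before any AWS resources are touched.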

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

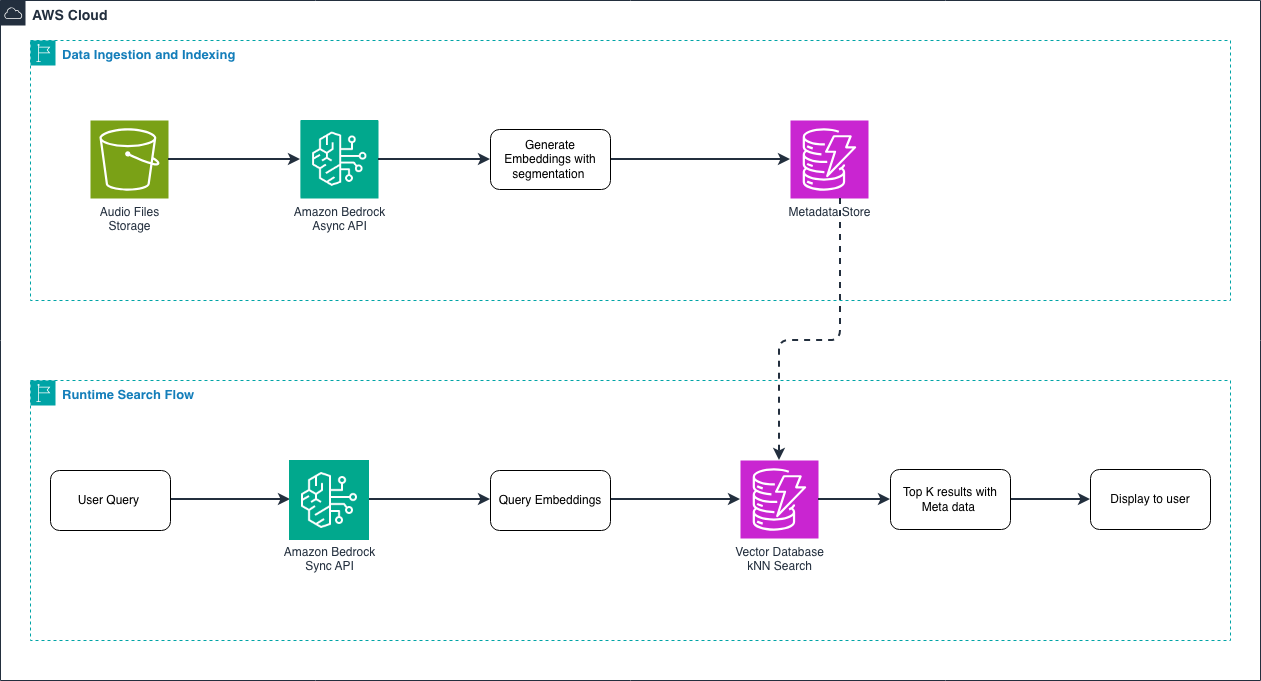

- Amazon Nova models utilize a multimodal architecture designed for high-throughput, low-latency inference, specifically optimized for the Bedrock managed fine-tuning pipeline.

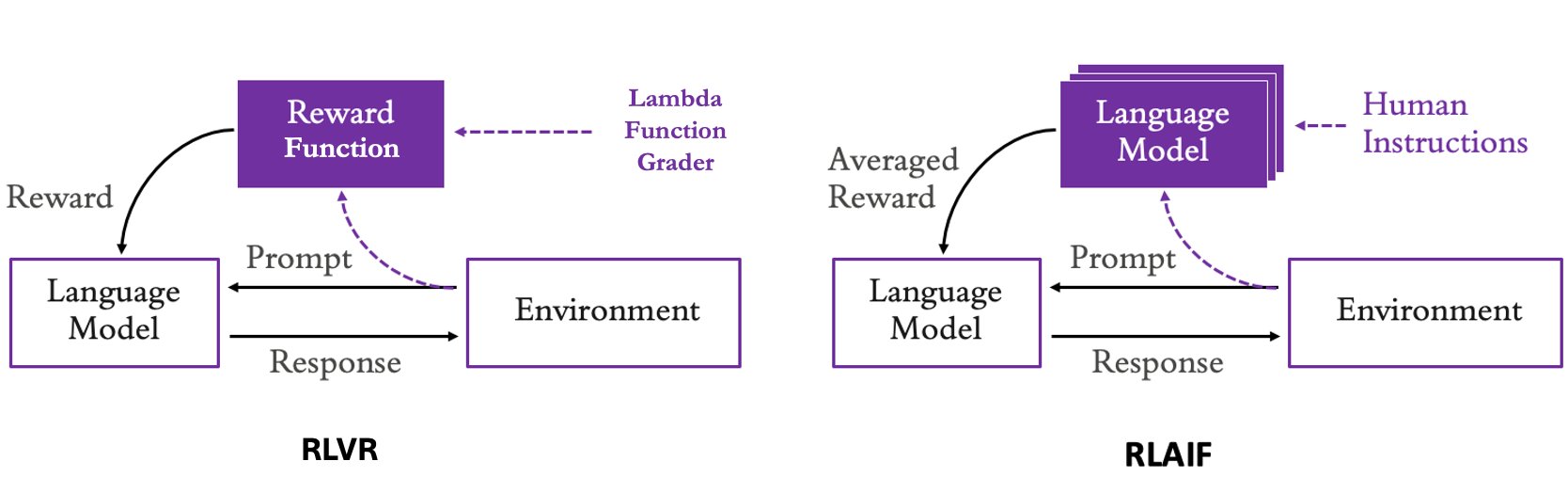

- The fine-tuning process for Nova models leverages Parameter-Efficient Fine-Tuning (PEFT) techniques, such as LoRA, to minimize compute costs and training time compared to full-parameter fine-tuning.

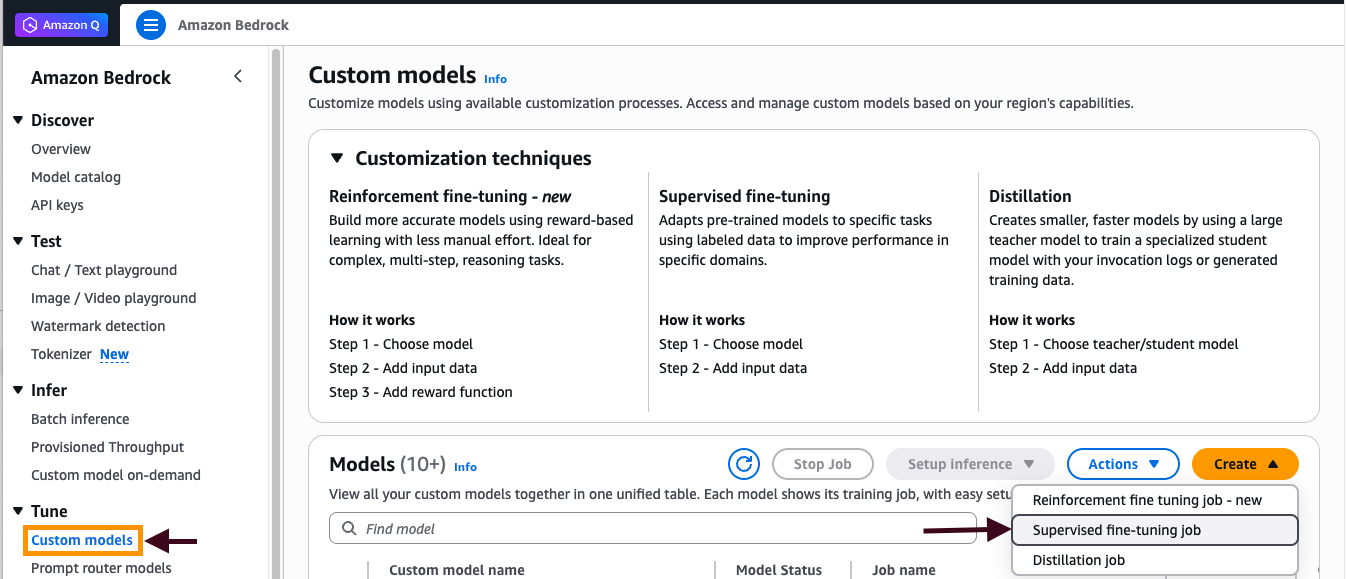

- Bedrock provides automated data validation and formatting checks within the console to ensure training datasets meet the specific schema requirements for Nova's instruction-following capabilities.
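
A local pre-check can catch formatting problems before a dataset is uploaded. The sketch below is not Bedrock's validator; it assumes each JSONL line is a conversation record with a `schemaVersion` of `bedrock-conversation-2024` and a `messages` list of alternating `user` and `assistant` turns, which should be confirmed against the current Bedrock data-format documentation.

```python
import json

# Assumed schema marker; confirm against the Bedrock documentation.
EXPECTED_SCHEMA_VERSION = "bedrock-conversation-2024"


def validate_record(line: str) -> list:
    """Return a list of problems found in one JSONL line (empty if none)."""
    try:
        record = json.loads(line)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(record, dict):
        return ["record is not a JSON object"]

    problems = []
    if record.get("schemaVersion") != EXPECTED_SCHEMA_VERSION:
        problems.append("unexpected or missing schemaVersion")

    messages = record.get("messages")
    if not isinstance(messages, list) or not messages:
        return problems + ["messages must be a non-empty list"]

    for i, message in enumerate(messages):
        if not isinstance(message, dict):
            problems.append(f"message {i}: not an object")
            continue
        # Turns are expected to alternate, starting with the user.
        expected_role = "user" if i % 2 == 0 else "assistant"
        if message.get("role") != expected_role:
            problems.append(f"message {i}: expected role '{expected_role}'")
        content = message.get("content")
        if not isinstance(content, list) or not content:
            problems.append(f"message {i}: content must be a non-empty list")

    # With alternating turns, an odd count means the last turn is the user's.
    if len(messages) % 2 != 0:
        problems.append("conversation must end with an assistant turn")
    return problems


example = json.dumps({
    "schemaVersion": EXPECTED_SCHEMA_VERSION,
    "system": [{"text": "You are a claims assistant."}],
    "messages": [
        {"role": "user", "content": [{"text": "Is claim 17 approved?"}]},
        {"role": "assistant", "content": [{"text": "Yes, it was approved."}]},
    ],
})
print(validate_record(example))
```

Running the function over every line of a training file and collecting the non-empty results gives a quick report of which records need attention.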

📊 Competitor Analysis

| Feature | Amazon Nova (Bedrock) | Google Vertex AI (Gemini) | Azure OpenAI (GPT-4o) |
|---|---|---|---|
| Fine-tuning Method | Managed PEFT/LoRA | Managed PEFT/LoRA | Managed Fine-tuning |
| Pricing Model | Per-token training + Provisioned Throughput | Per-token training + Node-hour usage | Per-token training + Provisioned Throughput |
| Benchmark Focus | Latency/Cost Efficiency | Multimodal Reasoning | General Purpose Reasoning |

🛠️ Technical Deep Dive

- Architecture: Nova models are built on a transformer-based architecture optimized for multimodal inputs (text, image, video).

- Training Infrastructure: Fine-tuning jobs are executed on isolated, ephemeral compute clusters managed by Bedrock, ensuring data privacy and security.

- Hyperparameter Support: Users can tune learning rate, batch size, and epoch count; Bedrock provides default configurations based on dataset size.

- Evaluation: Integration with Bedrock Model Evaluation allows for side-by-side comparison of base vs. fine-tuned models using automated metrics (e.g., ROUGE, BLEU) and human-in-the-loop workflows.
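
To make the automated-metric comparison concrete, here is a simplified ROUGE-1 style score (unigram-overlap F1) applied to a base-model output and a fine-tuned output against the same reference. It is an illustrative sketch, not the implementation used by Bedrock Model Evaluation, and the example strings are invented.

```python
from collections import Counter


def rouge1_f1(reference: str, candidate: str) -> float:
    """Unigram-overlap F1 between a reference and a candidate text."""
    ref_counts = Counter(reference.lower().split())
    cand_counts = Counter(candidate.lower().split())
    # Counter intersection keeps the minimum count of each shared token.
    overlap = sum((ref_counts & cand_counts).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand_counts.values())
    recall = overlap / sum(ref_counts.values())
    return 2 * precision * recall / (precision + recall)


# Invented example strings for illustration only.
reference = "the claim was approved on monday"
base_output = "claim status unknown"
tuned_output = "the claim was approved monday"

base_score = rouge1_f1(reference, base_output)
tuned_score = rouge1_f1(reference, tuned_output)
print(f"base: {base_score:.3f}  fine-tuned: {tuned_score:.3f}")
```

Overlap metrics like this reward surface similarity only, which is one reason to pair them with human review.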

🔮 Future Implications

AI analysis grounded in cited sources

Enterprise adoption will shift from general-purpose LLMs to specialized, domain-specific agents built on custom Nova models.

The reduced latency and cost of fine-tuned Nova models make real-time, high-accuracy agentic workflows economically viable for production environments.

AWS will expand Bedrock's fine-tuning capabilities to include automated synthetic data generation for Nova models.

As data quality remains the primary bottleneck for fine-tuning, AWS is incentivized to integrate native tools that reduce the manual burden of dataset curation.

⏳ Timeline

2024-12

AWS announces the launch of the Amazon Nova model family.

2025-03

Amazon Bedrock introduces initial support for fine-tuning select Nova model variants.

2026-02

AWS expands fine-tuning capabilities for Nova to include broader multimodal support.


Original source: AWS Machine Learning Blog ↗