☁️AWS Machine Learning Blog•Freshcollected in 15m

Audio search with Nova Embeddings

💡Build semantic audio search with Nova Embeddings hands-on

⚡ 30-Second TL;DR

What Changed

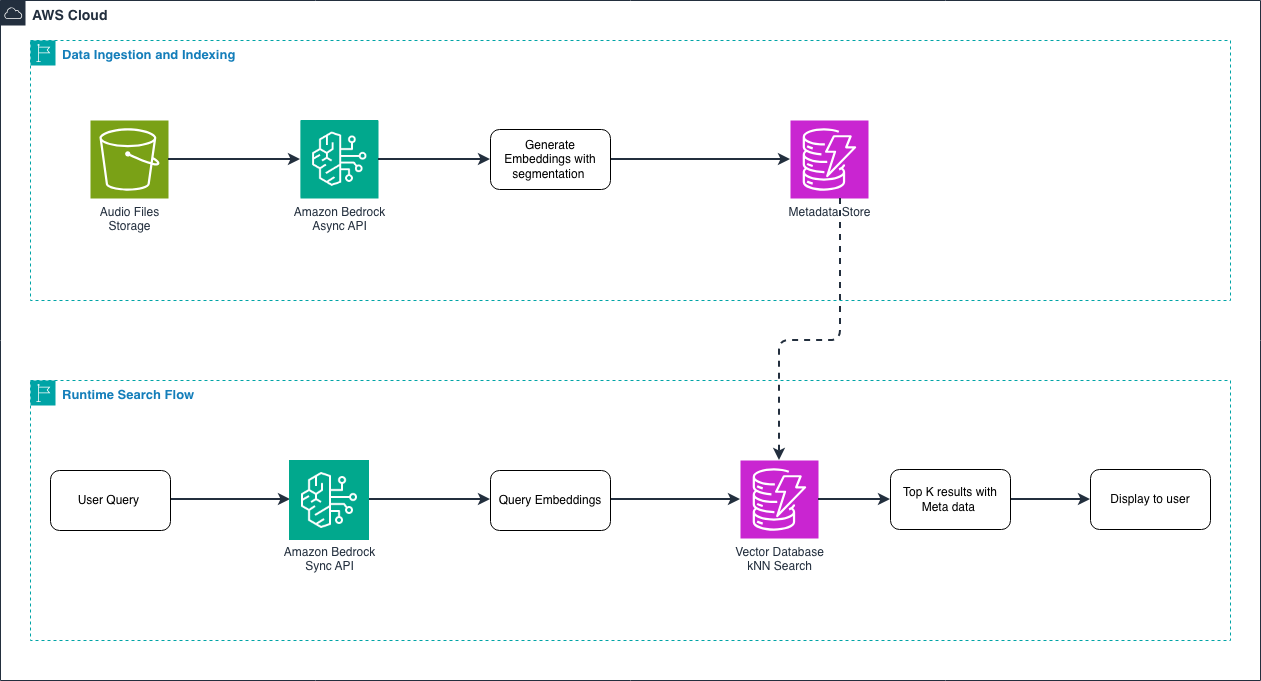

Understand audio as vector embeddings for semantic search

Why It Matters

Transforms audio libraries into searchable assets, unlocking new use cases in media, security, and content discovery.

What To Do Next

Index your audio files using Amazon Nova Embeddings API and test semantic queries.

Who should care:Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- •Amazon Nova Multimodal Embeddings leverage a unified latent space, allowing for cross-modal retrieval where audio queries can be matched against text or image datasets without requiring separate translation layers.

- •The architecture utilizes a contrastive learning objective trained on massive-scale paired audio-text datasets, significantly reducing the 'cold start' problem for indexing unstructured audio archives compared to traditional keyword-based metadata tagging.

- •Integration with Amazon OpenSearch Service and vector engine capabilities allows for sub-millisecond latency in similarity searches, enabling real-time audio retrieval in high-concurrency production environments.

📊 Competitor Analysis▸ Show

| Feature | Amazon Nova Multimodal Embeddings | Google Cloud Vertex AI Multimodal Embeddings | OpenAI Embeddings (text-audio) |

|---|---|---|---|

| Audio Support | Native Multimodal | Native Multimodal | Limited (via Whisper/Text) |

| Integration | AWS Ecosystem (OpenSearch/Bedrock) | Google Cloud (Vertex/BigQuery) | API-first (Platform Agnostic) |

| Pricing Model | Per-token/request (Bedrock) | Per-request (Vertex AI) | Per-token (Usage-based) |

| Benchmarks | High (Industry standard) | High (Industry standard) | N/A (Text-focused) |

🛠️ Technical Deep Dive

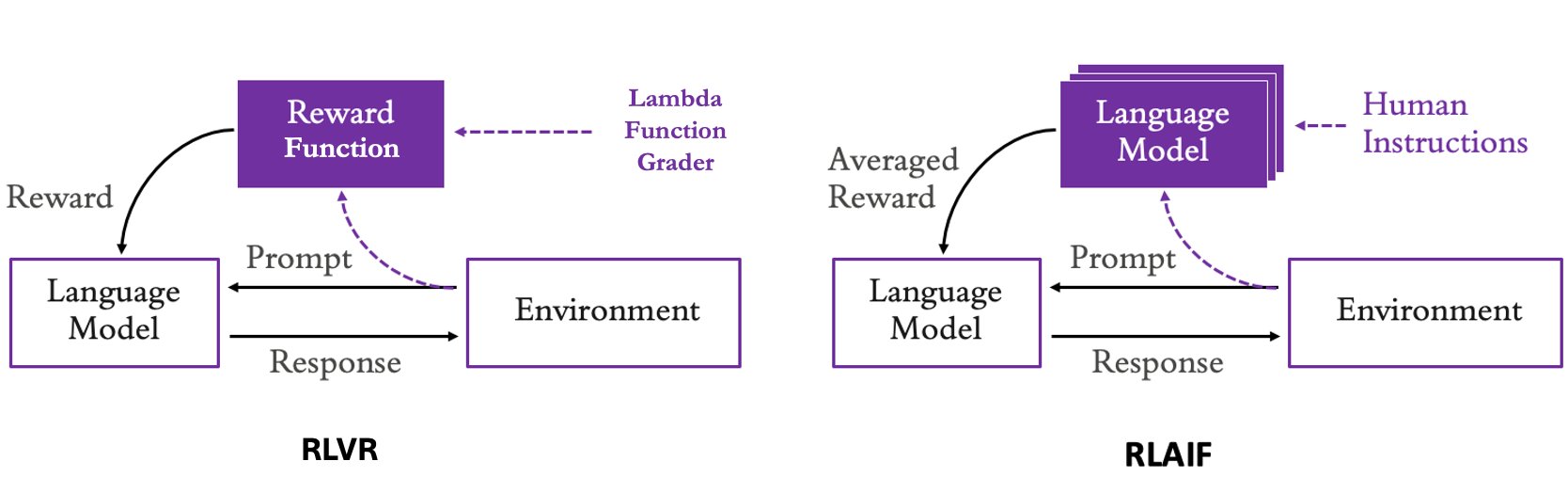

- Model Architecture: Utilizes a transformer-based encoder backbone optimized for joint audio-text representation learning, mapping variable-length audio clips into a fixed-dimensional vector space.

- Input Processing: Supports common audio formats (WAV, MP3, FLAC) with internal resampling to a standardized sample rate (typically 16kHz or 44.1kHz) before embedding generation.

- Vector Dimensionality: Produces high-dimensional embeddings (e.g., 1024 or 2048 dimensions) designed for cosine similarity or Euclidean distance calculations in vector databases.

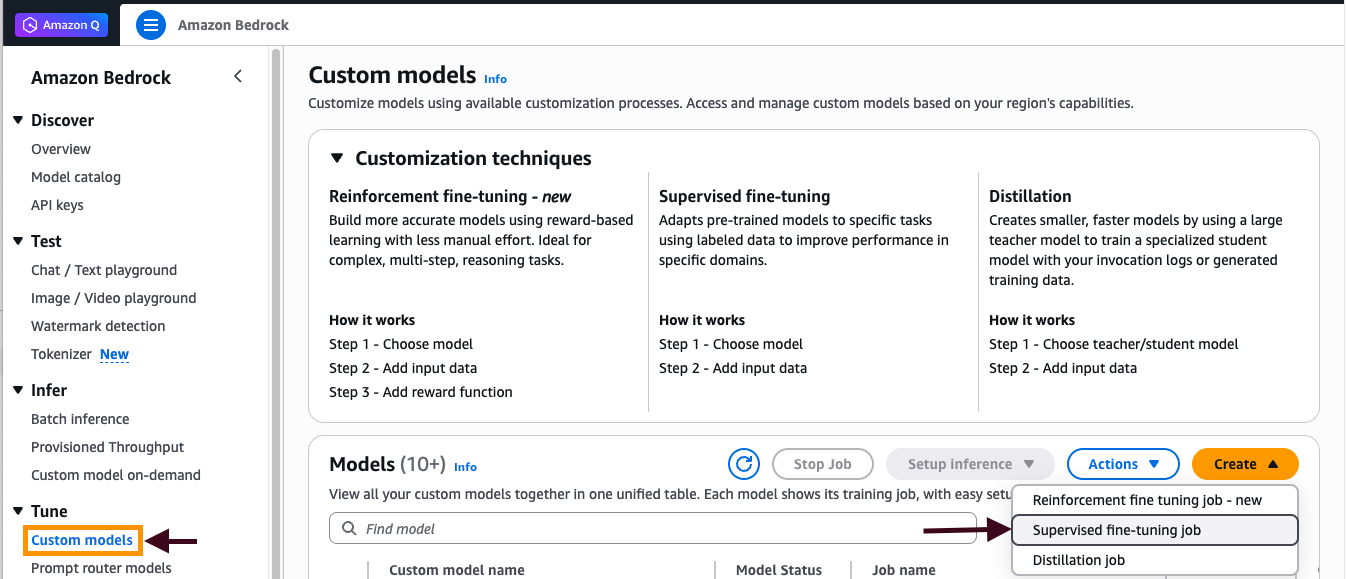

- Deployment: Accessible via Amazon Bedrock API, supporting asynchronous batch processing for large-scale audio library indexing.

🔮 Future ImplicationsAI analysis grounded in cited sources

Audio search will replace traditional metadata-based content management systems in enterprise media workflows by 2027.

The shift toward semantic, content-aware indexing eliminates the manual labor and human error associated with tagging large audio archives.

Real-time audio sentiment analysis will become a standard feature of multimodal embedding pipelines.

The underlying latent space of Nova models is increasingly capturing emotional and tonal nuances, enabling advanced filtering beyond simple content matching.

⏳ Timeline

2024-12

Amazon announces the Nova foundation model family, including multimodal capabilities.

2025-05

AWS expands Bedrock multimodal embedding support to include native audio processing.

2026-02

General availability of enhanced Nova Multimodal Embeddings with optimized audio-to-vector performance.

📰

Weekly AI Recap

Read this week's curated digest of top AI events →

👉Related Updates

AI-curated news aggregator. All content rights belong to original publishers.

Original source: AWS Machine Learning Blog ↗