🇨🇳 cnBeta (Full RSS) • Stale • collected in 18h

DeepSeek V4, Hunyuan Launch Rumored for April

💡DeepSeek V4 rumor: next open model to challenge GPT-4o?

⚡ 30-Second TL;DR

What Changed

DeepSeek V4 is rumored for an April 2026 release, according to a leak from White Whale Lab (BelugaLab).

Why It Matters

Intensifies open-weight LLM competition, potentially offering cost-effective alternatives to proprietary models.

What To Do Next

Try Healer Alpha and Hunter Alpha on OpenRouter, which may offer an early look at DeepSeek V4.
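For readers who want to try this, the following is a minimal sketch that uses only the Python standard library and OpenRouter's OpenAI-compatible chat completions endpoint. The model slugs, the `OPENROUTER_API_KEY` environment variable name, and the helper names `build_request` and `ask` are assumptions for illustration, not details from the cited reports; check OpenRouter's model list for the actual identifiers.

```python
import json
import os
import urllib.request

# OpenRouter's OpenAI-compatible chat completions endpoint.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

# Assumed model slugs (hypothetical); verify them against OpenRouter's model list.
CANDIDATE_MODELS = ["openrouter/healer-alpha", "openrouter/hunter-alpha"]


def build_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build, but do not send, a chat completion request for one model."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(model: str, prompt: str, api_key: str) -> str:
    """Send the request and return the text of the first choice."""
    request = build_request(model, prompt, api_key)
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # The environment variable name is a convention chosen for this sketch.
    key = os.environ.get("OPENROUTER_API_KEY")
    if key:
        for model in CANDIDATE_MODELS:
            print(model, "->", ask(model, "Explain Mixture of Experts in two sentences.", key))
    else:
        print("Set OPENROUTER_API_KEY to send requests.")
```

Sending the same prompt to both models makes side-by-side comparison easier. Whether either model is related to DeepSeek V4 remains unconfirmed.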

Who should care: Developers & AI Engineers

🧠 Deep Insight

Web-grounded analysis with 7 cited sources.

🔑 Enhanced Key Takeaways

- DeepSeek V4, developed by the team led by Liang Wenfeng, reportedly uses a trillion-parameter Mixture of Experts (MoE) architecture that activates about 37 billion parameters per token for efficient inference[1][2][6].

- DeepSeek V4 reportedly incorporates the Engram memory architecture and supports a 1 million token context window, aimed at better long-term memory and more complex interactions[3][5].

- Tencent's new Hunyuan model reportedly has approximately 30 billion parameters and emphasizes long-context processing and Agent task evaluation[1][2].

🛠️ Technical Deep Dive

- DeepSeek V4 is reported to use a Mixture of Experts (MoE) architecture with 1 trillion total parameters, of which approximately 37B are activated per token to keep inference costs manageable[6].

- It is said to integrate multiple modalities natively during pre-training, supporting text generation, image understanding and generation, video generation, and cross-modal reasoning[6].

- Reported innovations include the Engram memory architecture for enhanced information retention, a 1 million token context window, and optimizations for domestic Chinese chips from vendors such as Huawei and Cambricon[3][4][5].

- DeepSeek V4 reportedly builds on a January paper detailing 'conditional memory' mechanisms, and involves collaborations such as one with Baidu on AI search capabilities[3].
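To put the reported MoE figures in perspective, here is a back-of-the-envelope sketch in Python. It uses the rumored 1 trillion total and 37 billion active parameters from the bullets above. The rule of thumb of roughly 2 FLOPs per active parameter per token is a common approximation for a forward pass, not a figure from the cited sources, and the function names are illustrative.

```python
# Back-of-the-envelope arithmetic for the rumored MoE configuration.
# The parameter counts come from the cited reports and are unconfirmed.

TOTAL_PARAMS = 1_000_000_000_000   # ~1 trillion total parameters (rumored)
ACTIVE_PARAMS = 37_000_000_000     # ~37 billion active per token (rumored)


def active_fraction(total: int, active: int) -> float:
    """Share of the model's parameters used for a single token."""
    return active / total


def forward_flops_per_token(active: int) -> int:
    """Rough forward-pass cost, assuming ~2 FLOPs per active parameter.

    This is a general rule of thumb (one multiply and one add per weight),
    not a number reported for DeepSeek V4.
    """
    return 2 * active


if __name__ == "__main__":
    frac = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)
    flops = forward_flops_per_token(ACTIVE_PARAMS)
    print(f"Active share per token: {frac:.1%}")
    print(f"Approx. forward FLOPs per token: {flops:.2e}")
    print(f"Per-token compute vs. a dense model of the same size: ~{1 / frac:.0f}x lower")
```

Under these assumptions, about 3.7% of the weights would be active for any given token, which is why per-token inference cost can stay close to that of a much smaller dense model. The full set of weights still has to be stored and served.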

🔮 Future Implications

AI analysis grounded in cited sources

DeepSeek V4 is projected to outperform GPT-5.2 and Claude Opus 4.5 on key benchmarks, though this remains an unverified forecast.

⏳ Timeline

2024-12

DeepSeek V3 launched, posting strong benchmark results for an open-source model

2025-01

DeepSeek published paper on conditional memory mechanisms

2026-01

DeepSeek's last major launch before V4

2026-02

Multiple rumors pointed to a mid-February DeepSeek V4 release, including a reported appearance of V4 Lite

2026-03-02

Reports said a multimodal DeepSeek V4 release was imminent that week

2026-03-11

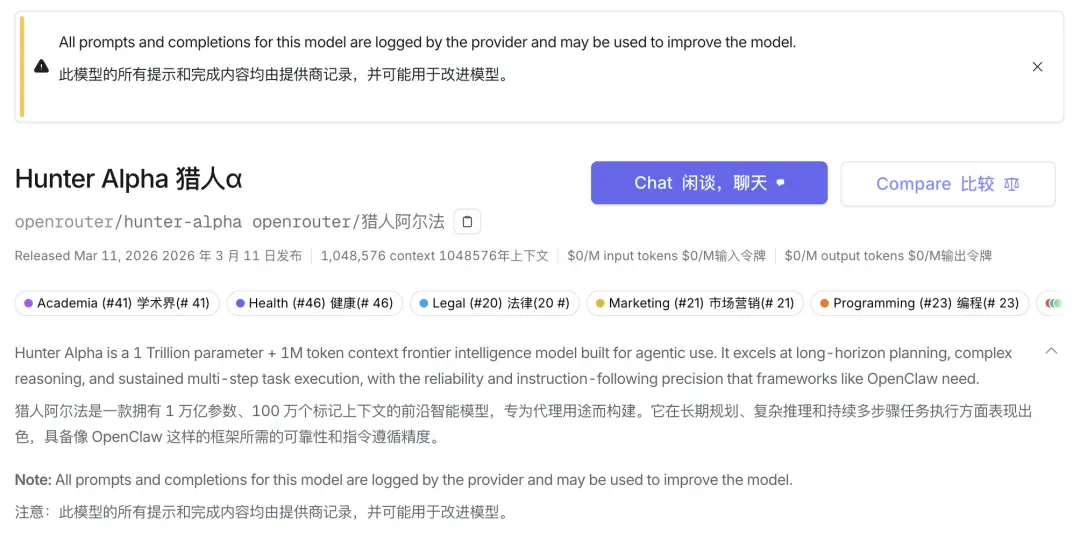

OpenRouter added Healer Alpha and Hunter Alpha models

2026-03-12

BelugaLab leaked April launch details for DeepSeek V4 and Hunyuan

📎 Sources (7)

Factual claims are grounded in the sources below. Forward-looking analysis is AI-generated interpretation.

- phemex.com — DeepSeek V4 and Tencent's Hunyuan Model Set for April Launch

- kucoin.com — DeepSeek V4 and Tencent's New Hunyuan Model Expected to Launch in April

- youtube.com — Watch

- technode.com — DeepSeek Plans V4 Multimodal Model Release This Week, Sources Say

- vertu.com — DeepSeek V4 Complete Guide 2026

- nxcode.io — DeepSeek V4 Release Specs Benchmarks 2026

- overchat.ai — DeepSeek 4


AI-curated news aggregator. All content rights belong to original publishers.

Original source: cnBeta (Full RSS) ↗