🗾ITmedia AI+ (Japan)

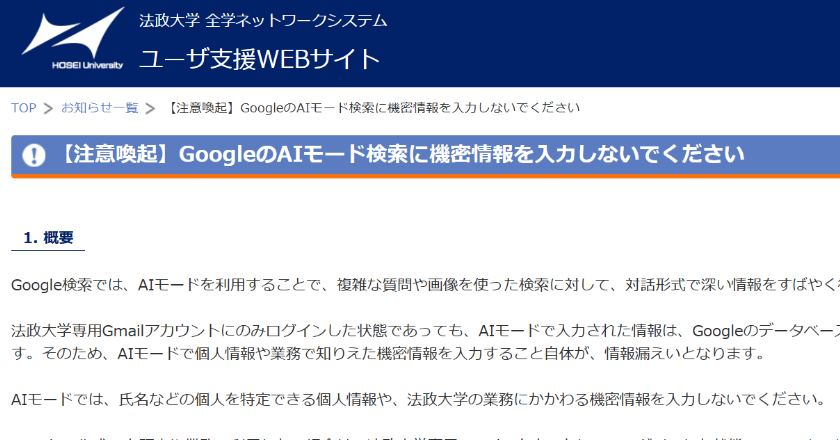

Universities Warn: Avoid Personal Data in Google AI Search

💡Google AI Search may store inputs for training: a privacy alert for developers!

⚡ 30-Second TL;DR

What Changed

Rikkyo University is issuing campus-wide alerts about the privacy risks of Google Search's AI Mode.

Why It Matters

Highlights privacy risks in AI search tools, urging caution among users and developers. May influence enterprise adoption of similar features due to data retention concerns.

What To Do Next

Review the data retention policy for Google AI Overviews before testing sensitive queries.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- Google's Terms of Service for consumer-facing AI features explicitly state that user feedback and interactions may be reviewed by human annotators to improve model performance, which creates a privacy risk for any sensitive data that users enter.

- Japanese universities are increasingly adopting 'AI Usage Guidelines' that sort data into sensitivity tiers and specifically prohibit entering 'Confidential' or 'Personal' data into public-facing LLMs such as Google's AI search or ChatGPT.

- The risk is compounded by data persistence: even if a user deletes their search history, the information may already have been ingested into training datasets, and it is technically difficult to make a model 'unlearn' specific personal identifiers.
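The tiered approach in these university guidelines can be sketched as a simple gate that runs before any text is sent to a public-facing LLM. This is a hypothetical illustration, not any institution's actual tooling: the tier names `PUBLIC` and `INTERNAL`, and the helpers `allowed_for_public_llm` and `check_submission`, are assumptions; only the prohibition on 'Personal' and 'Confidential' data comes from the text above.

```python
from enum import Enum


class Sensitivity(Enum):
    """Hypothetical data-sensitivity tiers; real guidelines define their own."""
    PUBLIC = "public"
    INTERNAL = "internal"  # assumption: not named in the source
    PERSONAL = "personal"
    CONFIDENTIAL = "confidential"


# Tiers the guidelines prohibit from public-facing LLMs (per the article).
PROHIBITED_FOR_PUBLIC_LLM = frozenset({Sensitivity.PERSONAL, Sensitivity.CONFIDENTIAL})


def allowed_for_public_llm(tier: Sensitivity) -> bool:
    """Return True if data of this tier may be entered into a public-facing LLM."""
    return tier not in PROHIBITED_FOR_PUBLIC_LLM


def check_submission(text: str, tier: Sensitivity) -> str:
    """Return the text unchanged if its tier is allowed; otherwise raise PermissionError."""
    if not allowed_for_public_llm(tier):
        raise PermissionError(f"{tier.name} data must not be entered into public-facing LLMs")
    return text


if __name__ == "__main__":
    print(check_submission("Summarize the history of AI search", Sensitivity.PUBLIC))
    try:
        check_submission("Student record with grades", Sensitivity.PERSONAL)
    except PermissionError as err:
        print("Blocked:", err)
```

An explicit set of prohibited tiers is used instead of a numeric ordering because the guidelines, as described, name specific categories rather than a single sensitivity threshold.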

📊 Competitor Analysis

| Feature | Google AI Search | OpenAI ChatGPT (Free) | Microsoft Copilot |
|---|---|---|---|
| Data Training Opt-out | Available via settings | Available via settings | Available via settings |
| Enterprise Privacy | No (Consumer mode) | No (Consumer mode) | Yes (Enterprise mode) |
| Human Review | Yes | Yes | Yes |

🛠️ Technical Deep Dive

- Google Search's AI Mode uses a Retrieval-Augmented Generation (RAG) architecture that dynamically pulls from the web index and user interaction logs.

- Data ingestion pipelines for model fine-tuning often apply automated filtering, but these filters are optimized for safety (e.g., hate speech) rather than for redacting personally identifiable information (PII).

- AI Mode operates on a feedback loop: user prompts are stored in Google's 'My Activity' logs and then processed by automated pipelines for reinforcement learning from human feedback (RLHF).
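Because these filters are described as targeting safety rather than PII, a cautious developer might redact obvious identifiers on the client before a query ever leaves the machine. The following is a minimal sketch assuming regex-based detection; the `redact_pii` helper and every pattern in `PII_PATTERNS` (including the Japanese-style phone format and the 8-digit student ID) are hypothetical. Regexes will miss names, addresses, and free-text identifiers, so this is at best a first line of defense, not a guarantee of privacy.

```python
import re

# Hypothetical, deliberately simple patterns; real PII detection needs far more.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # Assumption: Japanese-style numbers such as 03-1234-5678 or 090-1234-5678.
    "PHONE": re.compile(r"\b0\d{1,4}-\d{1,4}-\d{4}\b"),
    # Assumption: a hypothetical 8-digit student ID format.
    "STUDENT_ID": re.compile(r"\b\d{8}\b"),
}


def redact_pii(text: str) -> str:
    """Replace each match of a PII pattern with a [LABEL] placeholder, in dict order."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    query = "Contact taro@example.ac.jp or 090-1234-5678 about student 20231234"
    print(redact_pii(query))
    # prints "Contact [EMAIL] or [PHONE] about student [STUDENT_ID]"
```

Email addresses are redacted first so that digits inside an address cannot be misread as a phone number or student ID.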

🔮 Future Implications

AI analysis grounded in cited sources

Universities will mandate local, air-gapped LLM deployments for research.

The inability to guarantee data privacy in public cloud-based AI models will force institutions to host open-source models on internal servers to protect intellectual property.

Google will introduce a 'Privacy-First' toggle for AI search.

Increasing regulatory pressure and institutional bans will force Google to offer a mode that explicitly excludes user inputs from training datasets to retain enterprise and academic users.

⏳ Timeline

2023-02

Google announces Bard (now Gemini) and begins integrating generative AI into search experiments.

2024-05

Google officially rolls out AI Overviews in Search to the general public in the United States.

2025-01

Japanese Ministry of Education, Culture, Sports, Science and Technology (MEXT) issues updated guidelines on generative AI use in schools.

2026-04

Rikkyo University and other Japanese institutions issue formal warnings regarding PII input into AI-enhanced search tools.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: ITmedia AI+ (Japan)