🗾 ITmedia AI+ (Japan)

Anthropic, White House Eye Reconciliation on AI Risks

💡 Talks between Anthropic and the White House signal shifting AI security policy, which matters for anyone planning deployments.

⚡ 30-Second TL;DR

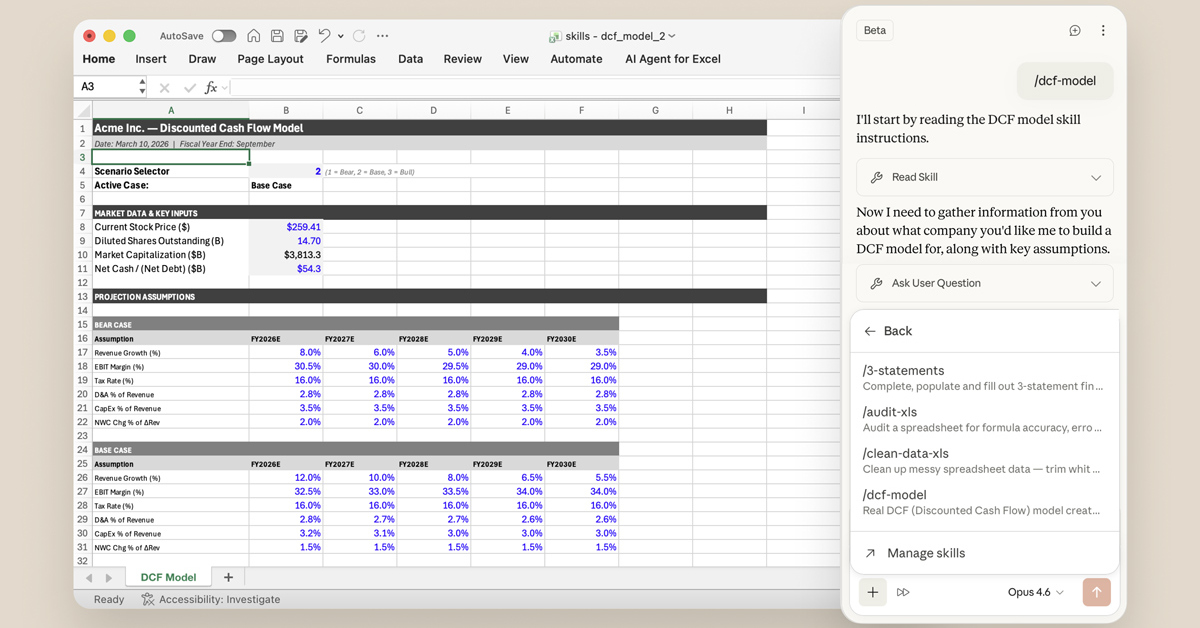

What Changed

Anthropic's CEO met with the US government to discuss the cybersecurity risks of the Mythos model.

Why It Matters

Improved US government-AI firm relations could lead to better safety standards and reduced regulatory hurdles for Anthropic's deployments.

What To Do Next

Review Anthropic's safety reports on Mythos for insights into enterprise AI security practices.

Who should care: Enterprise & Security Teams

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The friction with the Department of Defense stems from the 'Mythos' model's autonomous capability to identify and exploit zero-day vulnerabilities in critical infrastructure, which the Pentagon classified as a national security threat.

- Anthropic has proposed a 'Red-Teaming Sandbox' initiative, allowing government agencies to conduct continuous, real-time stress testing on Mythos before any public or enterprise deployment.

- The meeting marks a strategic pivot for Anthropic, shifting from a 'safety-first' internal development model to a 'co-regulatory' framework, potentially setting a precedent for how frontier AI labs interact with the White House.

📊 Competitor Analysis

| Feature | Anthropic (Mythos) | OpenAI (GPT-6) | Google (Gemini 2.0 Ultra) |
|---|---|---|---|
| Primary Focus | Cybersecurity/Infrastructure Defense | General Reasoning/Agentic Workflows | Multimodal Integration/Search |
| Deployment Strategy | Restricted/Government-Partnered | Open API/Enterprise | Cloud-Native/Ecosystem |
| Safety Architecture | Constitutional AI + Sandbox Red-Teaming | RLHF + Tiered Safety Layers | Guardrail-Integrated Infrastructure |

🔮 Future Implications

AI analysis grounded in cited sources.

Anthropic will implement a mandatory 'Government-Access' API layer for all future frontier models.

The push for trust recovery necessitates providing federal agencies with direct, privileged access to monitor model outputs for national security compliance.

The Department of Defense will formalize a procurement ban on AI models that lack verifiable, real-time red-teaming capabilities.

The recent conflict over Mythos highlights a shift in defense policy toward prioritizing verifiable safety protocols over raw model performance.

⏳ Timeline

2025-09

Anthropic announces the initial development phase of the Mythos model architecture.

2026-01

Department of Defense issues a formal warning regarding the potential dual-use risks of Mythos.

2026-03

Anthropic releases a limited technical white paper on Mythos, triggering further scrutiny from federal regulators.


Original source: ITmedia AI+ (Japan)