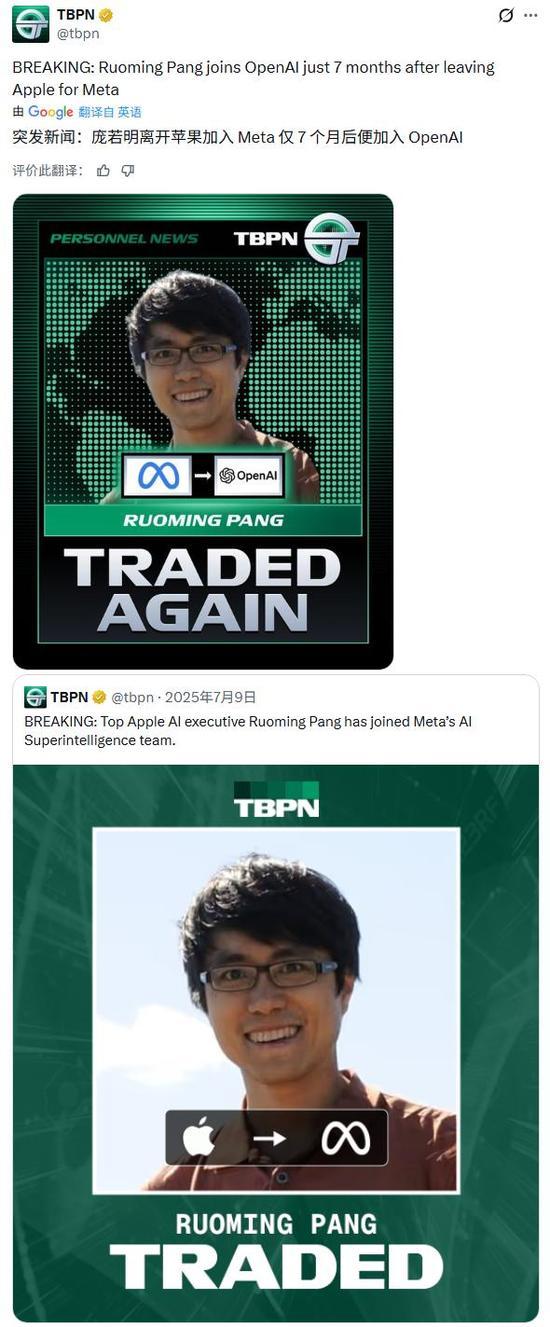

Pang Ruoming Joins OpenAI from Meta

💡 OpenAI poaches Meta's foundation model star: the talent shift may signal model breakthroughs ahead.

⚡ 30-Second TL;DR

What Changed

Pang Ruoming, a foundation model expert, was hired by OpenAI from Meta on Feb 26.

Why It Matters

Escalates the talent war among AI labs and could strengthen OpenAI's frontier model work. It also signals how highly Chinese AI expertise is valued in the global race.

What To Do Next

Review Pang Ruoming's Apple/Meta publications on arXiv for foundation model techniques.

🧠 Deep Insight

Web-grounded analysis with 5 cited sources.

🔑 Enhanced Key Takeaways

- Pang spent over 15 years at Google, co-founding Zanzibar (Google's global authorization system) and contributing to Bigtable-based search systems and the Lingvo deep learning framework for TPUs[1][2][4].

- At Apple since 2021, Pang led a team of 100+ engineers developing foundation models for Apple Intelligence, including multimodal capabilities, the MM1 model, and the open-source AXLearn framework[1][4].

- Pang joined Meta's Superintelligence Labs (MSL) in July 2025 to oversee AI infrastructure under Alexandr Wang and Nat Friedman, amid Zuckerberg's aggressive recruitment drive[1][3].

🛠️ Technical Deep Dive

- Co-founded Google's Zanzibar authorization platform (2012-2017), which is used across Google services, and improved its reliability to 99.999% as sole lead[1][4].

- Contributed to the Bigtable indexed structured search and ZipIt projects at Google, which were adopted by over 1,000 internal projects[4].

- Co-led development of the Babelfish/Lingvo deep learning framework at Google Brain, the most widely used framework on TPUs, surpassing AdBrain and DeepMind[4].

- At Apple, led end-to-end large model development: pre-training architectures, post-training tuning, inference efficiency, and multimodal text/image capabilities[4].

- Contributed to Apple's MM1 multimodal large model and to AXLearn, an open-source framework for efficient large-scale AI training (2.1k GitHub stars)[4].

📎 Sources (5)

Factual claims are grounded in the sources below. Forward-looking analysis is AI-generated interpretation.

- businesstoday.in — From Apple to Meta to OpenAI in 7 Months: Who Is Ruoming Pang (2026-02-26)

- timesofindia.indiatimes.com — 128822996

- observer.com — Ruoming Pang, Apple AI Executive, Poached by Meta

- eu.36kr.com — 3372785740699142

- tipranks.com — OpenAI Poaches Former Apple Leader From Meta as Big Tech AI Clash Deepens

AI-curated news aggregator. All content rights belong to original publishers.

Original source: cnBeta (Full RSS)