OpenAI Builds Interruptible Voice Model

💡 OpenAI's voice model now adapts to interruptions instantly, a game-changer for real-time voice AI apps.

⚡ 30-Second TL;DR

What Changed

A bidirectional (full-duplex) model handles user interruptions in real time.

Why It Matters

Advances voice AI toward human-like conversations, enhancing applications in assistants and telephony for more engaging user experiences.

What To Do Next

Experiment with the current ChatGPT voice mode to see how it handles interruptions today and to anticipate the bidirectional improvements.

🧠 Deep Insight

Web-grounded analysis with 7 cited sources.

🔑 Enhanced Key Takeaways

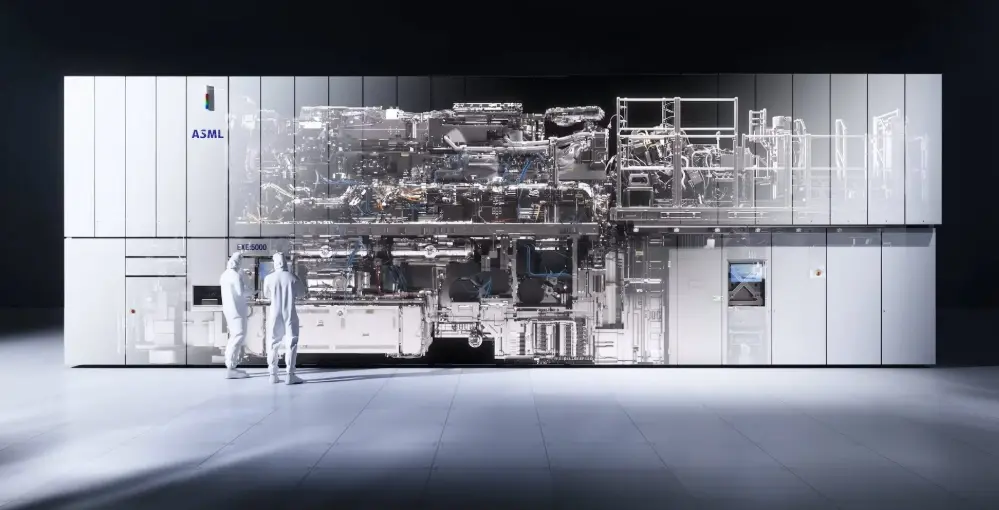

- OpenAI unified its engineering, product, and research teams in January 2026 specifically to build new audio models and personal devices, signaling a strategic pivot toward voice as the primary human-computer interface[4].

- The new audio model scheduled for Q1 2026 features full-duplex conversation capability, enabling the AI to speak simultaneously with users while handling real-time interruptions with near-zero latency[2][4].

- OpenAI acquired design firm io (founded by Jony Ive) for $6.5 billion in May 2025, indicating serious hardware ambitions to create a voice-centric AI device by 2026–2027[4].

- Advanced Voice Mode, launched in July 2024, processes audio directly without text conversion intermediaries, delivering superior conversational fluency compared to Standard Voice Mode and traditional voice assistants[3][4].

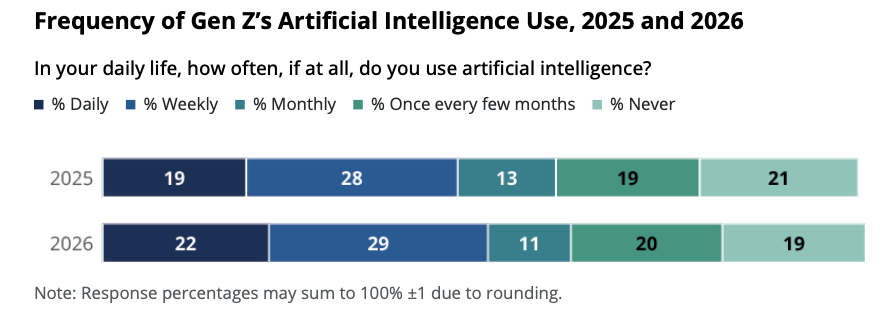

- ChatGPT's voice capabilities have driven user growth to 900 million weekly active users by February 2026, more than double the 400 million of February 2025, outpacing legacy voice assistants like Siri and Alexa[4].

📊 Competitor Analysis

| Feature | ChatGPT Advanced Voice Mode | Traditional Voice Assistants (Siri/Alexa/Google Assistant) | ChatGPT Standard Voice Mode |
|---|---|---|---|
| Conversation Type | Full-duplex, real-time interruption handling | Single-turn, limited multi-turn dialogue | Speech-to-text-to-speech conversion |
| Response Latency | Under 3 seconds; near-zero latency targeted for the Q1 2026 model | Variable, typically 1-2 seconds | 3+ seconds |
| Emotional Expression | Recognizes and expresses sarcasm, empathy, excitement | Limited emotional nuance | Minimal emotional variation |
| Language Support | 50+ languages with continuous translation | 10-20 languages | 50+ languages |
| Underlying Technology | Large Language Models (LLMs) with deep learning | Traditional NLP systems | LLM-based but text-intermediated |
| User Base (Feb 2026) | 900M weekly active users | Declining market share | Being phased out |
| Pricing | $20/month for Advanced Mode; free tier available | Free (embedded in devices) | Free (being retired) |
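
The "speech-to-text-to-speech" and "text-intermediated" entries in the table describe a cascaded design, in which the language model only ever sees a transcript. The toy sketch below illustrates why that can flatten emotional nuance. The `Utterance` type, the `tone` field, and both functions are hypothetical stand-ins for illustration, not OpenAI's implementation or API.

```python
from dataclasses import dataclass


@dataclass
class Utterance:
    """Hypothetical stand-in for a spoken turn."""
    words: str
    tone: str  # stands in for prosody carried by the audio signal


def cascaded_view(u: Utterance) -> dict:
    """What a text-intermediated pipeline passes to the language model."""
    # Speech-to-text keeps only the words; tone is dropped at this step.
    transcript = u.words
    return {"words": transcript}


def direct_view(u: Utterance) -> dict:
    """What an audio-native model could, in principle, still observe."""
    return {"words": u.words, "tone": u.tone}


if __name__ == "__main__":
    turn = Utterance(words="great, another meeting", tone="sarcastic")
    print(cascaded_view(turn))
    print(direct_view(turn))
```

In the cascaded view the sarcasm is unrecoverable once the audio has been reduced to text, which is consistent with the "minimal emotional variation" entry for Standard Voice Mode.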

🛠️ Technical Deep Dive

- Architecture: Advanced Voice Mode processes audio directly without text conversion, enabling lower latency and better emotional expression preservation[3]

- Speech Recognition (Standard Voice Mode): Whisper, OpenAI's open-source speech recognition system, transcribes spoken words into text[1]

- Text-to-Speech (Standard Voice Mode): A text-to-speech model generates human-like audio from text and a few seconds of sample speech[1]

- Full-Duplex Capability: Q1 2026 model designed to handle real-time interruptions, speak simultaneously with users, and respond faster than current voice implementations[2][4]

- Voice Synthesis: OpenAI worked with professional voice actors to create five distinct voice options, and the same technology powers third-party features such as Spotify's Voice Translation[1]

- Latency Target: Near-zero latency for the new flagship audio model, addressing earlier screenless AI device failures caused by latency and reliability issues[2]
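
The interruption handling described in the list above is often called barge-in. As a minimal sketch, assuming a voice-activity flag is available on every audio tick, a full-duplex loop can be simulated as follows. `DuplexSession`, `simulate`, and the chunk and flag inputs are hypothetical names chosen for illustration; this is not OpenAI's model or API, only a toy of the control flow.

```python
from dataclasses import dataclass, field
from typing import Iterable, List


@dataclass
class DuplexSession:
    """Toy full-duplex loop: the microphone is checked on every tick,
    even while the assistant is still playing audio."""
    pending: List[str] = field(default_factory=list)  # reply chunks not yet played
    played: List[str] = field(default_factory=list)   # reply chunks already played
    interruptions: int = 0

    def say(self, chunks: Iterable[str]) -> None:
        """Queue a reply for playback, one chunk per tick."""
        self.pending = list(chunks)

    def tick(self, user_speaking: bool) -> None:
        if user_speaking and self.pending:
            # Barge-in: the user spoke over the reply, so drop the rest of it.
            self.pending.clear()
            self.interruptions += 1
        elif self.pending:
            self.played.append(self.pending.pop(0))


def simulate(chunks: Iterable[str], mic_activity: Iterable[bool]) -> DuplexSession:
    """Play `chunks` while reading one voice-activity flag per tick."""
    session = DuplexSession()
    session.say(chunks)
    for user_speaking in mic_activity:
        session.tick(user_speaking)
    return session


if __name__ == "__main__":
    s = simulate(["Sure,", "the", "forecast", "is"], [False, False, True, False])
    print(s.played, s.interruptions)  # ['Sure,', 'the'] 1
```

A half-duplex system would ignore the microphone until playback finished. Checking input on every tick is what lets the reply stop mid-sentence.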

📎 Sources (7)

Factual claims are grounded in the sources below. Forward-looking analysis is AI-generated interpretation.

- [1] OpenAI: ChatGPT Can Now See, Hear, and Speak

- [2] youtube.com: Watch

- [3] qcall.ai: ChatGPT Voice Mode Review

- [4] emarketer.com: FAQ on Voice AI How ChatGPT OpenAI Eclipsing Siri Alexa

- [5] help.openai.com: 6825453 ChatGPT Release Notes

- [6] community.openai.com: 1372477

- [7] medialist.info: ChatGPT 2026 the Voice Becomes the Interface

Original source: cnBeta (Full RSS)