☁️ AWS Machine Learning Blog

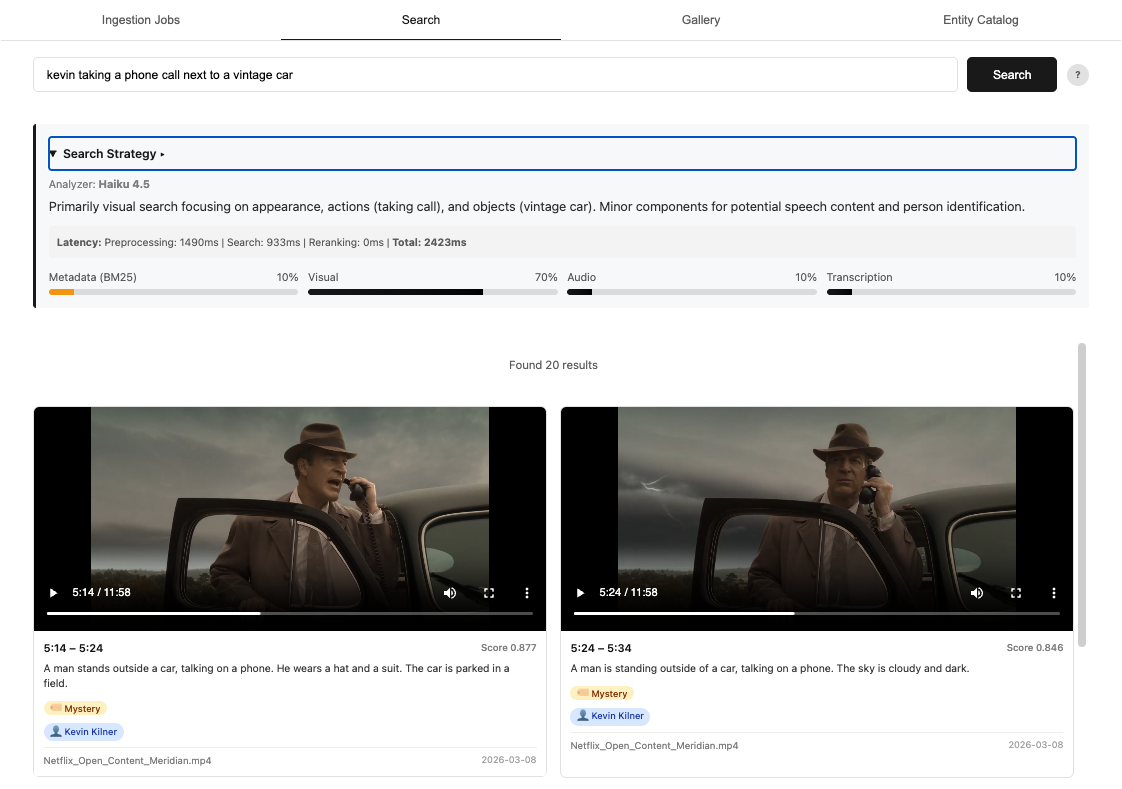

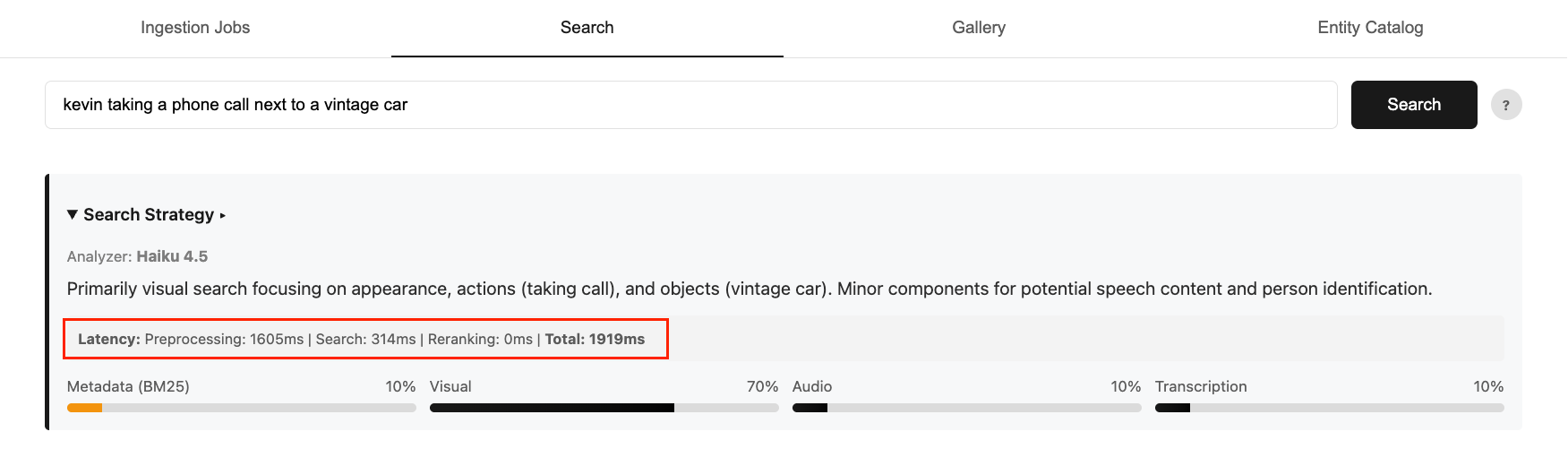

Nova Model Distillation Optimizes Video Search

💡 Cut video search costs by up to 95% and speed up inference by 50% with Nova model distillation on Amazon Bedrock

⚡ 30-Second TL;DR

What Changed

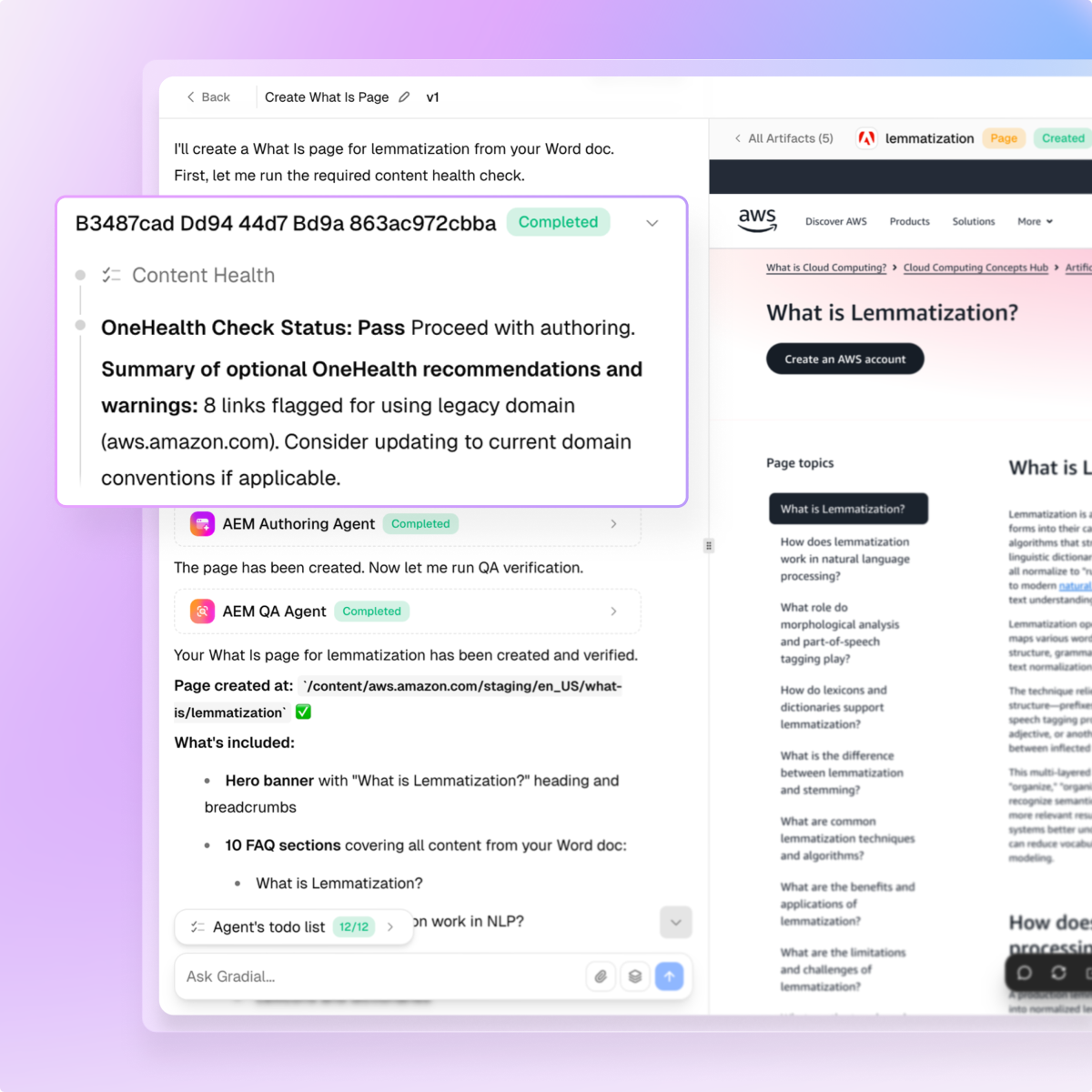

Model Distillation customizes models on Amazon Bedrock

Why It Matters

Drastically lowers costs and speeds up inference for production video search apps. Enables scalable AI deployments without quality loss, benefiting AWS users in multimedia applications.

What To Do Next

Deploy Model Distillation on Amazon Bedrock using Nova Premier as teacher for your video routing tasks.
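As a rough starting point, the request for such a distillation job could be assembled as below. This is a minimal sketch, assuming the boto3 `bedrock` client's `create_model_customization_job` call with `customizationType="DISTILLATION"`. The model identifiers, region, bucket paths, and role ARN are placeholders and assumptions, not values from the source; check them against your account before submitting anything.

```python
from typing import Any, Dict


def build_distillation_job_request(
    job_name: str,
    custom_model_name: str,
    role_arn: str,
    training_s3_uri: str,
    output_s3_uri: str,
    student_model_id: str = "amazon.nova-micro-v1:0",    # assumed identifier
    teacher_model_id: str = "amazon.nova-premier-v1:0",  # assumed identifier
    max_response_tokens: int = 1000,
) -> Dict[str, Any]:
    """Assemble keyword arguments for create_model_customization_job."""
    return {
        "jobName": job_name,
        "customModelName": custom_model_name,
        "roleArn": role_arn,
        # The student is the base model that gets customized.
        "baseModelIdentifier": student_model_id,
        "customizationType": "DISTILLATION",
        "trainingDataConfig": {"s3Uri": training_s3_uri},
        "outputDataConfig": {"s3Uri": output_s3_uri},
        "customizationConfig": {
            "distillationConfig": {
                "teacherModelConfig": {
                    "teacherModelIdentifier": teacher_model_id,
                    "maxResponseLengthForInference": max_response_tokens,
                }
            }
        },
    }


def submit(request: Dict[str, Any], region: str = "us-east-1") -> str:
    """Submit the job and return its ARN. Requires AWS credentials."""
    import boto3  # imported lazily so the builder works without AWS access

    client = boto3.client("bedrock", region_name=region)
    return client.create_model_customization_job(**request)["jobArn"]
```

Keeping the request builder separate from `submit` lets you review or unit-test the payload before any billable job is created.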

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The distillation process utilizes a 'teacher-student' architecture where Nova Micro is trained on the log-probability distributions of Nova Premier, specifically optimizing for the classification heads used in intent routing.

- This implementation leverages Amazon Bedrock's managed distillation pipeline, which automates the synthetic data generation process by using the teacher model to label unlabeled video metadata sets.

- The performance gains are specifically attributed to the reduction in KV cache memory footprint, allowing Nova Micro to run on smaller, more cost-effective instance types within the Bedrock infrastructure.
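Because the managed pipeline has the teacher label the data, the input you supply can be prompts only. The sketch below turns unlabeled video metadata into prompt-only JSONL records. It assumes the `bedrock-conversation-2024` conversation schema for distillation input; the intent names, metadata fields, and helper names are hypothetical and do not come from the source.

```python
import json
from typing import Dict, Iterable, List

# Hypothetical intent categories; the source does not list the real ones.
INTENTS = ["visual_search", "transcript_search", "metadata_lookup"]


def to_prompt_record(video_metadata: Dict[str, str], intents: List[str]) -> Dict:
    """Build one unlabeled prompt; the teacher supplies the answer later."""
    system = (
        "Classify the video search request into exactly one intent: "
        + ", ".join(intents)
        + "."
    )
    # Serialize the metadata deterministically so records are reproducible.
    user = json.dumps(video_metadata, sort_keys=True)
    return {
        "schemaVersion": "bedrock-conversation-2024",  # assumed schema name
        "system": [{"text": system}],
        "messages": [{"role": "user", "content": [{"text": user}]}],
    }


def to_jsonl(records: Iterable[Dict]) -> str:
    """Serialize records as JSON Lines, one record per line."""
    return "\n".join(json.dumps(record) for record in records)
```

The resulting text can be uploaded to the S3 location referenced by the distillation job's training data configuration.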

📊 Competitor Analysis

| Feature | Amazon Bedrock (Nova Distillation) | Google Vertex AI (Model Garden) | OpenAI (Fine-tuning API) |
|---|---|---|---|
| Distillation Method | Managed Teacher-Student | Custom Pipeline | Standard Fine-tuning |
| Primary Focus | Cost/Latency Optimization | Model Customization | Task Performance |
| Video Search Routing | Native Support | Requires Custom Integration | Requires Custom Integration |
| Cost Reduction | Up to 95% | Varies by Model | N/A (Performance focus) |

🛠️ Technical Deep Dive

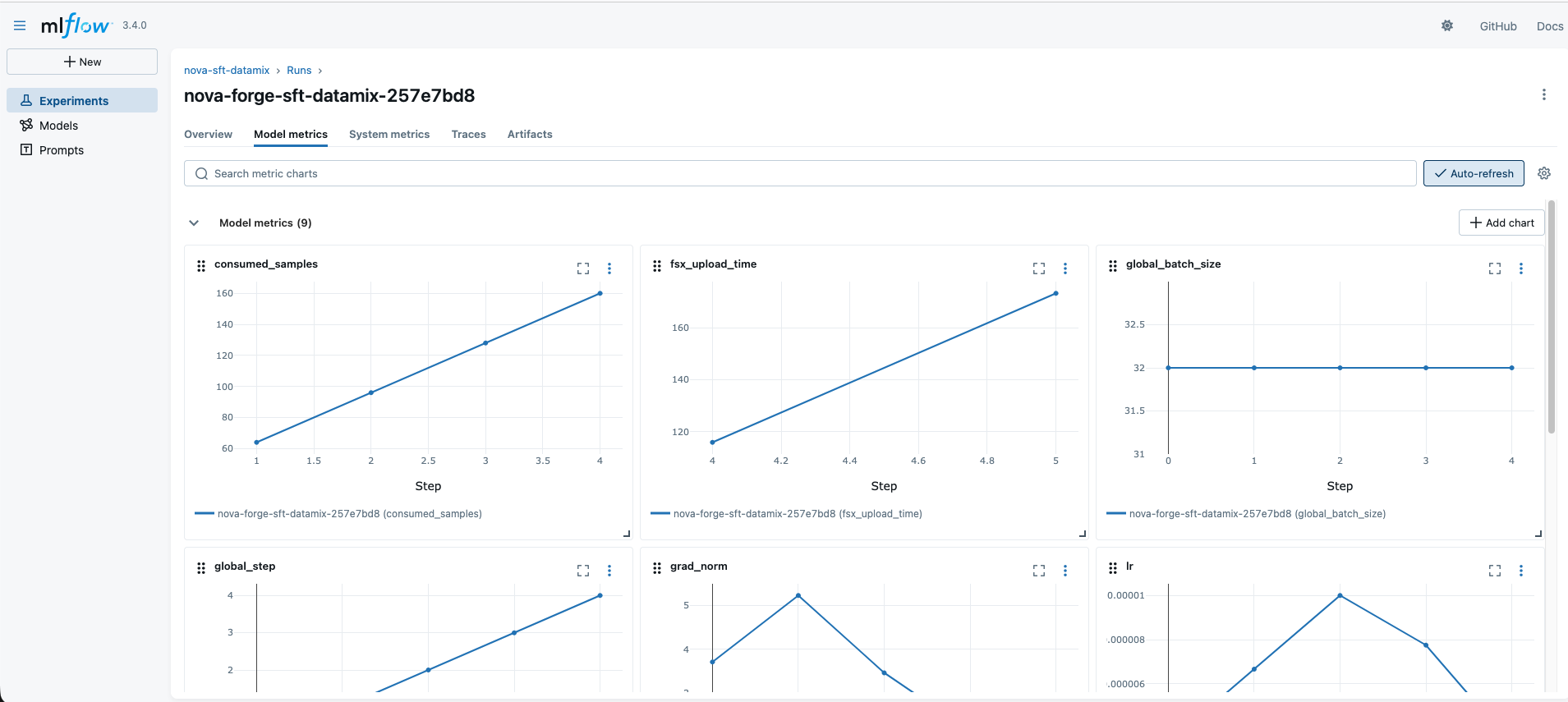

- Distillation uses a knowledge distillation (KD) loss that minimizes the Kullback-Leibler (KL) divergence between the teacher's and the student's output probability distributions (the softmax of their logits).

- The routing mechanism employs a lightweight classification head added to the Nova Micro architecture, trained specifically to map video semantic embeddings to intent categories.

- Deployment uses Amazon Bedrock Provisioned Throughput to serve the distilled student model, whose weights have been tuned for the target video search domain.

- The process involves a multi-stage training pipeline: (1) teacher inference on raw video metadata, (2) dataset curation of high-confidence teacher outputs, (3) supervised fine-tuning of the student model.
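The KL-based objective and the curation stage described above can be illustrated with a small standard-library sketch. It shows the textbook temperature-scaled distillation loss and a simple confidence filter. Whether Bedrock's managed pipeline uses this exact objective is not stated in the source, and the temperature, threshold, and function names here are illustrative assumptions.

```python
import math
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kd_loss(
    teacher_logits: Sequence[float],
    student_logits: Sequence[float],
    temperature: float = 2.0,  # assumed value, not from the source
) -> float:
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    # Guard against log(0) if a student probability underflows.
    kl = sum(pi * math.log(pi / max(qi, 1e-12)) for pi, qi in zip(p, q) if pi > 0.0)
    return kl * temperature ** 2


def keep_high_confidence(
    examples: Sequence[Tuple[str, Sequence[float]]],
    threshold: float = 0.9,  # assumed cutoff for stage (2) curation
) -> List[str]:
    """Keep inputs whose top teacher probability meets the threshold."""
    return [text for text, logits in examples if max(softmax(logits)) >= threshold]
```

The loss is zero when student and teacher agree exactly and grows as their distributions diverge, so the filter in `keep_high_confidence` matters: low-confidence teacher outputs would otherwise pull the student toward uncertain labels.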

🔮 Future Implications

AI analysis grounded in cited sources

Automated distillation will become the standard for edge-deployed LLMs.

The significant cost and latency improvements demonstrated by Nova Micro suggest that cloud-to-edge model migration will increasingly rely on automated distillation pipelines.

Intent routing will shift from heuristic-based to model-based systems.

The high accuracy of distilled routing models makes traditional rule-based intent classification obsolete for complex, multi-modal search applications.

⏳ Timeline

2024-12

Amazon announces the launch of the Amazon Nova model family.

2025-05

Amazon Bedrock introduces managed model distillation features for enterprise customers.

2026-02

Expansion of Nova Micro capabilities to support specialized domain-specific routing tasks.


Original source: AWS Machine Learning Blog