☁️ AWS Machine Learning Blog

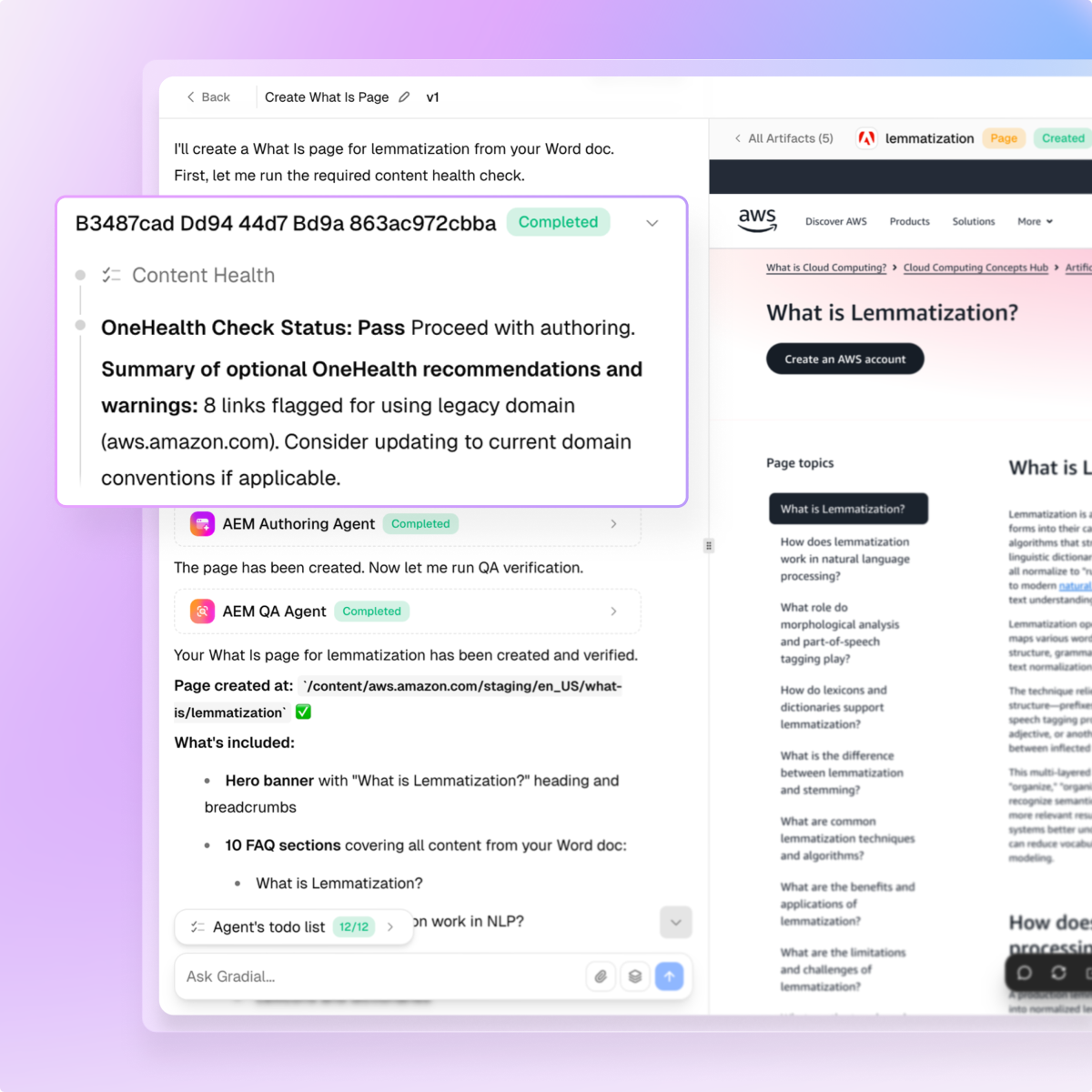

Fine-Tune Nova Models with Data Mixing

💡Master fine-tuning AWS Nova models with data mixing for custom AI apps

⚡ 30-Second TL;DR

What Changed

Step-by-step data preparation for fine-tuning

Why It Matters

Empowers developers to customize powerful Nova models efficiently, accelerating AI application development on AWS.

What To Do Next

Install Nova Forge SDK and run the data mixing fine-tuning tutorial on a Nova model.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- Amazon Nova models use a multi-modal architecture designed for high-throughput, low-latency inference, distinguishing them from general-purpose LLMs by optimizing for cost-efficiency in production environments.

- The Nova Forge SDK implements a proprietary data-mixing strategy that lets developers dynamically weight synthetic data against curated human-annotated datasets to mitigate catastrophic forgetting during fine-tuning.

- Integration with Amazon Bedrock's managed infrastructure enables automated checkpointing and distributed training orchestration, reducing the operational overhead typically associated with custom model fine-tuning pipelines.
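The weighting idea in the takeaways can be made concrete with a small sketch. The Python below is not the Nova Forge SDK API; `mix_counts` and the source names `human` and `synthetic` are hypothetical, chosen only to show how a target mixing ratio could translate into per-source example counts for a fixed training budget.

```python
def mix_counts(weights: dict[str, float], total: int) -> dict[str, int]:
    """Split `total` examples across sources in proportion to `weights`.

    Hypothetical helper for illustration; not part of any AWS SDK.
    Uses largest-remainder rounding so the counts sum exactly to `total`.
    """
    if total < 0:
        raise ValueError("total must be non-negative")
    if not weights or any(w < 0 for w in weights.values()):
        raise ValueError("weights must be non-empty and non-negative")
    norm = sum(weights.values())
    if norm == 0:
        raise ValueError("at least one weight must be positive")

    # Ideal (fractional) share of the budget for each source.
    exact = {name: total * w / norm for name, w in weights.items()}
    # Round down first, then hand out the leftover examples one by one
    # to the sources with the largest fractional remainders.
    counts = {name: int(share) for name, share in exact.items()}
    leftover = total - sum(counts.values())
    by_remainder = sorted(
        exact, key=lambda name: exact[name] - counts[name], reverse=True
    )
    for name in by_remainder[:leftover]:
        counts[name] += 1
    return counts


if __name__ == "__main__":
    # Assumed 7:3 ratio of human-annotated to synthetic data.
    print(mix_counts({"human": 7, "synthetic": 3}, total=1000))
```

With weights of 7:3 and a budget of 1,000 examples, this yields 700 human-annotated and 300 synthetic examples. Largest-remainder rounding is one reasonable choice here because it keeps the counts summing to the budget.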

📊 Competitor Analysis

| Feature | Amazon Nova (via Forge) | Google Vertex AI (Gemini) | OpenAI (Fine-tuning API) |
|---|---|---|---|
| Data Mixing | Native SDK support | Limited/Manual | Limited/Manual |
| Infrastructure | Amazon Bedrock Managed | Vertex AI Managed | OpenAI Managed |
| Model Focus | Cost/Latency Efficiency | Multi-modal/Reasoning | General Purpose/Ecosystem |

🛠️ Technical Deep Dive

- Architecture: Nova models utilize a transformer-based backbone optimized for native multi-modality (text, image, video) without requiring separate adapter layers.

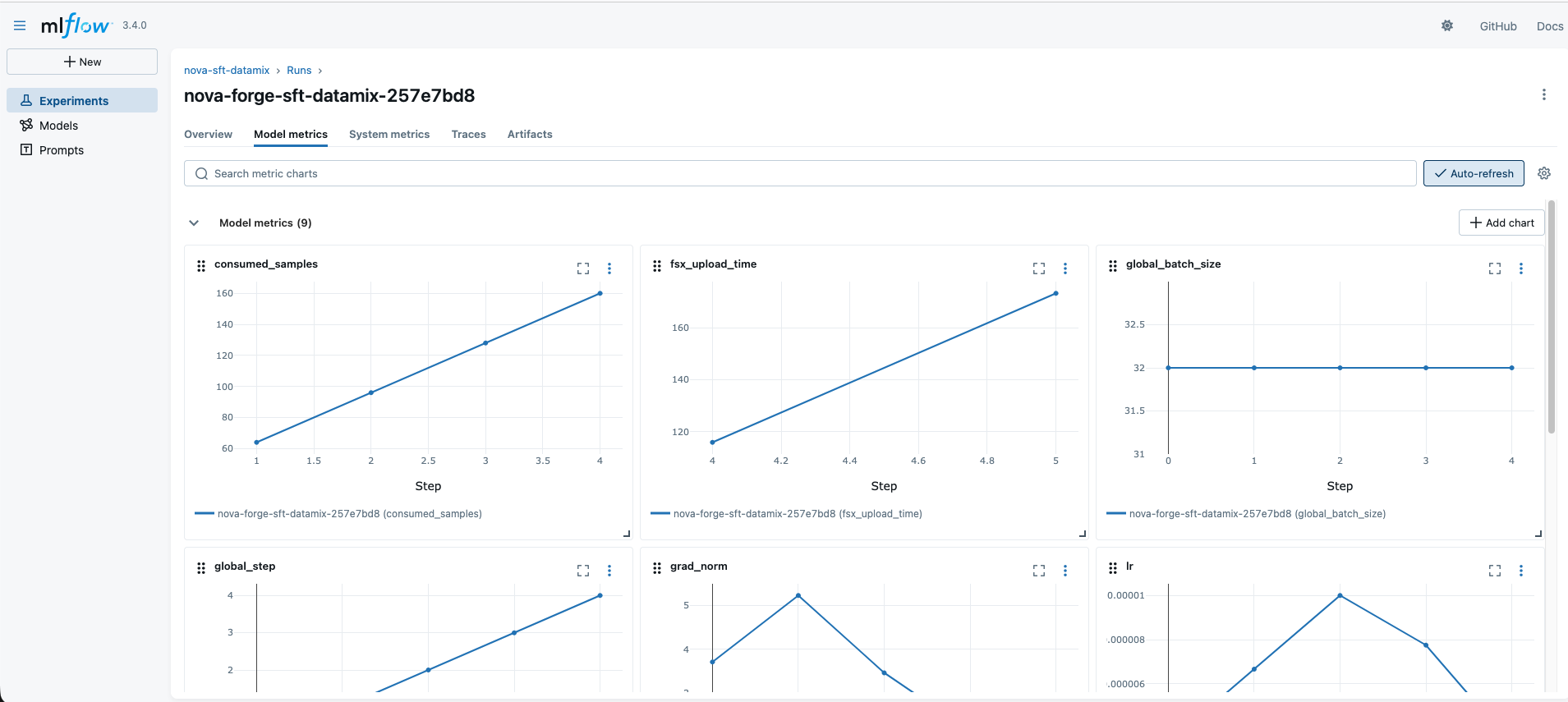

- Data Mixing Mechanism: The Forge SDK employs a weighted sampling algorithm that adjusts the loss function based on the ratio of synthetic-to-human data provided in the training manifest.

- Training Infrastructure: Leverages AWS Trainium and Inferentia accelerators to optimize the fine-tuning process, specifically targeting reduced memory footprint for large context window training.

- Evaluation Framework: Includes automated benchmarks for RAG-specific performance and hallucination rate tracking integrated directly into the training loop.
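The weighted-sampling part of the data mixing mechanism described above can be sketched as follows. This is an illustrative assumption, not the Forge SDK's implementation: `sample_mixed`, the pool names, and the 0.7/0.3 weights are hypothetical, and the loss-function adjustment mentioned in the bullet is not modeled here.

```python
import random
from collections import Counter


def sample_mixed(pools, weights, n, seed=0):
    """Draw `n` (source, example) pairs, choosing the source by weight.

    pools:   dict mapping source name -> non-empty list of examples
    weights: dict mapping source name -> relative sampling weight
    Hypothetical helper for illustration; not an AWS API.
    """
    rng = random.Random(seed)  # seeded so the mix is reproducible
    names = list(pools)
    # Pick a source for every draw in proportion to its weight...
    picked = rng.choices(names, weights=[weights[name] for name in names], k=n)
    # ...then pick one example uniformly from that source's pool.
    return [(name, rng.choice(pools[name])) for name in picked]


if __name__ == "__main__":
    pools = {
        "human": ["human example 1", "human example 2"],
        "synthetic": ["synthetic example 1"],
    }
    batch = sample_mixed(pools, {"human": 0.7, "synthetic": 0.3}, n=10)
    print(Counter(source for source, _ in batch))
```

Sampling with replacement keeps the expected ratio fixed even when one pool is much smaller than the other, at the cost of repeating examples from the smaller pool.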

🔮 Future Implications

AI analysis grounded in cited sources

AWS will transition toward automated data-mixing as a standard feature for all Bedrock fine-tuning jobs.

The success of the Nova Forge SDK's data-mixing capabilities suggests a shift toward reducing the manual effort required for high-quality dataset curation.

Fine-tuning costs for enterprise-grade models will decrease by at least 30% by 2027.

Increased efficiency in training infrastructure and the adoption of automated data-mixing techniques directly lower the compute resources required for model convergence.

⏳ Timeline

2024-12

AWS announces the Amazon Nova foundation model family at AWS re:Invent.

2025-03

Initial release of the Nova Forge SDK for streamlined model customization.

2025-09

Integration of advanced data-mixing capabilities into the Nova Forge SDK.

2026-02

Expansion of fine-tuning support for multi-modal inputs within Amazon Bedrock.

Original source: AWS Machine Learning Blog