Monarch API Unlocks Supercomputer Training

💡Easier supercomputer access for distributed training – vital for scaling RL models.

⚡ 30-Second TL;DR

What Changed

New API for easy distributed training on supercomputers

Why It Matters

This lowers barriers for scaling ML models on supercomputers, enabling faster experimentation for researchers and builders handling massive datasets.

What To Do Next

Test the Monarch API by submitting a sample distributed RL job, following the guide on the PyTorch Blog.

🧠 Deep Insight


🔑 Enhanced Key Takeaways

- Monarch shifts from the traditional multi-controller (SPMD) model to a single-controller architecture, allowing a single Python script to orchestrate distributed resources across an entire cluster as if they were local objects.

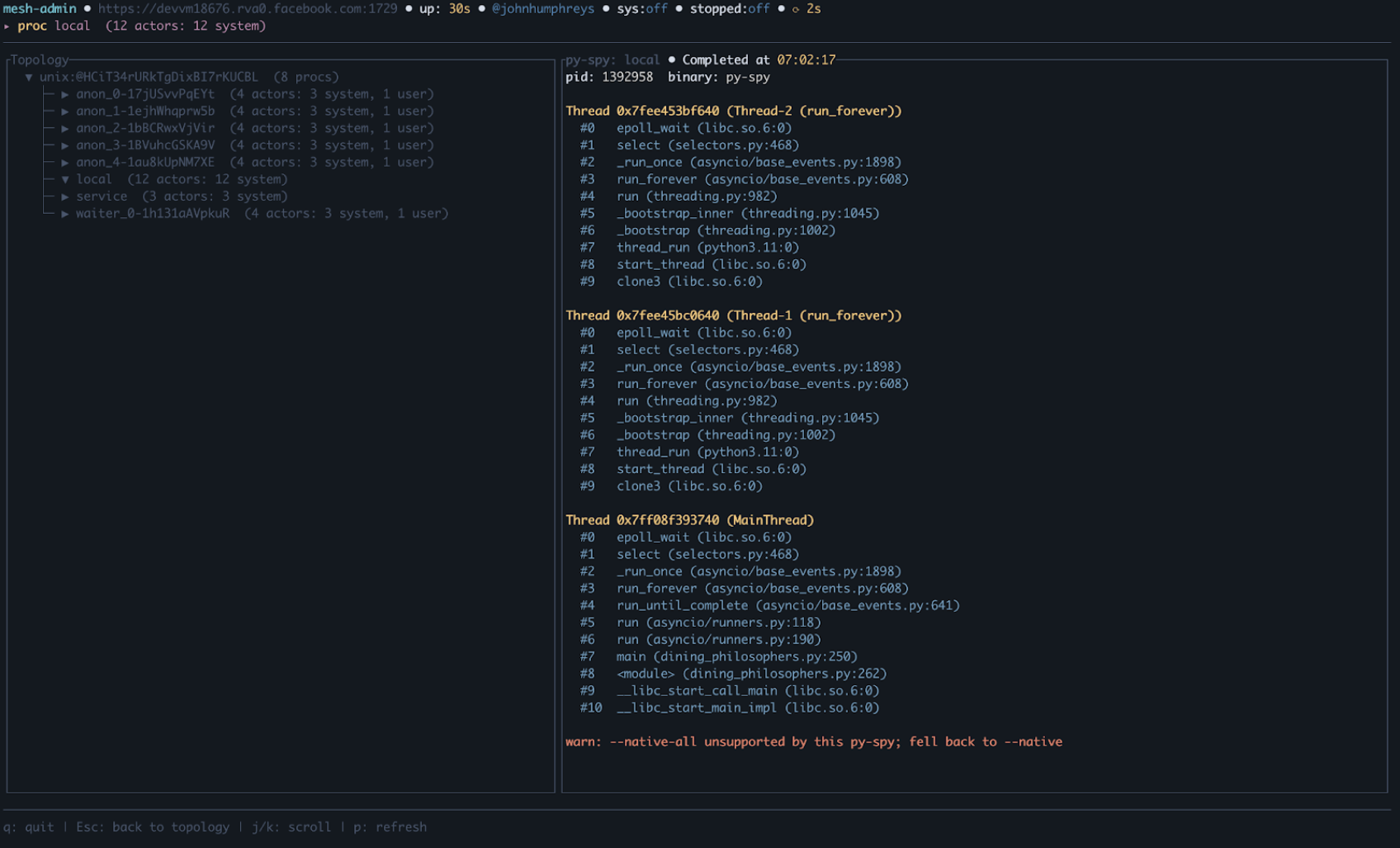

- The framework utilizes 'process meshes' and 'actor meshes' to organize compute resources, enabling developers to slice, broadcast, and manipulate distributed nodes using intuitive Pythonic constructs like loops and futures.

- To optimize performance, Monarch separates the control plane (messaging) from the data plane, utilizing RDMA (Remote Direct Memory Access) for high-throughput, zero-copy GPU-to-GPU data transfers.
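
The single-controller idea in the takeaways above can be illustrated with a toy sketch in plain Python. This is not the Monarch API: `Worker`, `ToyActorMesh`, `spawn`, `slice`, `broadcast`, and `run_controller` are hypothetical names invented for illustration, and local threads stand in for remote processes. It only shows the shape of the programming model, where one script spawns a mesh, broadcasts a call, slices the mesh, and collects results through futures.

```python
from concurrent.futures import ThreadPoolExecutor


class Worker:
    """A toy actor that knows its rank and exposes one method."""

    def __init__(self, rank):
        self.rank = rank

    def square_rank(self, offset):
        return self.rank * self.rank + offset


class ToyActorMesh:
    """Hypothetical stand-in for an actor mesh; NOT the Monarch API."""

    def __init__(self, actors, pool):
        self._actors = list(actors)
        self._pool = pool

    @classmethod
    def spawn(cls, size, pool):
        # Create one actor per rank, all driven by the same controller.
        return cls([Worker(rank) for rank in range(size)], pool)

    def slice(self, start, stop):
        # A slice is a view over a subset of the same actors.
        return ToyActorMesh(self._actors[start:stop], self._pool)

    def broadcast(self, method, *args):
        # Send the same call to every actor; return one future per actor.
        return [
            self._pool.submit(getattr(actor, method), *args)
            for actor in self._actors
        ]


def run_controller():
    # The single controller: one ordinary script drives every worker.
    with ThreadPoolExecutor(max_workers=4) as pool:
        mesh = ToyActorMesh.spawn(4, pool)
        all_results = [f.result() for f in mesh.broadcast("square_rank", 1)]
        upper_half = mesh.slice(2, 4)
        half_results = [f.result() for f in upper_half.broadcast("square_rank", 0)]
    return all_results, half_results
```

Calling `run_controller()` returns the results for all four toy workers and for the sliced subset, in rank order. In a real system the futures would resolve when remote processes reply rather than when local threads finish.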

📊 Competitor Analysis

| Feature | Monarch | Ray | Dask |
|---|---|---|---|
| Primary Model | Single-controller (Orchestration) | Distributed Task/Actor | Distributed Task/Dataframe |
| PyTorch Native | Yes (Deep integration) | Via libraries | Via libraries |
| Data Transfer | RDMA-optimized | Plasma Store / Arrow | Pickle / Cloudpickle |
| Best For | Large-scale PyTorch training/RL | General purpose distributed Python | Data science/parallel computing |

🛠️ Technical Deep Dive

- Architecture: Single-controller model where one script manages process/actor meshes; backend implemented in Rust.

- Communication: Separates control plane (messaging) from data plane (RDMA transfers using libibverbs).

- Fault Tolerance: Implements supervision trees where failures propagate up, enabling fine-grained, user-defined recovery logic.

- Distributed Tensors: Provides sharded tensors that integrate with PyTorch, supporting direct GPU-to-GPU memory transfers.

- Debugging: Supports standard Python pdb breakpoints within remote actor meshes, with a TUI (Terminal User Interface) for mesh administration.
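
The fault-tolerance bullet above describes failures propagating up a supervision tree until user-defined recovery logic handles them. A minimal sketch of that general pattern in plain Python follows. It is not Monarch's implementation or API: `Node`, `ActorFailure`, `report_failure`, and the `on_child_failure` hook are hypothetical names chosen for illustration.

```python
class ActorFailure(Exception):
    """Raised by a toy actor when its work fails."""


class Node:
    """One node in a toy supervision tree (hypothetical; not Monarch's API)."""

    def __init__(self, name, parent=None, on_child_failure=None):
        self.name = name
        self.parent = parent
        # Optional user-defined recovery hook; returns True if it handled the failure.
        self.on_child_failure = on_child_failure

    def report_failure(self, origin, error):
        # Walk up the tree until some supervisor handles the failure.
        node = self.parent
        while node is not None:
            if node.on_child_failure and node.on_child_failure(origin, error):
                return node.name
            node = node.parent
        # No supervisor handled it, so the failure escapes the tree.
        raise error

    def run(self, task):
        try:
            return task()
        except ActorFailure as error:
            handled_by = self.report_failure(self.name, error)
            return f"recovered by {handled_by}"


def failing_step():
    raise ActorFailure("GPU lost")


def demo():
    log = []

    def restart_trainers(origin, error):
        # User-defined recovery: record the failure and claim it as handled.
        log.append((origin, str(error)))
        return True

    root = Node("root")
    trainers = Node("trainers", parent=root, on_child_failure=restart_trainers)
    worker = Node("trainer-0", parent=trainers)
    return worker.run(failing_step), log
```

Here the failure in `trainer-0` is caught by its nearest supervisor with a handler (`trainers`) and never reaches `root`. A node whose ancestors define no handler lets the exception propagate all the way out, which is the default "fail upward" behavior the bullet describes.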




Original source: PyTorch Blog