📋 TestingCatalog • Fresh • collected in 30m

Mistral Launches Medium 3.5 and Le Chat Work Mode

💡 Mistral's 128B model with a 256K context excels in coding and vision. Devs, try the sandbox now!

⚡ 30-Second TL;DR

What Changed

Mistral launched Medium 3.5, a 128B-parameter model with a 256K-token context window, alongside Work Mode in Le Chat.

Why It Matters

The launch adds a 128B-parameter model for coding and vision work to Mistral's lineup, available to developers through tools such as the Vibe CLI. Its sandboxed environments are meant to make experimentation quick to set up.

What To Do Next

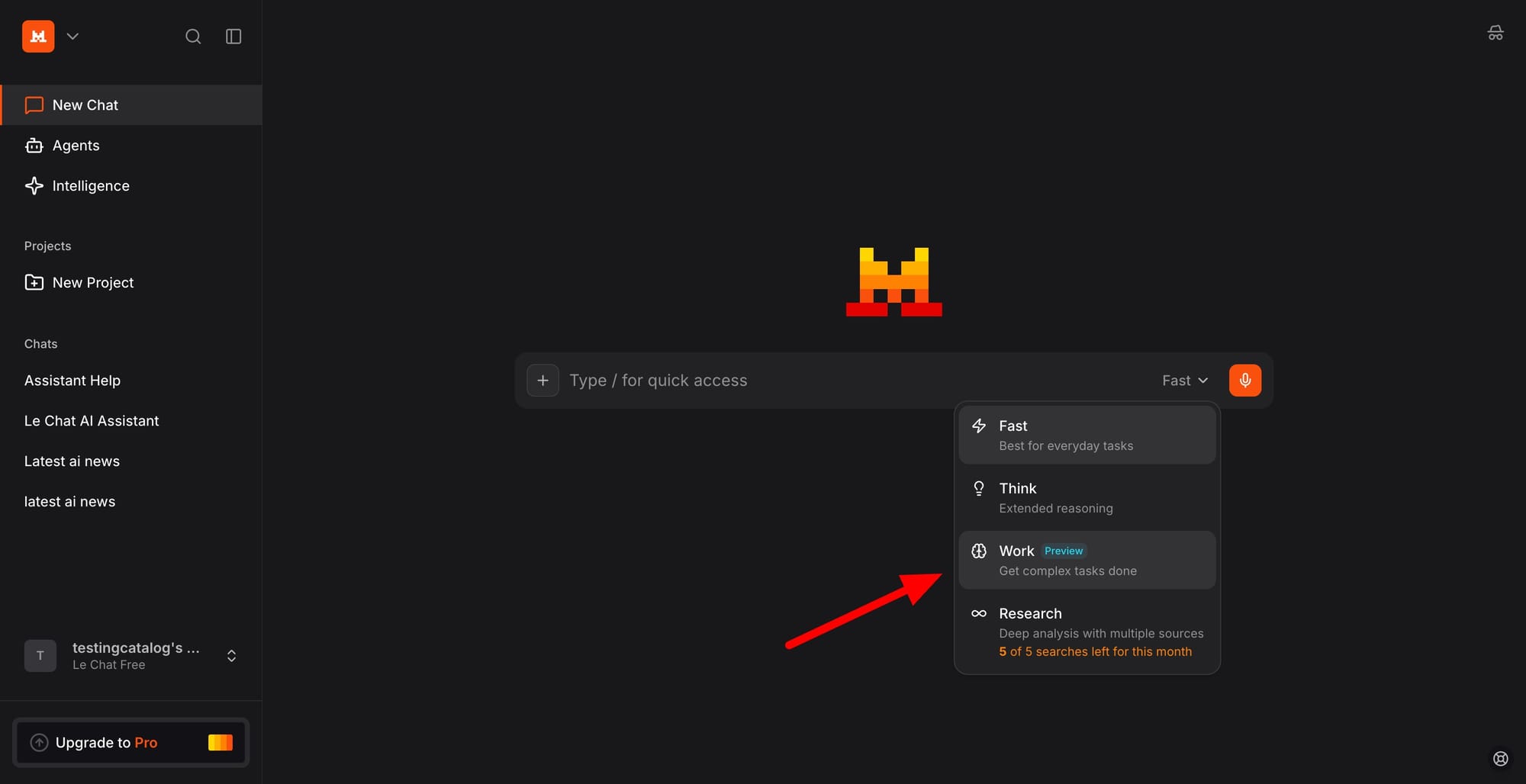

Test Mistral Medium 3.5 via Vibe CLI for sandbox coding and vision tasks.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- •Mistral Medium 3.5 utilizes a novel 'Mixture-of-Experts' (MoE) architecture optimized for lower latency inference compared to its predecessor, Mistral Medium 3.0.

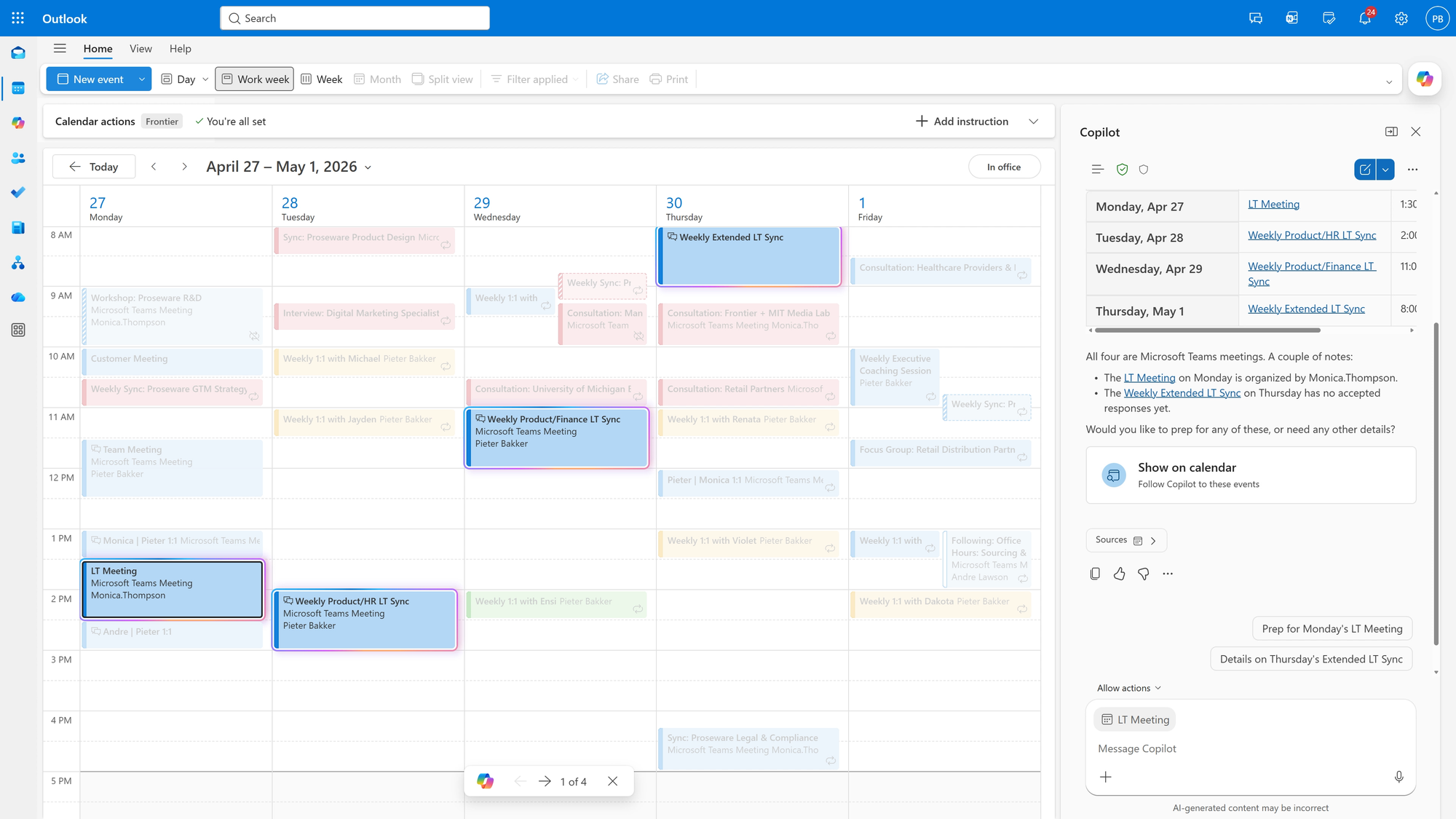

- •The 'Work Mode' in Le Chat integrates directly with enterprise-grade document management systems, allowing for real-time RAG (Retrieval-Augmented Generation) on private company data repositories.

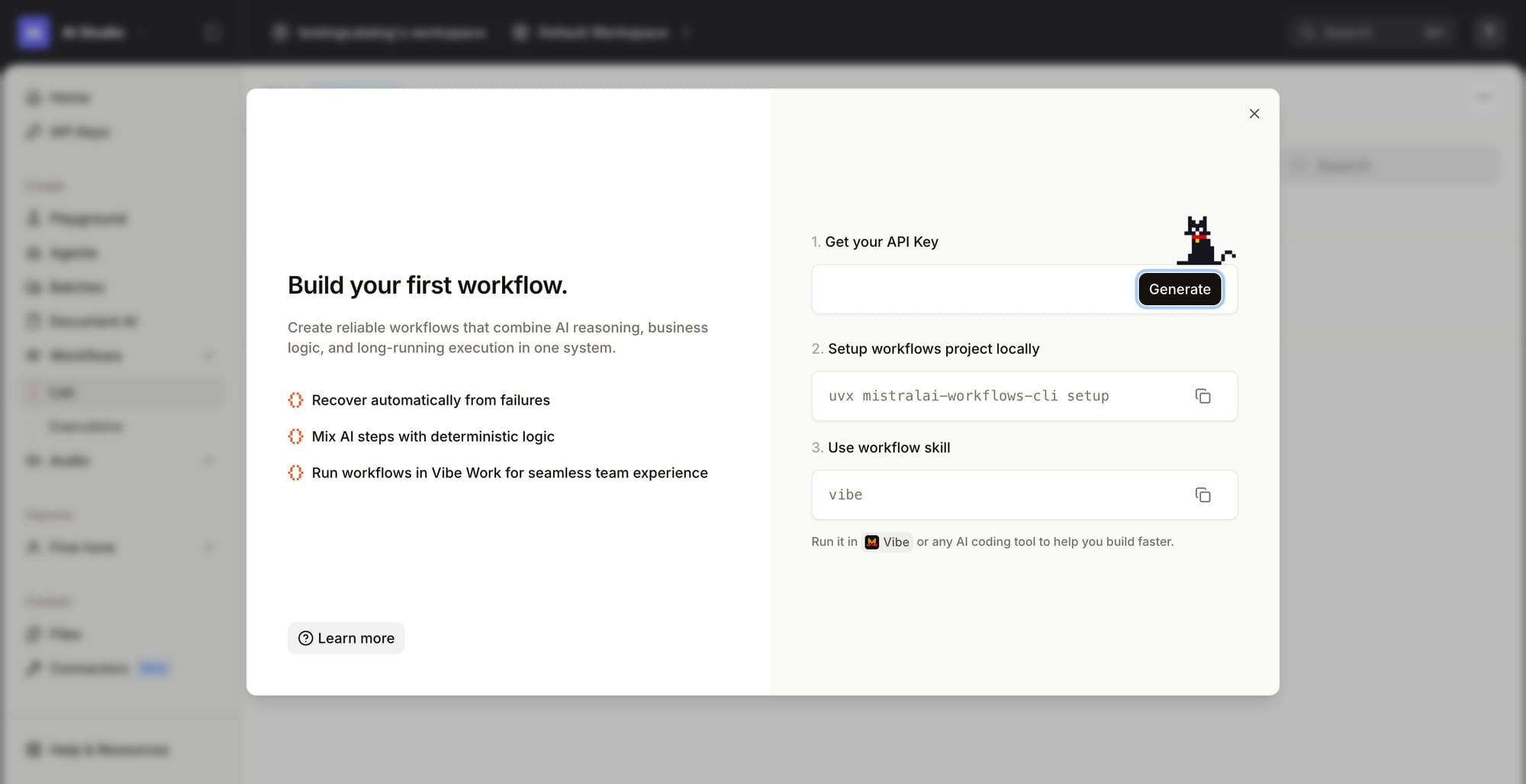

- •The Mistral Vibe CLI introduces a new 'sandbox-as-a-service' protocol that allows developers to containerize model execution environments, ensuring reproducible results across different hardware configurations.
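The latency argument for a Mixture-of-Experts design rests on running only a few experts per token instead of the whole network. The sketch below illustrates generic top-k routing in plain Python. The expert count, the value of `k`, the router logits, and the toy experts are all hypothetical and chosen for illustration; nothing here reflects Mistral's published design.

```python
import math

def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    # Renormalise the gate weights over the chosen experts only.
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))

def moe_forward(x, experts, router_logits, k=2):
    """Weighted sum of the outputs of the k selected experts."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_logits, k))

# Hypothetical toy setup: 8 experts, each simply scales its input.
experts = [lambda x, s=s: s * x for s in range(1, 9)]
router_logits = [0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.5, 0.3]

routes = route_top_k(router_logits, k=2)
output = moe_forward(3.0, experts, router_logits, k=2)
active_fraction = 2 / len(experts)  # share of experts that ran for this token

print("selected experts:", [i for i, _ in routes])
print("active fraction:", active_fraction)
```

Only the selected experts are evaluated, so per-token compute scales with `k` rather than with the total number of experts, while the full parameter set still has to sit in memory.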

📊 Competitor Analysis

| Feature | Mistral Medium 3.5 | OpenAI o3-mini | Anthropic Claude 3.7 Sonnet |
|---|---|---|---|
| Context Window | 256K | 200K | 200K |
| Architecture | MoE (128B) | Reasoning-optimized | Transformer (Dense) |
| Primary Use Case | Coding/Vision/Sandbox | Complex Reasoning | Creative/Analytical Writing |
| Pricing Model | Token-based (Tiered) | Token-based (Tiered) | Token-based (Tiered) |

🛠️ Technical Deep Dive

- Model Architecture: 128B parameter Mixture-of-Experts (MoE) with active parameter usage per token estimated at ~15-20B.
- Context Window: 256K tokens utilizing a sliding-window attention mechanism combined with RoPE (Rotary Positional Embeddings) for long-range dependency management.
- Sandbox Environment: Requires 4x NVIDIA H100/A100 GPUs for full-precision inference; supports FP8 quantization for reduced memory footprint in production.
- Vision Integration: Employs a vision encoder module trained on high-resolution image-text pairs, allowing for native multimodal reasoning without external OCR tools.
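The hardware and active-parameter figures above can be sanity-checked with back-of-the-envelope arithmetic. The sketch below makes several assumptions that are not in the source: "full precision" is read as 16-bit weights (2 bytes per parameter), each GPU is the 80 GB variant, and only weight storage is counted (KV cache and activations are ignored).

```python
TOTAL_PARAMS = 128e9                      # from the article: 128B parameters
BYTES_PER_PARAM = {"bf16": 2, "fp8": 1}   # assumption: "full precision" means 16-bit
GPU_MEMORY_GB = 80                        # assumption: 80 GB H100/A100 variant
NUM_GPUS = 4                              # from the article: 4x GPUs

def weight_memory_gb(num_params, dtype):
    """Memory needed for the weights alone, in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9

def fits(num_params, dtype, num_gpus=NUM_GPUS, gpu_gb=GPU_MEMORY_GB):
    """True if the weights fit in the combined GPU memory (ignores KV cache)."""
    return weight_memory_gb(num_params, dtype) <= num_gpus * gpu_gb

bf16_gb = weight_memory_gb(TOTAL_PARAMS, "bf16")   # 256.0
fp8_gb = weight_memory_gb(TOTAL_PARAMS, "fp8")     # 128.0

# Share of parameters active per token, using the article's ~15-20B estimate.
active_share = (15e9 / TOTAL_PARAMS, 20e9 / TOTAL_PARAMS)

for dtype, size_gb in (("bf16", bf16_gb), ("fp8", fp8_gb)):
    print(f"{dtype}: {size_gb:.0f} GB of weights, "
          f"fits on {NUM_GPUS}x{GPU_MEMORY_GB} GB: {fits(TOTAL_PARAMS, dtype)}")
print(f"active share per token: {active_share[0]:.1%} to {active_share[1]:.1%}")
```

Under these assumptions, 16-bit weights take 256 GB against 320 GB of combined GPU memory, which is consistent with the four-GPU figure, and FP8 halves the weight footprint to 128 GB. The remaining headroom matters in practice because a 256K-token context also needs room for the KV cache.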

🔮 Future Implications

AI analysis grounded in cited sources

Mistral will shift its primary revenue focus toward enterprise-managed sandbox environments.

The introduction of the Vibe CLI sandbox suggests a strategic pivot to capture the developer-tooling market rather than just general-purpose chat users.

The 256K context window will become the new baseline for mid-tier enterprise models by Q4 2026.

Competitors are under pressure to match Mistral's long-context capabilities to maintain parity in enterprise RAG workflows.

⏳ Timeline

- 2023-09: Mistral AI releases Mistral 7B, its first open-weights model.
- 2024-02: Launch of Mistral Large and the Le Chat platform.
- 2024-09: Mistral releases Pixtral 12B, the first model in the Pixtral series, marking its entry into native multimodal models.
- 2026-04: Launch of Mistral Medium 3.5 and Le Chat Work Mode.


Original source: TestingCatalog ↗