📋 TestingCatalog

Mistral Launches Workflows Public Preview

💡 Mistral's enterprise Workflows add durability and fault tolerance for building production AI pipelines.

⚡ 30-Second TL;DR

What Changed

Public preview adds durability and fault tolerance

Why It Matters

Empowers enterprises with reliable AI orchestration, reducing deployment risks for practitioners building production systems.

What To Do Next

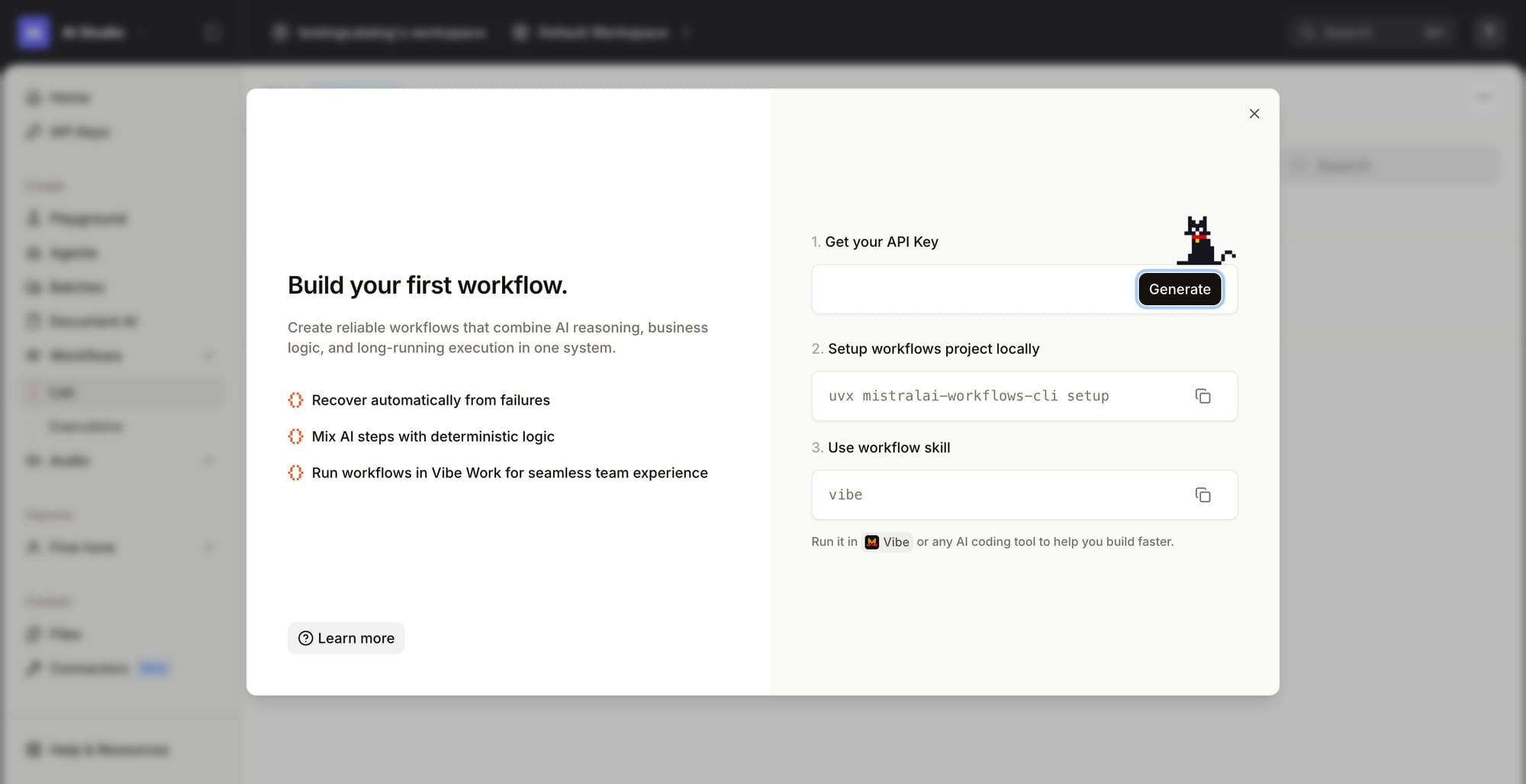

Sign up for the Mistral Workflows preview and test SDK v3.0 Python automations in your pipeline.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- Mistral Workflows utilizes a stateful execution engine that allows for long-running processes, addressing the limitations of standard stateless API calls in complex multi-step AI tasks.

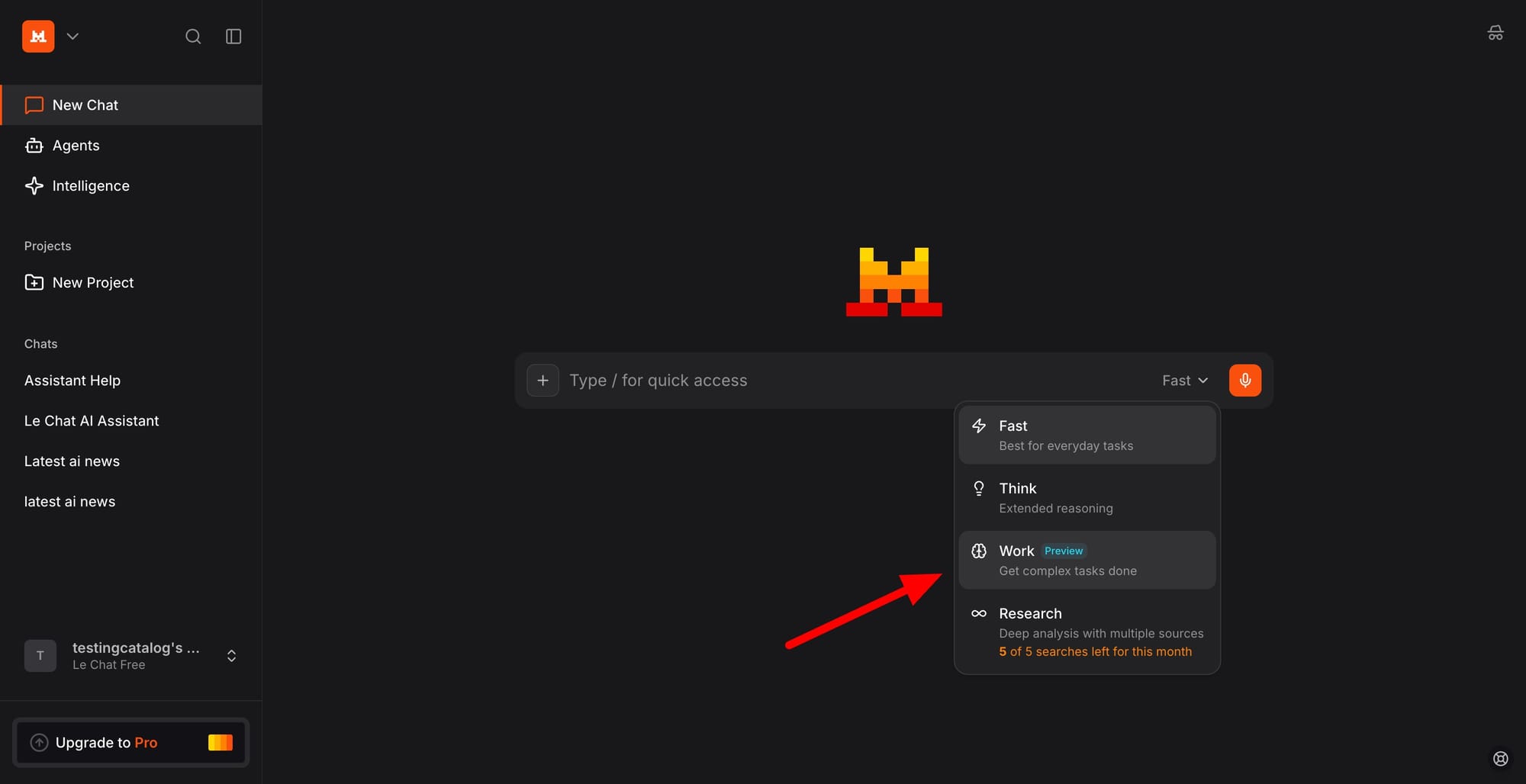

- The integration with Le Chat allows non-technical users to trigger and monitor automated workflows, bridging the gap between conversational AI and backend production systems.

- The SDK v3.0 introduces native support for asynchronous task management and automatic retry logic, specifically designed to handle transient failures in distributed LLM inference environments.
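To make the retry idea concrete, here is a minimal sketch of automatic retries with exponential backoff around an asynchronous call. It uses only the Python standard library and is not the Mistral SDK: `TransientError`, `retry_async`, and `make_flaky_call` are hypothetical names invented for illustration, and the article does not say how SDK v3.0 implements retries.

```python
import asyncio
import random


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, overload)."""


async def retry_async(fn, *, attempts=3, base_delay=0.01):
    """Await the async callable `fn`, retrying on TransientError with backoff."""
    for attempt in range(1, attempts + 1):
        try:
            return await fn()
        except TransientError:
            if attempt == attempts:
                raise  # out of retries: surface the failure to the caller
            # Exponential backoff plus jitter so retries do not synchronize.
            delay = base_delay * 2 ** (attempt - 1)
            await asyncio.sleep(delay + random.uniform(0, delay))


def make_flaky_call(failures):
    """Build a fake inference call that fails `failures` times, then succeeds."""
    state = {"calls": 0}

    async def call():
        state["calls"] += 1
        if state["calls"] <= failures:
            raise TransientError("simulated transient failure")
        return f"ok after {state['calls']} calls"

    return call


if __name__ == "__main__":
    print(asyncio.run(retry_async(make_flaky_call(2))))  # ok after 3 calls
```

Only errors classified as transient are retried here; anything else propagates immediately, which is the usual way to avoid retrying requests that can never succeed.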

📊 Competitor Analysis

| Feature | Mistral Workflows | OpenAI Assistants API | Anthropic Tool Use |
|---|---|---|---|
| State Management | Native Stateful Engine | Managed Thread State | Stateless (Client-side) |
| Fault Tolerance | Built-in Retry/Persistence | Limited/Manual | Manual Implementation |
| Primary Focus | Enterprise Production Pipelines | Agentic Conversational Apps | Model-level Tool Calling |

🛠️ Technical Deep Dive

- Execution Model: Implements a persistent state machine architecture that checkpoints execution progress, allowing workflows to resume from the last successful step after a failure.

- SDK v3.0 Architecture: Utilizes a decorator-based pattern in Python to define workflow steps, enabling seamless serialization of state between asynchronous calls.

- Observability Stack: Provides native integration with OpenTelemetry, allowing enterprises to trace latency and token usage across multi-step chains within their existing monitoring infrastructure.

- Concurrency: Supports parallel execution branches within a single workflow definition, optimized for multi-model routing scenarios.
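The checkpoint-and-resume behavior and the decorator-based step definition described above can be sketched in plain Python. This is an illustrative toy under stated assumptions, not the Mistral SDK: the `step` decorator, `run_workflow`, the JSON checkpoint file, and the three example steps are all hypothetical, and the article does not document the real SDK's interface.

```python
import json
import os
import tempfile

STEPS = []  # ordered registry of (name, function) pairs
CALLS = []  # records which steps actually executed, for demonstration
FAIL_ONCE = {"summarize": True}  # makes one step crash on its first run


def step(fn):
    """Hypothetical decorator: register a function as a workflow step."""
    STEPS.append((fn.__name__, fn))
    return fn


def run_workflow(state, checkpoint_path):
    """Run steps in order, persisting state after each successful step."""
    done = []
    if os.path.exists(checkpoint_path):
        # Resume: reload the state and the list of completed steps.
        with open(checkpoint_path) as f:
            saved = json.load(f)
        state, done = saved["state"], saved["done"]
    for name, fn in STEPS:
        if name in done:
            continue  # finished in an earlier run, so skip it
        state = fn(state)
        done.append(name)
        with open(checkpoint_path, "w") as f:
            json.dump({"state": state, "done": done}, f)
    return state


@step
def extract(state):
    CALLS.append("extract")
    return {**state, "text": "raw document"}


@step
def summarize(state):
    CALLS.append("summarize")
    if FAIL_ONCE.pop("summarize", False):
        raise RuntimeError("simulated crash")
    return {**state, "summary": state["text"].upper()}


@step
def publish(state):
    CALLS.append("publish")
    return {**state, "published": True}


if __name__ == "__main__":
    path = os.path.join(tempfile.mkdtemp(), "checkpoint.json")
    try:
        run_workflow({}, path)  # crashes inside summarize
    except RuntimeError:
        pass
    print(run_workflow({}, path))  # resumes after extract
    print(CALLS)  # ['extract', 'summarize', 'summarize', 'publish']
```

The second run skips `extract` because its result was already persisted, so only the failed step and those after it execute again. A production engine would also need atomic checkpoint writes and idempotent steps, which this sketch leaves out.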

🔮 Future Implications

AI analysis grounded in cited sources

Mistral will shift its primary revenue model toward infrastructure-as-a-service (IaaS) for AI agents.

By providing durable execution environments, Mistral is moving beyond simple model inference to hosting the entire lifecycle of enterprise AI applications.

The Workflows platform will introduce a marketplace for pre-built, industry-specific workflow templates.

The focus on production pipelines suggests a move toward standardizing common enterprise tasks like document processing or automated customer support flows.

⏳ Timeline

2023-04

Mistral AI founded in Paris, France.

2023-09

Release of Mistral 7B, the company's first open-weights model.

2024-02

Launch of Mistral Large and the La Plateforme API service.

2024-06

Introduction of Le Chat as a conversational interface for Mistral models.

2026-04

Public preview launch of Mistral Workflows and SDK v3.0.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: TestingCatalog ↗