🗾 ITmedia AI+ (Japan)

Indian Student Scams MAGA with AI Bikini Pics

💡 AI deepfake scam fools MAGA crowd: key lessons for image authenticity checks

⚡ 30-Second TL;DR

What Changed

A fake influencer, "Emily Hart", was built entirely from AI-generated bikini images.

Why It Matters

The case shows how vulnerable social media users are to scams built on AI-generated deepfakes, underscoring the need for better detection tools and greater user awareness.

What To Do Next

Integrate AI content detectors like Illuminarty into your image pipelines to flag generated fakes.
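Detectors such as Illuminarty analyze the image itself; a cheaper first pass is to inspect whatever metadata the file still carries. The sketch below, in standard-library Python, walks a PNG's chunks and reports two signals: text chunks whose keywords match those that popular Stable Diffusion front ends write (assumed here to be `parameters` for the AUTOMATIC1111 web UI and `prompt`/`workflow` for ComfyUI), and a `caBX` chunk, which we assume is where the C2PA specification places a Content Credentials manifest in PNG files. It does not call Illuminarty or any other detector's API, and `audit_png` and `GENERATOR_KEYS` are hypothetical names. Because metadata is trivially stripped, an empty result means "unknown", not "authentic".

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"

# Assumed text keywords written by common Stable Diffusion front ends:
# "parameters" (AUTOMATIC1111 web UI), "prompt" and "workflow" (ComfyUI).
# This is an illustrative list, not an exhaustive registry.
GENERATOR_KEYS = {"parameters", "prompt", "workflow"}

# Assumption: the C2PA specification embeds its manifest store in PNG
# files as a chunk of this type.
C2PA_CHUNK = b"caBX"


def iter_png_chunks(data: bytes):
    """Yield (chunk_type, chunk_body) pairs from raw PNG bytes (CRCs not checked)."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    pos = len(PNG_SIGNATURE)
    while pos + 8 <= len(data):
        length, ctype = struct.unpack(">I4s", data[pos:pos + 8])
        yield ctype, data[pos + 8:pos + 8 + length]
        pos += 12 + length  # 4-byte length + 4-byte type + body + 4-byte CRC
        if ctype == b"IEND":
            break


def audit_png(data: bytes) -> dict:
    """Heuristic metadata audit: generator keywords and C2PA presence."""
    found_keys = set()
    has_c2pa = False
    for ctype, body in iter_png_chunks(data):
        if ctype in (b"tEXt", b"zTXt", b"iTXt"):
            # In all three text chunk types the keyword comes first, NUL-terminated.
            keyword = body.split(b"\x00", 1)[0].decode("latin-1")
            if keyword.lower() in GENERATOR_KEYS:
                found_keys.add(keyword)
        elif ctype == C2PA_CHUNK:
            has_c2pa = True
    return {
        "generator_keys": sorted(found_keys),
        "has_c2pa_manifest": has_c2pa,
        # Only a positive hit is meaningful: many platforms strip or re-encode
        # metadata on upload, so no keys does NOT mean the image is real.
        "flag_as_generated": bool(found_keys),
    }


if __name__ == "__main__":
    import sys

    for path in sys.argv[1:]:
        with open(path, "rb") as f:
            print(path, audit_png(f.read()))
```

Run it as `python audit_png.py suspect.png` (hypothetical file names). In a pipeline, a check like this would sit in front of a pixel-level detector, so that only images with no usable metadata need the more expensive analysis.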

Who should care: Creators & Designers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- •The creator, identified as a 20-year-old medical student from India, used X (formerly Twitter) to build the persona, exploiting engagement-driven recommendation algorithms to target conservative political audiences.

- •Monetization relied on a tiered subscription model on Fanvue or a similar creator-economy site, where "Emily Hart" sold exclusive AI-generated content to paying subscribers.

- •The WIRED investigation revealed that the creator used a combination of Stable Diffusion and custom LoRA (Low-Rank Adaptation) models to maintain consistent facial features across various AI-generated poses and environments.

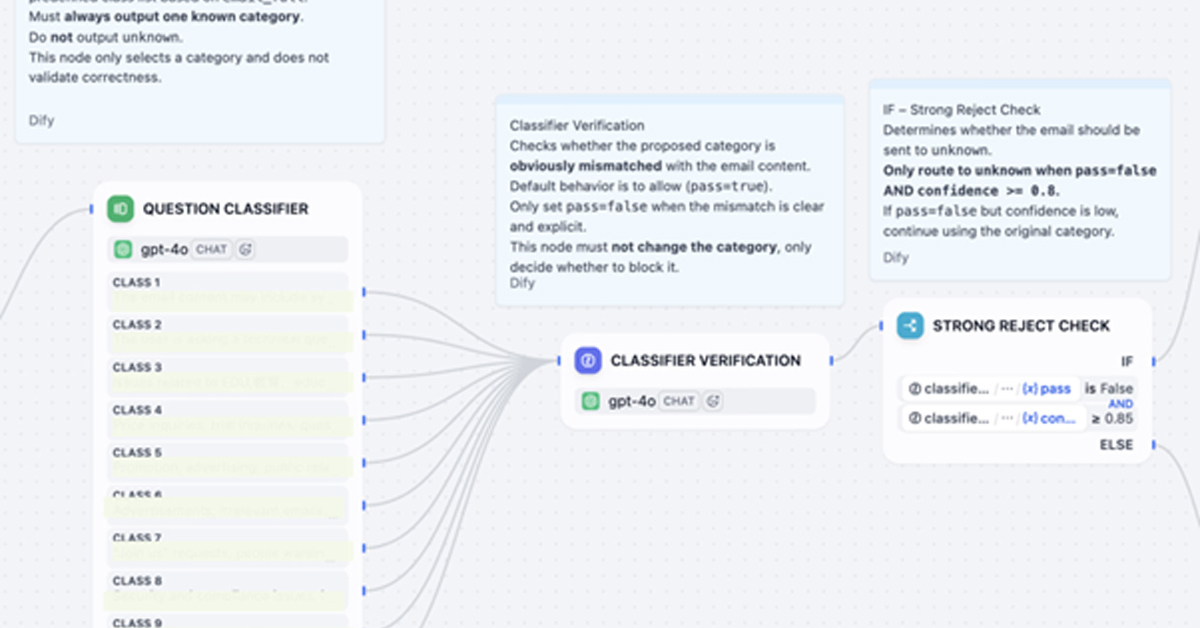

🛠️ Technical Deep Dive

- •Model Architecture: Stable Diffusion XL (SDXL) served as the base model for high-fidelity image generation.

- •Consistency Mechanism: Employed LoRA (Low-Rank Adaptation) fine-tuning on a curated dataset of images to ensure the 'Emily Hart' character maintained a consistent identity across different prompts.

- •Workflow: Automated image generation pipelines were likely integrated with social media scheduling tools to maintain a high frequency of posts, maximizing visibility within the target demographic's feeds.
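The consistency mechanism rests on LoRA's core idea: keep a pretrained weight matrix W frozen and learn a low-rank correction, so the effective weight becomes W + (alpha / r)·BA, where the rank r is much smaller than the layer's dimensions. The pure-Python sketch below shows that update for a single linear layer. It illustrates the general technique only, not the creator's actual training setup; the class and function names, the initialization scale, and the 1280×1280 layer size used below are illustrative assumptions rather than SDXL's real configuration.

```python
import random


def matvec(M, x):
    """Multiply a matrix (list of rows) by a vector (list)."""
    return [sum(m * v for m, v in zip(row, x)) for row in M]


class LoRALinear:
    """A frozen d x k linear layer W plus a trainable low-rank update B @ A.

    Effective weight: W + (alpha / r) * B @ A, with A: r x k and B: d x r.
    Only A and B (r * (d + k) numbers) would be trained; W stays fixed.
    """

    def __init__(self, W, r, alpha, seed=0):
        self.W = W  # frozen base weights, d x k
        d, k = len(W), len(W[0])
        rng = random.Random(seed)
        # Standard LoRA initialization: A small Gaussian, B all zeros, so the
        # adapted layer initially behaves exactly like the base layer.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(k)] for _ in range(r)]
        self.B = [[0.0] * r for _ in range(d)]
        self.scale = alpha / r

    def forward(self, x):
        base = matvec(self.W, x)                     # W x
        delta = matvec(self.B, matvec(self.A, x))    # B (A x), never forms B @ A
        return [b + self.scale * u for b, u in zip(base, delta)]

    def trainable_params(self):
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])


def param_counts(d, k, r):
    """(full fine-tuning, LoRA) trainable-parameter counts for one d x k layer."""
    return d * k, r * (d + k)
```

For a hypothetical 1280×1280 layer, `param_counts(1280, 1280, 8)` returns `(1638400, 20480)`: a rank-8 adapter trains about 1.25% as many numbers as full fine-tuning, which is why a character LoRA can be a small add-on file loaded on top of an unchanged base model.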

🔮 Future Implications

AI analysis grounded in cited sources

Political micro-targeting via AI-generated personas will face increased regulatory scrutiny.

The exploitation of political identity for financial gain through deceptive AI personas creates a new vector for misinformation that platforms are ill-equipped to moderate.

Platform verification systems will shift toward mandatory AI-content labeling.

High-profile hoaxes involving AI influencers are forcing social media companies to implement stricter provenance standards to maintain user trust.

⏳ Timeline

2024-05

Creation of the 'Emily Hart' persona on X.

2024-08

Persona gains significant traction within MAGA-aligned online communities.

2024-11

WIRED publishes the exposé revealing the creator's identity and methods.

AI-curated news aggregator. All content rights belong to original publishers.

Original source: ITmedia AI+ (Japan)