🗾 ITmedia AI+ (Japan)

DI: Key to Transparent AI Decisions

💡 Gartner forecasts that 80% of government agencies will adopt AI agents by 2028, and positions Decision Intelligence (DI) as the fix for black-box decisions.

⚡ 30-Second TL;DR

What Changed

Gartner forecasts that 80% of government agencies will adopt AI agents by 2028.

Why It Matters

DI frameworks will be essential for enterprises deploying AI in regulated sectors like government, pushing demand for transparent tools. It signals a shift from opaque models to accountable AI systems.

What To Do Next

Explore DI tools like those from Gartner-recommended vendors to audit your AI decision pipelines.

Who should care: Enterprise & Security Teams

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- Decision Intelligence (DI) integrates traditional data science with behavioral science and management science to bridge the gap between AI-generated insights and human-centric decision-making processes.

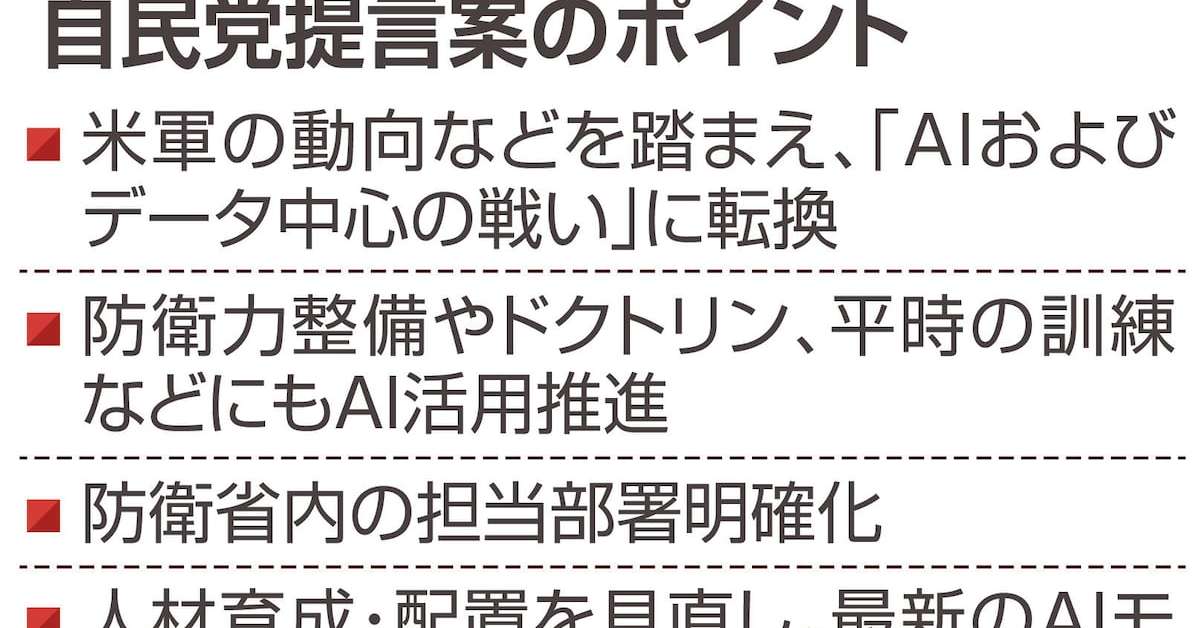

- Regulatory frameworks such as the GDPR and the EU AI Act are driving the adoption of DI: the GDPR requires organizations to provide 'meaningful information about the logic involved' in automated decision-making, and the EU AI Act adds transparency obligations for high-risk AI systems.

- DI platforms are shifting from passive dashboarding to active 'decision modeling' using techniques like causal inference, which allows agencies to simulate the impact of policy changes before implementation.
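The 'decision modeling' idea in the last takeaway can be illustrated with a toy structural causal model. What follows is a minimal sketch, not any vendor's method: the three variables (`funding`, `staffing`, `processing_days`), the graph between them, and every coefficient are invented for illustration. It shows how a simulated intervention (fixing `staffing` by policy) differs from merely observing data, by replacing that variable's equation and re-evaluating everything downstream.

```python
from graphlib import TopologicalSorter

# Hypothetical causal DAG: each node maps to the set of its direct causes.
PARENTS = {
    "funding": set(),
    "staffing": {"funding"},
    "processing_days": {"funding", "staffing"},
}

# Hypothetical structural equations with made-up coefficients.
EQUATIONS = {
    "funding": lambda v: 100.0,
    "staffing": lambda v: 0.5 * v["funding"],
    "processing_days": lambda v: 60.0 - 0.1 * v["funding"] - 0.4 * v["staffing"],
}


def simulate(interventions=None):
    """Evaluate the model in causal order.

    An intervention do(X = x) replaces X's structural equation with the
    constant x, so X stops responding to its usual causes while its
    downstream effects are recomputed.
    """
    interventions = interventions or {}
    values = {}
    # static_order() yields each node only after all of its parents.
    for node in TopologicalSorter(PARENTS).static_order():
        if node in interventions:
            values[node] = interventions[node]
        else:
            values[node] = EQUATIONS[node](values)
    return values


baseline = simulate()
policy = simulate({"staffing": 80.0})  # simulated policy: raise staffing to 80
effect = policy["processing_days"] - baseline["processing_days"]
print(f"Estimated change in processing days: {effect:.1f}")
```

In a real deployment the graph and equations would be estimated from data and reviewed by domain experts; the point here is only that the simulated policy leaves `funding` untouched while changing the outcome that depends on `staffing`.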

📊 Competitor Analysis

| Feature | Decision Intelligence Platforms (e.g., Aera, Pyramid) | Traditional BI/Analytics | Explainable AI (XAI) Toolkits (e.g., SHAP, LIME) |
|---|---|---|---|
| Core Focus | Automated decision execution | Descriptive reporting | Model interpretability |
| Pricing | Enterprise SaaS (High) | Per-user/Per-node (Moderate) | Open Source (Free) |
| Benchmarks | Decision latency/Accuracy | Query performance | Feature importance fidelity |

🛠️ Technical Deep Dive

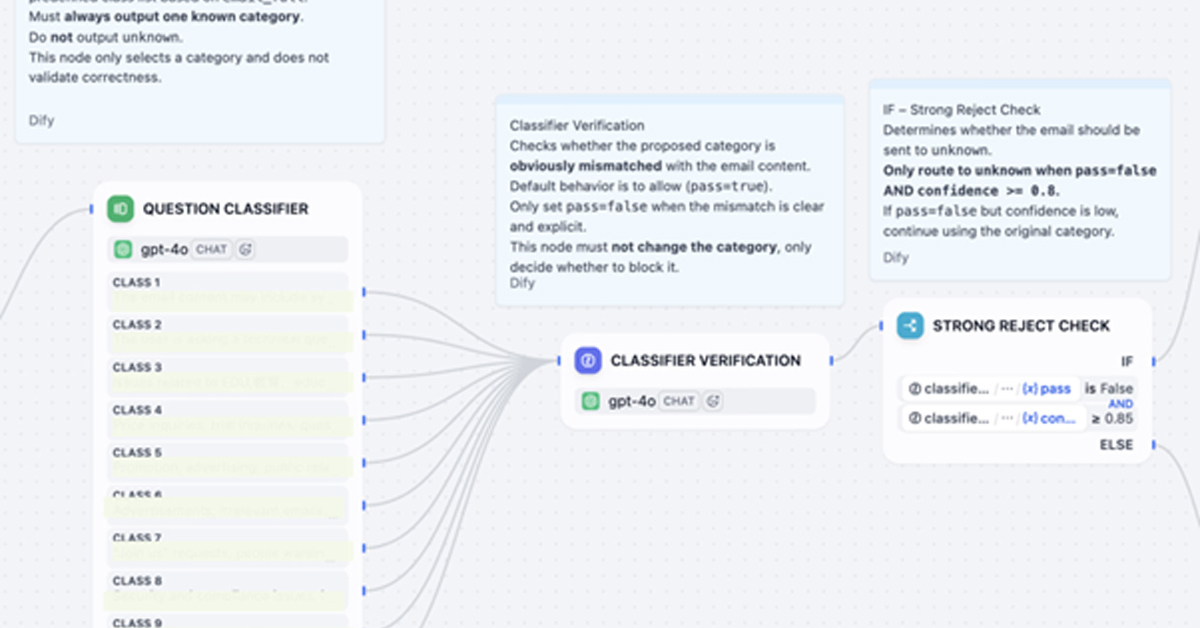

- DI architectures typically use the Decision Model and Notation (DMN) standard to map business logic independently of the underlying machine learning models.

- Implementation often involves Causal AI layers that move beyond correlation to identify the 'why' behind a prediction, using Directed Acyclic Graphs (DAGs) to visualize decision paths.

- Integration with 'Human-in-the-loop' (HITL) workflows requires API-driven feedback mechanisms that let human operators override decisions or adjust weights in real time, with every intervention logged for auditability.
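The separation of business rules from the model, combined with logged human overrides, can be sketched in a few lines. This is a plain-Python stand-in under stated assumptions, not real DMN (which is an XML-based standard with its own expression language): the `Rule` and `DecisionService` classes, the rule thresholds, and the field names are all hypothetical, and `risk_score` is assumed to come from some upstream ML model. The rules are evaluated with a "first match wins" policy, and the sketch covers operator overrides only, not weight adjustment.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Optional


@dataclass(frozen=True)
class Rule:
    rule_id: str
    condition: Callable[[dict], bool]
    outcome: str


# Hypothetical decision table, kept separate from the model that
# produces risk_score. Order matters: the first matching rule wins.
RULES = [
    Rule("R1", lambda c: c["risk_score"] >= 0.8, "reject"),
    Rule("R2", lambda c: c["risk_score"] >= 0.5 or c["amount"] > 10_000, "manual_review"),
    Rule("R3", lambda c: True, "approve"),  # catch-all default
]


@dataclass
class DecisionService:
    rules: list
    audit_log: list = field(default_factory=list)

    def decide(self, case_id: str, case: dict,
               override: Optional[str] = None,
               operator: Optional[str] = None) -> str:
        """Apply the rule table, honor an optional human override, log both."""
        matched = next(rule for rule in self.rules if rule.condition(case))
        final = matched.outcome if override is None else override
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "case_id": case_id,
            "inputs": dict(case),
            "rule_id": matched.rule_id,
            "rule_outcome": matched.outcome,
            "final_outcome": final,
            "overridden": override is not None,
            "operator": operator,
        })
        return final


service = DecisionService(RULES)
automatic = service.decide("case-001", {"risk_score": 0.9, "amount": 500})
reviewed = service.decide("case-002", {"risk_score": 0.6, "amount": 500},
                          override="approve", operator="reviewer-7")
```

Because each log entry records both the rule's outcome and the final outcome, an auditor can later see which decisions a human changed, who changed them, and which rule would otherwise have applied.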

🔮 Future Implications

AI analysis grounded in cited sources

DI will become a mandatory procurement requirement for government AI contracts by 2027.

Increasing regulatory pressure regarding algorithmic accountability will force agencies to prioritize explainable systems over black-box performance.

The market for specialized DI software will grow at a CAGR exceeding 20% through 2028.

The shift from simple data visualization to automated, auditable decision-making is a critical bottleneck for enterprise and government AI scaling.

⏳ Timeline

2020-10

Gartner formally introduces 'Decision Intelligence' as a top strategic technology trend.

2022-05

Increased industry focus on DI as a framework to manage AI-driven decision complexity.

2024-08

Major government agencies begin pilot programs for DI-based policy simulation tools.

Original source: ITmedia AI+ (Japan)