☁️ AWS Machine Learning Blog

Hybrid RAG Search with Bedrock OpenSearch

💡Hybrid RAG tutorial: Boost search accuracy with Bedrock + OpenSearch agents.

⚡ 30-Second TL;DR

What Changed

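A new tutorial shows how to build a generative AI agentic assistant for intelligent search, using hybrid RAG on Amazon Bedrock and Amazon OpenSearch Service.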

Why It Matters

Empowers builders to create more accurate RAG systems by blending search paradigms, improving AI assistant performance in enterprise search apps.

What To Do Next

Build a hybrid RAG prototype using Bedrock AgentCore and OpenSearch Service.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

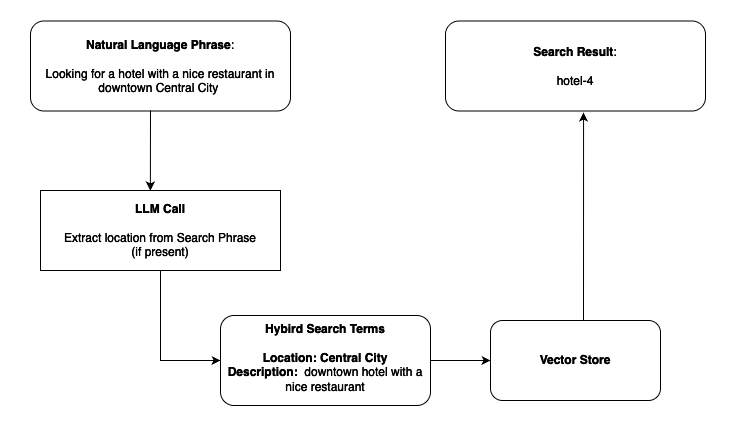

- The integration utilizes Amazon OpenSearch Serverless with the k-NN (k-Nearest Neighbors) plugin to facilitate high-performance vector storage alongside traditional BM25 text search.

- Bedrock AgentCore provides a standardized framework for orchestrating multi-step reasoning, allowing the agent to dynamically decide when to trigger a RAG retrieval versus executing a tool call.

- The 'Strands Agents' architecture specifically addresses the challenge of context window management by implementing a modular memory retrieval system that filters relevant chunks before passing them to the LLM.
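
To make the pairing of BM25 text search and k-NN vector search concrete, here is a minimal sketch of the two request bodies an application might send to OpenSearch. It only builds dictionaries and makes no network call. The field names `content` and `embedding` and the helper names are hypothetical, and the short vector stands in for a real embedding, which would normally come from an embedding model.

```python
def build_bm25_query(text, field="content", size=5):
    """Lexical (BM25) search body: a standard `match` query on a text field."""
    return {"size": size, "query": {"match": {field: {"query": text}}}}


def build_knn_query(vector, field="embedding", k=5):
    """Vector search body: a `knn` query on a knn_vector field."""
    return {
        "size": k,
        "query": {"knn": {field: {"vector": list(vector), "k": k}}},
    }


# Hypothetical inputs: a user question and a placeholder 3-dimensional embedding.
question = "How do I rotate access keys?"
placeholder_embedding = [0.12, -0.03, 0.88]

bm25_body = build_bm25_query(question)
knn_body = build_knn_query(placeholder_embedding)
```

Each body would be sent as its own search request (or wrapped in a hybrid query where the deployment supports one), and the two result lists are then merged by a fusion step.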

📊 Competitor Analysis

| Feature | AWS Hybrid RAG (Bedrock/OpenSearch) | Google Cloud Vertex AI Search | Azure AI Search |
|---|---|---|---|
| Vector Engine | OpenSearch Serverless (k-NN) | Vertex AI Vector Search | Azure AI Search (Vector) |
| Orchestration | Bedrock AgentCore | Vertex AI Agents | Azure AI Search/Semantic Ranker |
| Pricing Model | Consumption-based (Compute/Storage) | Consumption-based (Indexing/Query) | Tiered/Consumption |
| Hybrid Search | Native BM25 + Vector | Native Hybrid Search | Native Hybrid Search |

🛠️ Technical Deep Dive

- Hybrid Search Mechanism: Employs Reciprocal Rank Fusion (RRF) to merge the ranked results of semantic vector search (using Titan Embeddings) and lexical BM25 search. Because RRF works on rank positions rather than raw scores, it avoids having to reconcile the two disparate scoring scales.

- Agentic Workflow: Bedrock AgentCore utilizes a ReAct (Reasoning + Acting) pattern, where the agent generates a thought trace, selects the search tool, and parses the retrieved context into a final response.

- Data Ingestion: Uses Amazon OpenSearch Ingestion pipelines to automate the embedding generation process, ensuring that documents are vectorized upon arrival in the index.

- Memory Management: Strands Agents implement a sliding-window buffer combined with a long-term vector store to maintain conversation state without exceeding model token limits.
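
As an illustration of the fusion step, the sketch below implements Reciprocal Rank Fusion over two ranked lists of document IDs. Each document receives 1 / (k + rank) from every list it appears in, and the contributions are summed. The constant `k = 60` is a commonly used default and an assumption here, as are the function name and the sample document IDs; none of them come from the original tutorial.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into a single ranking.

    Only rank positions are used (starting at 1), so the raw BM25 and
    vector-similarity scores never need to share a scale.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken by document ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical result lists from the lexical and the vector retriever.
bm25_hits = ["doc_a", "doc_b", "doc_c"]
vector_hits = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([bm25_hits, vector_hits])
print(fused)  # ['doc_a', 'doc_c', 'doc_b', 'doc_d']
```

Documents that both retrievers rank highly (`doc_a`, `doc_c`) rise to the top, while documents found by only one retriever still appear further down the list.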

🔮 Future Implications

AI analysis grounded in cited sources

Agentic RAG will shift from static retrieval to autonomous iterative refinement.

The integration of AgentCore suggests a move toward agents that can self-correct their search queries based on initial retrieval failures.

Serverless vector databases will become the default standard for enterprise RAG.

The operational efficiency of OpenSearch Serverless reduces the barrier to entry for complex hybrid search architectures.

⏳ Timeline

2023-04

Amazon Bedrock announced in preview to provide foundation models via API.

2023-11

Amazon OpenSearch Serverless adds vector engine support for generative AI applications.

2023-11

Agents for Amazon Bedrock becomes generally available to automate multi-step tasks using foundation models.

2025-07

Amazon Bedrock AgentCore announced in preview to standardize agentic orchestration patterns.


Original source: AWS Machine Learning Blog