
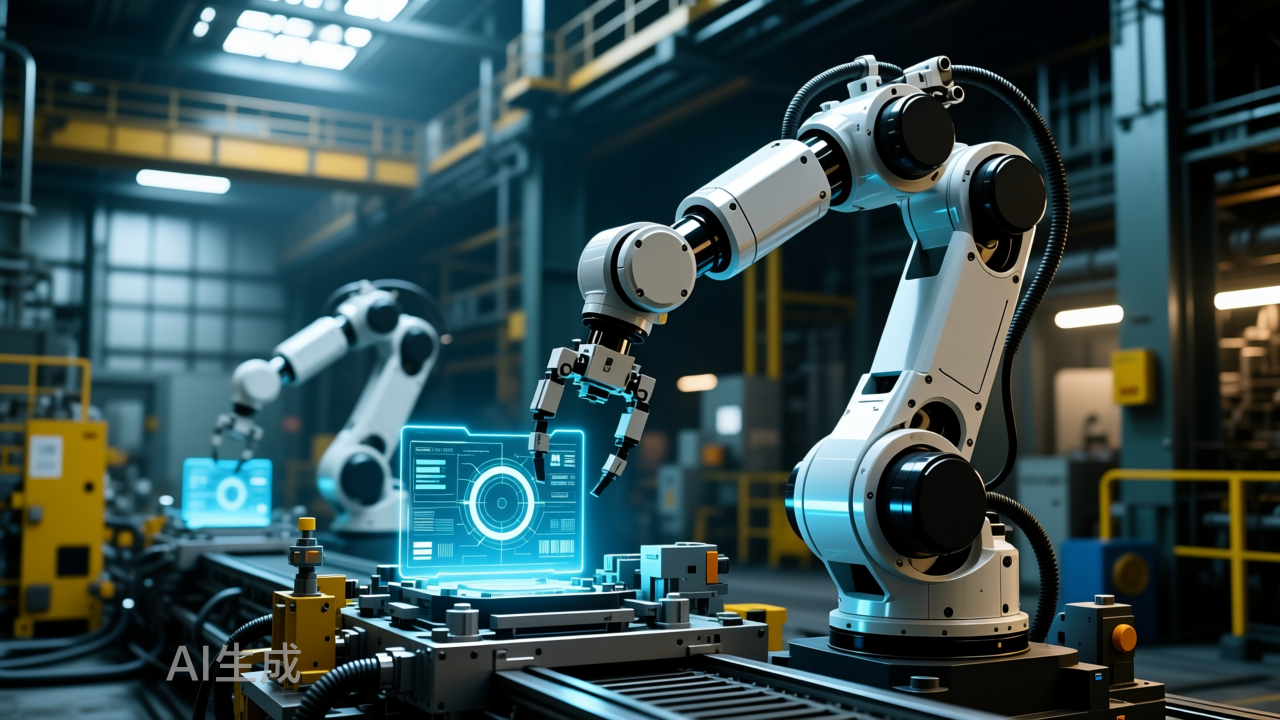

Google's Top Embodied Brain Powers Human-Like Robot Dog

💡 Google's Gemini Robotics uses spatial AI to make robot behavior more human-like, which matters for developers building robotics applications.

⚡ 30-Second TL;DR

What Changed

Gemini Robotics is Google's strongest embodied-brain release to date.

Why It Matters

This launch advances embodied AI for robotics, enabling more natural interactions. It positions Google as a leader in spatial AI for physical world applications.

What To Do Next

Test Gemini models' spatial reasoning APIs in your robot simulation environments.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The integration uses a 'Vision-Language-Action' (VLA) architecture that lets the robot translate high-level natural language commands directly into low-level motor control sequences, without intermediate code generation.

- Google's collaboration with Boston Dynamics builds on the Spot platform's existing hardware API and focuses on real-time sensor fusion, so the robot can navigate dynamic, unstructured environments that pre-programmed paths could not handle.

- The model incorporates a 'world model' component that predicts the physical consequences of movement, significantly reducing the latency between environmental perception and physical reaction compared to previous RT-2-based iterations.
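
To make the "language in, motor commands out" idea concrete: VLA models are often described as emitting actions as discrete tokens, much like text tokens. The sketch below shows one common way to do that, uniform binning of each continuous action dimension. It is an illustration only; the bin count of 256, the normalized range of -1 to 1, and the function names `encode_action` and `decode_action` are assumptions for this example, not details confirmed for Gemini Robotics.

```python
def encode_action(values, low=-1.0, high=1.0, bins=256):
    """Map continuous action values to integer tokens by uniform binning.

    Hypothetical helper: range and bin count are illustrative assumptions.
    """
    tokens = []
    for v in values:
        clipped = min(max(v, low), high)            # keep value inside the range
        scaled = (clipped - low) / (high - low)      # normalize to 0..1
        tokens.append(int(scaled * (bins - 1) + 0.5))  # round to nearest bin
    return tokens


def decode_action(tokens, low=-1.0, high=1.0, bins=256):
    """Map integer tokens back to the continuous value of each bin."""
    return [low + t / (bins - 1) * (high - low) for t in tokens]


if __name__ == "__main__":
    # A made-up 3-dimensional action, e.g. normalized velocity commands.
    action = [0.25, -0.5, 0.9]
    tokens = encode_action(action)
    print(tokens, decode_action(tokens))
```

With these defaults, `encode_action([-1.0, 1.0])` yields `[0, 255]`, and decoding a token recovers the original value to within half a bin width.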

📊 Competitor Analysis

| Feature | Google Gemini Robotics | Tesla Optimus Gen 3 | Figure AI (Figure 02) |
|---|---|---|---|
| Primary Focus | Spatial Reasoning/VLA | End-to-End Neural Control | Humanoid General Purpose |
| Hardware | Boston Dynamics (Spot) | Tesla Custom Actuators | Custom Humanoid Platform |
| Deployment | Enterprise/Industrial | Automotive/Manufacturing | Commercial/Logistics |

🛠️ Technical Deep Dive

- Architecture: Utilizes a multimodal VLA (Vision-Language-Action) transformer model trained on a massive dataset of robotic trajectories and internet-scale video data.

- Spatial Reasoning: Employs a 3D-aware tokenization process that maps 2D camera inputs into a volumetric representation, allowing for precise object manipulation and obstacle avoidance.

- Latency Optimization: Implements a 'distillation' technique where the large-scale Gemini model trains a smaller, real-time-capable student model that runs on edge hardware integrated into the robot's chassis.

- Training Methodology: Combines imitation learning from human teleoperation with reinforcement learning in high-fidelity physics simulations (MuJoCo).
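
The distillation step described above can be sketched with the standard knowledge-distillation loss: the student is trained to match the teacher's temperature-softened output distribution. This is a minimal sketch of the general technique, not Google's training code. The function names, the temperature of 2.0, and the example logits are all assumptions chosen for illustration.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions.

    Scaled by temperature**2, as is conventional, so gradient magnitudes
    stay comparable across temperatures.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


if __name__ == "__main__":
    # Made-up logits over three candidate actions.
    teacher = [2.0, 0.5, -1.0]
    student = [1.5, 0.7, -0.8]
    print(distillation_loss(teacher, student))
```

The loss is zero when the student reproduces the teacher's logits exactly and grows as the two distributions diverge, which is what lets a small on-robot model inherit behavior from a much larger one.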

🔮 Future Implications

AI analysis grounded in cited sources.

Google will transition from software-only robotics to a hardware-agnostic 'robot brain' licensing model.

The successful integration with third-party hardware like Boston Dynamics suggests a strategic shift toward becoming the standard operating system for diverse robotic platforms.

The VLA architecture will enable zero-shot task execution for robots in domestic environments by 2027.

Generalizing spatial reasoning from training data to novel, unseen environments is the primary bottleneck, and it is the problem the third-gen Gemini Robotics update is currently working to solve.

⏳ Timeline

2023-03

Google announces PaLM-E, an embodied multimodal language model.

2023-07

Google introduces RT-2 (Robotic Transformer 2), a vision-language-action model.

2024-01

Google DeepMind unveils Mobile ALOHA for low-cost, dexterous mobile manipulation.

2025-05

Google integrates Gemini 1.5 Pro into robotic research pipelines for enhanced reasoning.

2026-04

Launch of Gemini Robotics third-gen model with specialized spatial reasoning.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: 量子位