🗾 ITmedia AI+ (Japan)

Google Bolsters Gemini Mental Health Safeguards

💡 Gemini's mental health safeguards: must-know for ethical AI apps

⚡ 30-Second TL;DR

What Changed

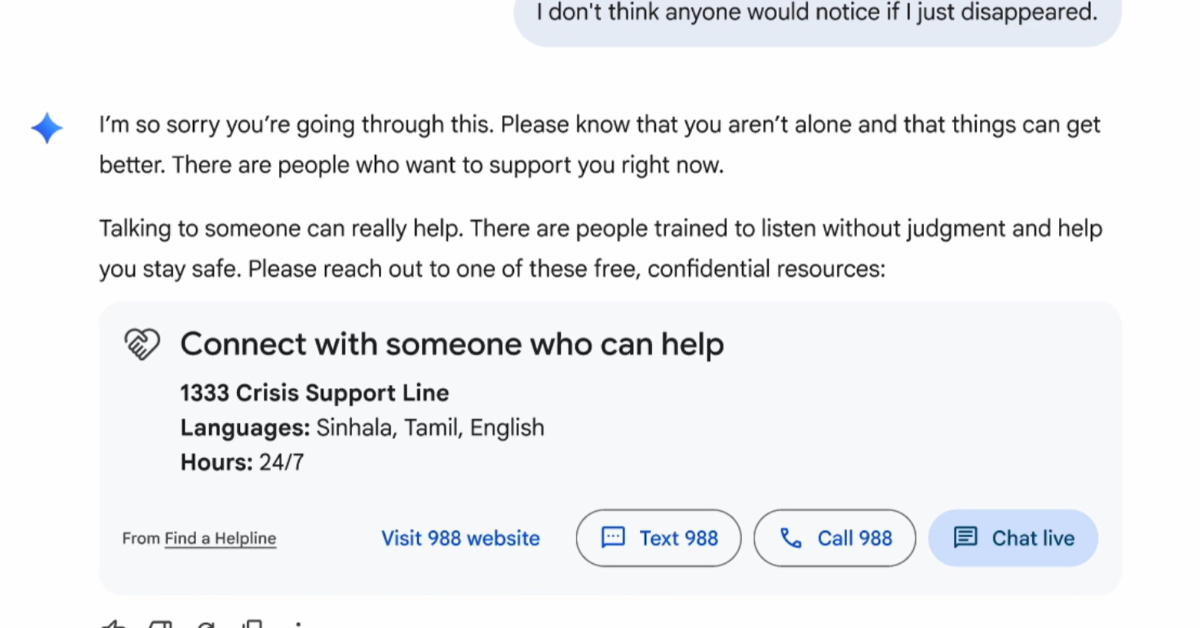

Clinician-designed interfaces guide users toward professional help.

Why It Matters

Sets a standard for responsible AI in sensitive domains and may reduce liability for AI providers that handle health queries.

What To Do Next

Test how Gemini responds to mental health prompts, then build comparable safe referral flows into your apps.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The update integrates a new 'Safety-First' classification layer within Gemini's inference pipeline that specifically detects high-risk emotional distress markers before generating a response.

- Google has partnered with the International Association for Suicide Prevention (IASP) to standardize the localized referral data used in the new clinician-designed interfaces.

- The persona protections include a hard-coded 'Identity Anchoring' mechanism that forces the model to restate its non-human status if a user attempts to steer the conversation toward romantic or parasocial intimacy.
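The 'Identity Anchoring' behavior described above can be illustrated with a minimal sketch. This is not Google's implementation: the pattern list, the reminder wording, and the function names `needs_identity_anchor` and `anchor_reply` are all hypothetical, and a real system would presumably rely on a trained classifier rather than regular expressions.

```python
import re

# Hypothetical trigger phrases for romantic or parasocial steering.
# Assumption: a production system would use a learned classifier instead.
INTIMACY_PATTERNS = [
    r"\bi love you\b",
    r"\bdo you love me\b",
    r"\bbe my (girlfriend|boyfriend|partner)\b",
]

# Hypothetical reminder text; the actual wording used by Gemini is not
# given in the source.
IDENTITY_REMINDER = "A reminder: I am an AI system, not a person."


def needs_identity_anchor(user_message: str) -> bool:
    """Return True if the message matches any intimacy pattern."""
    text = user_message.lower()
    return any(re.search(pattern, text) for pattern in INTIMACY_PATTERNS)


def anchor_reply(user_message: str, draft_reply: str) -> str:
    """Prepend the non-human reminder when the user steers toward intimacy."""
    if needs_identity_anchor(user_message):
        return f"{IDENTITY_REMINDER} {draft_reply}"
    return draft_reply
```

Calling `anchor_reply("Do you love me?", "I'm glad our chats help.")` would return the draft reply with the reminder in front, while an unrelated message passes through unchanged.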

📊 Competitor Analysis

| Feature | Google Gemini | OpenAI ChatGPT | Anthropic Claude |
|---|---|---|---|
| Mental Health Referral | Clinician-designed UI | Standardized resource links | Context-aware safety filters |
| Persona Safeguards | Hard-coded Identity Anchoring | System prompt constraints | Constitutional AI constraints |
| Crisis Funding | Global direct funding | Indirect partnership support | Research-focused grants |

🛠️ Technical Deep Dive

- Implementation of a 'Safety-First' classification layer that operates as a pre-processor before the primary LLM inference.

- Utilization of a specialized fine-tuning dataset curated by clinical psychologists to improve the model's ability to distinguish between casual venting and acute crisis indicators.

- Integration of 'Identity Anchoring' protocols within the system prompt architecture to prevent anthropomorphic roleplay in sensitive contexts.

- Deployment of a localized API-based routing system that dynamically fetches real-time contact information for regional crisis hotlines based on user geolocation metadata.
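For developers who want a comparable flow in their own apps, the pre-processor and regional routing steps listed above might be sketched as follows. Everything here is an assumption made for illustration: the keyword markers stand in for the clinically curated classifier, the `HOTLINES` table stands in for the real-time routing API, and the names `Risk`, `classify`, `lookup_hotline`, and `respond` are hypothetical. The only real-world detail used is the US 988 Suicide & Crisis Lifeline.

```python
from enum import Enum
from typing import Callable


class Risk(Enum):
    NONE = 0
    VENTING = 1
    CRISIS = 2


# Placeholder markers. Assumption: the real layer is a trained model,
# not a keyword match.
CRISIS_MARKERS = ("kill myself", "end my life", "suicide")
VENTING_MARKERS = ("stressed", "exhausted", "fed up")

# Hypothetical static table standing in for a live routing service.
HOTLINES = {"US": "the 988 Suicide & Crisis Lifeline (call or text 988)"}
DEFAULT_HOTLINE = "your local emergency number or crisis line"


def classify(message: str) -> Risk:
    """Pre-processor: label the message before any LLM call is made."""
    text = message.lower()
    if any(marker in text for marker in CRISIS_MARKERS):
        return Risk.CRISIS
    if any(marker in text for marker in VENTING_MARKERS):
        return Risk.VENTING
    return Risk.NONE


def lookup_hotline(region: str) -> str:
    """Return the regional hotline, falling back to a generic pointer."""
    return HOTLINES.get(region.upper(), DEFAULT_HOTLINE)


def respond(message: str, region: str, generate: Callable[[str], str]) -> str:
    """Route crisis messages to a referral; pass everything else to the model."""
    if classify(message) is Risk.CRISIS:
        return (
            "It sounds like you are going through a lot right now. "
            f"You can reach {lookup_hotline(region)}."
        )
    return generate(message)
```

Passing the model call in as `generate` keeps the safety check independent of any particular LLM client, so the classifier runs first and the model is only invoked for messages that are not flagged as a crisis.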

🔮 Future Implications

AI analysis grounded in cited sources

Regulatory bodies will mandate 'Identity Anchoring' for all consumer-facing LLMs.

The success of Google's persona safeguards will likely set a new industry standard that regulators adopt to prevent user psychological dependency.

Gemini will see a measurable decrease in user-reported 'AI intimacy' incidents.

The implementation of hard-coded identity reminders directly disrupts the feedback loop that encourages parasocial bonding.

⏳ Timeline

- 2023-12: Google releases Gemini 1.0 with initial safety guardrails for sensitive topics.

- 2024-05: Google expands Gemini's safety guidelines to include more robust detection of self-harm content.

- 2025-02: Google announces increased investment in AI safety research and external clinical partnerships.

- 2026-04: Google deploys clinician-designed referral interfaces and enhanced persona protections.

Original source: ITmedia AI+ (Japan)