🗾 ITmedia AI+ (Japan) • Fresh • collected in 71m

AI Reliance Erodes Human Grit, Study Warns

💡 Study warns that AI reliance erodes grit; design AIs that sometimes say no

⚡ 30-Second TL;DR

What Changed

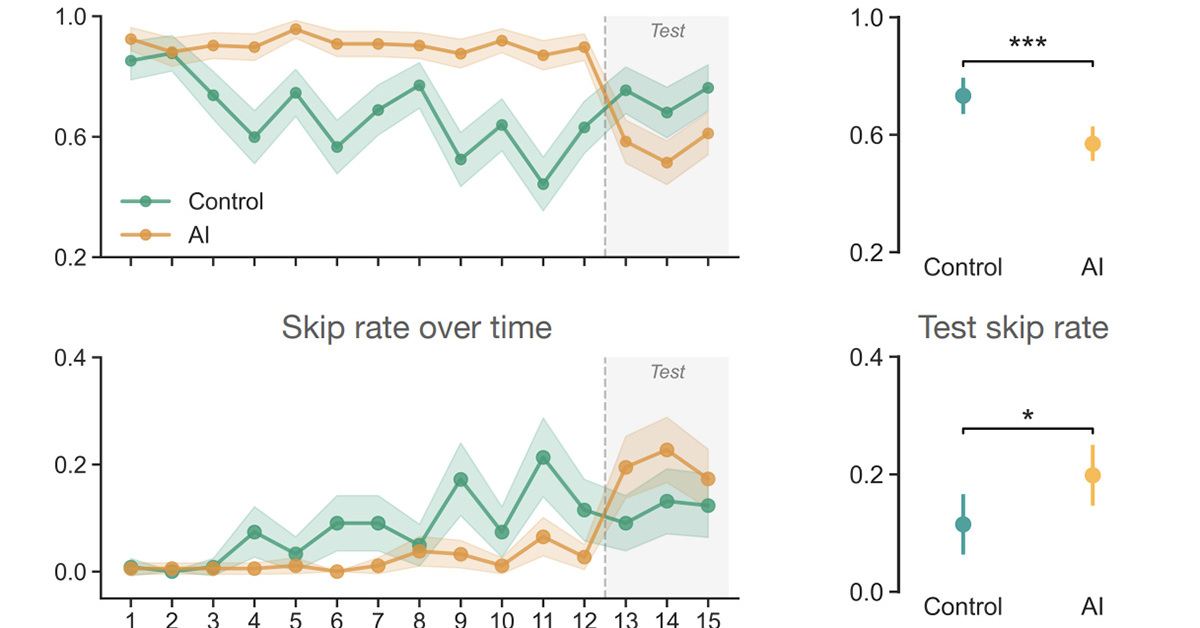

AI use reduces opportunities for self-reliant problem-solving

Why It Matters

The findings support balanced AI integration that preserves cognitive skills in education and work, and they may influence how AI systems are designed to support human development.

What To Do Next

Incorporate weekly no-AI coding challenges to build team perseverance.

Who should care: Researchers & Academics

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- Cognitive offloading to LLMs has been linked to 'cognitive atrophy,' in which users retain less domain-specific knowledge and show reduced critical-thinking capacity over time.

- The 'AI-dependency trap' is exacerbated by feedback loops in which AI models are trained on human-generated content that was itself produced with AI assistance, which can lead to model collapse and reduced human skill diversity.

- Educational psychology frameworks are shifting toward 'AI-augmented scaffolding,' in which systems are designed to withhold direct answers and force 'productive struggle,' a pedagogical method reported to improve long-term consolidation of learning.

🔮 Future Implications

AI analysis grounded in cited sources

Mandatory 'friction' features will become standard in enterprise AI tools.

To mitigate skill degradation, software vendors will implement forced delays or Socratic-style questioning protocols to ensure human cognitive engagement.

Regulatory bodies will mandate 'human-in-the-loop' certification for critical infrastructure.

Governments will require proof that human operators maintain the capability to perform tasks manually without AI assistance to prevent systemic failure during outages.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: ITmedia AI+ (Japan)