💰钛媒体•Freshcollected in 30m

DeepSeek Cheaper as Cloud Prices Surge

💡Cloud prices up despite 80% inference drop—DeepSeek key to cost war

⚡ 30-Second TL;DR

What Changed

AI inference costs fell >80% in 18 months globally

Why It Matters

Rising cloud prices in China could squeeze AI startups' margins despite global cost drops, favoring efficient models like DeepSeek. Practitioners may shift to cost-optimized providers.

What To Do Next

Benchmark DeepSeek inference costs against Alibaba Cloud, Tencent Cloud, and Huawei Cloud for your next deployment.

Who should care:Enterprise & Security Teams

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

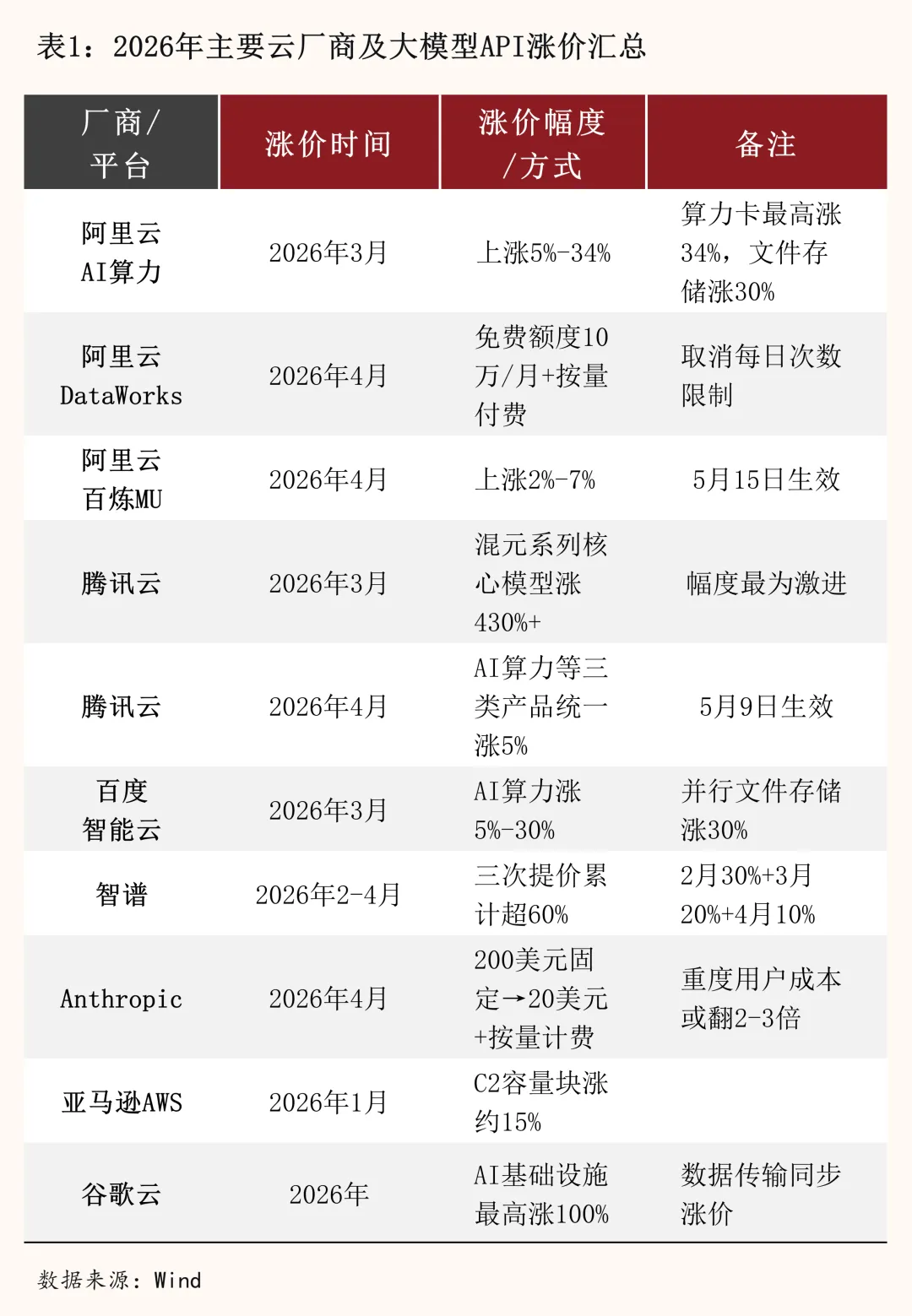

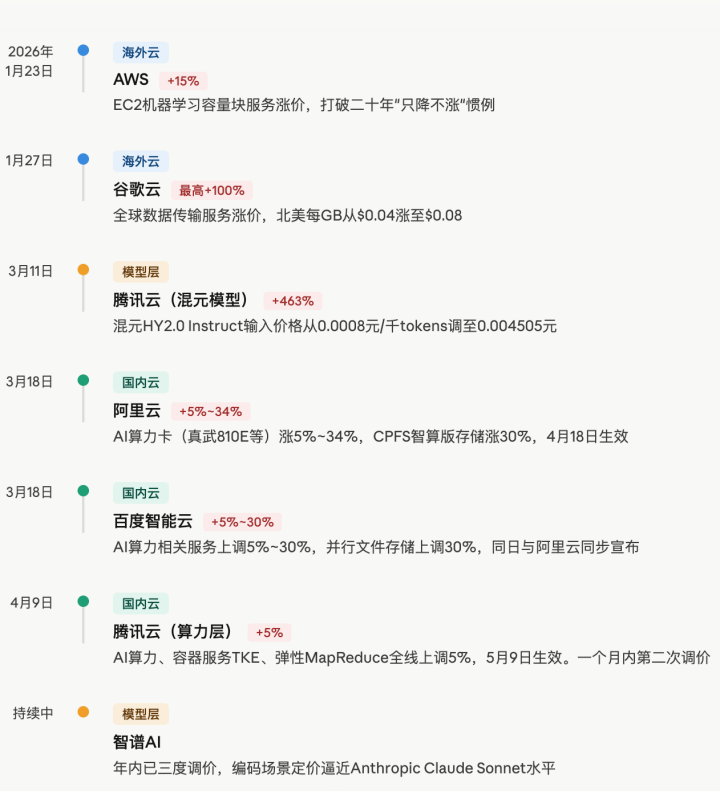

- •The simultaneous price hikes by China's top three cloud providers (Alibaba Cloud, Tencent Cloud, and Huawei Cloud) are primarily attributed to the surging demand for high-end H100/H800 GPU clusters, which has constrained supply and increased operational overhead.

- •DeepSeek's cost advantage is driven by its proprietary 'DeepSeek-MoE' architecture, which utilizes a Mixture-of-Experts approach to significantly reduce the number of active parameters per token, thereby lowering compute requirements compared to dense models.

- •Market analysts suggest the cloud price hikes are a strategic pivot from 'customer acquisition' to 'profitability' as cloud providers face pressure to monetize massive capital expenditures in AI infrastructure.

📊 Competitor Analysis▸ Show

| Feature | DeepSeek (V3/R1) | Alibaba Cloud (Qwen) | Tencent Cloud (Hunyuan) |

|---|---|---|---|

| Architecture | Mixture-of-Experts (MoE) | Dense/MoE Hybrid | Dense/MoE Hybrid |

| Inference Pricing | Highly aggressive/Disruptive | Premium/Enterprise-tier | Premium/Enterprise-tier |

| Benchmark Focus | Reasoning/Coding efficiency | General purpose/Multimodal | Enterprise/Business apps |

🛠️ Technical Deep Dive

- •DeepSeek-MoE architecture: Employs fine-grained expert segmentation, allowing the model to activate only a small fraction of total parameters per token, drastically reducing FLOPs.

- •Multi-token prediction: Utilizes advanced training techniques to improve inference throughput and reduce latency during long-context generation.

- •FP8 Training/Inference: DeepSeek has pioneered widespread adoption of FP8 precision to maximize hardware utilization on NVIDIA H800/A800 clusters, effectively doubling throughput compared to FP16.

🔮 Future ImplicationsAI analysis grounded in cited sources

Cloud providers will shift to tiered pricing models based on model efficiency.

As inference costs diverge based on model architecture, providers must differentiate pricing to maintain margins on less efficient legacy models.

Consolidation of smaller AI model startups will accelerate.

The combination of rising cloud infrastructure costs and the availability of ultra-low-cost inference from models like DeepSeek makes it difficult for startups without proprietary hardware optimization to remain solvent.

⏳ Timeline

2024-01

DeepSeek releases DeepSeek-LLM, marking its entry into high-performance open-weights models.

2024-05

DeepSeek introduces DeepSeek-V2, featuring the innovative DeepSeek-MoE architecture.

2024-12

DeepSeek-V3 launch, demonstrating significant cost-per-token reduction via optimized FP8 training.

2025-01

DeepSeek-R1 released, focusing on reasoning capabilities while maintaining low inference costs.

📰

Weekly AI Recap

Read this week's curated digest of top AI events →

👉Related Updates

AI-curated news aggregator. All content rights belong to original publishers.

Original source: 钛媒体 ↗