💰 钛媒体 • Recent • collected in 32m

AI Compute Hunger Drives Costs Skyward

💡 Surging compute costs threaten AI profitability: who pays for compute, and who controls it?

⚡ 30-Second TL;DR

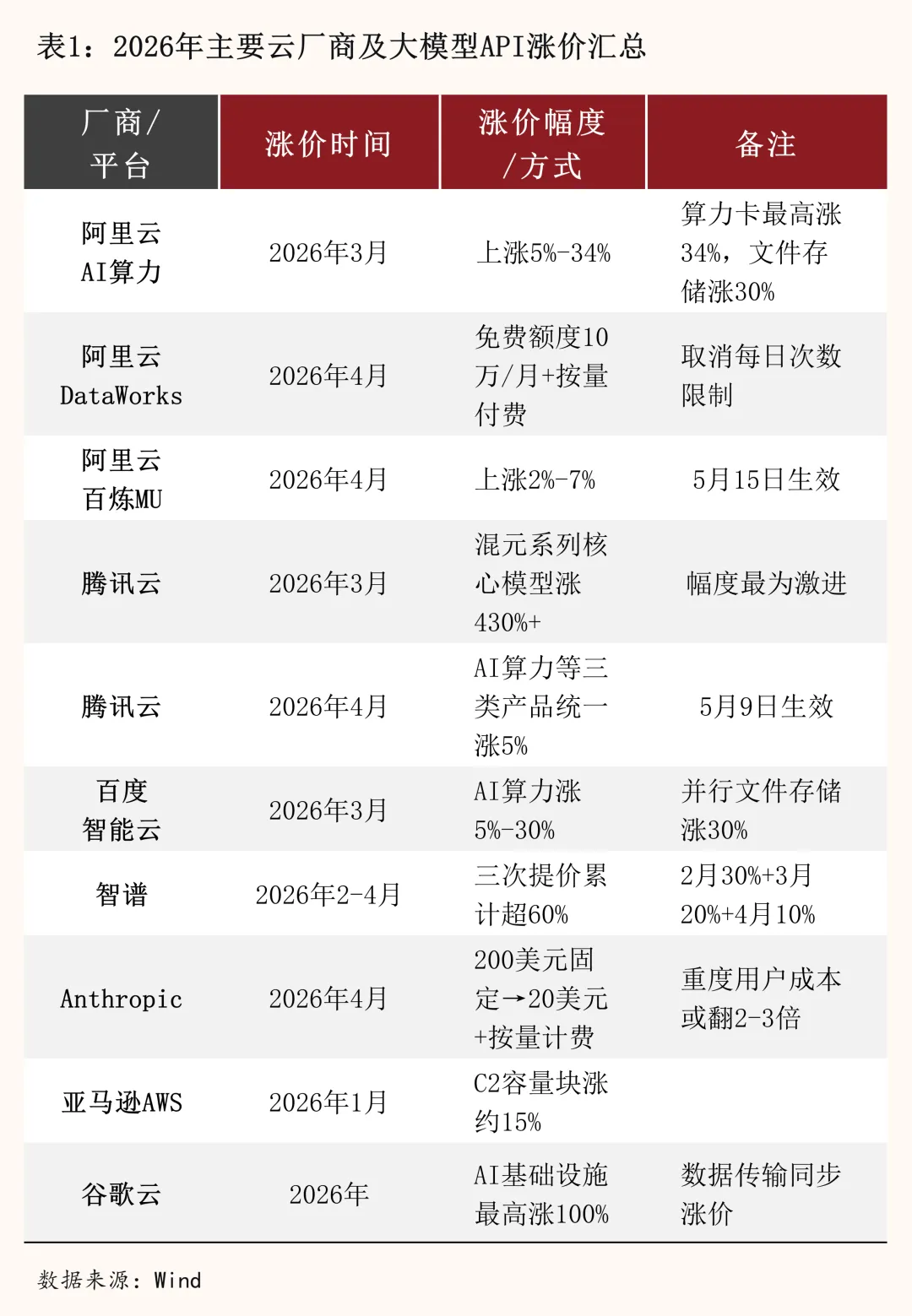

What Changed

AI usage costs have reversed course: after a period of decline, they are rising again.

Why It Matters

Raises the barrier to entry for AI startups and shifts value capture toward compute giants like Nvidia.

What To Do Next

Benchmark your model's inference costs on AWS Inferentia vs GPUs now.
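One way to frame that benchmark is cost per million tokens: the hourly instance price divided by measured throughput. The sketch below is a minimal illustration only. The `Instance` class, the instance names, the prices, and the throughput figures are all hypothetical placeholders, not real AWS Inferentia or GPU numbers; substitute your own measurements and current pricing.

```python
from dataclasses import dataclass


@dataclass
class Instance:
    """A candidate inference target. All values are hypothetical placeholders."""
    name: str
    hourly_usd: float         # price per instance-hour (fill in from your provider)
    tokens_per_second: float  # sustained throughput you measured for your model


def cost_per_million_tokens(inst: Instance) -> float:
    """Return USD per one million generated tokens at full utilization."""
    tokens_per_hour = inst.tokens_per_second * 3600
    return inst.hourly_usd / tokens_per_hour * 1_000_000


# Placeholder candidates; neither corresponds to a real instance type.
candidates = [
    Instance("accelerator-a", hourly_usd=2.0, tokens_per_second=1000.0),
    Instance("accelerator-b", hourly_usd=4.0, tokens_per_second=2500.0),
]

# Rank candidates from cheapest to most expensive per token.
ranked = sorted(candidates, key=cost_per_million_tokens)
for inst in ranked:
    print(f"{inst.name}: ${cost_per_million_tokens(inst):.3f} per 1M tokens")
```

The calculation assumes full utilization. Real deployments usually run below that, so the effective cost per token will be higher than this figure suggests.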

Who should care: Enterprise & Security Teams

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The surge in AI compute costs is increasingly driven by the 'inference wall,' where the energy and hardware requirements for deploying large-scale models are outpacing the efficiency gains from algorithmic optimizations.

- Data center power constraints have become a primary bottleneck, forcing major AI players to invest directly in nuclear energy and grid infrastructure to secure the necessary baseload power for massive GPU clusters.

- The shift toward 'sovereign AI' initiatives is creating a bifurcated market where national governments are subsidizing domestic compute infrastructure to reduce reliance on global cloud providers, further fragmenting the cost landscape.

🔮 Future Implications

AI analysis grounded in cited sources

Cloud providers will transition to 'compute-as-a-utility' pricing models.

Escalating energy and hardware costs will force providers to move away from flat-rate subscriptions toward dynamic pricing based on real-time energy spot prices and hardware scarcity.

Small-to-medium enterprises will shift focus to Small Language Models (SLMs).

The prohibitive cost of training and running frontier-scale models will make specialized, efficient SLMs the only economically viable path for most businesses.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: 钛媒体 ↗