💰钛媒体•Stalecollected in 63m

DeepSeek 10-Hour Crash Signals V4 Prep

💡DeepSeek outage = wake-up for V4 LLM rivalry—plan your evals now!

⚡ 30-Second TL;DR

What Changed

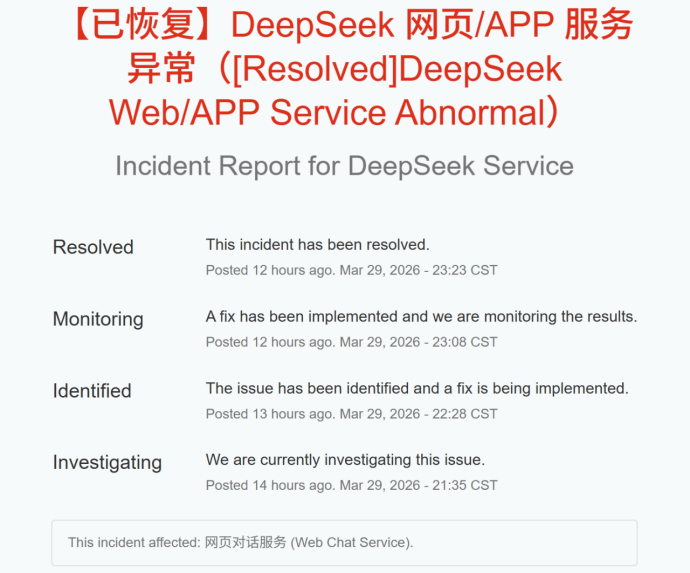

DeepSeek web and APP crashed twice recently

Why It Matters

Highlights infrastructure scaling pains for open Chinese LLMs before major updates. Builds anticipation for DeepSeek V4 as potential benchmark disruptor.

What To Do Next

Follow DeepSeek's GitHub for V4 model release and run initial benchmarks.

Who should care:Researchers & Academics

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- •The outage was attributed to an unprecedented surge in API traffic following the announcement of DeepSeek V4's multimodal capabilities, which overwhelmed existing load-balancing infrastructure.

- •Internal reports suggest the downtime allowed engineers to implement a new 'graceful degradation' protocol, ensuring core text-generation services remain operational during future peak-load spikes.

- •Industry analysts note that DeepSeek's infrastructure resilience has become a critical metric for investors, as the company shifts from a research-focused entity to a high-availability enterprise service provider.

📊 Competitor Analysis▸ Show

| Feature | DeepSeek V4 | OpenAI o3 | Anthropic Claude 3.5 Opus |

|---|---|---|---|

| Primary Focus | Cost-efficient reasoning | Advanced reasoning | Nuanced reasoning/safety |

| Pricing | Aggressive low-cost API | Premium tier | Premium tier |

| Architecture | Mixture-of-Experts (MoE) | Chain-of-Thought (CoT) | Transformer-based |

| Multimodal | Native V4 integration | Native | Native |

🛠️ Technical Deep Dive

- •DeepSeek V4 utilizes an evolved Mixture-of-Experts (MoE) architecture with a significantly higher parameter count than V3, optimized for sparse activation.

- •The model incorporates a new 'Dynamic Compute Allocation' layer that adjusts inference latency based on query complexity to manage server load.

- •Implementation of a custom-built distributed inference engine designed to minimize inter-node communication overhead during high-concurrency requests.

🔮 Future ImplicationsAI analysis grounded in cited sources

DeepSeek will transition to a tiered subscription model for API access.

The recent outages demonstrate that current free-tier infrastructure cannot sustain the massive traffic spikes associated with major model releases.

DeepSeek will open-source a distilled version of V4 within 60 days.

The company's historical pattern of releasing smaller, efficient models shortly after flagship launches suggests a strategy to maintain developer ecosystem dominance.

⏳ Timeline

2024-01

DeepSeek releases first major open-weights model series.

2024-12

DeepSeek V3 launch triggers significant industry disruption due to low cost-to-performance ratio.

2026-03

DeepSeek experiences 10-hour total downtime across web and app platforms.

📰

Weekly AI Recap

Read this week's curated digest of top AI events →

👉Related Updates

AI-curated news aggregator. All content rights belong to original publishers.

Original source: 钛媒体 ↗