📋TestingCatalog•Stalecollected in 22h

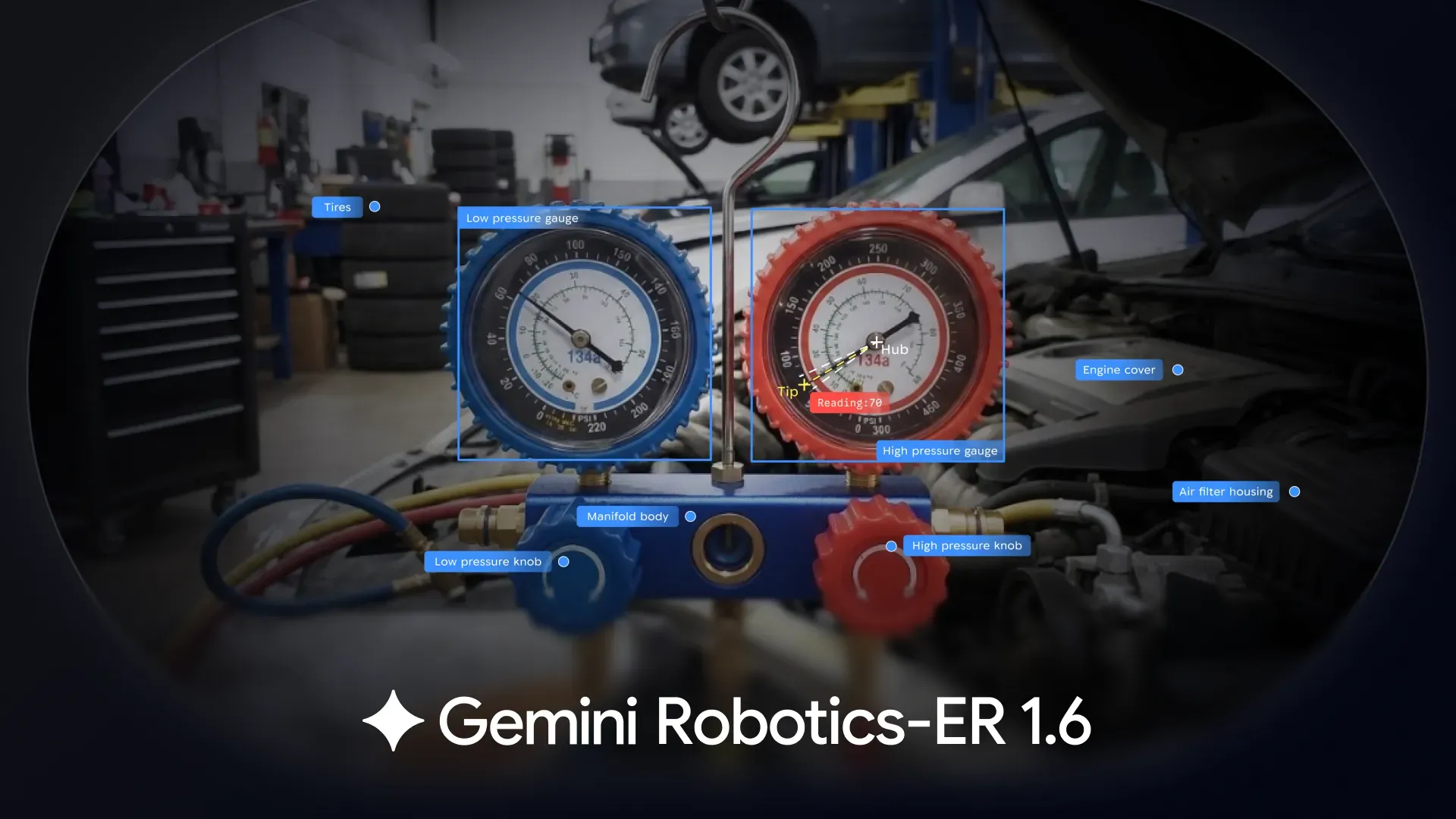

DeepMind Releases Gemini Robotics-ER 1.6 Reasoning Model

💡Robotics model reads gauges & plans tasks via API – essential for embodied AI builders

⚡ 30-Second TL;DR

What Changed

Spatial and physical sense for robots

Why It Matters

Pushes embodied AI forward, allowing robots to handle complex real-world tasks more autonomously.

What To Do Next

Access Gemini Robotics-ER 1.6 in Google AI Studio to prototype robot task planning.

Who should care:Researchers & Academics

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- •Gemini Robotics-ER 1.6 utilizes a multimodal architecture specifically fine-tuned on a proprietary dataset of physical world interactions, distinguishing it from general-purpose LLMs that lack embodied grounding.

- •The model incorporates a novel 'spatial-temporal reasoning layer' that allows robots to predict the physical state of objects over time, reducing the latency typically associated with external vision-language processing.

- •Deployment is optimized for edge-computing environments, allowing the model to run on local robot controllers to ensure operational continuity even in scenarios with intermittent cloud connectivity.

📊 Competitor Analysis▸ Show

| Feature | Gemini Robotics-ER 1.6 | OpenAI/Figure Robotics | Tesla Optimus |

|---|---|---|---|

| Primary Focus | Reasoning & Analog Sensing | General Purpose Humanoid | Manufacturing & Labor |

| API Access | Google AI Studio | Closed/Partnership | Proprietary |

| Spatial Awareness | High (Native) | High (Vision-based) | Moderate (Vision-based) |

| Pricing | Usage-based (API) | N/A (Integrated) | N/A (Internal) |

🛠️ Technical Deep Dive

- Architecture: Employs a transformer-based backbone with a specialized 'Embodied-Token' layer that maps visual sensor data directly to motor control primitives.

- Input Modalities: Native support for RGB-D camera streams, tactile sensor feedback, and legacy analog gauge telemetry.

- Latency: Optimized for sub-100ms inference on NVIDIA Jetson Orin modules.

- Integration: Exposes a RESTful API for high-level task planning while maintaining a low-level ROS 2 (Robot Operating System) bridge for real-time execution.

🔮 Future ImplicationsAI analysis grounded in cited sources

Standardization of analog-to-digital robotic interfaces.

The ability to read legacy analog instruments natively will accelerate the retrofitting of older industrial machinery with modern AI-driven automation.

Shift toward edge-first robotic reasoning.

By enabling local execution of complex reasoning, Google is setting a precedent that reduces reliance on cloud-based inference for safety-critical robotic tasks.

⏳ Timeline

2023-12

Google DeepMind announces RT-2, a vision-language-action model.

2024-02

Release of Gemini 1.5 Pro with long-context capabilities.

2025-06

Google introduces initial Robotics-ER research framework.

2026-04

Official release of Gemini Robotics-ER 1.6.

📰

Weekly AI Recap

Read this week's curated digest of top AI events →

👉Related Updates

AI-curated news aggregator. All content rights belong to original publishers.

Original source: TestingCatalog ↗