📋 TestingCatalog

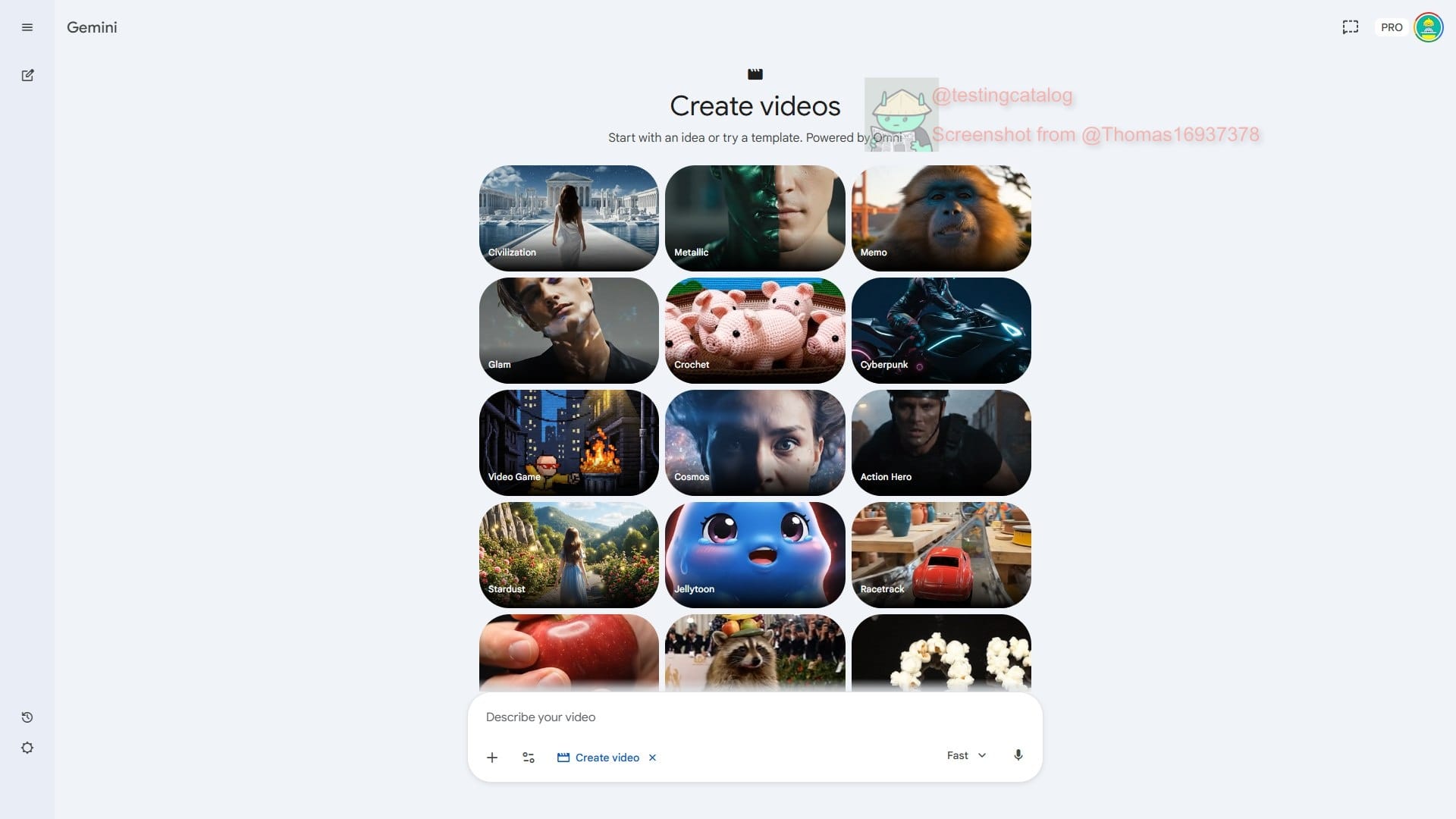

Google Tests Omni Video Model for I/O

💡 Google's leaked Omni model points to a video generation rivalry with Sora ahead of I/O.

⚡ 30-Second TL;DR

What Changed

Google is reportedly testing a model called Omni for video generation.

Why It Matters

Omni could rival Sora in video AI and strengthen Google's multimodal offerings. AI creators would gain a potential new tool for dynamic content generation, and competition in video synthesis would intensify.

What To Do Next

Monitor Google I/O 2026 for Omni model demo and API previews.

Who should care: Researchers & Academics

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The 'Omni' model architecture is reportedly built on a native multimodal foundation, allowing it to process and generate video, audio, and text simultaneously without separate transcoding layers.

- Internal testing suggests the model utilizes a 'temporal consistency' layer designed to reduce the flickering artifacts common in earlier video generation models like Veo.

- Google's integration strategy involves embedding Omni directly into the Gemini Advanced workspace, enabling users to generate video clips directly from prompts within Google Docs and Slides.

📊 Competitor Analysis

| Feature | Google Omni | OpenAI Sora | Runway Gen-3 Alpha |
|---|---|---|---|
| Architecture | Native Multimodal | Diffusion Transformer | Latent Diffusion |
| Max Resolution | 4K (Reported) | 1080p | 4K |
| Pricing | Gemini Advanced Tier | Usage-based | Subscription/Credits |

🛠️ Technical Deep Dive

- Architecture: Employs a unified Transformer-based architecture that treats video frames as a continuous stream of tokens, rather than discrete image sequences.

- Latency Optimization: Utilizes speculative decoding to accelerate inference times for real-time video generation previews.

- Training Data: Leverages a proprietary dataset of high-fidelity, long-form video content combined with synthetic data generated by previous iterations of Google's video models.

- Context Window: Supports extended temporal context, allowing for consistent character and environment retention over 60-second generation sequences.
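
To make the speculative decoding point concrete, here is a minimal sketch of the general technique in its greedy form. It is not Google's implementation, and nothing about Omni's internals is confirmed. The two toy "models" (`target_next`, `draft_next`), the integer token vocabulary, and the draft length `k` are all hypothetical stand-ins. The idea is that a cheap draft model proposes several tokens, the expensive target model checks them, and only the agreeing prefix is kept, so the final output matches what the target model alone would have produced.

```python
from typing import Callable, List, Tuple

NextToken = Callable[[List[int]], int]

VOCAB = 50  # hypothetical vocabulary size for the toy models


def target_next(prefix: List[int]) -> int:
    """Hypothetical expensive model: deterministic greedy next token."""
    return (sum(prefix) * 31 + len(prefix)) % VOCAB


def draft_next(prefix: List[int]) -> int:
    """Hypothetical cheap model: agrees with the target except when the
    prefix length is a multiple of 4, where it guesses wrong."""
    guess = target_next(prefix)
    return (guess + 1) % VOCAB if len(prefix) % 4 == 0 else guess


def greedy_decode(model: NextToken, prompt: List[int], n_new: int) -> List[int]:
    """Baseline: one model call per generated token."""
    seq = list(prompt)
    for _ in range(n_new):
        seq.append(model(seq))
    return seq


def speculative_decode(
    target: NextToken, draft: NextToken, prompt: List[int], n_new: int, k: int = 4
) -> Tuple[List[int], int]:
    """Greedy speculative decoding. Returns the sequence and the number of
    verification rounds (each round would be one batched target pass in a
    real system; here it is simulated with sequential calls)."""
    seq = list(prompt)
    goal = len(prompt) + n_new
    rounds = 0
    while len(seq) < goal:
        # 1. The draft model proposes k tokens autoregressively.
        proposal: List[int] = []
        for _ in range(k):
            proposal.append(draft(seq + proposal))

        # 2. The target model verifies the proposal position by position.
        rounds += 1
        accepted: List[int] = []
        for tok in proposal:
            expected = target(seq + accepted)
            if tok == expected:
                accepted.append(tok)       # draft token confirmed
            else:
                accepted.append(expected)  # replace first mismatch, stop
                break
        else:
            # Every draft token matched: the target adds one bonus token.
            accepted.append(target(seq + accepted))

        seq.extend(accepted)
    return seq[:goal], rounds


if __name__ == "__main__":
    prompt = [1, 2, 3]
    baseline = greedy_decode(target_next, prompt, 12)
    spec, rounds = speculative_decode(target_next, draft_next, prompt, 12)
    print("identical output:", spec == baseline)
    print("verification rounds:", rounds, "vs. 12 baseline target calls")
```

Each round always yields at least one token, so the loop cannot stall even when the draft is wrong at the first position. The speedup in practice depends on how often the draft agrees with the target, which for video tokens is an open question that the report does not address.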

🔮 Future Implications

AI analysis grounded in cited sources

Google will integrate Omni into YouTube Studio for automated B-roll generation.

The model's ability to maintain temporal consistency makes it highly suitable for assisting creators in generating supplementary footage for existing video content.

Omni will trigger a shift in enterprise AI pricing models toward per-second video generation costs.

The high computational demand of native multimodal video generation necessitates a move away from flat-rate subscriptions to usage-based billing.

⏳ Timeline

2024-05

Google announces Veo, its first high-definition generative video model.

2025-02

Google releases Gemini 2.0, introducing enhanced multimodal reasoning capabilities.

2026-03

Initial internal alpha testing of the Omni model architecture begins.

Original source: TestingCatalog ↗