🕸️ LangChain Blog

Continual Learning Layers for AI Agents

💡 Agents can keep learning at three layers: the model weights, plus the harness and the context around them.

⚡ 30-Second TL;DR

What Changed

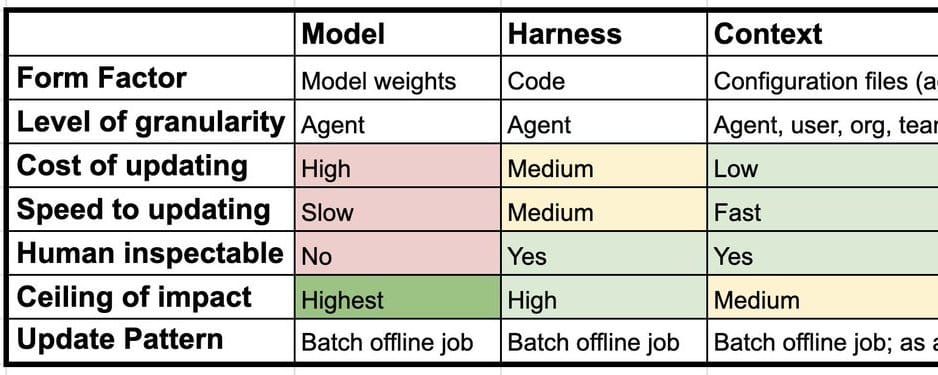

AI agent learning spans model, harness, and context layers

Why It Matters

Enables more robust AI agents that adapt continuously without full retraining, potentially reducing costs and improving performance in dynamic environments.

What To Do Next

Experiment with continual learning in LangChain agents by updating the context and harness layers rather than retraining the model.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The 'harness' layer refers to the agent's orchestration logic, including memory management, tool-use strategies, and planning loops, which can be optimized independently of the underlying LLM weights.

- Context-layer learning uses dynamic RAG (Retrieval-Augmented Generation) and episodic memory stores so agents can adapt to new domains without expensive fine-tuning and without the risk of catastrophic forgetting.

- This layered architecture enables 'modular evolution': developers can upgrade the agent's reasoning harness or knowledge base independently of the model, which can cut the time and cost of shipping system updates.
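The 'modular evolution' idea can be made concrete with a minimal sketch, assuming each layer is an immutable, separately versioned config object. `AgentConfig`, `learn_lesson`, and `tune_harness` are hypothetical names, not a LangChain API, and `"base-model"` is a placeholder; the point is only that a context or harness update yields a new agent version while the model layer stays pinned.

```python
from __future__ import annotations

from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ModelLayer:
    model_id: str  # placeholder name for a pinned set of weights (hypothetical)


@dataclass(frozen=True)
class HarnessLayer:
    version: int
    max_steps: int  # one example orchestration knob (hypothetical)


@dataclass(frozen=True)
class ContextLayer:
    version: int
    instructions: tuple[str, ...]  # learned guidance injected into prompts


@dataclass(frozen=True)
class AgentConfig:
    model: ModelLayer
    harness: HarnessLayer
    context: ContextLayer


def learn_lesson(cfg: AgentConfig, lesson: str) -> AgentConfig:
    """Context-layer update: append a learned instruction and bump only the context version."""
    ctx = cfg.context
    new_ctx = ContextLayer(ctx.version + 1, ctx.instructions + (lesson,))
    return replace(cfg, context=new_ctx)


def tune_harness(cfg: AgentConfig, max_steps: int) -> AgentConfig:
    """Harness-layer update: change orchestration settings, leaving model and context as-is."""
    h = cfg.harness
    return replace(cfg, harness=replace(h, version=h.version + 1, max_steps=max_steps))


if __name__ == "__main__":
    v1 = AgentConfig(ModelLayer("base-model"), HarnessLayer(1, 8), ContextLayer(1, ("Be concise.",)))
    v2 = learn_lesson(v1, "Check the order date before issuing a refund.")
    v3 = tune_harness(v2, max_steps=12)
    print(v3.model == v1.model, v3.harness.version, v3.context.version)  # prints: True 2 2
```

Because the dataclasses are frozen, every update returns a new version and leaves the old one intact, so a bad context or harness change can be rolled back simply by keeping the previous object.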

🛠️ Technical Deep Dive

- Model Layer: Focuses on weight-based adaptation (e.g., LoRA and QLoRA fine-tuning) for domain-specific reasoning.

- Harness Layer: State-machine or graph-based orchestration (following patterns such as ReAct or Plan-and-Solve) whose decision-making heuristics are updated from successful and failed execution traces.

- Context Layer: Vector databases and long-term memory buffers that ingest real-time feedback to refine retrieval relevance and keep the agent's persona consistent.

🔮 Future Implications

AI analysis grounded in cited sources

Agentic systems will shift from static deployments to self-optimizing pipelines.

By decoupling learning into three layers, agents can autonomously update their context and harness logic without needing full model retraining.
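One way to picture an agent updating its harness logic on its own is a strategy router that learns from logged execution traces, echoing the success/failure traces described for the harness layer. This is a hypothetical sketch, assuming two fixed strategies named after the ReAct and Plan-and-Solve patterns and a Laplace-smoothed success rate per task type; `AdaptiveRouter` and `Trace` are illustrative names, not a LangGraph API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Trace:
    task_type: str
    strategy: str
    succeeded: bool


@dataclass
class AdaptiveRouter:
    """Hypothetical harness component that picks an orchestration strategy per task type."""

    strategies: tuple[str, ...] = ("react", "plan_and_solve")
    # (task_type, strategy) -> [successes, failures]
    stats: dict[tuple[str, str], list[int]] = field(default_factory=dict)

    def success_rate(self, task_type: str, strategy: str) -> float:
        wins, losses = self.stats.get((task_type, strategy), [0, 0])
        # Laplace smoothing: an untried strategy starts at 0.5 rather than 0.
        return (wins + 1) / (wins + losses + 2)

    def choose(self, task_type: str) -> str:
        # Greedy choice; max() keeps the earlier strategy on ties.
        return max(self.strategies, key=lambda s: self.success_rate(task_type, s))

    def learn(self, traces: list[Trace]) -> None:
        """Fold logged outcomes into the routing statistics; model weights are never touched."""
        for t in traces:
            counts = self.stats.setdefault((t.task_type, t.strategy), [0, 0])
            counts[0 if t.succeeded else 1] += 1


if __name__ == "__main__":
    router = AdaptiveRouter()
    print(router.choose("research"))  # no data yet: tie, so "react"
    router.learn([
        Trace("research", "react", False),
        Trace("research", "react", False),
        Trace("research", "plan_and_solve", True),
    ])
    print(router.choose("research"))  # now "plan_and_solve"
```

The greedy `choose` never revisits a strategy once it falls behind; a real system would likely add exploration, for example Thompson sampling over the same success/failure counts.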

Catastrophic forgetting will become a secondary concern for enterprise AI.

Moving the primary learning burden to the context and harness layers isolates the core model from frequent, potentially destabilizing weight updates.

⏳ Timeline

2022-10

Initial open-source release of the LangChain library, establishing the foundation for agentic orchestration.

2024-01

Introduction of LangGraph, enabling more complex, stateful agentic workflows.

2025-02

LangChain introduces advanced memory and persistence modules for long-running agents.

AI-curated news aggregator. All content rights belong to original publishers.

Original source: LangChain Blog