Anthropic Exposes Chinese Labs' Distillation of Claude

💡 Chinese labs distilled Claude at scale: learn the evasion tactics they used and the defenses available for your own APIs

⚡ 30-Second TL;DR

What Changed

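DeepSeek: more than 150,000 exchanges targeting reasoning capabilities, rubric-based grading that used Claude as a reinforcement-learning reward model, and censorship-safe paraphrases of politically sensitive queries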

Why It Matters

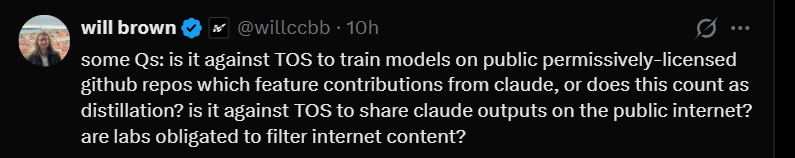

The campaigns show how exposed model APIs are to distillation and may prompt tighter rate limits and geographic blocks on frontier models. They also sharpen the debate over the ethics of distillation versus common industry practice.

What To Do Next

Audit your API traffic for distillation patterns, using request metadata signals such as IP clustering and repeated rubric-style prompts.
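A minimal sketch of such an audit follows. It is not Anthropic's detection method; it assumes a hypothetical log format (dicts with `account`, `ip`, and `prompt` keys) and illustrative thresholds. `prompt_template` collapses quoted payloads and numbers so rubric-style prompts that differ only in their content map to one key, and `subnet_clusters` groups accounts by the IPv4 /24 network their requests come from. All function names here are hypothetical.

```python
import ipaddress
import re
from collections import defaultdict

# Hypothetical log record shape: {"account": str, "ip": str, "prompt": str}.
# Thresholds below are illustrative, not values from any published system.

def prompt_template(prompt: str) -> str:
    """Collapse the variable parts of a prompt so templated requests share one key."""
    text = prompt.lower()
    text = re.sub(r'"[^"]*"', "<STR>", text)      # quoted payloads (answers to grade, etc.)
    text = re.sub(r"\d+(\.\d+)?", "<NUM>", text)   # numbers such as scores or scale bounds
    text = re.sub(r"\s+", " ", text).strip()       # whitespace noise
    return text

def rubric_repeats(records, min_uses=3, min_accounts=2):
    """Return templates reused at least min_uses times by at least min_accounts accounts."""
    uses = defaultdict(int)
    accounts = defaultdict(set)
    for record in records:
        key = prompt_template(record["prompt"])
        uses[key] += 1
        accounts[key].add(record["account"])
    return {
        key: sorted(accounts[key])
        for key in uses
        if uses[key] >= min_uses and len(accounts[key]) >= min_accounts
    }

def subnet_clusters(records, prefix=24, min_accounts=2):
    """Group accounts by the /prefix network of their source IP (assumes IPv4 input)."""
    networks = defaultdict(set)
    for record in records:
        net = ipaddress.ip_network(f"{record['ip']}/{prefix}", strict=False)
        networks[str(net)].add(record["account"])
    return {net: sorted(accts) for net, accts in networks.items() if len(accts) >= min_accounts}
```

Neither signal proves distillation on its own: many accounts legitimately share a subnet behind corporate NAT, and popular prompt templates recur among ordinary users. Matches are better treated as leads for manual review, or combined with volume signals, than as automatic blocks.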

🧠 Deep Insight

Web-grounded analysis with 5 cited sources.

🔑 Enhanced Key Takeaways

- Anthropic attributed the campaigns to specific labs using IP address correlation, request metadata, infrastructure indicators, and corroboration from industry partners.[1][4]

- Anthropic has deployed classifiers and behavioral fingerprinting systems, strengthened verification for educational and startup accounts, and added further safeguards to counter distillation attacks.[3][4]

- OpenAI has previously accused DeepSeek of using similar distillation techniques against its models.[2]

- Google Threat Intelligence Group recently disrupted distillation attacks that used more than 100,000 prompts to target Gemini's reasoning capabilities.[3]

- The attacks came to light amid US debates over AI chip exports, after the Trump administration allowed Nvidia H200 chips to be exported to China last month.[1]

🛠️ Technical Deep Dive

- Distillation attacks send large volumes of structured prompts to harvest a model's outputs, such as agentic reasoning traces, which attackers then use to reconstruct those capabilities in their own models.[4]

- In a later phase of its campaign, Moonshot AI specifically tried to extract and reconstruct Claude's internal reasoning traces through targeted prompts.[4]

- Detection relies on classifiers that flag API traffic whose volume, structure, and prompt focus differ from normal usage.[4]

- Attribution methods include correlating IP addresses across proxy services ("hydra clusters"), matching request metadata to staff profiles, and identifying shared infrastructure.[1][4]
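The IP-correlation idea can be illustrated with a union-find (disjoint-set) structure. This is not Anthropic's implementation; it is a rough sketch assuming a hypothetical log of `(account_id, ip)` pairs, one per API call. Accounts that ever share a source IP are merged into one cluster, and clusters that combine many accounts with high total request volume are flagged. The `hydra_clusters` function and both thresholds are hypothetical.

```python
from collections import defaultdict

class UnionFind:
    """Disjoint-set forest with path halving, keyed by account ID."""

    def __init__(self):
        self.parent = {}

    def find(self, x):
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a, b):
        root_a, root_b = self.find(a), self.find(b)
        if root_a != root_b:
            self.parent[root_b] = root_a

def hydra_clusters(requests, min_accounts=3, min_requests=100):
    """Link accounts that share any source IP, then flag large, busy clusters.

    requests: iterable of (account_id, ip) pairs (hypothetical log format).
    Returns (sorted member list, total requests) tuples, busiest cluster first.
    """
    uf = UnionFind()
    first_account_for_ip = {}
    volume = defaultdict(int)
    for account, ip in requests:
        uf.find(account)  # register accounts that never share an IP
        volume[account] += 1
        if ip in first_account_for_ip:
            uf.union(first_account_for_ip[ip], account)
        else:
            first_account_for_ip[ip] = account

    clusters = defaultdict(set)
    for account in volume:
        clusters[uf.find(account)].add(account)

    flagged = []
    for members in clusters.values():
        total = sum(volume[a] for a in members)
        if len(members) >= min_accounts and total >= min_requests:
            flagged.append((sorted(members), total))
    return sorted(flagged, key=lambda item: -item[1])
```

Transitive linking is why union-find fits here: two accounts that never share an IP directly still land in one cluster when each shares an address with a common third account, which is how traffic spread across many proxy exit nodes can be pulled back together. Shared IPs also occur legitimately (carrier-grade NAT, corporate egress), so the sketch requires both a minimum cluster size and a minimum request volume before flagging.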


📎 Sources (5)

Factual claims are grounded in the sources below. Forward-looking analysis is AI-generated interpretation.

- [1] TechCrunch: Anthropic Accuses Chinese AI Labs of Mining Claude As US Debates AI Chip Exports

- [2] CyberScoop: Anthropic Accuses Chinese Labs AI Distillation Cyber Risk

- [3] The Hacker News: Anthropic Says Chinese AI Firms Used 16

- [4] Anthropic: Detecting and Preventing Distillation Attacks

- [5] Anthropic: Disrupting AI Espionage


AI-curated news aggregator. All content rights belong to original publishers.

Original source: Huxiu (虎嗅)