🗾ITmedia AI+ (日本)•Freshcollected in 84m

Anthropic Boosts Claude Skill Testing

💡No-code testing for Claude skills prevents quality drops—essential for agent builders

⚡ 30-Second TL;DR

What Changed

New evaluation features for no-code skill verification

Why It Matters

Enables faster, more reliable AI agent development, reducing errors for builders deploying production skills. Could standardize quality checks across Claude ecosystem.

What To Do Next

Test the new evaluation tools in Anthropic's skill-creator for your Claude agents today.

Who should care:Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

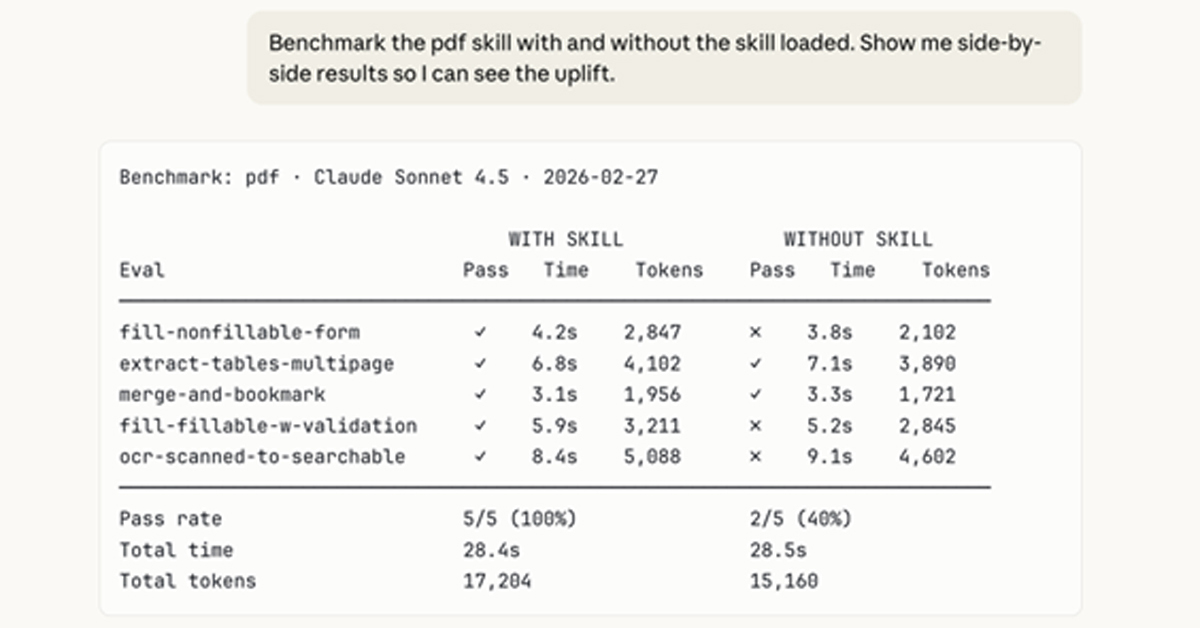

- •The new evaluation suite integrates with Anthropic's 'Claude Agentic Workflow' framework, allowing developers to run automated unit tests against agent outputs using a library of pre-defined, domain-specific evaluation datasets.

- •Anthropic has introduced a 'Regression Guard' feature that automatically flags potential performance drops when a user updates a skill's prompt or tool definition, comparing the new version against a baseline of historical successful executions.

- •The tool now supports 'Human-in-the-loop' (HITL) feedback loops, where developers can route ambiguous agent outputs to a human reviewer within the interface to generate ground-truth data for future automated benchmark fine-tuning.

📊 Competitor Analysis▸ Show

| Feature | Anthropic (Claude Skill Testing) | OpenAI (GPTs/Assistants) | Google (Vertex AI Agent Builder) |

|---|---|---|---|

| Evaluation Approach | No-code automated regression testing | Manual testing/evals via Playground | Integrated Vertex AI Evaluation service |

| Pricing | Usage-based (API/Token) | Usage-based (API/Token) | Tiered (Platform/Compute) |

| Benchmarks | Proprietary skill-specific metrics | Standardized model benchmarks | Custom evaluation pipelines |

🛠️ Technical Deep Dive

- •The evaluation engine utilizes a 'Judge-LLM' architecture, where a high-capability model (e.g., Claude 3.5 Sonnet or Opus) is tasked with scoring the output of the agent skill based on user-defined rubrics.

- •Supports JSON-schema validation for tool-use outputs, ensuring that agent-generated tool calls adhere strictly to the defined API specifications before execution.

- •Implements a 'Semantic Similarity' scoring mechanism using vector embeddings to compare agent responses against reference 'golden' answers, providing a quantitative quality metric.

- •The framework allows for 'Multi-turn Simulation,' where the testing environment maintains state across multiple agent-tool interactions to verify long-horizon task completion.

🔮 Future ImplicationsAI analysis grounded in cited sources

Agentic skill marketplaces will shift toward verified quality tiers.

Standardized evaluation tools allow platforms to certify agent skills, creating a premium tier of 'verified' tools for enterprise users.

Development cycles for complex AI agents will shorten by at least 30%.

Automated regression testing removes the bottleneck of manual verification, allowing for faster iteration and deployment of agentic workflows.

⏳ Timeline

2024-03

Anthropic releases Claude 3 model family with improved tool-use capabilities.

2024-06

Anthropic introduces the 'Claude Tool Use' API, enabling agents to interact with external software.

2025-02

Anthropic launches the 'Claude Agentic Workflow' framework for enterprise developers.

2025-10

Anthropic releases initial no-code skill-creator tools for Claude.

📰

Weekly AI Recap

Read this week's curated digest of top AI events →

👉Related Updates

AI-curated news aggregator. All content rights belong to original publishers.

Original source: ITmedia AI+ (日本) ↗