💰 钛媒体 • Fresh • collected in 70m

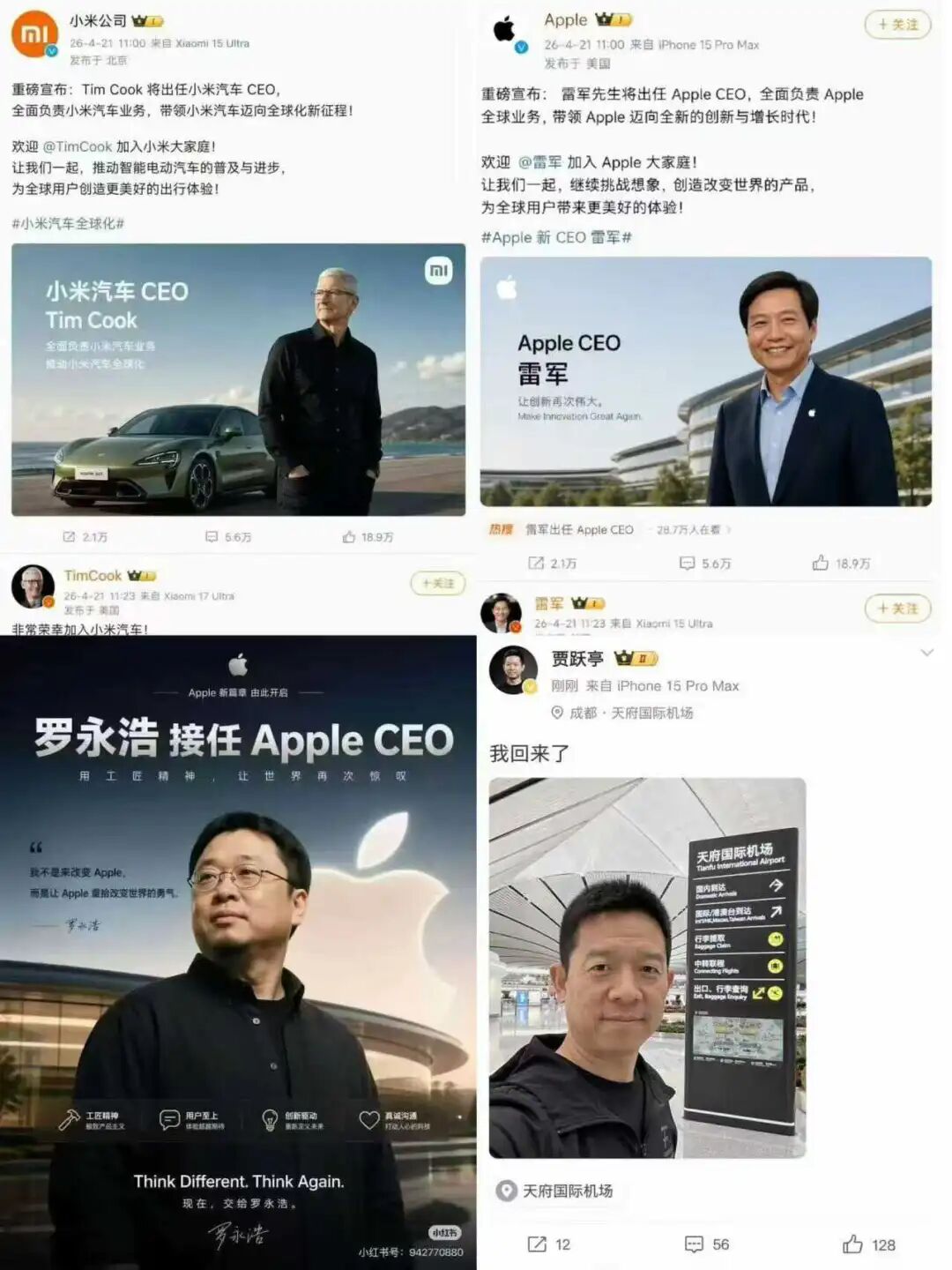

AI Makes Every Day April Fool's Day

💡 AI kills 'seeing is believing', which matters most to builders of detection tools.

⚡ 30-Second TL;DR

What Changed

AI now produces realistic fakes every day.

Why It Matters

Realistic fakes erode trust in visual media, so AI practitioners should prioritize detection and watermarking technology as misinformation rises.

What To Do Next

Integrate an AI-content detector, such as one of the community detection models hosted on Hugging Face, into your generative AI pipeline.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- 'Cheapfakes' (low-effort, AI-assisted manipulations) are currently outpacing high-end 'deepfakes' in spreading public misinformation, because they are easy to make and spread virally.

- Regulatory bodies, including the EU and various US state legislatures, have begun mandating digital watermarking and provenance standards (such as C2PA) to combat the erosion of trust in digital media.

- Cognitive security researchers are shifting focus from purely technical detection methods to 'human-in-the-loop' verification systems, as generative models are increasingly capable of bypassing automated forensic detectors.
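
As a rough illustration of the 'human-in-the-loop' idea in the last takeaway, the sketch below routes media by a detector score and hands uncertain cases to a person instead of trusting the automated verdict. Everything here is an assumption made for illustration: `triage`, `Verdict`, the two thresholds, and the `detector` callable (any function returning a probability that the media is synthetic) are hypothetical and do not come from the source or from any specific library.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical thresholds, chosen for illustration only.
LIKELY_REAL_BELOW = 0.2
LIKELY_FAKE_ABOVE = 0.9


@dataclass
class Verdict:
    label: str    # "pass", "flag", or "human_review"
    score: float  # detector's estimated probability that the media is synthetic


def triage(media: bytes, detector: Callable[[bytes], float]) -> Verdict:
    """Route media by detector score, deferring uncertain cases to a person."""
    score = detector(media)
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"detector score out of range: {score}")
    if score < LIKELY_REAL_BELOW:
        return Verdict("pass", score)
    if score > LIKELY_FAKE_ABOVE:
        return Verdict("flag", score)
    # The middle band is where automated detectors are least reliable.
    return Verdict("human_review", score)


if __name__ == "__main__":
    # Stand-in detector that always returns a mid-range score.
    print(triage(b"example", lambda _: 0.5).label)
```

Because newer generative models may bypass automated detectors, a "pass" from such a gate is better read as "no evidence found" than as proof of authenticity; widening the review band trades reviewer workload for fewer missed fakes.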

🔮 Future Implications

AI analysis grounded in cited sources

Digital provenance standards will become a mandatory requirement for major social media platforms by 2027.

Legislative pressure and the need to mitigate liability for AI-generated defamation are forcing platforms to adopt verifiable content metadata.

Automated deepfake detection tools will experience a significant decline in efficacy against next-generation generative models.

Adversarial training techniques allow new models to specifically optimize for bypassing existing forensic detection architectures.



Original source: 钛媒体 ↗