🇨🇳 cnBeta (Full RSS) • Fresh, collected in 2h

AI Giants' Warnings Spark Backlash: The 'Oppenheimer' Trap

💡 AI leaders' risk warnings are drawing public hostility, which matters for business strategy shifts.

⚡ 30-Second TL;DR

What Changed

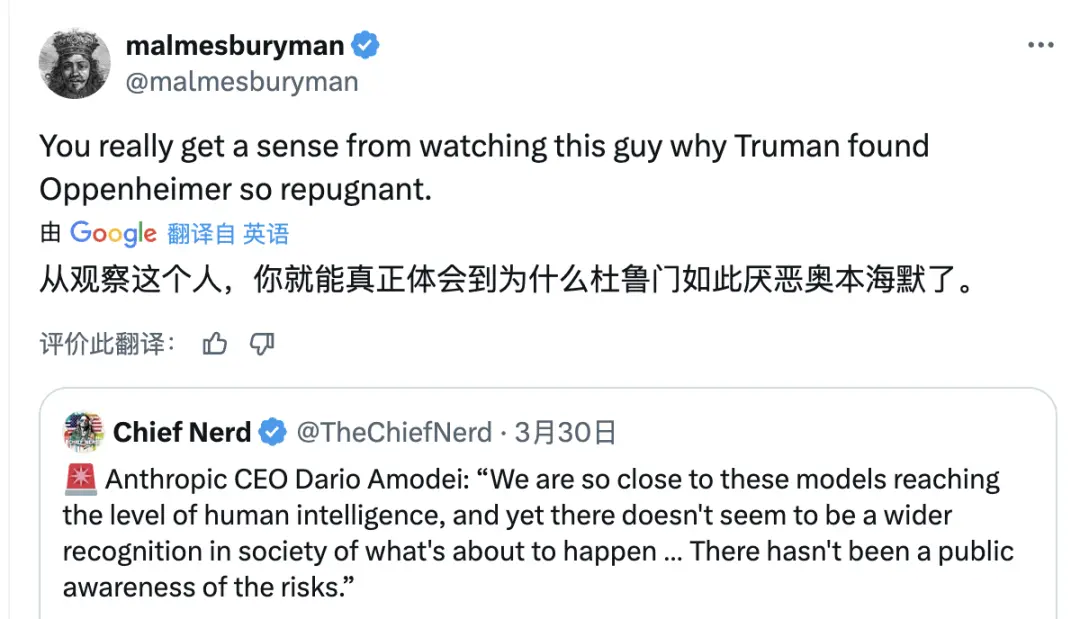

A post by @malmesburyman on X went viral on March 30.

Why It Matters

Exposes tension between AI safety advocacy and public perception, influencing founder strategies and policy debates amid growing scrutiny.

What To Do Next

Search X for the @malmesburyman post on Amodei to track AI safety sentiment.

Who should care: Founders & Product Leaders

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The 'Oppenheimer' analogy specifically references the 1945 meeting where J. Robert Oppenheimer told President Truman he felt he had 'blood on his hands,' leading Truman to label him a 'crybaby scientist' and exclude him from future policy influence.

- Critics of AI leaders like Amodei argue that public warnings serve as a strategic 'regulatory capture' mechanism, designed to impose high compliance costs that only well-funded incumbents can afford, thereby stifling open-source competition.

- The backlash reflects a growing divide between 'accelerationist' factions, who view existential risk discourse as counterproductive fear-mongering, and 'safety-first' proponents, who believe current scaling laws necessitate immediate government oversight.

🔮 Future Implications

AI analysis grounded in cited sources.

AI companies will shift away from public existential risk rhetoric in official communications.

The negative public sentiment and potential for political alienation are forcing firms to rebrand safety concerns as 'responsible innovation' rather than 'existential threat mitigation'.

Regulatory bodies will increasingly scrutinize the motives behind industry-led safety warnings.

Lawmakers are becoming more aware of the potential for large AI labs to use safety narratives to lobby for regulations that create barriers to entry for smaller competitors.

⏳ Timeline

2021-01

Anthropic is founded by former OpenAI employees with a focus on AI safety and constitutional AI.

2023-03

Anthropic releases Claude, emphasizing the 'Constitutional AI' approach to alignment.

2023-07

Dario Amodei testifies before a U.S. Senate Judiciary subcommittee regarding the potential risks of advanced AI systems.

2024-05

Anthropic joins other major AI firms in signing voluntary safety commitments with the U.S. government.

2025-11

Anthropic releases updated safety frameworks, drawing increased scrutiny from open-source advocacy groups.

AI-curated news aggregator. All content rights belong to original publishers.

Original source: cnBeta (Full RSS) ↗