
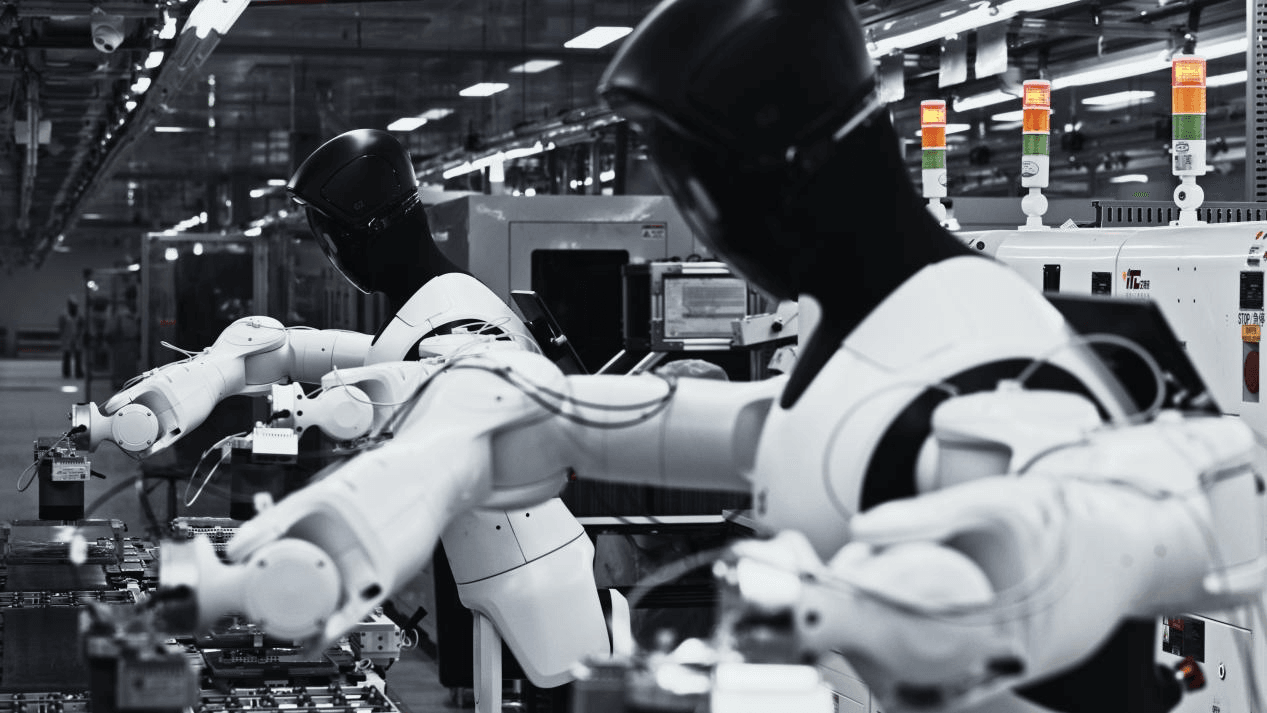

AGIBOT Yuanling G2 Aces 8-Hour Factory Shift

💡First scaled embodied AI factory shift hits a 99.5% success rate, offering a blueprint for industrial robotics.

⚡ 30-Second TL;DR

What Changed

AGIBOT livestreamed the Yuanling G2 working a full 8-hour shift on a 3C (computer, communication, and consumer electronics) assembly line.

Why It Matters

This validates embodied AI's reliability for full-shift operations, potentially lowering barriers for industrial adoption. It signals a shift toward AI-driven automation in manufacturing.

What To Do Next

Watch AGIBOT's livestream to analyze Yuanling G2's real-world performance metrics.

Who should care: Enterprise & Security Teams

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The Yuanling G2 uses a proprietary 'Embodied Brain' architecture that integrates multimodal large models for real-time visual-tactile perception and motor control, reducing the need for traditional hard-coded programming.

- AGIBOT, founded by former Huawei 'Genius Youth' recruit Peng Zhihui (known online as Zhihui Jun), has moved from R&D to commercial production by relying on a modular design that allows rapid reconfiguration across different 3C assembly tasks.

- The 99.5% success rate was validated under specific controlled lighting and standardized component positioning, highlighting that while the robot handles complex assembly, it still relies on structured industrial environments.
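To put the 99.5% figure in perspective, the arithmetic below estimates how many failed cycles a single shift would contain. This is only a sketch: the article does not report a cycle time, so `cycle_seconds=30` is a hypothetical value chosen for illustration, and the function name is likewise an assumption.

```python
def expected_failures(shift_hours: float, cycle_seconds: float, success_rate: float) -> float:
    """Expected number of failed assembly cycles over one shift."""
    cycles = shift_hours * 3600 / cycle_seconds  # total cycles attempted in the shift
    return cycles * (1.0 - success_rate)         # share of cycles that fail


# 8-hour shift and 99.5% success come from the article;
# the 30-second cycle time is a hypothetical assumption.
failures = expected_failures(shift_hours=8, cycle_seconds=30, success_rate=0.995)
print(round(failures, 1))  # 960 cycles * 0.5% failure rate
```

Under that assumed cycle time, a 99.5% success rate still implies several interventions per shift, which is why the structured-environment caveat matters.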

📊 Competitor Analysis

| Feature | AGIBOT Yuanling G2 | Tesla Optimus Gen 2 | Figure AI Figure 02 |
|---|---|---|---|
| Primary Focus | Industrial 3C Assembly | General Purpose/Factory | General Purpose/Logistics |
| Control Architecture | Proprietary Embodied AI | End-to-End Neural Net | OpenAI-integrated VLM |
| Deployment Status | Scaled Industrial Pilot | Internal Factory Testing | Pilot/Commercial Testing |
| Pricing | Not Public | Not Public | Not Public |

🛠️ Technical Deep Dive

- Actuation: Employs high-torque-density joint modules with integrated force-torque sensors for delicate manipulation tasks.

- Vision System: Features multi-camera depth sensing with onboard edge computing to process spatial data at low latency.

- Control Loop: Uses a hierarchical control strategy in which a high-level policy model generates task plans that a low-level real-time motion controller executes.

- Training: Combines teleoperation-based imitation learning with reinforcement learning in simulated environments (Sim-to-Real).
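The hierarchical control strategy described above can be illustrated with a toy example. This is a minimal sketch, not AGIBOT's implementation: the `Subgoal` type, the `insert_connector` task, the lookup-table planner, and the 1-D proportional controller are all hypothetical stand-ins for the high-level policy model and the low-level motion controller.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Subgoal:
    name: str
    target: float  # hypothetical 1-D joint position


def high_level_plan(task: str) -> List[Subgoal]:
    """Stand-in for the high-level policy model: maps a task to ordered subgoals."""
    plans = {
        "insert_connector": [
            Subgoal("reach", 0.5),
            Subgoal("align", 0.8),
            Subgoal("insert", 1.0),
        ],
    }
    return plans[task]


def low_level_track(position: float, target: float, gain: float = 0.5,
                    tol: float = 1e-3, max_steps: int = 100) -> float:
    """Stand-in for the low-level controller: proportional steps toward the target."""
    for _ in range(max_steps):
        error = target - position
        if abs(error) < tol:
            break  # close enough to the subgoal
        position += gain * error
    return position


def run_task(task: str, start: float = 0.0) -> float:
    """Execute each planned subgoal in order using the low-level controller."""
    position = start
    for subgoal in high_level_plan(task):
        position = low_level_track(position, subgoal.target)
    return position


final_position = run_task("insert_connector")
```

The split keeps slow, semantic decisions (which subgoal comes next) separate from the fast loop that tracks each target, which is the general idea behind a hierarchical controller.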

🔮 Future Implications

AI analysis grounded in cited sources

AGIBOT will expand into automotive sub-assembly by Q4 2026.

The modular architecture of the G2 allows for the adaptation of existing 3C assembly skill sets to automotive component handling with minimal retraining.

Industrial robot labor costs will drop below $5/hour by 2028.

The successful 8-hour shift demonstration indicates that embodied AI robots are approaching the operational efficiency required to displace manual labor in high-volume manufacturing.

⏳ Timeline

2023-08

AGIBOT officially launches the Yuanling series, targeting industrial and service applications.

2024-05

AGIBOT secures significant Series B funding to accelerate mass production of embodied AI robots.

2025-11

AGIBOT initiates pilot testing of the Yuanling G2 in select 3C manufacturing facilities.


Original source: Pandaily