⚖️ AI Alignment Forum • Recent • collected in 37m

$100M Grants for Scaling AI Safety Automation

💡 $100M grants ready for scalable AI safety pipelines: pitch yours now for massive compute.

⚡ 30-Second TL;DR

What Changed

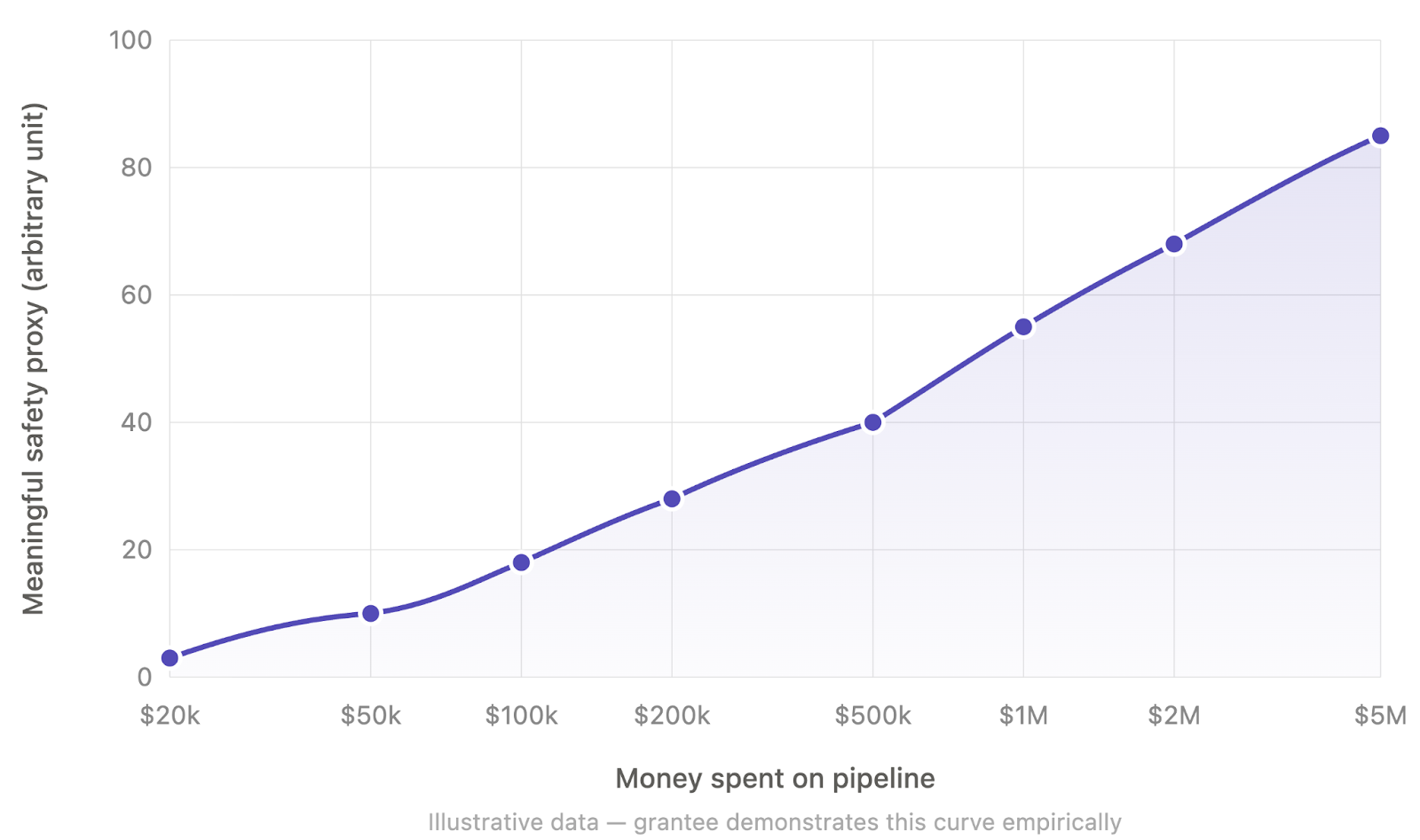

The post proposes initial grants on the order of $5M (for example, $2M for salaries and $3M for compute) to test scaling hypotheses.
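As a rough illustration of the example split above, the arithmetic can be sketched in a few lines of Python. The dollar figures come from this summary. The function name and the "grants that fit in the pool" calculation are illustrative assumptions, not something the original post specifies.

```python
# Figures taken from the summary above; names and structure are illustrative.
TOTAL_POOL_USD = 100_000_000    # headline grant pool
INITIAL_GRANT_USD = 5_000_000   # example initial grant
SALARIES_USD = 2_000_000        # salary portion of the example grant
COMPUTE_USD = 3_000_000         # compute portion of the example grant


def budget_shares(salaries: int, compute: int) -> dict[str, float]:
    """Return each line item as a fraction of the combined budget."""
    total = salaries + compute
    return {"salaries": salaries / total, "compute": compute / total}


shares = budget_shares(SALARIES_USD, COMPUTE_USD)

# Hypothetical: how many grants of the example size the pool could cover
# if it were spent entirely on grants of that size.
grants_in_pool = TOTAL_POOL_USD // INITIAL_GRANT_USD

print(f"salaries share: {shares['salaries']:.0%}")
print(f"compute share: {shares['compute']:.0%}")
print(f"example-size grants that fit in the pool: {grants_in_pool}")
```

Under these numbers, compute takes the larger share of the example grant, which matches the compute-heavy emphasis described in this summary.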

Why It Matters

It could unlock billions in AI safety funding and accelerate the automated pipelines that matter most under short timelines. It also shifts grant budgets from conservative, salary-centered spending toward compute-heavy scaling, which would benefit safety orgs.

What To Do Next

Draft a scaling hypothesis for your AI safety method and pitch to Apollo Research funders.

Who should care: Researchers & Academics

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The proposal aligns with the 'Automated Alignment Research' (AAR) paradigm, which seeks to replace human-in-the-loop oversight with AI systems capable of performing interpretability and safety evaluations at scale.

- The funding model draws inspiration from the 'Compute-Optimal' scaling laws observed in model training, applying similar empirical rigor to the cost-efficiency of safety-critical feature discovery.

- This initiative addresses the 'alignment bottleneck' by shifting focus from theoretical safety research to high-throughput, automated pipelines that leverage massive GPU clusters specifically for mechanistic interpretability.

🔮 Future Implications

AI analysis grounded in cited sources

Automated safety pipelines will become the primary metric for model release readiness.

As safety research scales, regulatory and internal governance bodies will likely mandate empirical proof of safety via automated interpretability proxies before allowing large-scale model deployment.

Compute-for-safety spending will exceed 10% of total model training budgets by 2028.

The shift toward automated, compute-intensive safety verification necessitates a significant reallocation of capital from pure training to safety-focused inference and analysis.


Original source: AI Alignment Forum ↗