💰 钛媒体

Xiaomi Model Mistaken for DeepSeek V4

💡 Developers mistook a Xiaomi model for DeepSeek V4. Here is why that matters for LLM tracking.

⚡ 30-Second TL;DR

What Changed

Xiaomi released an unidentified AI model, Hunter Alpha, that developers widely mistook for DeepSeek V4

Why It Matters

The mix-up shows how quickly AI models are now released, how easily benchmark results can be attributed to the wrong model, and why open ecosystems need clear model identification.

What To Do Next

Benchmark Xiaomi's model against DeepSeek V3 on Hugging Face to clarify performance.

Who should care: Developers & AI Engineers

🧠 Deep Insight

Web-grounded analysis with 7 cited sources.

🔑 Enhanced Key Takeaways

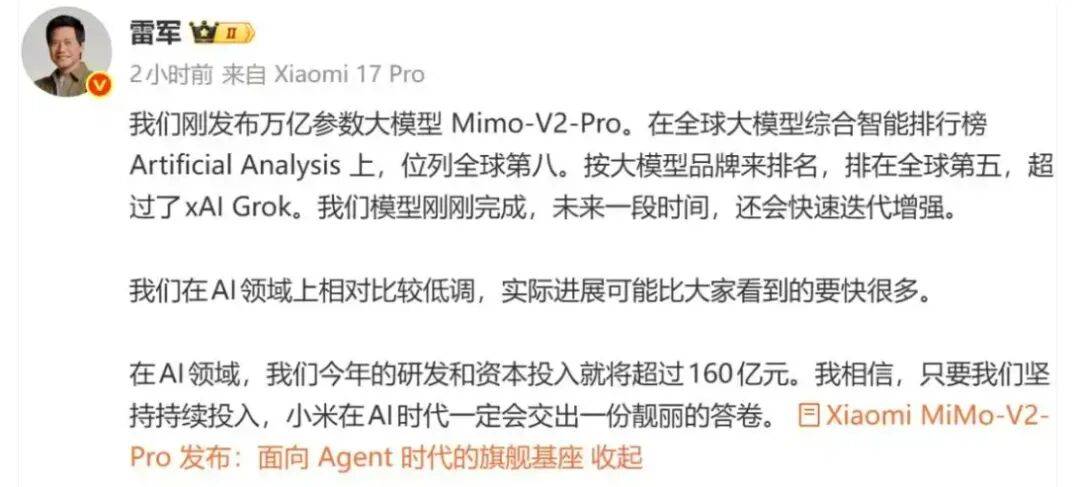

- Hunter Alpha, the mystery model, turned out to be Xiaomi's MiMo-V2-Pro, an internal test build from MiMo, Xiaomi's AI team led by former DeepSeek researcher Luo Fuli. It is designed to serve as the 'brain' of AI agents that execute complex tasks with minimal human supervision[2]

- The confusion arose because Hunter Alpha described itself as 'a Chinese AI model primarily trained in Chinese' with a May 2025 knowledge cutoff, matching DeepSeek's reported cutoff, and its refusal to identify its creator fueled speculation about its origin[2]

- DeepSeek V4 is expected to be a native multimodal model that generates pictures, video, and text, optimized primarily for coding and long-context software engineering tasks. Internal tests suggest it could outperform Claude and ChatGPT on long-context coding[1]

- DeepSeek withheld early V4 access for optimization from U.S. chipmakers Nvidia and AMD and granted it instead to domestic Chinese suppliers such as Huawei and Cambricon, a deliberate effort to deepen ties with China's domestic hardware ecosystem[1]

📊 Competitor Analysis

| Feature | DeepSeek V4 | Xiaomi MiMo-V2-Pro | Claude | ChatGPT |
|---|---|---|---|---|
| Primary Focus | Code Generation & Long-Context Tasks | AI Agent Brain / Complex Task Execution | General Purpose | General Purpose |
| Multimodal Capability | Picture, Video, Text | Not Specified | Not Specified | Not Specified |
| Context Window | Not Specified | 1 Million Tokens | Not Specified | Not Specified |
| Parameter Scale | Not Specified | 1 Trillion Parameters | Not Specified | Not Specified |
| Target Audience | Developers, Software Engineers | AI Agent Developers | General Users | General Users |

🛠️ Technical Deep Dive

- Token-Level Sparse MLA: Separate test scripts for sparse and dense decoding indicate parallel processing pathways, using FP8 for storing KV cache and bfloat16 for matrix multiplication, designed for extreme long-context scenarios[1]

- Value Vector Position Awareness (VVPA): New mechanism addressing positional information decay over long contexts, preserving spatial information even under aggressive compression for sequences extending into hundreds of thousands of tokens[1]

- DualPath Inference Optimization: Improves offline inference throughput by up to 1.87x and online inference by 1.96x, expected to be incorporated into DeepSeek V4's inference architecture[1]

- Engram Memory Architecture: Described as DeepSeek V4's core innovation, moving beyond traditional transformer limitations to enable more efficient data recall and context management[4]
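To make two of the figures above concrete, here is a minimal back-of-the-envelope sketch. It assumes a cache that stores one fixed-width vector per token per layer, and every dimension in it (`NUM_LAYERS`, `KV_DIM`, `SEQ_LEN`, the 100-second baseline) is a hypothetical placeholder, not a reported DeepSeek V4 value. It shows why storing the KV cache in FP8 (1 byte per element) rather than bfloat16 (2 bytes) halves cache memory at long context, and what a throughput gain of up to 1.87x would mean for the wall-clock time of a fixed offline batch.

```python
def kv_cache_bytes(num_layers: int, kv_dim: int, seq_len: int, bytes_per_elem: int) -> int:
    """Bytes needed to cache one kv_dim-wide vector per token per layer."""
    return num_layers * kv_dim * seq_len * bytes_per_elem


def time_after_speedup(baseline_seconds: float, speedup: float) -> float:
    """Wall-clock time for the same workload after a throughput speedup."""
    return baseline_seconds / speedup


def to_gib(num_bytes: int) -> float:
    """Convert a byte count to GiB."""
    return num_bytes / 2**30


# Hypothetical dimensions for illustration only (not DeepSeek V4's real config).
NUM_LAYERS = 60
KV_DIM = 512        # assumed width of the cached per-token vector
SEQ_LEN = 500_000   # "hundreds of thousands of tokens"

BF16_BYTES = 2  # bfloat16 uses 2 bytes per element
FP8_BYTES = 1   # FP8 uses 1 byte per element

bf16_total = kv_cache_bytes(NUM_LAYERS, KV_DIM, SEQ_LEN, BF16_BYTES)
fp8_total = kv_cache_bytes(NUM_LAYERS, KV_DIM, SEQ_LEN, FP8_BYTES)

print(f"bf16 cache: {to_gib(bf16_total):.1f} GiB")
print(f"fp8 cache:  {to_gib(fp8_total):.1f} GiB")
print(f"memory ratio: {bf16_total / fp8_total:.1f}x")

# A hypothetical 100-second offline batch at the cited best-case 1.87x speedup.
print(f"offline batch: {time_after_speedup(100.0, 1.87):.1f} s")
```

The halving holds for any choice of dimensions, since only the bytes-per-element term changes. The speedup figure is quoted as an upper bound, so the computed time is a best case.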

🔮 Future Implications

AI analysis grounded in cited sources

DeepSeek V4 will establish dominance in specialized coding domains over general-purpose competitors

China's AI ecosystem will accelerate decoupling from U.S. semiconductor suppliers

DeepSeek's strategic partnership with Huawei and Cambricon for V4 optimization signals deliberate efforts to reduce dependence on Nvidia and AMD, establishing domestic hardware-software integration[1]

Multimodal capabilities will become table-stakes for flagship Chinese LLMs

DeepSeek V4's native multimodal design follows similar moves by Moonshot, Alibaba's Qwen, and ByteDance's Seed, indicating industry-wide convergence on picture, video, and text generation[1]

⏳ Timeline

2025-05

Reported knowledge cutoff for DeepSeek's model, which Hunter Alpha's self-described cutoff later matched

2026-02

DeepSeek V4 slated for mid-February 2026 release with enhanced performance and efficiency

2026-03-18

Hunter Alpha appears on OpenRouter and is widely speculated to be DeepSeek V4 because of its 1 trillion parameters and 1-million-token context window

2026-03-18

Xiaomi confirms that Hunter Alpha is MiMo-V2-Pro, an internal test build from its MiMo team, led by former DeepSeek researcher Luo Fuli

📎 Sources (7)

Factual claims are grounded in the sources below. Forward-looking analysis is AI-generated interpretation.

- [1] recodechinaai.substack.com: Deepseeks Next Move What V4 Will

- [2] timesofindia.indiatimes.com: 129676482

- [3] youtube.com: Watch

- [4] vertu.com: Deepseek V4 2026 AI Model Review Redefining LLM Expectations

- [5] tradingview.com: Reuters.com,2026:newsml L1n40701s:0 Mystery AI Model Suspected to Be Deepseek V4 Is Revealed to Be From Xiaomi

- [6] digit.fyi: Mystery AI Model Revealed to Be Latest From Xiaomi

- [7] techrepublic.com: News Xiaomi Hunter Alpha AI Model Deepseek Speculation


Original source: 钛媒体 ↗