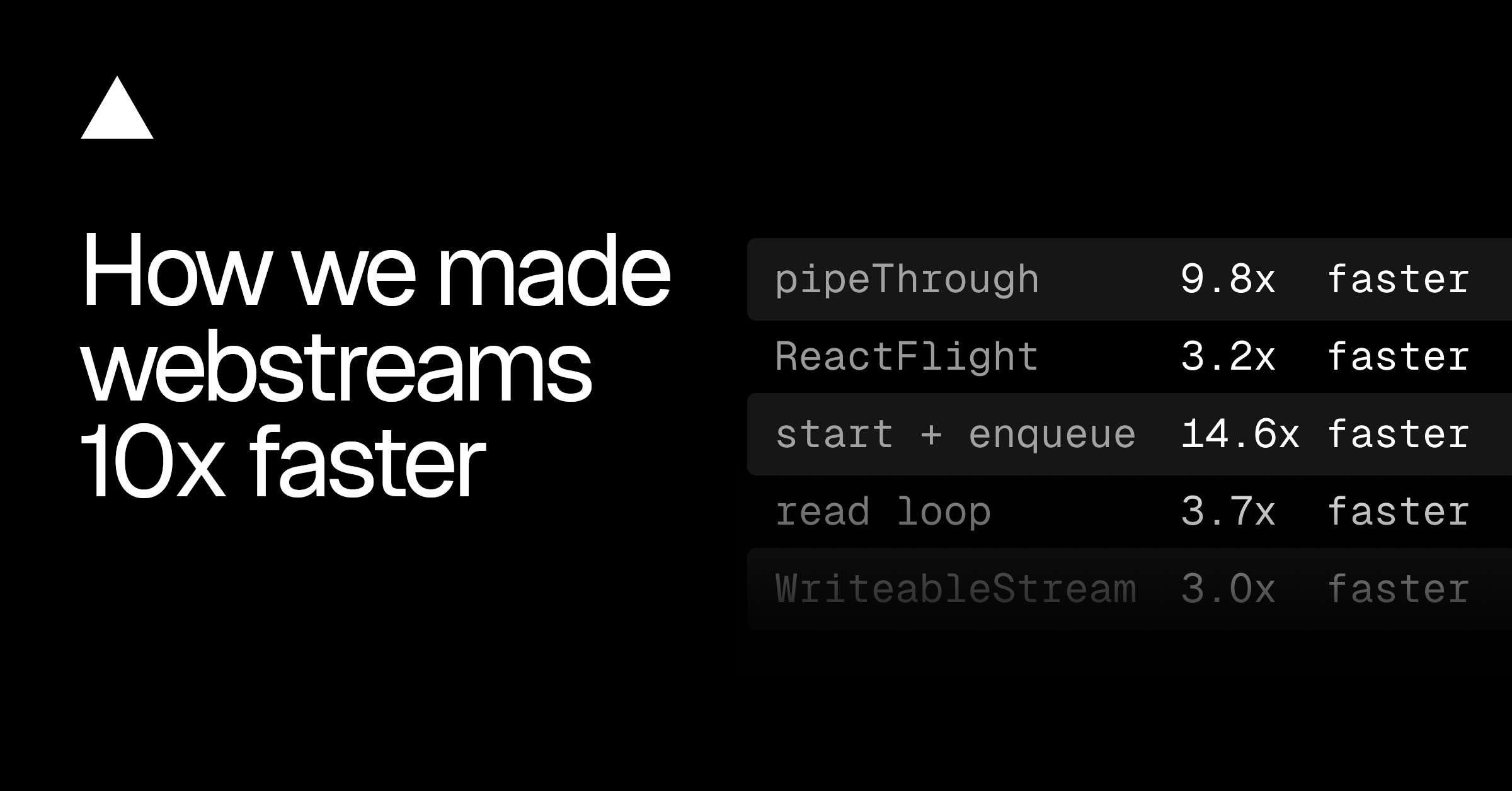

Vercel 10x Faster WebStreams

💡 10x faster WebStreams for Next.js SSR – vital for scalable AI streaming apps.

⚡ 30-Second TL;DR

What Changed

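Vercel found that WebStreams dominate Next.js SSR flamegraphs with Promise and allocation overhead, and built fast-webstreams, a WHATWG-compatible implementation backed by Node.js streams, to remove that overhead.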

Why It Matters

Boosts streaming performance in Next.js and React SSR, critical for real-time AI apps like chat interfaces. Reduces framework overhead highlighted in benchmarks. Enables faster server responses at scale.

What To Do Next

Benchmark fast-webstreams in your own Next.js SSR pipeline to check whether the reported 10x streaming gain holds for your workload.

🧠 Deep Insight

Web-grounded analysis with 7 cited sources.

🔑 Enhanced Key Takeaways

- Vercel identified WebStreams as a critical performance bottleneck in Next.js server-side rendering, with Promise chains and memory allocations showing up as significant overhead in flamegraphs[1]

- The native Node.js WebStreams implementation reaches only 630 MB/s of throughput, compared to 7,900 MB/s with legacy Node.js streams, a gap of roughly 12.5x[1]

- Vercel's fast-webstreams library maintains full WHATWG Streams API compatibility while using an optimized Node.js streams backend for better performance[1]

- Edge Runtime optimization is critical for AI applications, where streaming reduces perceived latency by delivering responses incrementally rather than waiting for complete generation[2]

- The performance improvements are being upstreamed to Node.js core, indicating wider recognition of the WebStreams overhead problem[1]
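The throughput gap in the takeaways above can be checked locally with a small micro-benchmark. The following is a minimal sketch, not Vercel's benchmark: the helper names (`drainWebStream`, `drainNodeStream`, `measureMBps`) are hypothetical, it uses only the built-in `node:stream` and `node:stream/web` modules rather than fast-webstreams itself, and the figures it produces will depend on Node.js version, chunk size, and hardware.

```typescript
import { Readable } from "node:stream";
import { ReadableStream } from "node:stream/web";
import { performance } from "node:perf_hooks";

// Push `chunkCount` chunks through a WHATWG ReadableStream and count the bytes read.
async function drainWebStream(chunkCount: number, chunkSize: number): Promise<number> {
  const chunk = new Uint8Array(chunkSize);
  let sent = 0;
  const stream = new ReadableStream<Uint8Array>({
    pull(controller) {
      if (sent++ < chunkCount) controller.enqueue(chunk);
      else controller.close();
    },
  });
  const reader = stream.getReader();
  let bytes = 0;
  while (true) {
    const result = await reader.read();
    if (result.done) break;
    bytes += result.value.byteLength;
  }
  return bytes;
}

// Push the same amount of data through a classic Node.js Readable.
async function drainNodeStream(chunkCount: number, chunkSize: number): Promise<number> {
  const chunk = Buffer.alloc(chunkSize);
  let sent = 0;
  const stream = new Readable({
    read() {
      // Pushing null signals end of stream.
      this.push(sent++ < chunkCount ? chunk : null);
    },
  });
  let bytes = 0;
  for await (const piece of stream) {
    bytes += (piece as Buffer).byteLength;
  }
  return bytes;
}

// Time one run and convert the byte count to MB/s (1 MB = 1e6 bytes).
async function measureMBps(run: () => Promise<number>): Promise<number> {
  const start = performance.now();
  const bytes = await run();
  const seconds = (performance.now() - start) / 1000;
  return bytes / 1e6 / seconds;
}
```

A possible way to use it is to call `measureMBps(() => drainWebStream(100_000, 16_384))` and `measureMBps(() => drainNodeStream(100_000, 16_384))` several times each and compare the medians. A single run is noisy, and this synthetic loop does not reproduce the React SSR workload described in the source.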

🛠️ Technical Deep Dive

- The WebStreams implementation uses a Promise-based architecture that introduces allocation overhead unsuitable for high-throughput server scenarios

- fast-webstreams reimplements the WHATWG Streams specification while delegating to Node.js native streams for actual I/O operations

- The optimization targets the server-side rendering path in Next.js, where streaming is essential for progressive HTML delivery

- Edge Runtime environments (V8 Isolates) are optimized for streaming without full Node.js overhead, enabling zero cold starts and native HTTP stream handling[2]

- Streaming text responses in AI applications reduce perceived latency by delivering tokens incrementally rather than waiting for complete LLM generation[2]

- Implementation considerations include handling asynchronous generators correctly with for await...of patterns and managing serverless function timeouts during long-running streams[2]
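The incremental token delivery and async-generator handling described above can be sketched as follows. This is an illustrative example under stated assumptions, not code from Vercel or from fast-webstreams: `generateTokens` is a hypothetical stand-in for an LLM client, and `toByteStream` and `collectChunks` are made-up helper names. It uses the `ReadableStream` exported by Node.js's built-in `node:stream/web` module.

```typescript
import { ReadableStream } from "node:stream/web";

// Hypothetical token source standing in for an LLM client; not a real SDK call.
async function* generateTokens(tokens: string[]): AsyncGenerator<string> {
  for (const token of tokens) {
    // Yield to the event loop to mimic tokens arriving over time.
    await new Promise<void>((resolve) => setTimeout(resolve, 0));
    yield token;
  }
}

// Wrap an async iterable in a WHATWG ReadableStream. Each pull() advances the
// iterator by one step, so the consumer's demand paces the producer.
function toByteStream(source: AsyncIterable<string>): ReadableStream<Uint8Array> {
  const encoder = new TextEncoder();
  const iterator = source[Symbol.asyncIterator]();
  return new ReadableStream<Uint8Array>({
    async pull(controller) {
      const result = await iterator.next();
      if (result.done) {
        controller.close();
        return;
      }
      controller.enqueue(encoder.encode(result.value));
    },
    async cancel() {
      // Let the generator run its cleanup if the client disconnects early.
      await iterator.return?.();
    },
  });
}

// Consume the stream with for await...of, decoding each chunk as it arrives.
async function collectChunks(stream: ReadableStream<Uint8Array>): Promise<string[]> {
  const decoder = new TextDecoder();
  const chunks: string[] = [];
  for await (const chunk of stream) {
    chunks.push(decoder.decode(chunk, { stream: true }));
  }
  return chunks;
}
```

Driving the iterator from `pull()` rather than looping inside `start()` is a design choice: it keeps the generator from running ahead of a slow client. Every `pull()` and `read()` in this path allocates Promises, which is the kind of per-chunk overhead the source attributes to WebStreams.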

🔮 Future Implications

AI analysis grounded in cited sources

This optimization addresses a fundamental bottleneck in modern web frameworks handling AI-generated content and real-time data. As AI applications become standard in production systems, streaming performance directly impacts user experience and infrastructure costs. The upstreaming to Node.js core suggests this will become a baseline improvement for the entire Node.js ecosystem. Organizations using Next.js with AI features (LLMs, real-time APIs) will benefit from reduced latency and improved throughput without code changes. Edge Runtime adoption will likely accelerate as streaming performance becomes a competitive differentiator for serverless platforms.

📎 Sources (7)

Factual claims are grounded in the sources below. Forward-looking analysis is AI-generated interpretation.

Original source: Vercel News ↗