
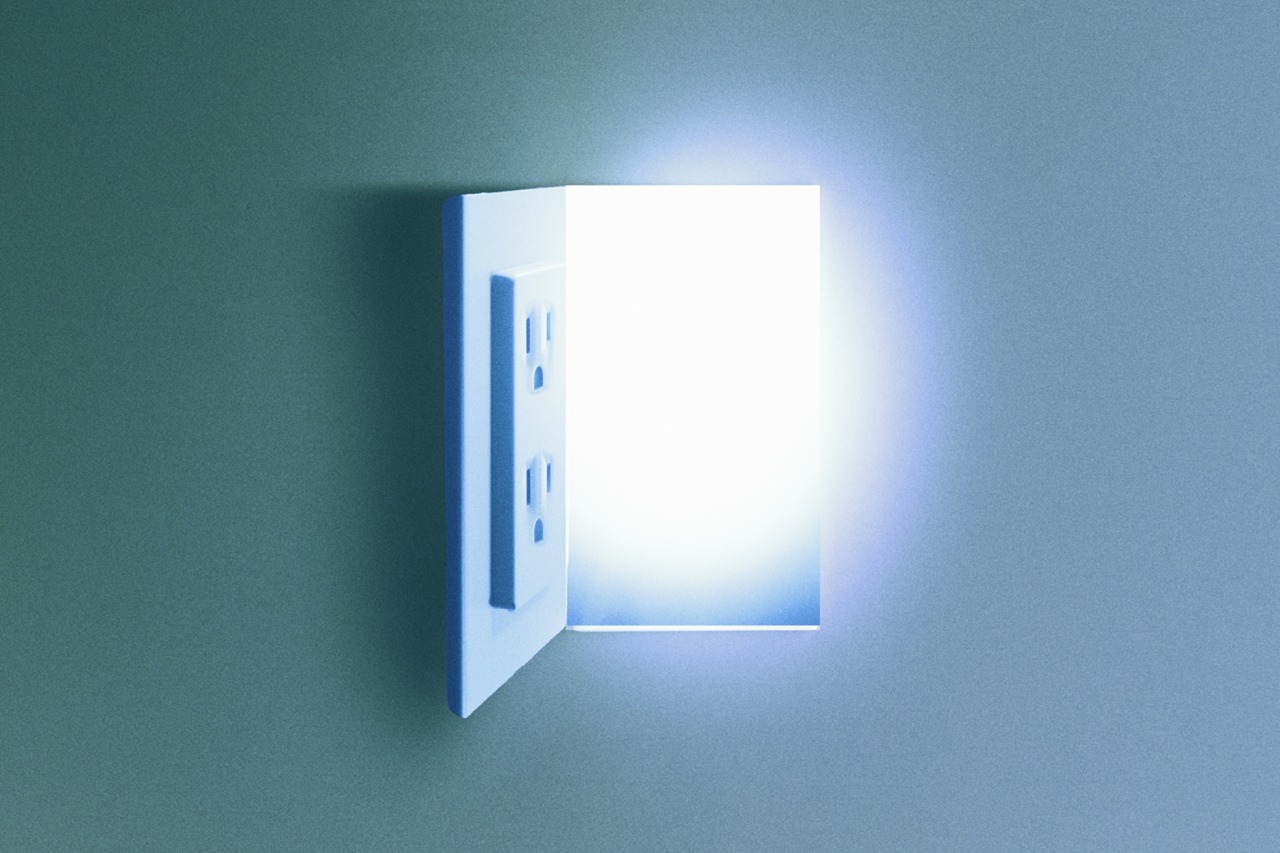

Utilities Profit from AI Power Boom

💡 AI power deals favor utilities; plan for rising energy costs in your infra stack

⚡ 30-Second TL;DR

What Changed

Massive AI investments by tech giants are driving a spike in electricity demand.

Why It Matters

Raises data center energy costs, forcing AI firms to optimize power efficiency.

What To Do Next

Model power consumption for your next AI training run using AWS cost calculators.
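A rough first pass at this estimate can be made before opening any vendor calculator. The sketch below is a back-of-the-envelope model, not a vendor tool: the `TrainingRun` class and every input value (GPU count, per-GPU draw, PUE, electricity price) are hypothetical placeholders to replace with your own figures.

```python
from dataclasses import dataclass


@dataclass
class TrainingRun:
    """Hypothetical description of a training run; all fields are assumptions."""

    gpu_count: int                # number of accelerators in the job
    gpu_power_kw: float           # assumed average draw per accelerator, in kW
    hours: float                  # wall-clock duration of the run
    pue: float = 1.3              # assumed power usage effectiveness of the facility
    price_per_kwh: float = 0.10   # assumed electricity price, USD per kWh

    def energy_mwh(self) -> float:
        """Facility-level energy: IT energy scaled by PUE, converted from kWh to MWh."""
        it_energy_kwh = self.gpu_count * self.gpu_power_kw * self.hours
        return it_energy_kwh * self.pue / 1000.0

    def cost_usd(self) -> float:
        """Electricity cost of the run at the assumed flat price."""
        return self.energy_mwh() * 1000.0 * self.price_per_kwh


# Illustrative inputs only: 1,024 GPUs at 0.7 kW each for two weeks.
run = TrainingRun(gpu_count=1024, gpu_power_kw=0.7, hours=24 * 14)
print(f"{run.energy_mwh():.1f} MWh, ${run.cost_usd():,.0f}")
```

A flat price and a single PUE figure keep the model simple; real contracts often include demand charges and time-of-use rates, so treat the result as an order-of-magnitude check.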

Who should care: Enterprise & Security Teams

🧠 Deep Insight

Web-grounded analysis with 6 cited sources.

🔑 Enhanced Key Takeaways

- U.S. data center electricity demand surged 22% in 2025 and is projected to reach nearly 76 gigawatts in 2026, enough to power approximately 40% of U.S. homes, giving utility companies unprecedented leverage in infrastructure negotiations[4].

- AI-specific electricity consumption is projected to grow from 53-76 terawatt-hours in 2024 to 165-326 terawatt-hours by 2028, at which point AI could consume over half of all data center electricity[2].

- Major grid operators including PJM and ERCOT are implementing new frameworks requiring large data center users to develop independent power supplies or accept controlled load reduction, fundamentally reshaping utility-tech company relationships[1].

- Tech giants' combined capital expenditure reached record levels in 2024 (Amazon $85.8B, Google $52.5B, Microsoft $44.5B, Meta $39.2B), with a significant portion directed toward power infrastructure to support AI expansion[6].

- AI data centers will require approximately 14 gigawatts of additional new power capacity by 2030, with GPU-accelerated servers potentially accounting for 27% of planned new generation capacity in 2027, positioning utilities as critical infrastructure partners rather than commodity providers[3].
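The 2024 and 2028 consumption ranges quoted above imply a compound annual growth rate, which is easier to compare across forecasts than raw terawatt-hours. The sketch below is illustrative arithmetic only: the helper `cagr` and the pairing of range endpoints are assumptions made here, not a method taken from the cited source.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` years."""
    return (end / start) ** (1.0 / years) - 1.0


# Ranges quoted in the takeaways, in terawatt-hours (low, high).
AI_TWH_2024 = (53.0, 76.0)
AI_TWH_2028 = (165.0, 326.0)
YEARS = 2028 - 2024

# Pair like with like, then bracket the extremes by crossing the endpoints.
low_to_low = cagr(AI_TWH_2024[0], AI_TWH_2028[0], YEARS)
high_to_high = cagr(AI_TWH_2024[1], AI_TWH_2028[1], YEARS)
slowest = cagr(AI_TWH_2024[1], AI_TWH_2028[0], YEARS)
fastest = cagr(AI_TWH_2024[0], AI_TWH_2028[1], YEARS)

for label, rate in [
    ("low to low", low_to_low),
    ("high to high", high_to_high),
    ("slowest case", slowest),
    ("fastest case", fastest),
]:
    print(f"{label}: {rate:.1%} per year")
```

Depending on which ends of the ranges are paired, the implied growth falls roughly between 20% and 60% per year, which shows how wide the uncertainty in these projections is.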

🛠️ Technical Deep Dive

- Large AI data center sites consume over 1 gigawatt of continuous load, equivalent to powering 850,000 homes[1]

- A single ChatGPT query consumes approximately 10 times more electricity than a Google search[2]

- Generative AI training clusters consume 7-8 times more energy than typical computing workloads[2]

- GPUs (primarily Nvidia-supplied) are the energy-intensive core technology driving AI data center power consumption, with energy demand on an upward trajectory through 2030[3]

- Training GPT-3 required approximately 1,287 megawatt-hours and generated about 552 tons of CO₂[2]

- AI-specific servers used an estimated 53-76 terawatt-hours in 2024, with projections of 165-326 terawatt-hours by 2028[2]
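Two of the figures above can be sanity-checked with simple unit conversions. The sketch below works out the per-home consumption implied by the 1 gigawatt and 850,000 homes comparison, and the carbon intensity implied by the GPT-3 numbers. It assumes the load runs continuously for a full year and that "tons" means metric tons; both function names are hypothetical helpers introduced for illustration.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def implied_kwh_per_home_per_year(load_gw: float, homes: int) -> float:
    """Annual kWh per home if `load_gw` of continuous load is spread over `homes`."""
    annual_kwh = load_gw * 1e6 * HOURS_PER_YEAR  # GW -> kW, then kW * hours
    return annual_kwh / homes


def implied_intensity_g_per_kwh(tons_co2: float, energy_mwh: float) -> float:
    """Grams of CO2 per kWh, assuming metric tons (1 t = 1e6 g, 1 MWh = 1e3 kWh)."""
    return (tons_co2 * 1e6) / (energy_mwh * 1e3)


per_home = implied_kwh_per_home_per_year(load_gw=1.0, homes=850_000)
intensity = implied_intensity_g_per_kwh(tons_co2=552.0, energy_mwh=1287.0)

print(f"Implied household use: {per_home:,.0f} kWh per year")
print(f"Implied carbon intensity: {intensity:.0f} g CO2 per kWh")
```

If the implied values look implausible for a given region, the likely cause is one of the stated assumptions (continuous load, metric tons) rather than the underlying figures.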

🔮 Future Implications

AI analysis grounded in cited sources

Utility companies will transition from commodity suppliers to strategic infrastructure partners, commanding premium pricing and long-term contracts with tech giants.

The unprecedented scale and speed of AI data center deployment (from an empty field to city-scale electricity consumption in two years) creates supply constraints that shift negotiating power from tech companies to utilities[4].

Power infrastructure bottlenecks will become a primary constraint on AI expansion, potentially limiting the profitability of the $600+ billion annual AI investment wave.

Severe constraints including turbine shortages, slow grid expansion, and regulatory delays are already creating 'nasty road bumps' for hyperscaler deployment plans[1].

Regional disparities in grid capacity will create geographic winners and losers in AI infrastructure development, with Virginia and Texas consolidating dominance.

Virginia hosts 663 operating data centers with 600 more planned, and Texas ranks second; utilities in these regions will capture disproportionate value from AI expansion[4].

⏳ Timeline

2021

AI and machine learning accounted for less than 0.2% of global electricity use and less than 0.1% of global emissions

2022

Global data center electricity consumption reached 460 terawatt-hours

2023

U.S. data centers consumed 4.4% of national electricity (176 TWh), up from 1.9% in 2018; AI investment accelerated following ChatGPT's public debut in late 2022

2024

AI-specific servers consumed an estimated 53-76 terawatt-hours; tech giants' capital expenditures reached record highs (Amazon $85.8B, Google $52.5B, Microsoft $44.5B, Meta $39.2B)

2025

U.S. power consumption hit a second consecutive record high at 4,195 terawatt-hours; data center grid demand rose 22%; electricity prices nationwide increased 7% year-over-year

2026-02

Grid operator PJM unveiled a framework requiring large data center users to develop an independent power supply or accept controlled load reduction; data center grid demand projected to reach nearly 76 gigawatts

📎 Sources (6)

Factual claims are grounded in the sources below. Forward-looking analysis is AI-generated interpretation.

- [1] energynow.com: US AI Boom Faces Electric Shock

- [2] research.aimultiple.com: AI Energy Consumption

- [3] energypolicy.columbia.edu: Projecting the Electricity Demand Growth of Generative AI Large Language Models in the US

- [4] atmos.earth: AI's Energy Reckoning Has Arrived

- [5] opteraclimate.com: 2026 Predictions: How AI Will Impact Energy Use and Climate Work

- [6] belfercenter.org: AI Data Centers US Electric Grid


Original source: 钛媒体 (TMTPost)