💰 钛媒体 (TMTPost)

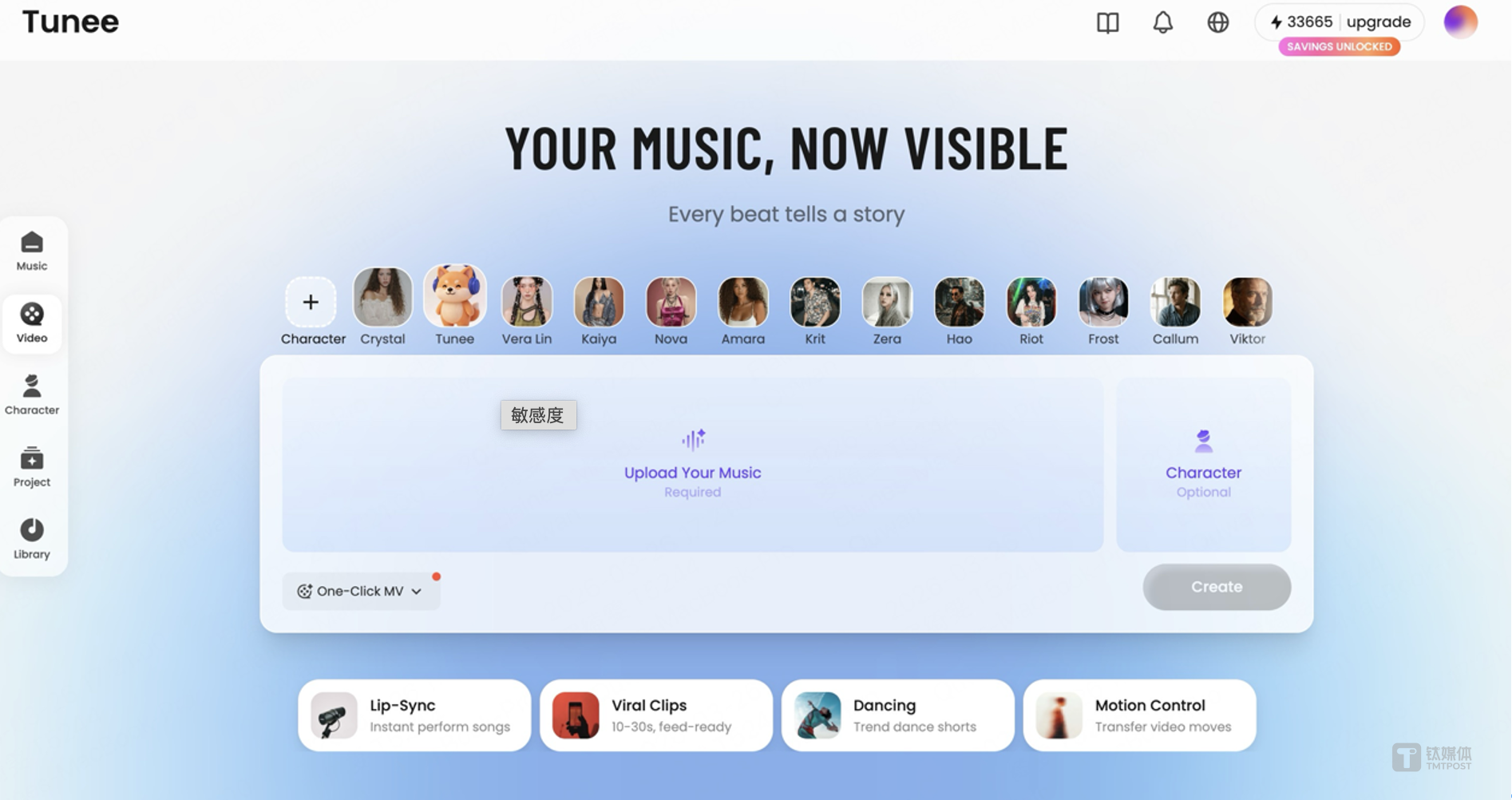

Tunee Launches Editable MV Agent

💡 Editable AI music video (MV) workflows give creators full control, an advantage over one-shot generators.

⚡ 30-Second TL;DR

What Changed

MV Agent launched on Tunee platform

Why It Matters

Gives creators iterative control over AI music videos, which should improve quality and customization in video production. It could set a new standard for editable generative AI tools.

What To Do Next

Test Tunee's MV Agent with OpenClaw integration for editable music video workflows.

Who should care: Creators & Designers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- Tunee's parent company, Qfun Tech, has positioned the MV Agent as a B2B-focused solution aimed at streamlining professional video production pipelines rather than only consumer-grade content creation.

- The integration of the OpenClaw model specifically addresses temporal consistency issues in long-form video generation, a common failure point in earlier generation-only AI tools.

- The platform uses a modular, node-based architecture that lets users export intermediate assets (such as character sheets or script segments) to external creative software like Adobe Premiere or Blender.

📊 Competitor Analysis

| Feature | Tunee MV Agent | Runway Gen-3 Alpha | Luma Dream Machine |
|---|---|---|---|
| Workflow Control | Fully Editable Nodes | Limited/Prompt-based | Prompt-based |
| Primary Focus | Professional Pipeline | Creative/Artistic | Social/Viral Content |
| Model Integration | OpenClaw (Exclusive) | Proprietary | Proprietary |
| Pricing Model | Enterprise/Subscription | Tiered Subscription | Freemium/Credits |

🛠️ Technical Deep Dive

- Architecture: Uses a multi-stage diffusion pipeline in which each stage (scripting, storyboarding, character consistency, motion) is decoupled and independently tunable.

- OpenClaw Integration: Employs a proprietary 'Claw-Attention' mechanism that enforces character and style consistency across disparate video frames by anchoring latent vectors to a master reference asset.

- Data Pipeline: Supports high-fidelity asset ingestion, allowing users to upload custom LoRAs or character reference sheets to override default model weights during generation.

- API Capabilities: Provides RESTful API access for enterprise integration, enabling programmatic control over the storyboard-to-video rendering pipeline.
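To make the "decoupled and independently tunable" idea concrete, here is a minimal Python sketch of an editable staged pipeline. It is an illustration only, not Tunee's implementation or API: `Stage`, `EditablePipeline`, `build_demo_pipeline`, the stage functions, and every parameter name are hypothetical, and the stages return placeholder strings rather than calling any model. The point it shows is that each stage caches its output, so editing one stage re-runs only that stage and those downstream of it.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Stage:
    """One independently tunable step (hypothetical, for illustration)."""
    name: str
    run: Callable[[dict, dict], dict]  # (upstream_output, params) -> output
    params: dict = field(default_factory=dict)


class EditablePipeline:
    """Runs stages in order and caches each stage's output for later edits."""

    def __init__(self, stages: List[Stage]):
        self.stages = stages
        self.outputs: Dict[str, dict] = {}  # cached result per stage name
        self.run_log: List[str] = []        # records which stages executed

    def run_from(self, start: Optional[str] = None) -> dict:
        names = [s.name for s in self.stages]
        first = names.index(start) if start else 0
        # Resume from the cached upstream output; this requires that the
        # earlier stages have already been run at least once.
        data = self.outputs[names[first - 1]] if first > 0 else {}
        for stage in self.stages[first:]:
            data = stage.run(data, stage.params)
            self.outputs[stage.name] = data
            self.run_log.append(stage.name)
        return data

    def edit(self, name: str, **params) -> dict:
        """Change one stage's parameters, then re-run it and what follows."""
        stage = next(s for s in self.stages if s.name == name)
        stage.params.update(params)
        return self.run_from(name)


# Placeholder stage functions: they only build strings, no model calls.
def script(_, p):
    return {"lines": [f"{p['theme']} verse", f"{p['theme']} chorus"]}


def storyboard(up, p):
    return {**up, "shots": [f"{p['framing']}: {line}" for line in up["lines"]]}


def character(up, p):
    return {**up, "character": p["reference"]}


def motion(up, p):
    clips = [f"{shot} [{up['character']}, {p['speed']}]" for shot in up["shots"]]
    return {**up, "clips": clips}


def build_demo_pipeline() -> EditablePipeline:
    return EditablePipeline([
        Stage("script", script, {"theme": "neon city"}),
        Stage("storyboard", storyboard, {"framing": "wide"}),
        Stage("character", character, {"reference": "hero_sheet.png"}),
        Stage("motion", motion, {"speed": "normal"}),
    ])
```

As a usage example, calling `build_demo_pipeline().run_from()` executes all four stages once. A later `edit("motion", speed="slow")` re-runs only the motion stage from the cached character output, while `edit("storyboard", framing="close-up")` re-runs storyboard, character, and motion but leaves the script untouched. A one-shot generator, by contrast, would have to regenerate everything for either change.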

🔮 Future Implications

AI analysis grounded in cited sources

Tunee will shift the AI video market toward 'modular' rather than 'monolithic' generation models.

The success of editable workflows forces competitors to move away from 'black-box' generation to maintain relevance with professional studios.

Qfun Tech will likely license the OpenClaw model to third-party creative software vendors by Q4 2026.

The modular nature of the agent suggests a strategy to become the underlying engine for existing professional video editing suites.

⏳ Timeline

2024-05

Qfun Tech secures Series A funding to develop generative video infrastructure.

2025-02

Initial beta release of the Tunee platform focusing on basic text-to-video capabilities.

2025-11

Qfun Tech announces the development of the OpenClaw model for improved temporal consistency.

2026-03

Official launch of Tunee MV Agent with integrated OpenClaw workflow.



Original source: 钛媒体 (TMTPost)