Silicon Valley Coders Feed Chinese LLMs

💡 US devs pivot to powering China's LLM surge, creating new revenue streams for compute owners

⚡ 30-Second TL;DR

What Changed

Silicon Valley developers are intensively 'feeding' Chinese LLMs with data and compute.

Why It Matters

This accelerates Chinese AI progress by drawing on global compute and heightens the US-China tech rivalry. It also opens opportunities for developers to monetize idle resources.

What To Do Next

Benchmark your idle GPUs for compatibility with the training pipelines of Chinese LLMs such as Qwen.
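A first compatibility check is simply whether a model's weights (and, for training, its optimizer state) fit in your GPU's memory. The sketch below is a back-of-the-envelope estimate under stated assumptions: the 7B parameter count and 24 GB card are hypothetical inputs, the ~16 bytes per parameter for mixed-precision Adam is a common rule of thumb, the 80% headroom is an arbitrary margin, and activations, KV cache and framework overhead are ignored. It does not query real hardware or use any Qwen tooling.

```python
# Hypothetical helper: rough VRAM check for running or fully fine-tuning a model.
# Assumptions: inference needs weights only; mixed-precision Adam training needs
# ~16 bytes/param. Activations, KV cache and framework overhead are ignored.

BYTES_PER_PARAM = {
    "fp32": 4,
    "bf16": 2,
    "fp16": 2,
    "int8": 1,
    "int4": 0.5,
}

# 2 (half-precision weights) + 2 (grads) + 4 (fp32 master weights) + 4 + 4 (Adam moments)
ADAM_MIXED_PRECISION_BYTES = 16


def inference_gb(num_params: float, dtype: str = "bf16") -> float:
    """Approximate memory in GB (1e9 bytes) for the model weights alone."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def full_finetune_gb(num_params: float) -> float:
    """Approximate memory in GB for weights, gradients and Adam states."""
    return num_params * ADAM_MIXED_PRECISION_BYTES / 1e9


def fits(gpu_gb: float, needed_gb: float, headroom: float = 0.8) -> bool:
    """True if the estimate fits in the usable share of VRAM (assumed 80%)."""
    return needed_gb <= gpu_gb * headroom


if __name__ == "__main__":
    params = 7e9  # hypothetical 7B-parameter model
    gpu = 24.0    # hypothetical 24 GB card
    for dtype in ("bf16", "int8", "int4"):
        need = inference_gb(params, dtype)
        print(f"inference {dtype}: {need:.1f} GB, fits={fits(gpu, need)}")
    need = full_finetune_gb(params)
    print(f"full fine-tune: {need:.1f} GB, fits={fits(gpu, need)}")
```

With these inputs, bf16 inference needs about 14 GB and fits, while full fine-tuning needs about 112 GB and does not fit on a single 24 GB card under these assumptions.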

🧠 Deep Insight

Web-grounded analysis with 3 cited sources.

🔑 Enhanced Key Takeaways

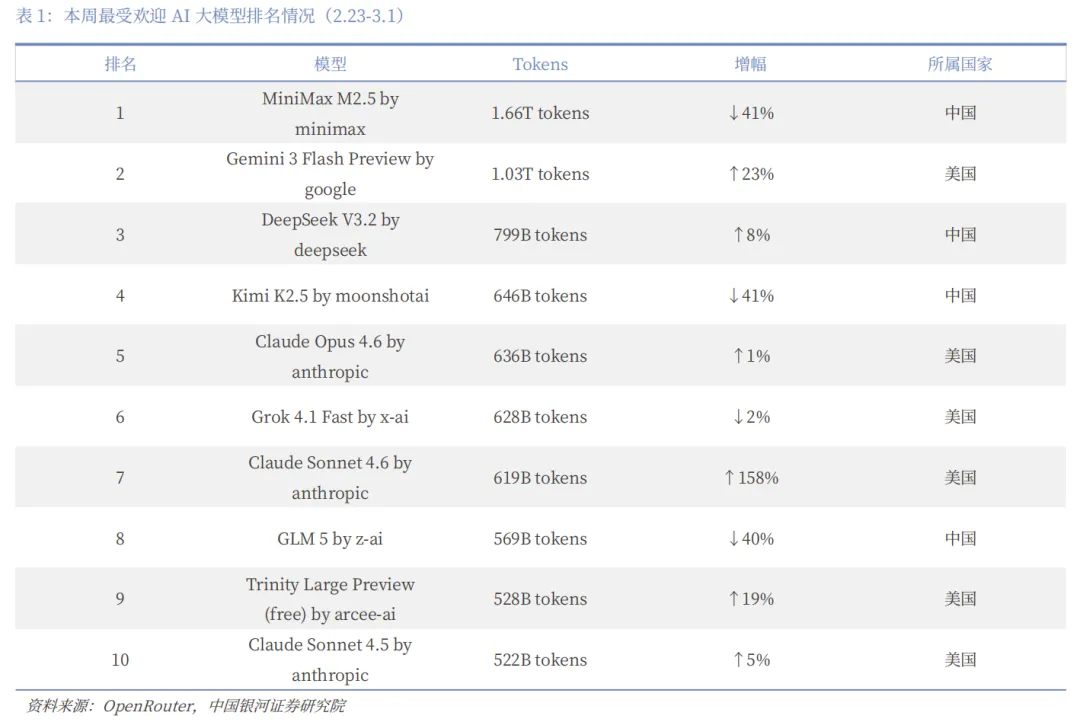

- DeepSeek-R1, a Chinese LLM, overtook ChatGPT in App Store downloads within a week of its January 2025 launch, hitting US tech stocks including Nvidia.[1]

- DeepSeek combines chain-of-thought reasoning with distillation into smaller open models such as Llama and Qwen, attaining high performance at significantly lower training and production costs.[1]

- SpikingBrain, a Chinese LLM, mimics the spiking behavior of biological neurons to improve power efficiency and speed up responses on long tasks, and could usher in a new generation of LLMs.[1]
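To make "distillation" concrete, here is a minimal sketch of the classic soft-target loss from Hinton et al. (2015): a small student is trained to match a larger teacher's temperature-softened output distribution. This is a generic illustration, not DeepSeek's procedure; DeepSeek's R1 report describes fine-tuning smaller Qwen and Llama students on R1-generated samples rather than matching logits. The function names, the temperature of 2.0 and the example logits are hypothetical, and only the soft-target term is shown (no hard-label loss).

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; higher temperature gives a softer distribution."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p, q):
    """KL(p || q) for discrete distributions; terms with p_i == 0 contribute 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """Generic soft-target loss (hypothetical helper, not DeepSeek's method):
    KL(teacher || student) at temperature T, scaled by T^2 as in Hinton et al."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    return (temperature ** 2) * kl_divergence(p_teacher, p_student)


if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]          # hypothetical teacher logits
    close_student = [3.5, 1.2, 0.4]    # student that roughly agrees
    far_student = [0.5, 1.0, 4.0]      # student that prefers the wrong class
    print(distillation_loss(close_student, teacher))
    print(distillation_loss(far_student, teacher))
```

The student whose logits already resemble the teacher's gets a much smaller loss, so minimizing this term pulls the student toward the teacher's full output distribution, not just its top answer.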

🛠️ Technical Deep Dive

- DeepSeek-R1 uses chain-of-thought reasoning and distillation, transferring its capabilities into open models such as Llama and Qwen to reduce training resources while matching or exceeding competitor performance.[1]

- SpikingBrain implements brain-inspired spiking neural networks, in which neurons fire discrete spikes rather than producing continuous activations, enabling power savings and faster execution on long sequences.[1]
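The spiking idea can be illustrated with a textbook leaky integrate-and-fire (LIF) neuron: its membrane potential leaks each step, accumulates input, and emits a discrete spike only when it crosses a threshold. This is a sketch under assumptions, not SpikingBrain's architecture, which the source does not detail; the threshold, decay, reset value and helper names are hypothetical. The second function hints at where power savings can come from: downstream work is done only for time steps that actually spiked.

```python
def simulate_lif(inputs, threshold=1.0, decay=0.9, reset=0.0):
    """Textbook LIF neuron (hypothetical parameters): leak, integrate input,
    emit a binary spike (1) on crossing the threshold, then reset."""
    v = reset
    spikes = []
    for current in inputs:
        v = decay * v + current  # leak the old potential, then integrate input
        if v >= threshold:
            spikes.append(1)
            v = reset  # fire and reset the membrane potential
        else:
            spikes.append(0)
    return spikes


def event_driven_sum(spikes, weights):
    """Accumulate a weight only where a spike occurred; with sparse spikes,
    most multiply-adds are skipped entirely."""
    return sum(w for s, w in zip(spikes, weights) if s)


if __name__ == "__main__":
    spikes = simulate_lif([0.3] * 10)
    print(spikes)  # → [0, 0, 0, 1, 0, 0, 0, 1, 0, 0]
    print(event_driven_sum(spikes, list(range(10))))  # → 10
```

A constant input of 0.3 makes this neuron fire on every fourth step, so the event-driven accumulation touches only 2 of 10 time steps instead of all of them.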

🔮 Future Implications

AI analysis grounded in cited sources.

📎 Sources (3)

Factual claims are grounded in the sources below. Forward-looking analysis is AI-generated interpretation.

AI-curated news aggregator. All content rights belong to original publishers.

Original source: 钛媒体 (TMTPost)