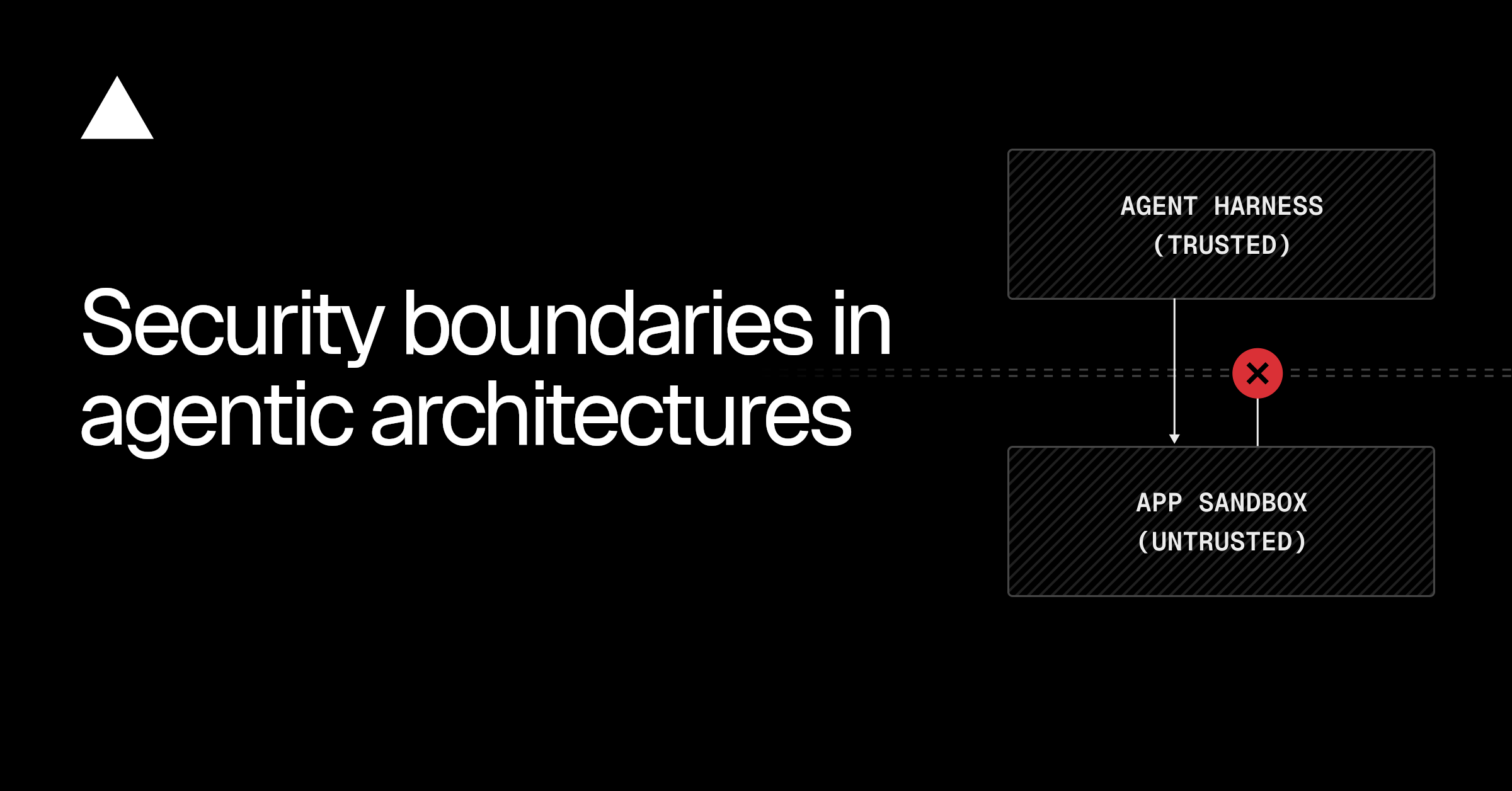

Security Boundaries in Agentic Architectures

💡 Secure your coding agents against prompt injection that exfiltrates secrets. This is essential for production deployment.

⚡ 30-Second TL;DR

What Changed

Coding agents gain their flexibility by reading the filesystem, running shell commands and Python, and generating and executing code.

Why It Matters

Code that an agent generates is untrusted, so teams deploying agents to production need to treat its execution as a separate trust boundary. Splitting the agent into isolated components reduces the risk that a prompt injection leads to leaked credentials or compromised infrastructure.

What To Do Next

Audit your agent setup to isolate generated code execution from credential access using separate sandboxes.
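One small part of that isolation can be sketched in Python: running generated code in a child process that inherits none of the parent's environment variables. This is a minimal illustration, not the approach described by Vercel. The function name `run_generated_code`, the allowlisted `PATH` value, and the `DEMO_API_KEY` variable are all hypothetical, and the sketch assumes a POSIX host. Scrubbing the environment does not restrict filesystem or network access, so a real deployment would still need a proper sandbox around the child process.

```python
import os
import subprocess
import sys
import tempfile


def run_generated_code(code: str, timeout_s: float = 10.0) -> subprocess.CompletedProcess:
    """Run untrusted Python in a child process that inherits no parent env vars."""
    # Hypothetical allowlist: only what the interpreter needs in order to start.
    clean_env = {"PATH": "/usr/bin:/bin"}
    with tempfile.TemporaryDirectory() as workdir:
        return subprocess.run(
            # -I runs Python in isolated mode (ignores PYTHON* vars and user site).
            [sys.executable, "-I", "-c", code],
            env=clean_env,        # replaces, rather than extends, the parent environment
            cwd=workdir,          # throwaway working directory, deleted afterwards
            capture_output=True,
            text=True,
            timeout=timeout_s,    # raises subprocess.TimeoutExpired on overrun
        )


if __name__ == "__main__":
    # Simulate a credential held by the agent harness (placeholder value).
    os.environ["DEMO_API_KEY"] = "not-a-real-secret"
    result = run_generated_code("import os; print(os.environ.get('DEMO_API_KEY'))")
    print(result.stdout.strip())
```

The design choice here is to pass an explicit environment instead of filtering the inherited one, so a newly added secret is excluded by default.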

🧠 Deep Insight

Web-grounded analysis with 8 cited sources.

🔑 Enhanced Key Takeaways

- Vercel's Agent Trust Hub partnership with skills.sh introduces independent third-party risk verification for AI skills. Each skill receives a transparent safety classification (Safe, Low Risk, High Risk, or Critical Risk) backed by Gen Threat Labs threat intelligence, which extends security boundaries beyond individual agent architectures to ecosystem-level governance[1].

- Enterprise agentic systems face hard infrastructure constraints: Vercel's AI Gateway enforces a 5-minute timeout for autonomous agents and a 4.5 MB file limit. Long-running agents with extensive reasoning phases are therefore impractical without an architectural redesign or an alternative platform, such as TrueFoundry, that supports private VPC deployment[2].

- Security isolation in agentic architectures requires multi-layered trust models. Vercel Agent's Code Review feature uses secure sandboxes to validate AI-generated patches against real builds, tests, and linters before suggesting them, which shows that trust boundaries must span from LLM output through validation to infrastructure execution[4].

- The 2026 agentic support stack enforces security boundaries through orchestration layers: Plain's Workflow Engine applies confidence thresholds, SLA-based escalation, and human handoff protocols, so agents operate within defined trust zones and escalate to humans when a task exceeds their security or capability boundaries[7].
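The escalation idea in the last takeaway can be illustrated with a small routing function. This is a hedged sketch, not Plain's Workflow Engine API: `AgentResult`, `Route`, `route`, and the default thresholds (`min_confidence=0.8`, `sla_s=300.0`) are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import AbstractSet


class Route(Enum):
    AUTO = "auto"    # agent may proceed on its own
    HUMAN = "human"  # hand off to a person


@dataclass(frozen=True)
class AgentResult:
    action: str        # what the agent wants to do
    confidence: float  # 0.0 to 1.0, as reported by the agent
    elapsed_s: float   # time spent on the task so far


def route(
    result: AgentResult,
    allowed_actions: AbstractSet[str],
    min_confidence: float = 0.8,  # hypothetical threshold
    sla_s: float = 300.0,         # hypothetical SLA in seconds
) -> Route:
    """Escalate whenever the agent leaves its trust zone; otherwise proceed."""
    if result.action not in allowed_actions:
        return Route.HUMAN  # outside the capability boundary
    if result.confidence < min_confidence:
        return Route.HUMAN  # below the confidence threshold
    if result.elapsed_s > sla_s:
        return Route.HUMAN  # SLA exceeded
    return Route.AUTO


if __name__ == "__main__":
    print(route(AgentResult("reply", 0.95, 12.0), {"reply"}))
    print(route(AgentResult("refund", 0.99, 12.0), {"reply"}))
```

Every check defaults to human handoff, so an action that is not explicitly allowlisted never runs automatically.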

📊 Competitor Analysis

| Feature | Vercel | Netlify | TrueFoundry |
|---|---|---|---|
| Agent Support | Agent suite (Code Review, Investigation) | Agent Runners, token management | Custom agent deployment |
| Execution Timeout | 5 minutes (hard limit) | Not specified | Flexible, VPC-native |
| Security Model | Multi-tenant edge, SOC 2 Type 2 | Multi-tenant, SOC 2 Type 2 | Single-tenant VPC isolation |
| Private Networking | Limited (public edge networks) | Limited | AWS PrivateLink, GCP, Azure VPC |
| Data Residency | Multi-tenant SaaS | Multi-tenant SaaS | Customer-controlled VPC |
| AI Development Credits | Not mentioned | Included in all plans | Custom pricing |
| Next.js Integration | Deep streaming support | Limited | Framework-agnostic |
| Vendor Lock-in Risk | High (proprietary runtime) | Moderate (plugin system) | Low (standard cloud APIs) |

🛠️ Technical Deep Dive

- Vercel Agent Code Review Architecture: Multi-step reasoning pipeline that analyzes pull requests for security vulnerabilities, logic errors, and performance issues; generates patches; executes patches in secure sandboxes with real builds, tests, and linters; only suggests fixes that pass validation checks[4].

- Vercel Agent Investigation: Queries logs and metrics around alert timestamps, applies pattern-matching and correlation analysis to identify root causes, surfaces insights without manual log review[4].

- Gen Agent Trust Hub Risk Modeling: Analyzes skill permissions, behavioral patterns, known vulnerabilities, and malicious intent indicators; assigns risk classifications (Safe/Low/High/Critical) via threat intelligence from Gen Threat Labs[1].

- Edge Function Constraints: Edge functions impose a strict limit on the time between receiving a request and sending the first byte of the response; agents that reason at length before streaming any output have their connection cut at the proxy layer[2].

- Vercel Agent Privacy: Does not store or train on customer data; uses only LLM providers on Vercel's subprocessor list with contractual restrictions on training data usage[4].
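The validate-before-suggest pattern described for Code Review can be sketched as a simple gate: a patch is only surfaced if every check command exits successfully. This is an illustrative sketch under stated assumptions, not Vercel's implementation. The function name `passes_validation` is hypothetical, the check commands are supplied by the caller, and `workdir` is assumed to be an already isolated copy of the repository with the patch applied.

```python
import subprocess
import sys
import tempfile
from typing import Sequence


def passes_validation(
    workdir: str,
    checks: Sequence[Sequence[str]],
    timeout_s: float = 300.0,
) -> bool:
    """Return True only if every check command exits with status 0 in workdir."""
    for cmd in checks:
        try:
            completed = subprocess.run(
                list(cmd),
                cwd=workdir,
                capture_output=True,
                timeout=timeout_s,
            )
        except (subprocess.TimeoutExpired, OSError):
            # A hung or missing tool counts as a failed check, never a pass.
            return False
        if completed.returncode != 0:
            return False
    return True


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as sandbox_dir:
        # Stand-in commands; real checks would be the project's build, tests, and linters.
        checks = [[sys.executable, "-c", "raise SystemExit(0)"]]
        print(passes_validation(sandbox_dir, checks))
```

The gate fails closed: timeouts and missing tools are treated the same as a failing test, so a broken validation environment cannot cause an unvalidated patch to be suggested.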

📎 Sources (8)

Factual claims are grounded in the sources below. Forward-looking analysis is AI-generated interpretation.

- [1] gendigital.com: Agent Trust Hub Vercel

- [2] truefoundry.com: Vercel AI Review 2026 We Tested It So You Don't Have To

- [3] clarifai.com: Vercel vs Netlify

- [4] vercel.com: Agent

- [5] vercel.com: Security Boundaries in Agentic Architectures

- [6] supergok.com: Vercel Sandbox Secure Compute AI Agents

- [7] plain.com: Agentic Support Stack 2026

- [8] pub.towardsai.net: AI Agents Skills Hooks Tool Standards


Original source: Vercel News