☁️AWS Machine Learning Blog•Stalecollected in 16m

SageMaker JumpStart Adds Use-Case Deployments

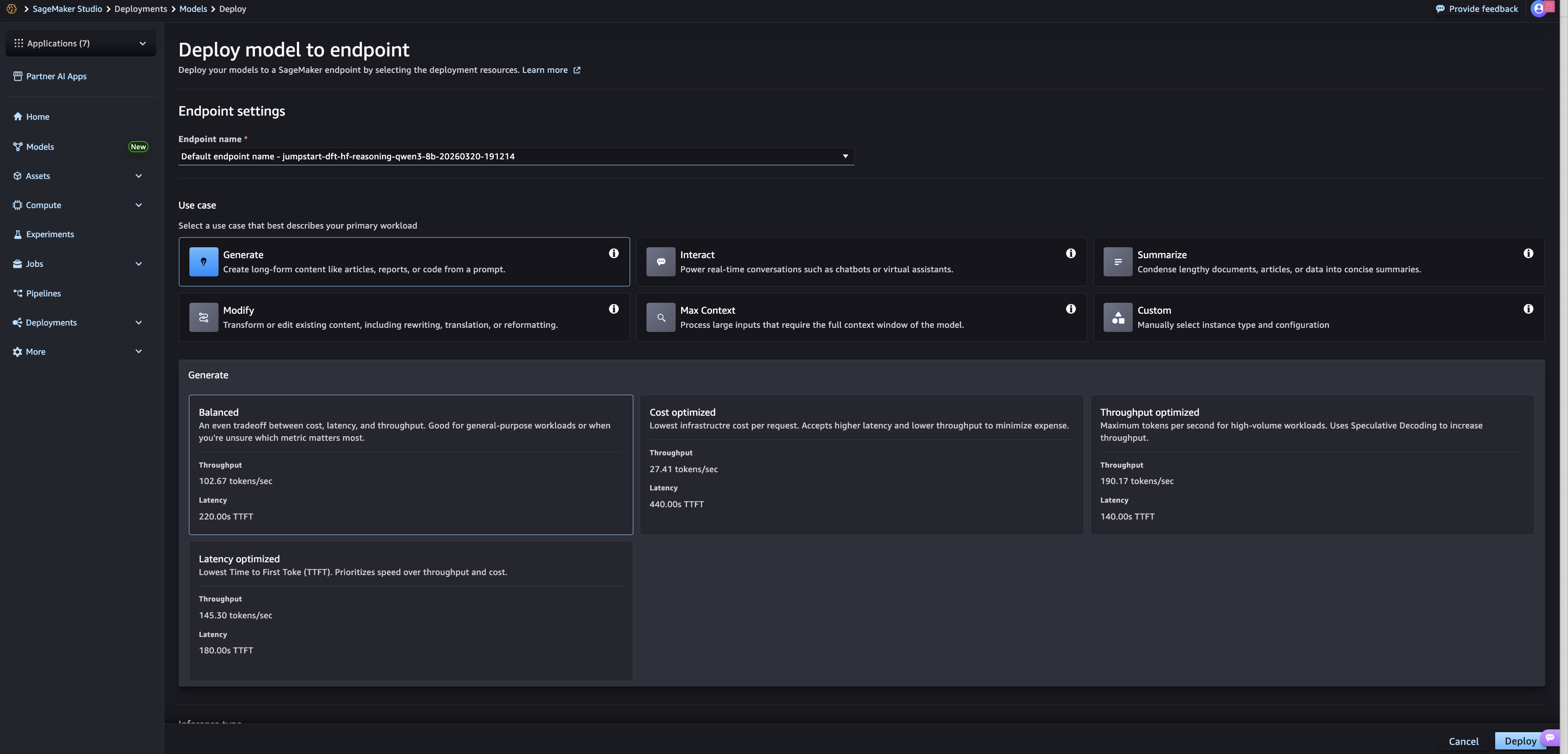

💡Pre-optimized JumpStart deployments for your use case—faster, tailored perf

⚡ 30-Second TL;DR

What Changed

Launch of optimized deployments

Why It Matters

Speeds up model deployment for practitioners, optimizing for performance and cost in real-world scenarios. Reduces setup time for common AI use cases.

What To Do Next

Test SageMaker JumpStart optimized deployments for your next model inference use case.

Who should care:Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- •The update integrates directly with AWS CloudFormation and Terraform, allowing infrastructure-as-code (IaC) teams to standardize model deployment patterns across enterprise environments.

- •Optimized deployments leverage automated instance-type recommendations based on the specific model architecture and latency requirements, reducing manual benchmarking efforts.

- •The feature introduces granular cost-tracking tags by default for each use-case deployment, enabling improved FinOps visibility for ML workloads.

📊 Competitor Analysis▸ Show

| Feature | AWS SageMaker JumpStart | Google Vertex AI Model Garden | Azure Machine Learning Model Catalog |

|---|---|---|---|

| Deployment Optimization | Use-case specific pre-defined configs | Pre-built containers & pipelines | Managed endpoints with environment presets |

| Pricing Model | Pay-per-use (compute/storage) | Pay-per-use (compute/storage) | Pay-per-use (compute/storage) |

| Benchmarking | Integrated instance recommendations | Integrated performance metrics | Integrated performance metrics |

🛠️ Technical Deep Dive

- •Utilizes pre-configured CloudFormation templates that encapsulate VPC networking, IAM roles, and Auto Scaling policies tailored to specific model types (e.g., LLMs vs. Computer Vision).

- •Implements automated 'warm-up' scripts within the deployment lifecycle to ensure model artifacts are loaded into memory before traffic routing begins.

- •Supports native integration with SageMaker Inference Recommender to dynamically adjust instance sizing based on real-time throughput and latency telemetry.

- •Enforces standardized security guardrails by automatically applying AWS KMS encryption keys and VPC endpoint policies during the deployment configuration phase.

🔮 Future ImplicationsAI analysis grounded in cited sources

Enterprise ML adoption will shift toward 'configuration-as-code' patterns.

Standardizing deployment configurations reduces the operational overhead of managing bespoke ML infrastructure, accelerating time-to-production.

AWS will likely introduce automated cost-optimization triggers based on these deployment configs.

The addition of granular cost-tracking tags provides the telemetry necessary for automated rightsizing of inference endpoints.

⏳ Timeline

2020-12

Amazon SageMaker JumpStart launched to provide one-click access to pre-trained models.

2022-04

JumpStart expanded to include foundation models and generative AI capabilities.

2024-03

Introduction of SageMaker Inference Recommender integration for automated instance selection.

2026-04

Launch of optimized deployments with pre-defined use-case configurations.

📰

Weekly AI Recap

Read this week's curated digest of top AI events →

👉Related Updates

AI-curated news aggregator. All content rights belong to original publishers.

Original source: AWS Machine Learning Blog ↗