☁️ AWS Machine Learning Blog

SageMaker Adds Optimized GenAI Inference Recs

💡 Automate genAI inference configs to skip infra tuning and deploy faster!

⚡ 30-Second TL;DR

What Changed

SageMaker now provides optimized recommendations for generative AI inference.

Why It Matters

This feature reduces deployment time and costs for genAI models by automating infrastructure choices. AI teams can iterate faster on model improvements. It lowers the barrier for scaling inference in production.

What To Do Next

Test optimized inference recommendations on your next SageMaker model deployment.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

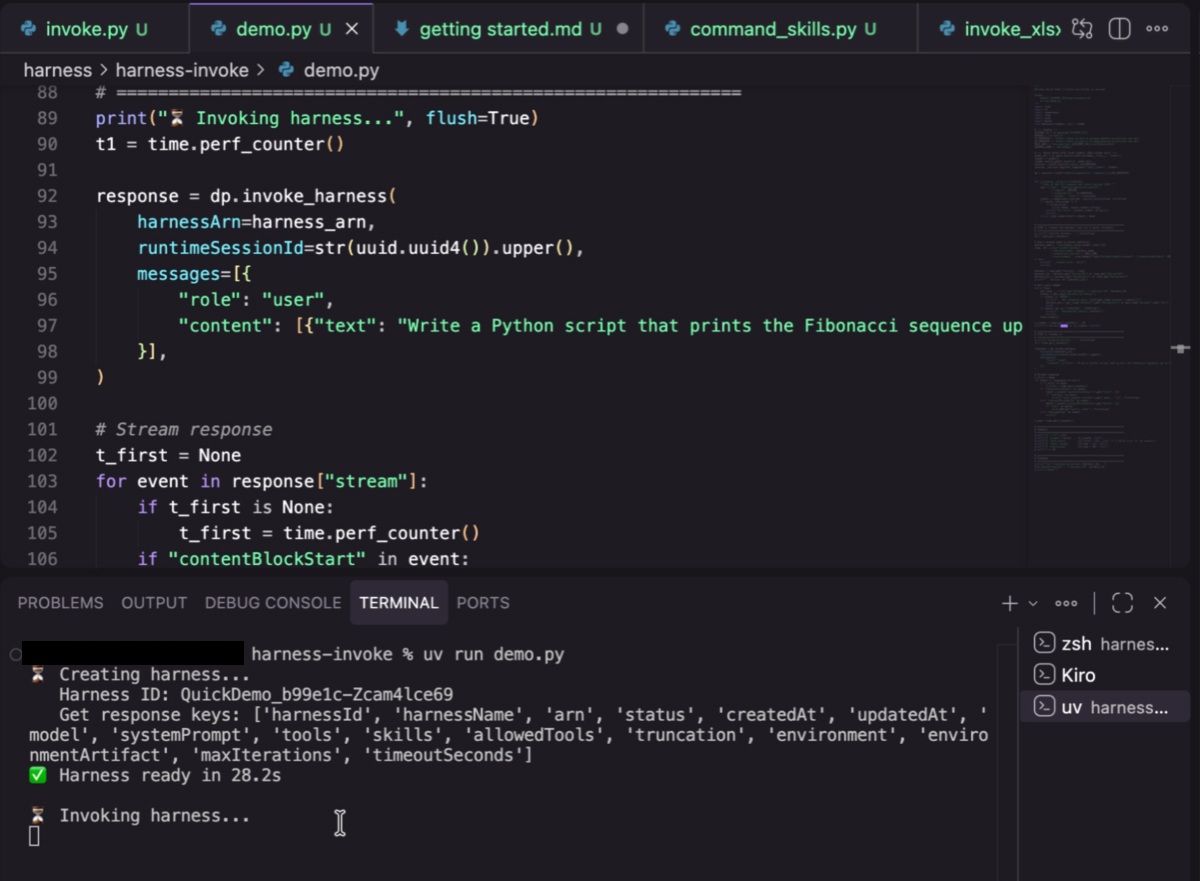

- The feature leverages SageMaker Inference Recommender to automatically profile model latency and throughput across various instance types, specifically targeting large language models (LLMs) and foundation models.

- It integrates with AWS Neuron SDK for optimized performance on AWS Trainium and Inferentia chips, reducing the manual effort required to tune model-specific compilation parameters.

- The service now includes automated cost-per-inference projections, allowing developers to simulate budget impacts before committing to specific production hardware configurations.
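To make the cost-per-inference idea concrete, here is a minimal sketch of the arithmetic behind such a projection. It is not the service's actual method: the `BenchmarkResult` type, the instance names, and the prices and throughput figures are all hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BenchmarkResult:
    """One profiled configuration (all values are illustrative placeholders)."""
    instance_type: str
    hourly_price_usd: float  # assumed hourly price, not a real AWS rate
    throughput_rps: float    # sustained requests per second under load


def cost_per_1k_inferences(result: BenchmarkResult) -> float:
    """Project the cost of 1,000 inferences at full, steady utilization."""
    if result.throughput_rps <= 0:
        raise ValueError("throughput_rps must be positive")
    inferences_per_hour = result.throughput_rps * 3600
    return result.hourly_price_usd / inferences_per_hour * 1000


# Hypothetical benchmark output for two made-up instance types.
results = [
    BenchmarkResult("instance-a", hourly_price_usd=3.60, throughput_rps=10.0),
    BenchmarkResult("instance-b", hourly_price_usd=7.20, throughput_rps=40.0),
]

for r in results:
    print(f"{r.instance_type}: ${cost_per_1k_inferences(r):.4f} per 1k inferences")
```

In this made-up data the pricier instance is cheaper per inference because its throughput is four times higher, which is the kind of trade-off a projection like this is meant to surface. Real projections would also need to account for idle time and autoscaling.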

📊 Competitor Analysis

| Feature | AWS SageMaker Inference Recs | Google Vertex AI Model Garden | Azure AI Model Catalog |
|---|---|---|---|
| Deployment Optimization | Automated instance/config profiling | Automated tuning via Vertex AI Pipelines | Managed endpoints with auto-scaling |
| Hardware Support | AWS Silicon (Trainium/Inferentia) & NVIDIA | TPU & NVIDIA | NVIDIA & Maia |
| Pricing Transparency | Real-time cost-per-inference estimates | Usage-based billing with cost monitoring | Consumption-based pricing |

🛠️ Technical Deep Dive

- Utilizes a load-testing engine that simulates real-world traffic patterns to generate P99 latency metrics.

- Supports automated quantization recommendations (e.g., FP8, INT8) based on the specific model architecture and hardware target.

- Provides integration with SageMaker Model Monitor to ensure that the recommended deployment configuration maintains performance SLAs post-deployment.

- Automates the selection of optimal container images and environment variables for high-throughput inference.
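For readers unfamiliar with the P99 metric mentioned above, the following sketch shows one common way to derive it from load-test samples, using the nearest-rank percentile definition. How the service itself computes the metric is not stated in the source; the function name and the sample latencies are assumptions made for illustration.

```python
import math
from typing import Sequence


def percentile_nearest_rank(samples: Sequence[float], pct: int) -> float:
    """Return the pct-th percentile of samples using the nearest-rank method."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range (0, 100]")
    ordered = sorted(samples)
    # Nearest rank: the smallest value with at least pct% of samples at or below it.
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# Hypothetical per-request latencies in milliseconds from a short load test.
latencies_ms = [120.0, 80.0, 95.0, 400.0, 101.0]
p99 = percentile_nearest_rank(latencies_ms, 99)
print(f"P99 latency: {p99} ms")
```

With only five samples the P99 equals the slowest request, which is why load tests need many requests before tail-latency figures become meaningful.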

🔮 Future Implications

AI analysis grounded in cited sources.

Infrastructure management will become a secondary task for ML engineers.

Automated configuration tools reduce the need for manual hardware tuning, shifting the focus toward model architecture and data quality.

Cloud providers will move toward 'intent-based' infrastructure provisioning.

By allowing users to specify performance requirements rather than hardware specs, AWS is abstracting the underlying infrastructure layer.
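The 'intent-based' idea can be sketched as a selection over benchmarked candidates: the user states latency and throughput requirements, and the cheapest configuration that satisfies them is chosen. This is an illustrative sketch only, not the AWS implementation; `Candidate`, `pick_config`, and all figures below are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Candidate:
    """A benchmarked deployment option (values are made up for illustration)."""
    name: str
    p99_latency_ms: float
    throughput_rps: float
    hourly_price_usd: float


def pick_config(
    candidates: Sequence[Candidate],
    max_p99_ms: float,
    min_rps: float,
) -> Optional[Candidate]:
    """Return the cheapest candidate meeting both requirements, or None."""
    feasible = [
        c for c in candidates
        if c.p99_latency_ms <= max_p99_ms and c.throughput_rps >= min_rps
    ]
    return min(feasible, key=lambda c: c.hourly_price_usd, default=None)


candidates = [
    Candidate("small", p99_latency_ms=900.0, throughput_rps=5.0, hourly_price_usd=1.0),
    Candidate("medium", p99_latency_ms=400.0, throughput_rps=20.0, hourly_price_usd=3.0),
    Candidate("large", p99_latency_ms=150.0, throughput_rps=60.0, hourly_price_usd=8.0),
]

choice = pick_config(candidates, max_p99_ms=500.0, min_rps=10.0)
print(choice.name if choice else "no configuration meets the requirements")
```

Returning `None` when nothing qualifies keeps the "no feasible hardware" case explicit, so a caller can relax the requirements rather than silently deploy an unsuitable configuration.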

⏳ Timeline

2021-11

AWS launches SageMaker Inference Recommender to automate instance selection.

2023-04

SageMaker expands support for foundation models via JumpStart.

2024-06

AWS introduces enhanced support for Inferentia2 chips in SageMaker.

2026-04

SageMaker adds optimized generative AI inference recommendations.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: AWS Machine Learning Blog ↗