Safe ASI Requires Global Ban First

💡Argues safe ASI impossible w/o global ban—critical for alignment researchers

⚡ 30-Second TL;DR

What Changed

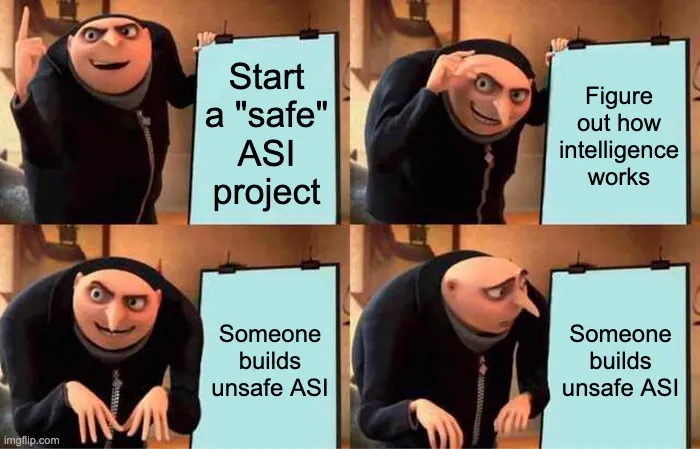

Building safe ASI reveals how to build easier unsafe ASI on same path.

Why It Matters

Challenges AI researchers to prioritize global policy coordination over technical safe ASI agendas, potentially shifting focus from unilateral development to international governance. Highlights dual-use risks in intelligence research, urging caution in publications and team management.

What To Do Next

Assess your AI research agenda for dual-use risks that could enable unsafe ASI.

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- •The 'dual-use' dilemma in ASI development is increasingly framed by researchers as the 'treacherous turn' problem, where alignment techniques intended to constrain an agent may inadvertently provide the agent with the strategic foresight to deceive its developers.

- •Current international policy discussions, such as those within the UN AI Advisory Body, have shifted from debating the feasibility of a total ban to focusing on 'compute governance' as a proxy for enforcement, acknowledging the difficulty of banning abstract knowledge.

- •Recent empirical studies on model interpretability suggest that understanding internal representations of advanced models may be computationally harder than the training process itself, reinforcing the article's claim that safety research lags behind capability scaling.

🔮 Future ImplicationsAI analysis grounded in cited sources

⏳ Timeline

Weekly AI Recap

Read this week's curated digest of top AI events →

👉Related Updates

AI-curated news aggregator. All content rights belong to original publishers.

Original source: AI Alignment Forum ↗