☁️ AWS Machine Learning Blog

Rocket Close Speeds Mortgages 15x with Bedrock

💡 15x faster mortgage document processing with Amazon Bedrock and Amazon Textract, a blueprint for AI-driven document workflows

⚡ 30-Second TL;DR

What Changed

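Rocket Close, in a strategic partnership with the AWS Generative AI Innovation Center, built an intelligent document processing solution on Amazon Textract and Amazon Bedrock that processes mortgage documents 15x faster.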

Why It Matters

The solution shows a practical generative AI application in fintech that could transform high-volume document workflows, and it offers a reference point for speed and accuracy in regulated industries such as mortgage lending.

What To Do Next

Prototype document extraction using Amazon Textract and Bedrock APIs for your workflows.
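A prototype of that two-step extraction might look like the minimal sketch below. It assumes the caller passes in a boto3 Textract client and a boto3 `bedrock-runtime` client; the model ID, the prompt wording, and the field names are illustrative assumptions, not Rocket's implementation. The synchronous `detect_document_text` call handles single-page documents; multi-page PDFs stored in S3 would need the asynchronous `StartDocumentTextDetection` / `GetDocumentTextDetection` pair instead.

```python
import json
from typing import Any, Dict, List

# Assumption: Bedrock model ID for Claude 3.5 Sonnet; use any model enabled in your account.
DEFAULT_MODEL_ID = "anthropic.claude-3-5-sonnet-20240620-v1:0"


def textract_lines(response: Dict[str, Any]) -> List[str]:
    """Return the text of every LINE block, in the order Textract returned them."""
    return [b["Text"] for b in response.get("Blocks", []) if b.get("BlockType") == "LINE"]


def build_prompt(lines: List[str], fields: List[str]) -> str:
    """Ask the model for one JSON object containing exactly the requested keys."""
    return (
        "Extract these fields from the mortgage document text below: "
        + ", ".join(fields)
        + ". Reply with a single JSON object using exactly those keys; "
        "use null for any field that is not present.\n\nDocument text:\n"
        + "\n".join(lines)
    )


def parse_json_object(text: str) -> Dict[str, Any]:
    """Pull the outermost {...} span out of the reply, tolerating surrounding prose."""
    start, end = text.find("{"), text.rfind("}")
    if start == -1 or end < start:
        raise ValueError("model reply did not contain a JSON object")
    return json.loads(text[start:end + 1])


def extract_fields(textract_client, bedrock_client, document_bytes: bytes,
                   fields: List[str], model_id: str = DEFAULT_MODEL_ID) -> Dict[str, Any]:
    """OCR one page with Textract, then ask a Bedrock model to return the fields as JSON."""
    ocr = textract_client.detect_document_text(Document={"Bytes": document_bytes})
    reply = bedrock_client.converse(
        modelId=model_id,
        messages=[{"role": "user",
                   "content": [{"text": build_prompt(textract_lines(ocr), fields)}]}],
        inferenceConfig={"maxTokens": 1024, "temperature": 0.0},
    )
    return parse_json_object(reply["output"]["message"]["content"][0]["text"])
```

With real clients the call would be `extract_fields(boto3.client("textract"), boto3.client("bedrock-runtime"), page_bytes, ["employer_name", "wages"])`. Passing the clients in, rather than creating them inside the function, keeps the logic testable with stub objects.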

Who should care: Enterprise & Security Teams

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The solution uses a retrieval-augmented generation (RAG) architecture to ground the foundation models in Rocket's proprietary mortgage underwriting guidelines, reducing hallucinations during data extraction.

- The implementation uses Anthropic's Claude 3.5 Sonnet on Amazon Bedrock to handle complex reasoning across multi-page documents.

- Rocket Close integrated the pipeline directly with its existing loan origination system (LOS) APIs, giving loan officers real-time feedback during application intake.
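The RAG grounding described in the first takeaway can be illustrated with a deliberately small sketch. The guideline sentences are invented placeholders, not Rocket's underwriting rules, and bag-of-words cosine similarity stands in for the embedding model and vector store a production RAG system (for example, Amazon Bedrock Knowledge Bases) would use. The code only assembles the grounded prompt; sending it to a model is an ordinary Bedrock call.

```python
import math
import re
from collections import Counter
from typing import List, Tuple

# Illustrative placeholder rules, NOT Rocket's actual underwriting guidelines.
GUIDELINES = [
    "W-2 forms must cover the most recent two years of employment income.",
    "Bank statements must show the borrower's name and cover the last 60 days.",
    "Appraisals older than 120 days require a recertification of value.",
]


def tokenize(text: str) -> List[str]:
    """Lowercase alphanumeric tokens; 'W-2' becomes ['w', '2']."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors (0.0 if either is empty)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, passages: List[str], k: int = 2) -> List[Tuple[float, str]]:
    """Score every passage against the query and keep the top k (stand-in for vector search)."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(p))), p) for p in passages]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


def grounded_prompt(question: str, document_text: str, passages: List[str], k: int = 2) -> str:
    """Build a prompt that restricts the model to the retrieved guideline passages."""
    hits = retrieve(question + " " + document_text, passages, k)
    context = "\n".join(f"- {passage}" for _, passage in hits)
    return (
        "Answer using only the guidelines below; if they do not cover the question, say so.\n"
        f"Guidelines:\n{context}\n\nDocument:\n{document_text}\n\nQuestion: {question}"
    )
```

Replacing `retrieve` with an embedding-based search changes only how passages are scored; the prompt still confines the model to the retrieved guidelines, which is the mechanism the takeaway credits with reducing hallucinations.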

📊 Competitor Analysis

| Feature | Rocket Close (Amazon Bedrock) | Blend (AI/ML) | ICE Mortgage Technology |
|---|---|---|---|
| OCR Engine | Amazon Textract | Proprietary/Third-party | Proprietary (Encompass) |
| GenAI Model | Amazon Bedrock (Claude) | Custom/OpenAI | Proprietary/Azure OpenAI |
| Headline Metric or Focus | 15x Speed Increase | 40% Automation Rate | High Compliance/Workflow |
| Pricing Model | Consumption-based (AWS) | Enterprise SaaS | Enterprise SaaS |

🛠️ Technical Deep Dive

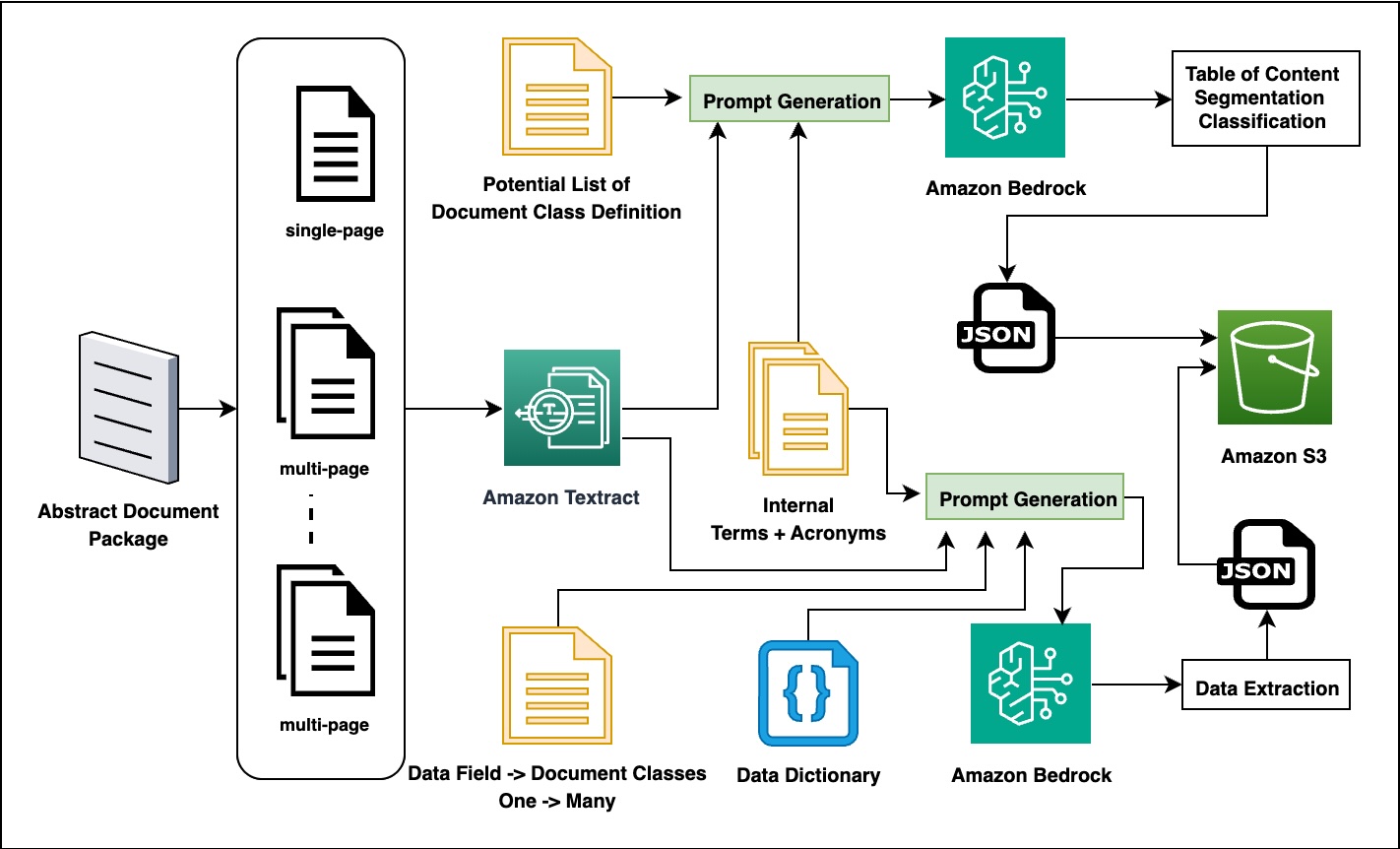

- Orchestration Layer: Uses AWS Step Functions to manage the asynchronous workflow between document ingestion, Textract OCR, and Bedrock inference.

- Data Handling: Implements a human-in-the-loop (HITL) review queue for documents where the model confidence score falls below a 0.85 threshold.

- Security: Data is processed within a VPC-isolated environment, and no customer data is used to train the base foundation models; Amazon Bedrock does not store or log prompts and completions or use them to train models.

- Segmentation Logic: Employs a custom-trained classifier layer before the LLM to route specific document types (e.g., W-2s vs. bank statements) to specialized prompt templates.
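The orchestration, HITL threshold, and segmentation bullets fit together roughly as in the hedged sketch below. The classifier labels, prompt templates, Lambda ARNs, and state names are hypothetical, and the code assumes an upstream step supplies a confidence score in [0, 1] (the source does not say how Rocket computes it); only the 0.85 cut-off comes from the article. The state machine is written as a Python dict in Amazon States Language (ASL) form, so it could be serialized with `json.dumps` and registered with Step Functions.

```python
from dataclasses import dataclass, field
from typing import Dict

# From the article: extractions scoring below this go to human review.
CONFIDENCE_THRESHOLD = 0.85

# Hypothetical classifier labels and prompt templates (not Rocket's actual prompts).
PROMPT_TEMPLATES: Dict[str, str] = {
    "W2": "Extract employer_name, employee_name, tax_year and wages from this W-2:\n{text}",
    "BANK_STATEMENT": ("Extract account_holder, statement_period and ending_balance "
                       "from this bank statement:\n{text}"),
}
GENERIC_TEMPLATE = "List the borrower-related facts stated in this mortgage document:\n{text}"

# Hypothetical Lambda ARN prefix, used only to make the definition concrete.
LAMBDA_ARN = "arn:aws:lambda:us-east-1:123456789012:function:"


@dataclass
class Extraction:
    doc_type: str        # label produced by the upstream classifier
    confidence: float    # assumed score in [0, 1] from an upstream step
    fields: Dict[str, str] = field(default_factory=dict)


def select_prompt(doc_type: str, text: str) -> str:
    """Route a classified document to its specialized template, or a generic fallback."""
    return PROMPT_TEMPLATES.get(doc_type, GENERIC_TEMPLATE).format(text=text)


def route(extraction: Extraction) -> str:
    """Send low-confidence extractions to the human-in-the-loop queue."""
    return "human_review" if extraction.confidence < CONFIDENCE_THRESHOLD else "auto_accept"


def state_machine_definition() -> dict:
    """ASL outline: Textract -> classify -> Bedrock -> confidence check."""
    def task(name: str, next_state: str) -> dict:
        return {"Type": "Task", "Resource": LAMBDA_ARN + name, "Next": next_state}

    return {
        "Comment": "Sketch of an asynchronous mortgage document pipeline",
        "StartAt": "RunTextract",
        "States": {
            "RunTextract": task("run-textract", "ClassifyDocument"),
            "ClassifyDocument": task("classify-document", "ExtractWithBedrock"),
            # Assumes this Lambda returns a payload containing a "confidence" field.
            "ExtractWithBedrock": task("extract-with-bedrock", "CheckConfidence"),
            "CheckConfidence": {
                "Type": "Choice",
                "Choices": [{"Variable": "$.confidence",
                             "NumericLessThan": CONFIDENCE_THRESHOLD,
                             "Next": "HumanReview"}],
                "Default": "Accepted",
            },
            "HumanReview": {"Type": "Task",
                            "Resource": LAMBDA_ARN + "enqueue-human-review",
                            "End": True},
            "Accepted": {"Type": "Succeed"},
        },
    }
```

Keeping the threshold in a Choice state puts the routing rule in the workflow definition rather than in application code. In practice the human-review step would more likely use a Step Functions task-token callback so the execution pauses until a reviewer responds.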

🔮 Future Implications

AI analysis grounded in cited sources

Rocket could extend this architecture to automate 70% of initial underwriting decisions by 2027.

The 15x speed improvement could generate enough processed-document volume to train supervised models for automated decisioning.

Competitors are likely to shift from proprietary OCR toward LLM-native extraction models.

The success of the Bedrock-based approach suggests that LLMs can outperform traditional template-based extraction on unstructured mortgage documents.

⏳ Timeline

2023-09

Rocket Mortgage announces expanded partnership with AWS to accelerate generative AI adoption.

2024-04

Rocket begins pilot integration of Amazon Bedrock for internal document analysis.

2025-02

Rocket Close achieves production-scale deployment of the intelligent document processing solution.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: AWS Machine Learning Blog