☁️AWS Machine Learning Blog•Stalecollected in 3m

Reserve GPU for SageMaker AI Inference

💡Lock in GPU capacity for SageMaker inference—no more hunting during peaks (saves time & costs).

⚡ 30-Second TL;DR

What Changed

Search for available p-family GPU capacity

Why It Matters

Provides predictable GPU access for production inference, cutting costs and deployment delays for AI teams scaling models. Enhances efficiency in high-demand environments.

What To Do Next

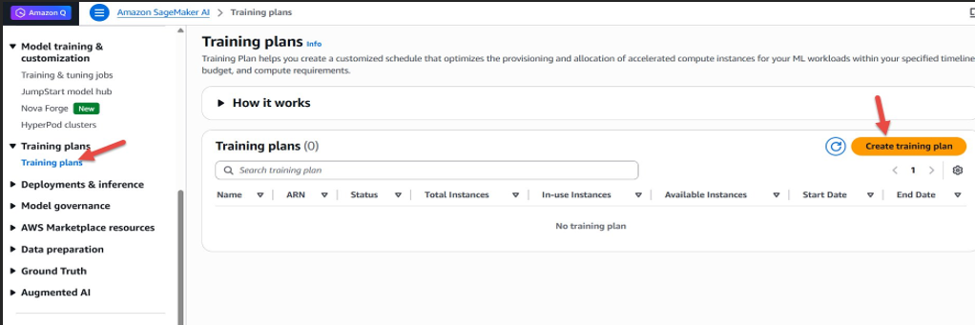

Search p-family GPU availability in SageMaker console and reserve via training plan for your inference endpoint.

Who should care:Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- •This capability leverages the existing AWS Training Plan infrastructure, originally designed for model training, to now provide guaranteed compute availability for inference workloads, effectively bridging the gap between training and deployment resource management.

- •The reservation model allows customers to commit to specific GPU instances (such as P4 or P5 families) for a fixed term, which helps mitigate the risk of 'capacity crunch' during high-demand periods or regional shortages.

- •By utilizing reserved capacity for inference, organizations can achieve more predictable cost structures compared to on-demand pricing, while ensuring that mission-critical endpoints remain operational without the risk of instance termination due to capacity constraints.

📊 Competitor Analysis▸ Show

| Feature | AWS SageMaker (Reserved Inference) | Google Cloud Vertex AI | Azure Machine Learning |

|---|---|---|---|

| Capacity Reservation | Training Plan-based GPU reservation | Reservations for GCE/TPU | Azure Dedicated Host/Capacity Reservation |

| Pricing Model | Term-based commitment | Committed Use Discounts (CUDs) | Capacity Reservation pricing |

| Inference Focus | Integrated with SageMaker Endpoints | Integrated with Vertex Endpoints | Integrated with Managed Endpoints |

🛠️ Technical Deep Dive

- Integration: The feature utilizes the SageMaker 'Training Plan' API to provision capacity, which is then mapped to SageMaker Inference Endpoints via the 'CapacityReservationSpecification' parameter.

- Instance Support: Primarily targets high-performance P-family instances (e.g., P4d, P4de, P5) which are optimized for large-scale LLM inference.

- Lifecycle Management: Reservations are managed via the AWS Management Console or AWS CLI, allowing users to associate specific endpoint configurations with a pre-purchased capacity block.

- Availability: The system performs a real-time check against regional capacity pools before allowing a reservation to be finalized, ensuring the requested GPU count is physically available.

🔮 Future ImplicationsAI analysis grounded in cited sources

AWS will expand reserved capacity to non-GPU instance families.

The success of the Training Plan model for inference suggests a broader strategy to standardize capacity management across all SageMaker compute types.

Automated capacity scaling will become the default for enterprise SageMaker deployments.

As infrastructure management becomes more complex, integrating reserved capacity with auto-scaling policies will reduce manual intervention for high-availability workloads.

⏳ Timeline

2017-11

Launch of Amazon SageMaker to simplify machine learning model building and deployment.

2022-11

AWS introduces SageMaker Training Plans to allow customers to reserve compute for training jobs.

2024-05

AWS announces general availability of P5 instances in SageMaker for high-performance training and inference.

2026-03

AWS extends Training Plan capacity reservations to support SageMaker AI inference endpoints.

📰

Weekly AI Recap

Read this week's curated digest of top AI events →

👉Related Updates

AI-curated news aggregator. All content rights belong to original publishers.

Original source: AWS Machine Learning Blog ↗