☁️AWS Machine Learning Blog•Stalecollected in 21m

Polly Bidirectional Streaming for TTS

💡New Polly API enables real-time TTS for LLMs – perfect for low-latency voice AI.

⚡ 30-Second TL;DR

What Changed

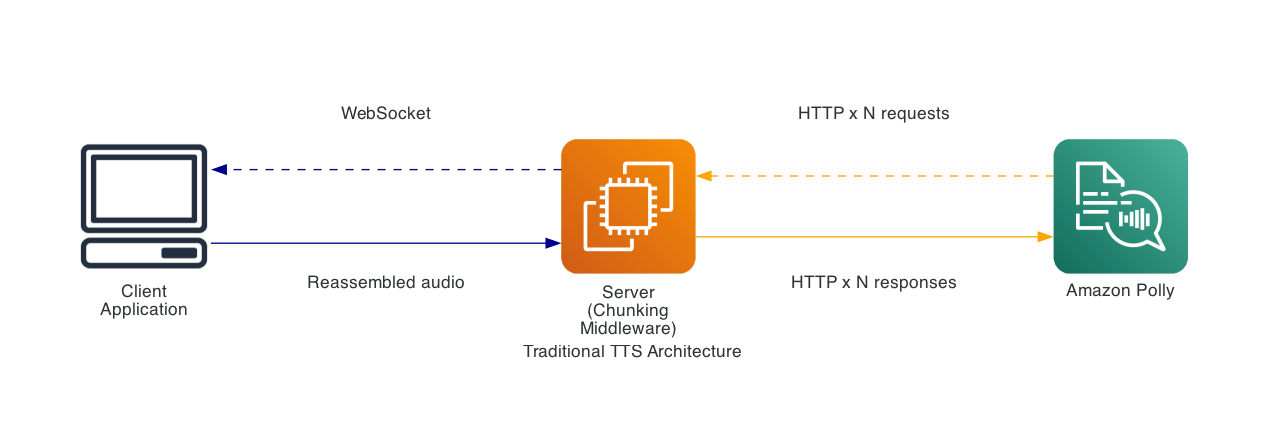

New Bidirectional Streaming API for Amazon Polly

Why It Matters

Revolutionizes voice AI apps by enabling true real-time synthesis, improving user experience in chatbots and virtual assistants. Reduces latency for dynamic conversations powered by LLMs.

What To Do Next

Test Amazon Polly's Bidirectional Streaming API for your LLM-powered voice chatbot.

Who should care:Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- •The API utilizes a gRPC-based interface to facilitate full-duplex communication, significantly reducing the time-to-first-byte (TTFB) compared to traditional REST-based request-response patterns.

- •It integrates natively with Amazon Bedrock, allowing developers to stream partial tokens directly from LLM inference calls into Polly without needing to buffer complete sentences or paragraphs.

- •The implementation includes advanced prosody management, enabling the engine to adjust speech cadence dynamically as additional context from the LLM becomes available during the streaming session.

📊 Competitor Analysis▸ Show

| Feature | Amazon Polly (Bidirectional) | Google Cloud TTS | ElevenLabs | OpenAI Realtime API |

|---|---|---|---|---|

| Streaming Mode | Full-Duplex gRPC | Server-Sent Events (SSE) | WebSocket | WebSocket |

| Latency | Ultra-low (Incremental) | Low (Chunked) | Low (Chunked) | Ultra-low (Native) |

| Integration | AWS/Bedrock Native | Google Cloud/Vertex AI | API-first | OpenAI Platform |

| Pricing | Pay-per-character | Pay-per-character | Subscription/Usage | Usage-based |

🛠️ Technical Deep Dive

- Protocol: Implements a gRPC bidirectional stream, allowing the client to send text chunks while simultaneously receiving audio frames over the same connection.

- Buffer Management: Employs a sliding window mechanism to handle partial text inputs, ensuring that prosody and intonation are maintained across chunk boundaries.

- Latency Optimization: Bypasses standard HTTP/1.1 overhead by maintaining a persistent connection, reducing handshake latency for conversational turns.

- Encoding: Supports real-time transcoding into multiple formats (e.g., PCM, Opus) directly within the stream to minimize post-processing requirements.

🔮 Future ImplicationsAI analysis grounded in cited sources

Voice-based AI agents will achieve sub-200ms response latency.

The elimination of sentence-level buffering allows audio synthesis to begin as soon as the first few tokens of an LLM response are generated.

Polly will become the primary TTS engine for AWS-hosted multi-modal agents.

Native integration with Bedrock and the new streaming API creates a seamless pipeline that is more efficient than third-party TTS integrations.

⏳ Timeline

2016-11

Amazon Polly is launched as a cloud-based text-to-speech service.

2018-11

Introduction of Neural Text-to-Speech (NTTS) for more human-like voice quality.

2023-09

Integration of Polly with Amazon Bedrock to support generative AI applications.

2026-03

Launch of the Bidirectional Streaming API for real-time conversational TTS.

📰

Weekly AI Recap

Read this week's curated digest of top AI events →

👉Related Updates

AI-curated news aggregator. All content rights belong to original publishers.

Original source: AWS Machine Learning Blog ↗