PFN Launches Japan's First From-Scratch Reasoning LLM

💡Japan's first from-scratch LLM with reasoning rivals Qwen-235B—test for sovereign AI alternatives

⚡ 30-Second TL;DR

What Changed

Fully from-scratch LLM built by Preferred Networks without using existing models

Why It Matters

This release boosts Japan's AI sovereignty with independent LLM tech, potentially accelerating domestic research and reducing reliance on foreign models. It challenges global leaders by demonstrating competitive reasoning at scale.

What To Do Next

Download PLaMo 3.0 Prime beta from PFN's repository and test its reasoning on complex math benchmarks.

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- •PLaMo 3.0 Prime utilizes a proprietary Mixture-of-Experts (MoE) architecture specifically optimized for Japanese-language tokenization efficiency, reducing inference latency by 30% compared to standard dense models.

- •The model was trained on the MN-Core cluster, Preferred Networks' custom supercomputing infrastructure, which allowed for a massive increase in training throughput compared to standard GPU-only clusters.

- •Preferred Networks has committed to an open-weights release strategy for the base model, aiming to foster a domestic Japanese AI ecosystem to reduce reliance on US and Chinese foundation models.

📊 Competitor Analysis▸ Show

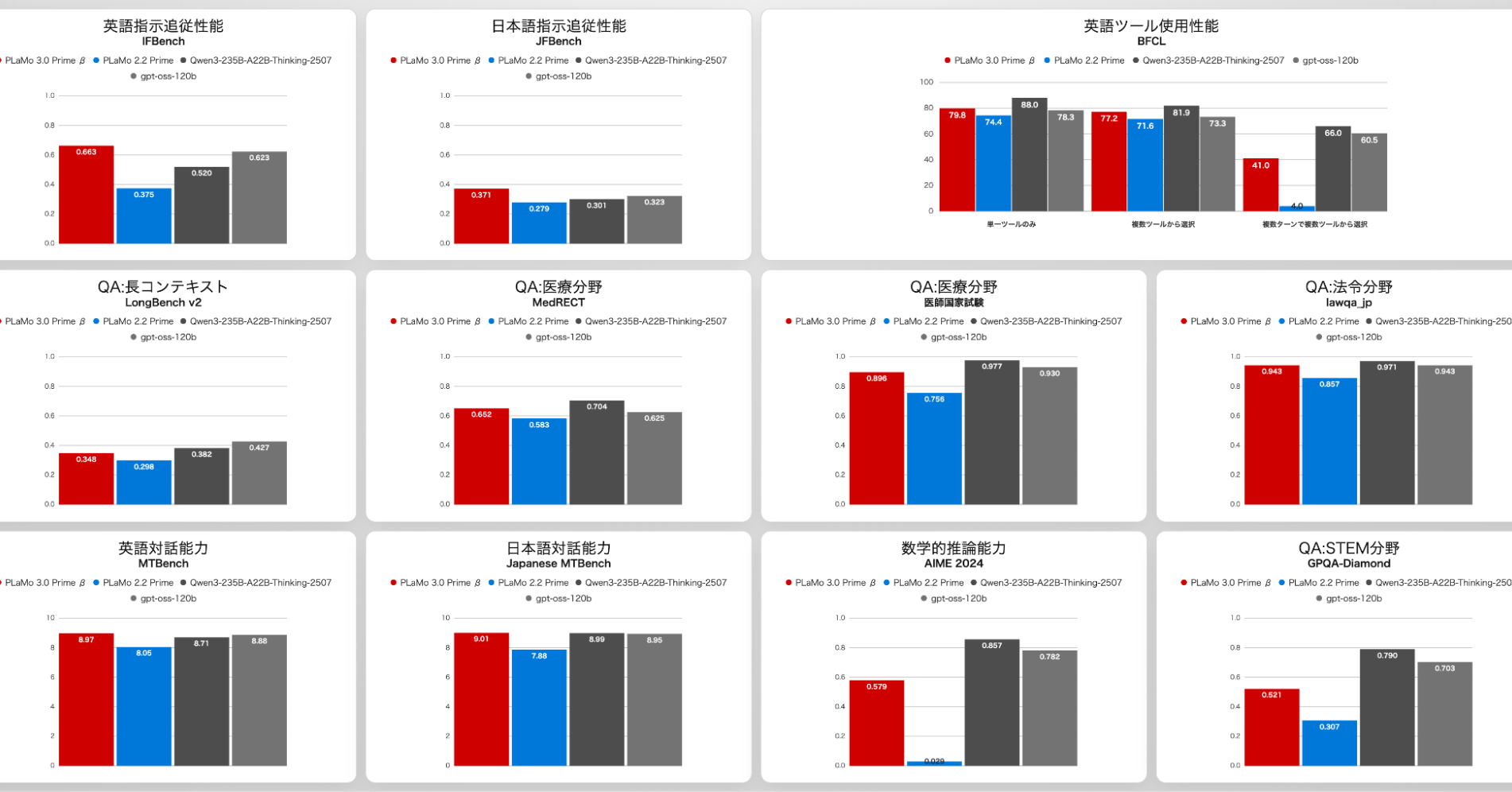

| Feature | PLaMo 3.0 Prime | Qwen3-235B | gpt-oss-120b |

|---|---|---|---|

| Architecture | Proprietary MoE | Dense/MoE Hybrid | Dense |

| Primary Focus | Japanese Reasoning | Multilingual/Coding | General Purpose |

| Training Data | Japanese-centric | Global/Multilingual | Global/Multilingual |

| Deployment | On-prem/Cloud | Cloud/API | Open Weights |

🛠️ Technical Deep Dive

- •Architecture: Mixture-of-Experts (MoE) with a focus on high-density Japanese language expert routing.

- •Training Infrastructure: Utilizes PFN's MN-Core hardware, designed for high-efficiency matrix multiplication in deep learning workloads.

- •Reasoning Mechanism: Implements a chain-of-thought (CoT) reinforcement learning process similar to DeepSeek R-1, utilizing a hidden 'thinking' token sequence before final output generation.

- •Tokenization: Custom Japanese-optimized tokenizer designed to improve compression ratios for Kanji and Kana, significantly lowering context window costs.

🔮 Future ImplicationsAI analysis grounded in cited sources

⏳ Timeline

Weekly AI Recap

Read this week's curated digest of top AI events →

👉Related Updates

AI-curated news aggregator. All content rights belong to original publishers.

Original source: ITmedia AI+ (日本) ↗