🔍 Google AI Blog

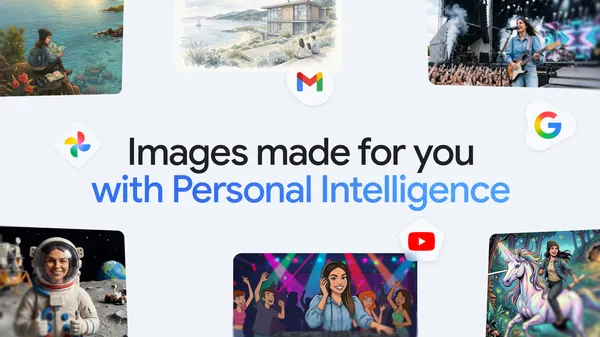

Personalized Images in Gemini App

💡 Gemini personalizes generated images using your own photos, a key capability for creative AI tools.

⚡ 30-Second TL;DR

What Changed

Introduces personalized image creation in the Gemini app.

Why It Matters

Enhances AI creativity by personalizing outputs, boosting user engagement in Gemini. May inspire similar features in other AI apps.

What To Do Next

Test personalized image generation in the Gemini app using your Google Photos.

Who should care: Creators & Designers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- Nano Banana 2 uses a novel 'Contextual Grounding Layer' that lets the model reference private Google Photos metadata and user-specific semantic embeddings without exposing raw image data to the cloud.

- The feature includes a mandatory 'Privacy-First Consent' toggle: users must explicitly opt in before the model can index their personal photo library for generative purposes.

- Google has implemented a new 'Provenance Watermarking' system for all images generated via Nano Banana 2, so that AI-generated content is cryptographically signed and can be distinguished from authentic user photos.
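
To make the provenance idea in the last takeaway concrete, here is a minimal sketch of signing and verifying image bytes. It is an illustration only, not Google's watermarking scheme: the source does not describe the algorithm, so this swaps in HMAC-SHA256 from Python's standard library (a symmetric-key tag, whereas a production system would more plausibly use asymmetric signatures). The names `DEMO_KEY`, `sign_image`, and `is_signed_ai_image` are hypothetical.

```python
import hashlib
import hmac

# Hypothetical demo key (assumption); a real system would never hard-code a secret.
DEMO_KEY = b"demo-only-secret"


def sign_image(image_bytes: bytes, key: bytes = DEMO_KEY) -> str:
    """Return a hex tag that binds these exact image bytes to the key."""
    return hmac.new(key, image_bytes, hashlib.sha256).hexdigest()


def is_signed_ai_image(image_bytes: bytes, tag: str, key: bytes = DEMO_KEY) -> bool:
    """Check the tag in constant time; any change to the bytes invalidates it."""
    expected = sign_image(image_bytes, key)
    return hmac.compare_digest(expected, tag)


if __name__ == "__main__":
    generated = b"stand-in bytes for a generated image"
    tag = sign_image(generated)
    print(is_signed_ai_image(generated, tag))
    print(is_signed_ai_image(generated + b"edited", tag))
```

A tag like this only proves that the bytes are unchanged since signing. It does not survive re-encoding or cropping, which is why deployed watermarking systems typically embed the signal in the image content itself.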

📊 Competitor Analysis

| Feature | Google Gemini (Nano Banana 2) | OpenAI ChatGPT (DALL-E 3) | Midjourney (v7) |
|---|---|---|---|
| Personal Context Integration | Deep (Google Photos/Drive) | Limited (Memory/Files) | None |
| Privacy Architecture | On-device/Private Cloud | Cloud-based | Cloud-based |
| Primary Use Case | Life-logging/Personalized Art | Creative/Professional | Artistic/High-fidelity |
| Pricing | Included in Gemini Advanced | Included in Plus/Team | Subscription-based |

🛠️ Technical Deep Dive

- Model Architecture: Nano Banana 2 is a multimodal small language model (SLM) optimized for edge-to-cloud hybrid inference.

- Contextual Grounding: Employs a Retrieval-Augmented Generation (RAG) pipeline that queries a local vector database of user-indexed photo metadata.

- Inference Optimization: Uses 4-bit quantization to enable on-device processing of personal context, reducing latency for image generation prompts.

- Safety Layer: Integrates a secondary 'Personal Identity Filter' that prevents the generation of photorealistic depictions of the user or their family members to mitigate deepfake risks.
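
The retrieval and quantization bullets above can be sketched together in a few lines. This is a toy illustration under stated assumptions, not the actual pipeline: the embeddings are made-up three-dimensional vectors, `LocalPhotoIndex` and `build_prompt` are hypothetical names, and 4-bit quantization is applied here to the stored vectors purely to show the technique (the source appears to refer to quantizing the model for on-device inference, which rests on the same idea of mapping floats to 16 levels).

```python
import math


def quantize_4bit(vec):
    """Map each float to an integer code in 0..15, plus (lo, scale) to reverse it."""
    lo, hi = min(vec), max(vec)
    scale = (hi - lo) / 15 or 1.0  # fall back to 1.0 when all values are equal
    codes = [round((x - lo) / scale) for x in vec]
    return codes, lo, scale


def dequantize_4bit(codes, lo, scale):
    """Approximate the original floats from the 4-bit codes."""
    return [lo + c * scale for c in codes]


def cosine(a, b):
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


class LocalPhotoIndex:
    """Hypothetical in-memory stand-in for a local vector database."""

    def __init__(self):
        self._items = []  # list of (caption, quantized_embedding)

    def add(self, caption, embedding):
        self._items.append((caption, quantize_4bit(embedding)))

    def search(self, query_embedding, k=2):
        """Return the k captions whose stored vectors best match the query."""
        scored = [
            (cosine(query_embedding, dequantize_4bit(*quantized)), caption)
            for caption, quantized in self._items
        ]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [caption for _, caption in scored[:k]]


def build_prompt(user_request, index, query_embedding, k=2):
    """Augment the user's request with retrieved photo metadata (the RAG step)."""
    context = index.search(query_embedding, k)
    return f"{user_request}\nPersonal context: " + "; ".join(context)


if __name__ == "__main__":
    index = LocalPhotoIndex()
    index.add("beach trip with dog", [0.9, 0.1, 0.0])
    index.add("birthday cake", [0.0, 0.2, 0.9])
    index.add("dog at park", [0.8, 0.3, 0.1])
    print(build_prompt("Draw my dog as an astronaut", index, [1.0, 0.2, 0.0]))
```

Only the retrieved captions reach the prompt in this sketch, which mirrors the stated goal of grounding generation in photo metadata rather than in the raw images.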

🔮 Future Implications

AI analysis grounded in cited sources

Google will expand Nano Banana 2 integration to include real-time calendar and email context.

The current architecture for photo-based grounding is designed as a modular framework that can ingest structured data from other Workspace apps.

Third-party developers will gain access to the 'Contextual Grounding Layer' via API by Q4 2026.

Google's historical pattern with Gemini features involves transitioning from proprietary app-exclusive tools to platform-wide developer APIs.

⏳ Timeline

2023-12

Google announces the Gemini model family, setting the foundation for multimodal capabilities.

2024-05

Google I/O introduces expanded image generation capabilities within the Gemini ecosystem.

2025-09

Google releases the first iteration of Nano Banana, focusing on lightweight on-device generative tasks.

2026-04

Launch of Nano Banana 2 with deep Google Photos integration.


Original source: Google AI Blog ↗