🐯虎嗅•Freshcollected in 10m

PC Growth Amid AI-Driven Memory Crunch

💡AI boom hikes PC memory 200%—critical for infra builders planning edge AI hardware.

⚡ 30-Second TL;DR

What Changed

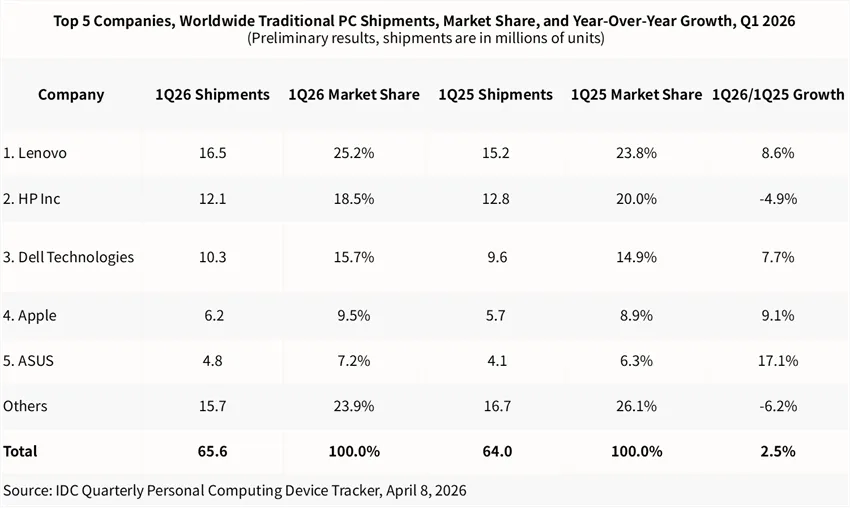

PC shipments +2.5% YoY (IDC), memory costs up $122-237 from HBM shift to AI.

Why It Matters

AI data center demand tightens memory supply, raising PC costs and favoring supply-strong vendors like Lenovo. This could slow AI PC rollout for edge inference if prices persist.

What To Do Next

Track Omdia memory price forecasts to optimize AI workstation procurement timing.

Who should care:Enterprise & Security Teams

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- •The memory supply crunch is exacerbated by a transition to HBM3e and HBM4 production capacity, which has prioritized high-margin AI server contracts over traditional DDR5 modules used in consumer PCs.

- •The Windows 10 EOL deadline, set for October 2025, has created a 'forced' refresh cycle that is currently masking the underlying weakness in consumer discretionary spending on premium hardware.

- •Foundry capacity constraints for NPU-integrated SoCs are creating a secondary bottleneck, limiting the availability of 'AI PC' branded laptops despite strong initial enterprise demand.

📊 Competitor Analysis▸ Show

| Feature | Lenovo (ThinkPad AI) | Dell (Latitude AI) | HP (EliteBook AI) |

|---|---|---|---|

| NPU TOPS | 45-50 TOPS (Snapdragon/Intel) | 40-45 TOPS (Intel/AMD) | 35-40 TOPS (Intel) |

| Pricing Strategy | Aggressive enterprise bundling | Premium/Service-led | Value-tier focus |

| Supply Chain | In-house manufacturing (high) | Outsourced (high) | Outsourced (medium) |

🛠️ Technical Deep Dive

- •AI PCs in Q1 2026 are primarily utilizing SoCs with integrated NPUs capable of >40 TOPS, meeting the minimum requirements for local execution of large language models (LLMs) like Llama 3.x.

- •Memory architecture shift: Transition from standard LPDDR5x to LPDDR6 is being accelerated to support the increased bandwidth requirements of on-device AI inference.

- •Thermal management: New chassis designs incorporate vapor chamber cooling to sustain NPU performance during prolonged AI workloads, increasing bill-of-materials (BOM) costs by approximately 8-12%.

🔮 Future ImplicationsAI analysis grounded in cited sources

PC average selling prices (ASPs) will increase by 15% in H2 2026.

The depletion of low-cost memory inventory and the shift to more expensive NPU-integrated silicon will force manufacturers to pass costs to consumers.

HP will lose further market share to Lenovo throughout 2026.

HP's continued reliance on consumer-grade segments leaves it more vulnerable to the projected decline in non-essential hardware spending compared to Lenovo's enterprise-heavy portfolio.

⏳ Timeline

2024-06

Microsoft announces Copilot+ PC requirements, setting the 40 TOPS NPU standard.

2025-10

Official end of support for Windows 10, triggering the current enterprise upgrade cycle.

2026-01

Global memory manufacturers announce a 20% reduction in DDR5 output to prioritize HBM production.

📰

Weekly AI Recap

Read this week's curated digest of top AI events →

👉Related Updates

AI-curated news aggregator. All content rights belong to original publishers.

Original source: 虎嗅 ↗