🇨🇳cnBeta (Full RSS)•Stalecollected in 7h

NVIDIA Launches AI-Optimized Vera CPU

💡NVIDIA's first AI-specific CPU: 2x efficiency for agents/RL – infra upgrade alert

⚡ 30-Second TL;DR

What Changed

NVIDIA launches Vera CPU for external sale at GTC

Why It Matters

NVIDIA's Vera CPU expands its AI hardware ecosystem, enabling more efficient agentic AI and RL workloads. This could challenge Arm and x86 dominance in AI servers, benefiting practitioners with optimized inference.

What To Do Next

Benchmark Vera CPU on your RL agent workloads via NVIDIA's early access program.

Who should care:Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

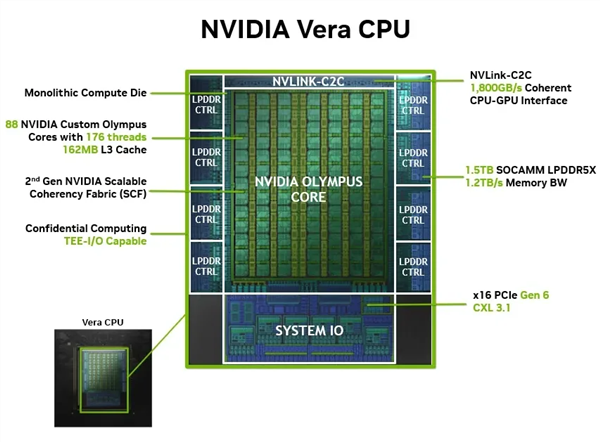

- •Vera utilizes a proprietary 'Agent-Centric' instruction set architecture (ISA) that prioritizes low-latency branching for reinforcement learning decision trees over traditional general-purpose compute tasks.

- •The CPU integrates directly with NVIDIA's Blackwell-successor interconnect fabric, allowing for cache-coherent memory pooling between the Vera CPU and Rubin GPU clusters to minimize data movement overhead.

- •NVIDIA has positioned Vera as the foundational compute element for the 'Omniverse Agentic Fabric,' a software stack designed to manage autonomous agent swarms in real-time simulation environments.

📊 Competitor Analysis▸ Show

| Feature | NVIDIA Vera | Intel Xeon (AI-Optimized) | AMD EPYC (AI-Optimized) |

|---|---|---|---|

| Primary Focus | Agentic RL/Inference | General Purpose/HPC | General Purpose/HPC |

| Architecture | Agent-Centric ISA | x86-64 (AVX-512/AMX) | x86-64 (AVX-512) |

| Memory Fabric | Proprietary Coherent | CXL 2.0/3.0 | CXL 2.0/3.0 |

| Pricing | Premium/Enterprise | Competitive/Volume | Competitive/Volume |

🛠️ Technical Deep Dive

- Architecture: Custom silicon optimized for asynchronous reinforcement learning workloads, featuring dedicated hardware accelerators for Monte Carlo Tree Search (MCTS) and policy evaluation.

- Interconnect: Utilizes NVLink-C2C (Chip-to-Chip) for high-bandwidth, low-latency communication with Rubin GPUs, enabling unified memory space.

- Efficiency: Achieved through a 'Dynamic Power Gating' mechanism that scales core voltage based on the agent's inference complexity rather than fixed clock cycles.

- Memory: Supports HBM3e integration directly on-package to eliminate bottlenecks associated with traditional DDR5 memory interfaces in agentic workflows.

🔮 Future ImplicationsAI analysis grounded in cited sources

NVIDIA will phase out general-purpose CPU development for data centers.

The shift toward specialized silicon like Vera suggests a strategic pivot away from competing with x86 incumbents in non-AI workloads.

Vera will become the standard for autonomous robotics control systems.

The hardware's specific optimization for reinforcement learning aligns with the computational requirements of real-time robotic decision-making.

⏳ Timeline

2024-06

NVIDIA announces the Rubin architecture roadmap at Computex.

2025-03

NVIDIA previews agent-specific compute requirements at GTC.

2026-03

NVIDIA officially launches the Vera CPU alongside Rubin GPUs at GTC.

📰

Weekly AI Recap

Read this week's curated digest of top AI events →

👉Related Updates

AI-curated news aggregator. All content rights belong to original publishers.

Original source: cnBeta (Full RSS) ↗