📊 Bloomberg Technology

Nvidia, Arm Revive CPU in AI Era

💡CPUs rebounding in AI—rethink GPU-only strategies for workloads

⚡ 30-Second TL;DR

What Changed

Nvidia and Arm are emphasizing the CPU's central role in AI.

Why It Matters

Reinforces the need for balanced AI hardware stacks, potentially reducing reliance on GPUs alone. It could also accelerate CPU optimizations for inference and hybrid CPU-GPU workloads.

What To Do Next

Benchmark Arm Neoverse CPUs for cost-effective AI inference deployments.
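A minimal sketch of a first-pass comparison, assuming NumPy is installed: it times a dense float32 matrix multiply, a rough proxy for the linear-algebra core of inference, so the same script can be run unchanged on an Arm Neoverse instance and an x86 instance. The `matmul_gflops` helper and the default matrix size are illustrative choices, not a standard benchmark; a real evaluation should use the actual model and inference runtime.

```python
import platform
import time

import numpy as np


def matmul_gflops(n: int = 1024, repeats: int = 5) -> float:
    """Best observed GFLOP/s for an n x n float32 matrix multiply on this CPU.

    Only a rough proxy for inference throughput: real models also depend on
    memory bandwidth, quantization, and the BLAS library NumPy is linked against.
    """
    rng = np.random.default_rng(0)
    a = rng.standard_normal((n, n)).astype(np.float32)
    b = rng.standard_normal((n, n)).astype(np.float32)
    np.matmul(a, b)  # warm-up: initializes BLAS thread pools and caches
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        np.matmul(a, b)
        best = min(best, time.perf_counter() - start)
    flops = 2.0 * n**3  # one multiply and one add per inner-product term
    return flops / best / 1e9


if __name__ == "__main__":
    # platform.machine() reports e.g. "aarch64" on Arm Linux and "x86_64" on x86 Linux
    print(f"{platform.machine()}: {matmul_gflops():.1f} GFLOP/s (float32, n=1024)")
```

Taking the best of several runs reduces noise from frequency scaling and background load; dividing cost by the GFLOP/s figure gives a crude price-performance number to compare instance types.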

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The resurgence centers on Nvidia's Grace CPU, built on Arm Neoverse V2 cores with LPDDR5X memory. In Grace Hopper configurations, the CPU's LPDDR5X and the GPU's HBM3 are linked over a cache-coherent NVLink-C2C connection so the GPU can address CPU memory directly, addressing the memory bottleneck in large-scale AI model training.

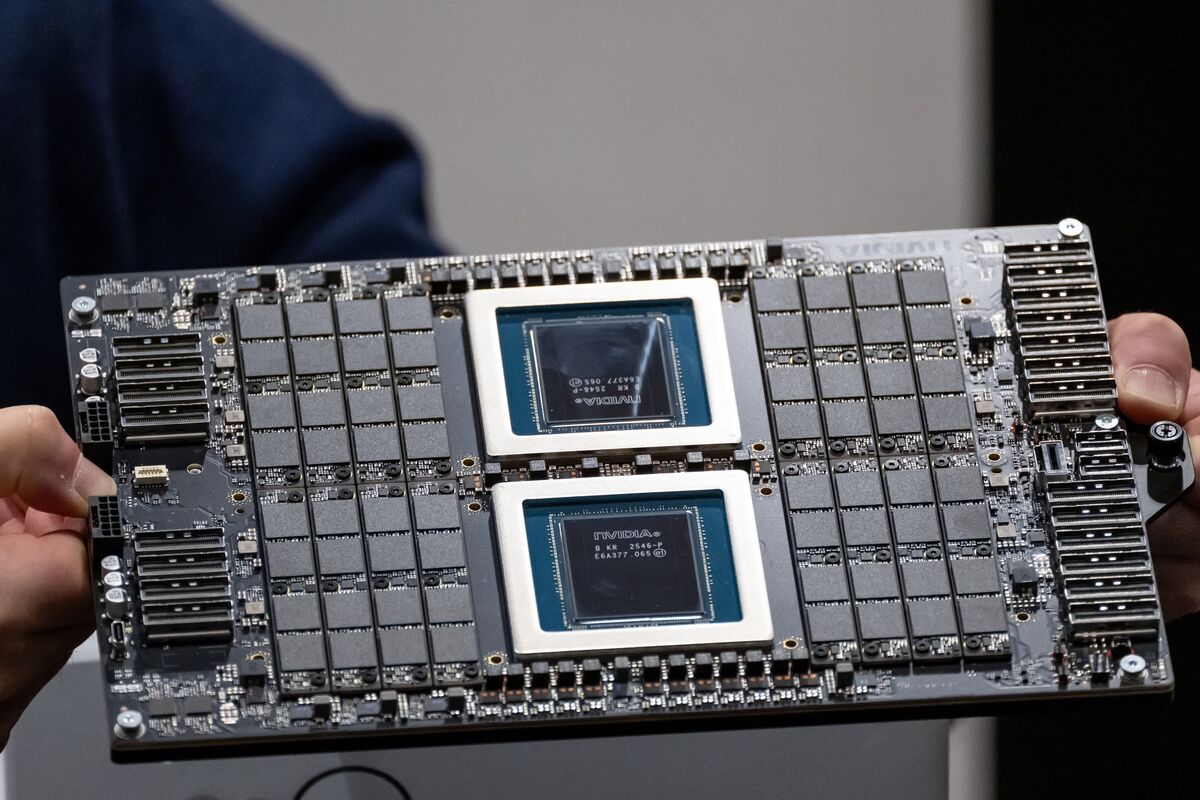

- Nvidia is shifting toward a 'Superchip' strategy, pairing Grace CPUs with Hopper or Blackwell GPUs over NVLink-C2C (chip-to-chip), an interconnect with substantially higher bandwidth and lower latency than a conventional PCIe link between CPU and GPU (900 GB/s versus about 128 GB/s for a PCIe Gen5 x16 link, roughly 7x).

- This strategy aims to reduce reliance on x86-based server architectures in data centers, allowing Nvidia to capture more of the total system value by providing a vertically integrated, Arm-based compute stack.
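To make the memory bottleneck concrete, here is a back-of-the-envelope sketch, assuming 96 GB of HBM3 on the GPU and 480 GB of LPDDR5X on the CPU (the original GH200 configuration; treat these as assumptions and check the datasheet for a specific SKU). Weight size is parameter count times bytes per parameter, so a 16-bit model needs 2 bytes per parameter. The helper names are hypothetical, and whether a given runtime actually serves weights from CPU memory, and how fast, depends on the software stack.

```python
# Assumed capacities for a single Grace Hopper superchip (illustrative figures;
# check the datasheet for the exact SKU, as newer parts ship more GPU memory).
GPU_HBM_GB = 96.0       # GPU-attached HBM3
CPU_LPDDR5X_GB = 480.0  # CPU-attached LPDDR5X


def weights_gb(params_billion: float, bytes_per_param: float) -> float:
    """Approximate weight footprint in GB (1 GB = 1e9 bytes).

    Ignores activations, KV cache, and optimizer state, which add to the total.
    """
    return params_billion * bytes_per_param


def placement(params_billion: float, bytes_per_param: float) -> str:
    """Classify where the weights could reside under the assumed capacities."""
    size = weights_gb(params_billion, bytes_per_param)
    if size <= GPU_HBM_GB:
        return "fits in GPU HBM"
    if size <= GPU_HBM_GB + CPU_LPDDR5X_GB:
        return "spills into CPU LPDDR5X"
    return "exceeds one superchip"


if __name__ == "__main__":
    for name, params, bpp in [("7B FP16", 7, 2), ("70B FP16", 70, 2), ("405B FP16", 405, 2)]:
        print(f"{name}: {weights_gb(params, bpp):.0f} GB -> {placement(params, bpp)}")
```

Under these assumptions a 7B FP16 model (14 GB) fits in GPU HBM, a 70B FP16 model (140 GB) spills into CPU LPDDR5X, and a 405B FP16 model (810 GB) exceeds a single superchip.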

📊 Competitor Analysis

| Feature | Nvidia Grace (Arm) | Intel Xeon (x86) | AMD EPYC (x86) |
|---|---|---|---|
| Architecture | Arm Neoverse | x86-64 | x86-64 |
| CPU-GPU interconnect | NVLink-C2C (900 GB/s bidirectional) | PCIe Gen5 / CXL | PCIe Gen5 / CXL |
| Memory | Integrated LPDDR5X | DDR5 | DDR5 |
| Primary AI role | AI Superchip host | General purpose / inference | General purpose / inference |
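Moving workloads between the Arm and x86 columns above usually means shipping software built for both instruction sets. A minimal sketch, assuming containerized deployments, that maps the architecture string Python reports to the standard Docker platform strings (`linux/arm64`, `linux/amd64`) used with multi-architecture images; the `docker_platform` helper and its mapping table are illustrative, not part of any Nvidia or Arm tooling.

```python
import platform
from typing import Optional

# platform.machine() spellings mapped to Docker/OCI platform strings.
_MACHINE_TO_PLATFORM = {
    "aarch64": "linux/arm64",  # Linux on Arm servers, e.g. Neoverse-based CPUs
    "arm64": "linux/arm64",    # spelling used by macOS and some other systems
    "x86_64": "linux/amd64",   # Linux on Intel Xeon / AMD EPYC
    "amd64": "linux/amd64",    # spelling used by Windows and FreeBSD
}


def docker_platform(machine: Optional[str] = None) -> Optional[str]:
    """Return the container platform for `machine` (default: this host), or None if unknown."""
    key = (machine if machine is not None else platform.machine()).lower()
    return _MACHINE_TO_PLATFORM.get(key)


if __name__ == "__main__":
    print(docker_platform())
```

Returning `None` for unrecognized hosts, rather than guessing, keeps a deployment script from silently pulling an image built for the wrong instruction set.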

🛠️ Technical Deep Dive

- NVLink-C2C Interconnect: Provides up to 900 GB/s of bidirectional bandwidth, enabling cache-coherent memory access between the CPU and GPU, which is critical for large language model (LLM) performance.

- Grace CPU Superchip: Combines two Grace CPUs linked by NVLink-C2C for a total of 144 Arm Neoverse V2 cores, with Nvidia's Scalable Coherency Fabric, a mesh interconnect, maintaining high throughput across the multi-core complex.

- Memory Architecture: Each Grace CPU supports up to 480 GB of LPDDR5X memory with ECC and up to 500 GB/s of memory bandwidth, at lower power consumption than traditional server-grade DDR5 modules.
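The interconnect figures above translate directly into transfer times. A minimal worked sketch, assuming peak per-direction rates of about 450 GB/s for NVLink-C2C (half of the 900 GB/s bidirectional figure) and about 64 GB/s for a PCIe Gen5 x16 link; these are idealized peaks, and the 140 GB payload and the `transfer_seconds` helper are hypothetical.

```python
# Peak per-direction link bandwidths in GB/s (assumed round figures):
#   NVLink-C2C: 900 GB/s total bidirectional, so about 450 GB/s each way.
#   PCIe Gen5 x16: about 128 GB/s total, so about 64 GB/s each way.
NVLINK_C2C_GB_S = 450.0
PCIE_GEN5_X16_GB_S = 64.0


def transfer_seconds(size_gb: float, bandwidth_gb_s: float) -> float:
    """Lower bound on the time to move size_gb at a given peak bandwidth."""
    return size_gb / bandwidth_gb_s


if __name__ == "__main__":
    payload_gb = 140.0  # hypothetical payload, e.g. 70B parameters at 2 bytes each
    for name, bw in [("NVLink-C2C", NVLINK_C2C_GB_S), ("PCIe Gen5 x16", PCIE_GEN5_X16_GB_S)]:
        print(f"{name}: {transfer_seconds(payload_gb, bw):.2f} s")
    print(f"ratio: {NVLINK_C2C_GB_S / PCIE_GEN5_X16_GB_S:.1f}x")
```

This prints roughly 0.31 s for NVLink-C2C versus 2.19 s for PCIe Gen5 x16, a ratio of about 7x. Sustained throughput in practice is below these peaks, so the times are best read as lower bounds.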

🔮 Future Implications (AI analysis grounded in cited sources)

Nvidia will capture a larger share of the data center CPU market by 2028.

The performance advantages of the Grace-Hopper/Blackwell integrated architecture create a strong incentive for hyperscalers to migrate away from traditional x86-based server designs.

Arm-based server processors will become the standard for AI-heavy data center workloads.

The efficiency and customizability of the Arm architecture allow for better optimization of the CPU-GPU data path required for modern generative AI training.

⏳ Timeline

2020-09

Nvidia announces intent to acquire Arm (later terminated in 2022).

2021-04

Nvidia unveils Grace, its first data center CPU based on Arm architecture.

2023-05

Nvidia begins shipping Grace CPU Superchips to major cloud service providers.

2024-03

Nvidia announces the Blackwell GPU architecture, further integrating with Grace CPUs.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: Bloomberg Technology