🇨🇳 cnBeta (Full RSS) • collected in 23h

NVIDIA Approved for Limited H200 Exports to China

💡 NVIDIA H200 now exportable to China in limited volumes, relevant to APAC AI infrastructure builds

⚡ 30-Second TL;DR

What Changed

US allows limited exports of H200 AI chips to China

Why It Matters

Eases supply constraints on AI training hardware in China and boosts NVIDIA's revenue potential despite remaining restrictions. May signal a thaw in US-China tech trade tensions.

What To Do Next

Verify H200 availability via NVIDIA partners for China-based AI clusters.

Who should care: Enterprise & Security Teams

🧠 Deep Insight

Web-grounded analysis with 7 cited sources.

🔑 Enhanced Key Takeaways

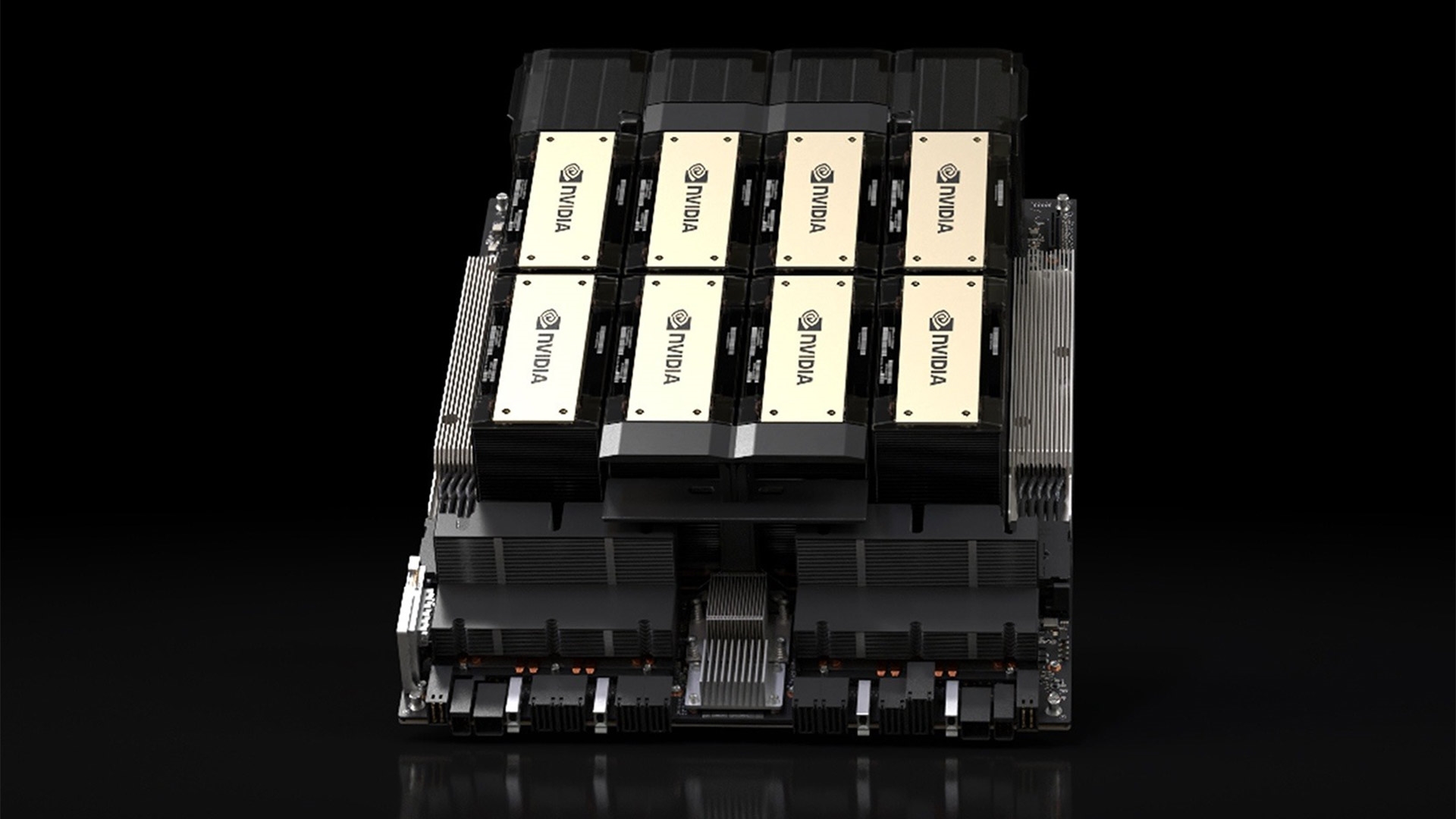

- H200 features 141GB of HBM3e memory, making it the first GPU with this memory type, aimed at generative AI and HPC workloads[1][2][4].

- H200 delivers up to 3,958 TFLOPS FP8 (3,958 TOPS INT8) Tensor Core performance with sparsity, plus 4.8 TB/s of memory bandwidth[2][4].

- It is available in SXM (up to 700W TDP, NVLink point-to-point) and NVL (PCIe form factor, up to 600W TDP) variants for different deployment scales[3][4][5].

- It provides up to 1.9X faster LLM inference than H100 (e.g., Llama 2 70B) and up to 110X faster time to results on HPC workloads compared with CPUs[4][5].
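As a rough illustration of why the memory upgrade matters, the two headline figures above can be combined into a roofline "ridge point": the arithmetic intensity (FLOPs per byte of memory traffic) a kernel needs before compute, rather than bandwidth, becomes the limit. This is a back-of-envelope sketch, not an NVIDIA method; it assumes the 3,958 TFLOPS figure is the with-sparsity FP8 peak and takes the dense peak as half of it.

```python
# Back-of-envelope roofline sketch using the H200 headline figures.
# Assumption: 3,958 TFLOPS is the FP8 peak *with* 2:4 structured sparsity;
# the dense FP8 peak is taken as half of that.

PEAK_FP8_SPARSE_TFLOPS = 3958.0  # quoted FP8 Tensor Core peak
MEM_BANDWIDTH_TBPS = 4.8         # quoted HBM3e bandwidth, TB/s


def ridge_point(peak_tflops: float, bandwidth_tbps: float) -> float:
    """FLOPs per byte a kernel must perform to stop being bandwidth-bound."""
    # (TFLOP/s) / (TB/s) cancels the 1e12 factors and leaves FLOP/byte.
    return peak_tflops / bandwidth_tbps


dense = ridge_point(PEAK_FP8_SPARSE_TFLOPS / 2, MEM_BANDWIDTH_TBPS)
sparse = ridge_point(PEAK_FP8_SPARSE_TFLOPS, MEM_BANDWIDTH_TBPS)

print(f"dense FP8 ridge point:  {dense:.0f} FLOP/byte")
print(f"sparse FP8 ridge point: {sparse:.0f} FLOP/byte")
```

Batch-1 LLM decoding in FP8 performs roughly 2 FLOPs (one multiply-add) per weight byte read, far below either ridge point, so such workloads are bandwidth-bound and benefit directly from the higher HBM3e bandwidth.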

🛠️ Technical Deep Dive

- Architecture: NVIDIA Hopper with Transformer Engine, which supports mixed FP8/FP16 precision for transformer-based AI models[1][4][6].

- Memory: 141GB of usable HBM3e from six 24GB stacks (144GB physical), delivering 4.8 TB/s of bandwidth (about 1.4X that of H100)[2][3][4].

- Performance (SXM; Tensor Core figures with sparsity): FP64: 34 TFLOPS; TF32 Tensor Core: 989 TFLOPS; BF16/FP16 Tensor Core: 1,979 TFLOPS; FP8 Tensor Core: 3,958 TFLOPS; INT8 Tensor Core: 3,958 TOPS[4].

- Interconnect: NVLink at 900 GB/s (SXM) and PCIe Gen5 at 128 GB/s; supports up to 7 Multi-Instance GPU (MIG) instances at 18GB each[4][5].

- Power: up to 700W TDP (SXM, configurable), optimized for liquid cooling in large-scale clusters[2][3][6].

- Additional: 7 NVDEC and 7 JPEG decoders; confidential computing support[2][4].
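The memory figures in the list can be cross-checked with simple arithmetic. The sketch below is illustrative only; the H100 SXM baseline bandwidth of 3.35 TB/s is an assumption added here for the ratio and does not come from the cited sources.

```python
# Cross-check of the H200 memory figures quoted in the spec list.
# Assumption: an H100 SXM bandwidth of 3.35 TB/s is used only as the comparison baseline.

HBM_STACKS = 6        # HBM3e stacks on the package
GB_PER_STACK = 24     # capacity per stack
USABLE_GB = 141       # advertised usable capacity
H200_BW_TBPS = 4.8    # H200 memory bandwidth
H100_BW_TBPS = 3.35   # assumed H100 SXM baseline (not from the sources)
MIG_INSTANCES = 7     # maximum MIG instances
GB_PER_MIG = 18       # memory per MIG instance

physical_gb = HBM_STACKS * GB_PER_STACK    # raw capacity of all stacks
unexposed_gb = physical_gb - USABLE_GB     # raw capacity beyond the advertised figure
bw_uplift = H200_BW_TBPS / H100_BW_TBPS    # bandwidth ratio vs the H100 baseline
mig_total_gb = MIG_INSTANCES * GB_PER_MIG  # memory assigned across 7 full MIG instances

print(f"physical: {physical_gb} GB, not exposed: {unexposed_gb} GB")
print(f"bandwidth uplift vs H100: {bw_uplift:.2f}x")
print(f"{MIG_INSTANCES} MIG instances x {GB_PER_MIG}GB = {mig_total_gb} GB of {USABLE_GB} GB")
```

The computed bandwidth ratio of about 1.43 is consistent with the "1.4X over H100" figure, and seven 18GB MIG instances account for 126GB of the 141GB usable capacity.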

🔮 Future Implications (AI analysis grounded in cited sources)

NVIDIA regains partial access to the China market, potentially capturing 5-10% of China's AI chip demand

Limited H200 exports could fill the gap left by the H100 ban, supplying a previous-generation Hopper part rather than NVIDIA's newest chips, amid China's push for domestic AI infrastructure.

US inspections and 25% tariffs could limit export volumes to under 10,000 units annually

These restrictive measures aim to ensure compliance while allowing NVIDIA only a minimal re-entry, balancing national security with commercial interests.

H200's HBM3e memory could speed LLM inference in China by up to 1.9X relative to H100

Enhanced memory capacity and bandwidth enable efficient handling of large models despite export constraints.
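The bandwidth argument can be made concrete with a common back-of-envelope bound for memory-bound decoding: at batch size 1, each generated token streams every model weight from HBM once, so tokens per second cannot exceed bandwidth divided by weight bytes. This sketch assumes Llama 2 70B in FP8 (1 byte per parameter), ignores KV-cache traffic and kernel overheads, and uses an assumed H100 SXM baseline of 3.35 TB/s; the function name is hypothetical.

```python
# Rough upper bound on single-stream (batch 1) decode speed when generation is
# memory-bandwidth bound: each new token requires reading all weights once.
# Assumptions: 70B parameters at 1 byte each (FP8), no KV-cache or overhead
# traffic, and an H100 SXM baseline of 3.35 TB/s (not from the sources).


def decode_tokens_per_s_upper_bound(params_billion: float,
                                    bytes_per_param: float,
                                    bandwidth_tbps: float) -> float:
    """Hypothetical helper: tokens/s ceiling = bandwidth / bytes of weights."""
    weight_bytes = params_billion * 1e9 * bytes_per_param
    return bandwidth_tbps * 1e12 / weight_bytes


h200 = decode_tokens_per_s_upper_bound(70, 1.0, 4.8)
h100 = decode_tokens_per_s_upper_bound(70, 1.0, 3.35)

print(f"H200 ~{h200:.0f} tok/s, H100 ~{h100:.0f} tok/s, ratio {h200 / h100:.2f}x")
```

Bandwidth alone gives roughly 69 versus 48 tokens/s, about 1.43x. The remaining gap to the quoted 1.9X presumably reflects other factors, such as the larger 141GB memory allowing bigger batches and KV caches, though that attribution is an assumption rather than a sourced figure.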

📎 Sources (7)

Factual claims are grounded in the sources below. Forward-looking analysis is AI-generated interpretation.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: cnBeta (Full RSS) ↗