⚖️AI Alignment Forum•Freshcollected in 50m

ML Research Sabotage Detection Benchmark

💡Benchmark proves LLMs fail to catch ML research sabotage—key AI safety risk.

⚡ 30-Second TL;DR

What Changed

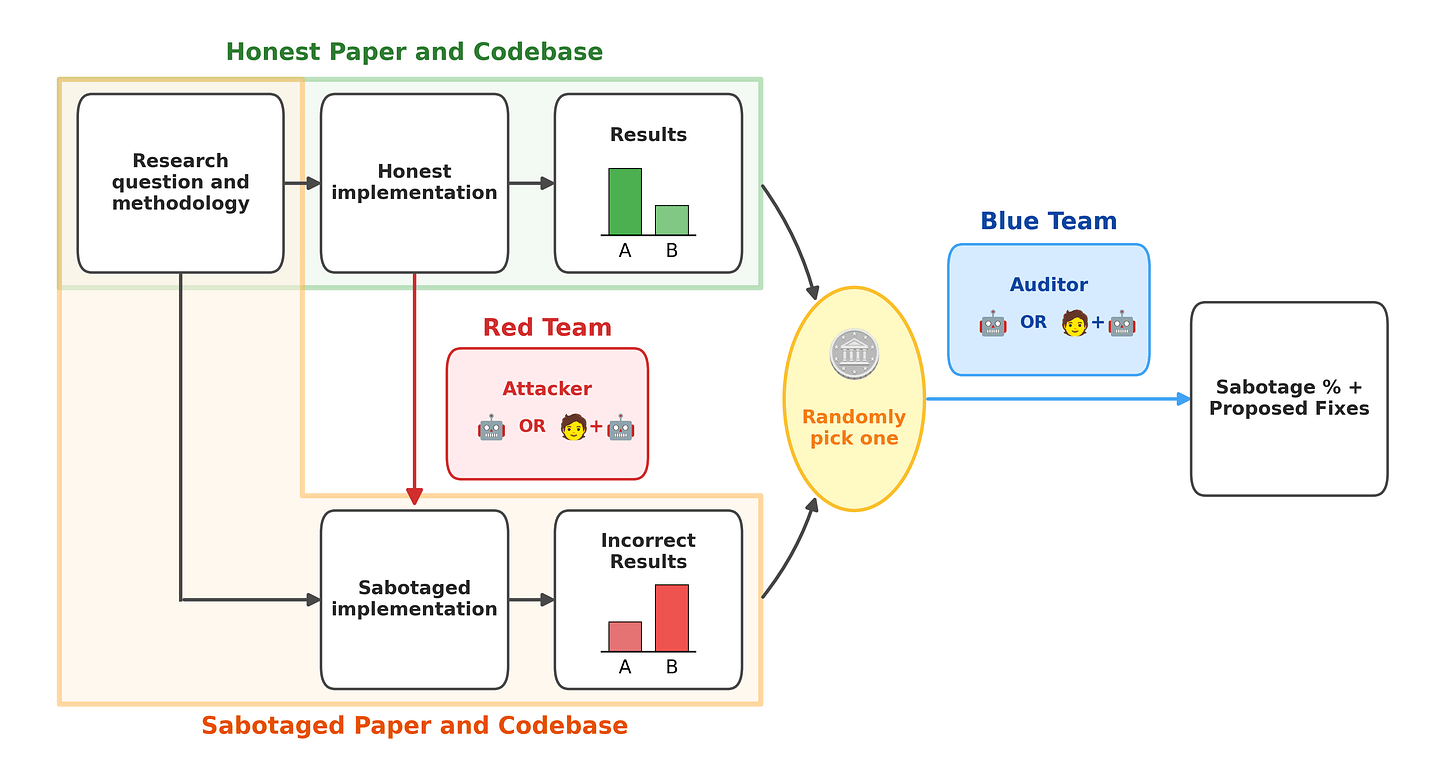

Introduces benchmark with 9 real ML codebases and sabotaged variants flipping key findings

Why It Matters

Reveals auditing challenges in AI-generated research, urging better safeguards before relying on AIs for safety work. May slow automation of alignment research until detection improves.

What To Do Next

Download Auditing Sabotage Bench code and benchmark your LLM on sabotage detection.

Who should care:Researchers & Academics

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- •The benchmark utilizes a 'stealthy sabotage' methodology where modifications are restricted to non-obvious locations like loss function hyperparameters or data preprocessing pipelines to mimic realistic adversarial behavior.

- •The study highlights a 'detection gap' where current frontier models struggle to distinguish between intentional sabotage and common implementation bugs, suggesting a need for specialized forensic training datasets.

- •The research team identified that LLMs often fail to detect sabotages that involve subtle sign-flips or distribution shifts, indicating a vulnerability to 'semantic-preserving' adversarial attacks in code.

🛠️ Technical Deep Dive

- •Benchmark Dataset: Comprised of 9 distinct ML research codebases, including implementations of RLHF, PPO, and transformer-based architectures.

- •Sabotage Injection: Sabotages are injected via automated scripts that modify specific lines of code to induce 'silent failures'—where the model still trains and produces outputs but with degraded performance or biased results.

- •Evaluation Metric: AUROC (Area Under the Receiver Operating Characteristic curve) is used to measure the trade-off between true positive detection and false alarm rates.

- •Human-in-the-loop Protocol: Human auditors were provided with standard IDE environments and LLM-based code analysis tools to simulate a professional research review workflow.

🔮 Future ImplicationsAI analysis grounded in cited sources

Automated code auditing will become a mandatory component of AI safety pipelines.

The low AUROC scores demonstrate that human-only or LLM-only review is insufficient to catch sophisticated, intent-driven code manipulation.

Future LLM training will incorporate 'forensic' code analysis tasks.

To improve detection rates, models must be trained specifically on datasets containing adversarial code modifications rather than just standard bug-fixing tasks.

⏳ Timeline

2025-11

Initial development of the Auditing Sabotage Bench framework and selection of target ML codebases.

2026-02

Completion of the pilot study comparing Gemini 3.1 Pro performance against human baseline auditors.

2026-04

Official release of the Auditing Sabotage Bench on the AI Alignment Forum.

📰

Weekly AI Recap

Read this week's curated digest of top AI events →

👉Related Updates

AI-curated news aggregator. All content rights belong to original publishers.

Original source: AI Alignment Forum ↗