🇨🇳 cnBeta (Full RSS) • Fresh • collected in 3h

Micron CEO: AI Early, No Storage Relief

💡 The AI-driven storage crunch is pushing costs up, with no price relief in sight. Secure supply for your infrastructure now.

⚡ 30-Second TL;DR

What Changed

Micron's CEO says the AI wave is still in its early stage and sees no near-term relief for storage supply.

Why It Matters

Rising storage costs will pressure AI training/inference budgets for enterprises. Practitioners face procurement challenges amid tight supply.

What To Do Next

Assess Micron HBM or SSD options for AI clusters to secure supply ahead of shortages.

Who should care: Enterprise & Security Teams

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- Micron is prioritizing HBM3E and high-capacity LPDDR5X production to satisfy AI server demand, which has constrained wafer capacity for legacy NAND and DRAM products.

- The industry shift toward 'AI-native' architectures requires significantly higher memory bandwidth per processor, forcing Micron to reallocate capital expenditure toward advanced packaging facilities rather than traditional capacity expansion.

- Micron's inventory levels for standard consumer-grade storage remain at historic lows as the company maintains strict supply discipline to protect margins amidst the AI-driven supercycle.

📊 Competitor Analysis

| Feature | Micron (HBM3E) | Samsung (HBM3E) | SK Hynix (HBM3E) |
|---|---|---|---|
| Capacity | Up to 36 GB (12-high) | Up to 36 GB (12-high) | Up to 36 GB (12-high) |
| Bandwidth | 1.2 TB/s+ | 1.2 TB/s+ | 1.2 TB/s+ |
| Market Position | Aggressive ramp-up | High-volume leader | Primary NVIDIA supplier |

🛠️ Technical Deep Dive

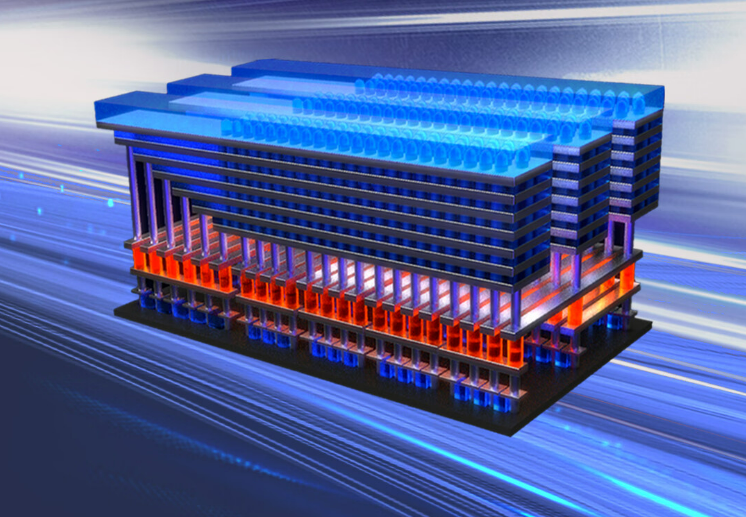

- HBM3E (High Bandwidth Memory 3E): Uses 8-high and 12-high stacks of DRAM dies connected by TSVs (through-silicon vias) to deliver more than 1.2 TB/s of bandwidth per stack.

- LPDDR5X: Optimized for edge AI, supporting data rates up to 9.6 Gbps per pin, which is critical for on-device LLM inference.

- 1-gamma (1γ) DRAM process node: Micron's latest node deployment aimed at increasing bit density and power efficiency for AI-heavy workloads.
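The headline figures above follow from simple arithmetic, sketched below. The helper names are hypothetical, and the inputs are assumptions rather than Micron-confirmed specifications: a 1024-bit interface per HBM3E stack, 24 Gb DRAM dies, an illustrative 9.6 Gb/s HBM3E pin rate, and an illustrative 64-bit LPDDR5X bus (actual bus width varies by device).

```python
def peak_bandwidth_gbs(bus_width_bits: int, pin_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: data pins * Gb/s per pin / 8 bits per byte."""
    return bus_width_bits * pin_rate_gbps / 8


def stack_capacity_gb(dies: int, die_density_gbit: int) -> float:
    """Stack capacity in GB: number of stacked dies * Gb per die / 8."""
    return dies * die_density_gbit / 8


# Assumption: 1024-bit interface per HBM3E stack, illustrative 9.6 Gb/s pin rate.
hbm3e_bw = peak_bandwidth_gbs(1024, 9.6)

# Assumption: 64-bit LPDDR5X bus (hypothetical device) at 9.6 Gb/s per pin.
lpddr5x_bw = peak_bandwidth_gbs(64, 9.6)

# Assumption: 24 Gb dies in both the 8-high and 12-high stacks.
cap_8_high = stack_capacity_gb(8, 24)
cap_12_high = stack_capacity_gb(12, 24)

if __name__ == "__main__":
    print(f"HBM3E stack:   {hbm3e_bw / 1000:.2f} TB/s peak")
    print(f"LPDDR5X x64:   {lpddr5x_bw:.1f} GB/s peak")
    print(f"8-high stack:  {cap_8_high:.0f} GB")
    print(f"12-high stack: {cap_12_high:.0f} GB")
```

Under these assumptions, a single HBM3E stack offers roughly 16 times the peak bandwidth of the 64-bit LPDDR5X configuration, which is why HBM sits next to data-center accelerators while LPDDR5X targets power-constrained edge devices.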

🔮 Future Implications

AI analysis grounded in cited sources.

Consumer SSD prices will remain elevated through Q4 2026.

The continued diversion of wafer starts to high-margin HBM products limits the supply available for the commodity NAND market.

Micron will increase capital expenditure for advanced packaging by at least 15% in 2026.

To remain competitive in the HBM market, Micron must expand its backend assembly and test capabilities to handle complex 3D-stacked memory.

⏳ Timeline

2024-02

Micron begins mass production of HBM3E for NVIDIA's H200 Tensor Core GPUs.

2024-07

Micron announces expansion of advanced packaging and test facility in Taichung, Taiwan.

2025-03

Micron reports record-breaking quarterly revenue driven by AI-related memory demand.

2025-11

Micron achieves volume production of 1-gamma (1γ) DRAM node.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: cnBeta (Full RSS) ↗